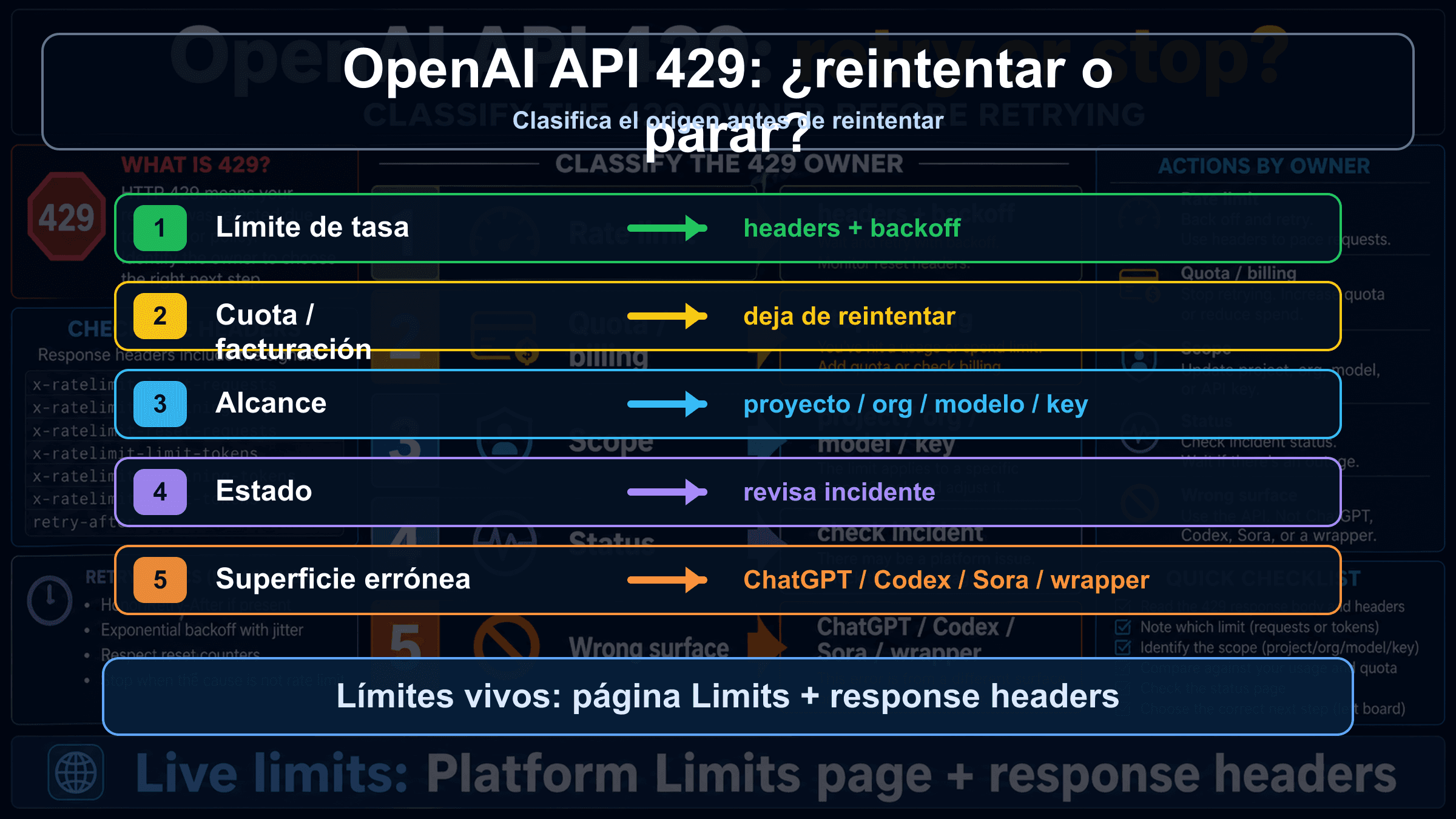

If a call to the OpenAI Platform API returns 429, do not raise the retry count before reading the response body. A real rate limit needs backoff, throttling, or a queue. Quota, billing, scope, model access, a status incident, or a wrapper limit each need a different route.

| Clue | Likely cause | First check | Retry or stop |
|---|---|---|---|
| rate limit reached, too many requests, or remaining headers near zero | request/token rate limit | headers, Limits, model family, reset window | retry with backoff and jitter, then throttle or queue |
| You exceeded your current quota, or insufficient_quota | quota, billing, or spend cap | Billing, Usage, Limits, monthly spend, account state | stop until the state changes |
| a new key fails the same way, or only one project/model fails | project, organization, model, or key scope | project, organization, model access | fix scope before changing traffic |
| many calls fail and Status shows an incident | platform status or capacity | OpenAI Status, timestamp, request id | wait and save evidence |
| error comes from ChatGPT, Codex, Sora, Azure, or a wrapper | wrong surface | product surface, provider docs, route, headers | use that surface's contract |

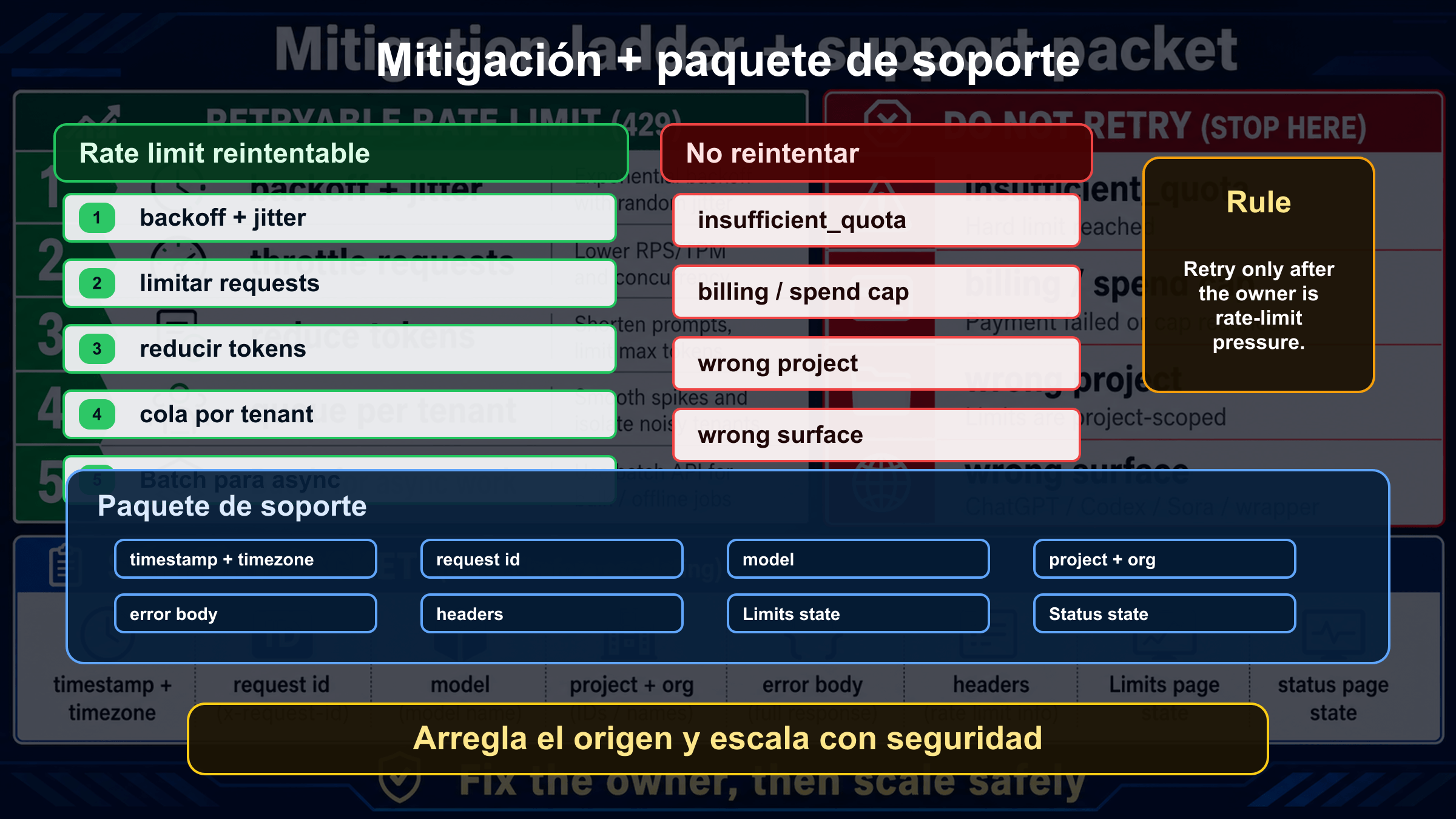

The stop rule is clear: retry only when the cause is request or token pressure and a reset signal exists. If the body points to quota, billing, the wrong project, the wrong model, the wrong surface, or an incident, repeating the same call fixes nothing.

Read the 429 body before changing code

OpenAI's official docs distinguish at least two 429 families: requests arriving too fast, and the current quota being exhausted. In developer searches these usually collapse into one phrase, so the error body must drive the first decision. Record the message, code, type, endpoint, model, project, organization, timestamp, and request id before changing the retry policy.
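
The evidence list above can be captured as one record per failure. This is a minimal sketch, assuming the error body uses the usual {"error": {"message", "code", "type"}} JSON shape and that the request id arrives in an x-request-id response header; Evidence429 and capture_evidence are hypothetical names, not part of any OpenAI SDK.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Any, Dict, Mapping, Optional


@dataclass
class Evidence429:
    """One 429 occurrence, recorded before any retry policy changes (hypothetical)."""
    status: int
    message: Optional[str]
    code: Optional[str]
    error_type: Optional[str]
    endpoint: str
    model: str
    project: Optional[str]
    organization: Optional[str]
    request_id: Optional[str]
    timestamp: str  # ISO 8601 in UTC, so the timezone is explicit
    rate_limit_headers: Dict[str, str] = field(default_factory=dict)


def capture_evidence(status: int, body: Mapping[str, Any],
                     headers: Mapping[str, str], endpoint: str, model: str,
                     project: Optional[str] = None,
                     organization: Optional[str] = None) -> Evidence429:
    # Assumption: the body follows the {"error": {...}} shape.
    error = body.get("error") or {}
    # HTTP header names are case-insensitive; normalize before lookups.
    lowered = {name.lower(): value for name, value in headers.items()}
    return Evidence429(
        status=status,
        message=error.get("message"),
        code=error.get("code"),
        error_type=error.get("type"),
        endpoint=endpoint,
        model=model,
        project=project,
        organization=organization,
        request_id=lowered.get("x-request-id"),  # assumed header name
        timestamp=datetime.now(timezone.utc).isoformat(),
        # Keep only rate-limit headers so credentials never enter the record.
        rate_limit_headers={k: v for k, v in lowered.items()
                            if k.startswith("x-ratelimit-")},
    )
```

Filling project and organization from the client's actual configuration, rather than from memory, is what later exposes Limits checked in one project while traffic came from another.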

The safe classification is conservative. If insufficient_quota or current quota wording appears, treat it as a quota or billing stop. If the body says rate limit or too many requests and the headers show remaining/reset information, treat it as retryable pressure. If neither branch is clear, hold the route steady and collect evidence instead of changing five variables at once.
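
The conservative classification above fits in a few lines. This sketch assumes the body wording and the x-ratelimit-remaining-* / x-ratelimit-reset-* header prefixes described in OpenAI's rate-limit documentation; the marker strings and the three return labels are illustrative choices, not an official taxonomy.

```python
from typing import Any, Mapping

# Quota wording is checked first: waiting a few seconds never adds quota.
QUOTA_MARKERS = ("insufficient_quota", "current quota")
RATE_MARKERS = ("rate limit", "rate_limit", "too many requests")


def classify_429(body: Mapping[str, Any], headers: Mapping[str, str]) -> str:
    """Return 'quota_stop', 'retryable', or 'unknown' for a 429 response."""
    error = body.get("error") or {}
    text = " ".join(
        str(error.get(key) or "") for key in ("message", "code", "type")
    ).lower()
    names = {name.lower() for name in headers}
    has_reset_signal = any(
        n.startswith("x-ratelimit-remaining-") or n.startswith("x-ratelimit-reset-")
        for n in names
    )
    if any(marker in text for marker in QUOTA_MARKERS):
        return "quota_stop"
    # Retry only with rate-limit wording AND a remaining/reset signal.
    if any(marker in text for marker in RATE_MARKERS) and has_reset_signal:
        return "retryable"
    # Neither branch is clear: hold the route steady and collect evidence.
    return "unknown"
```

Returning "unknown" instead of defaulting to "retryable" is the design choice that keeps an ambiguous 429 from turning into a tight retry loop.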

This matters in real operations because an ambiguous 429 can tempt teams into the wrong repair. A tight retry loop can burn through more per-minute capacity. A new key can hide the fact that the same project is still blocked. A billing change in the wrong organization can leave the production request untouched.

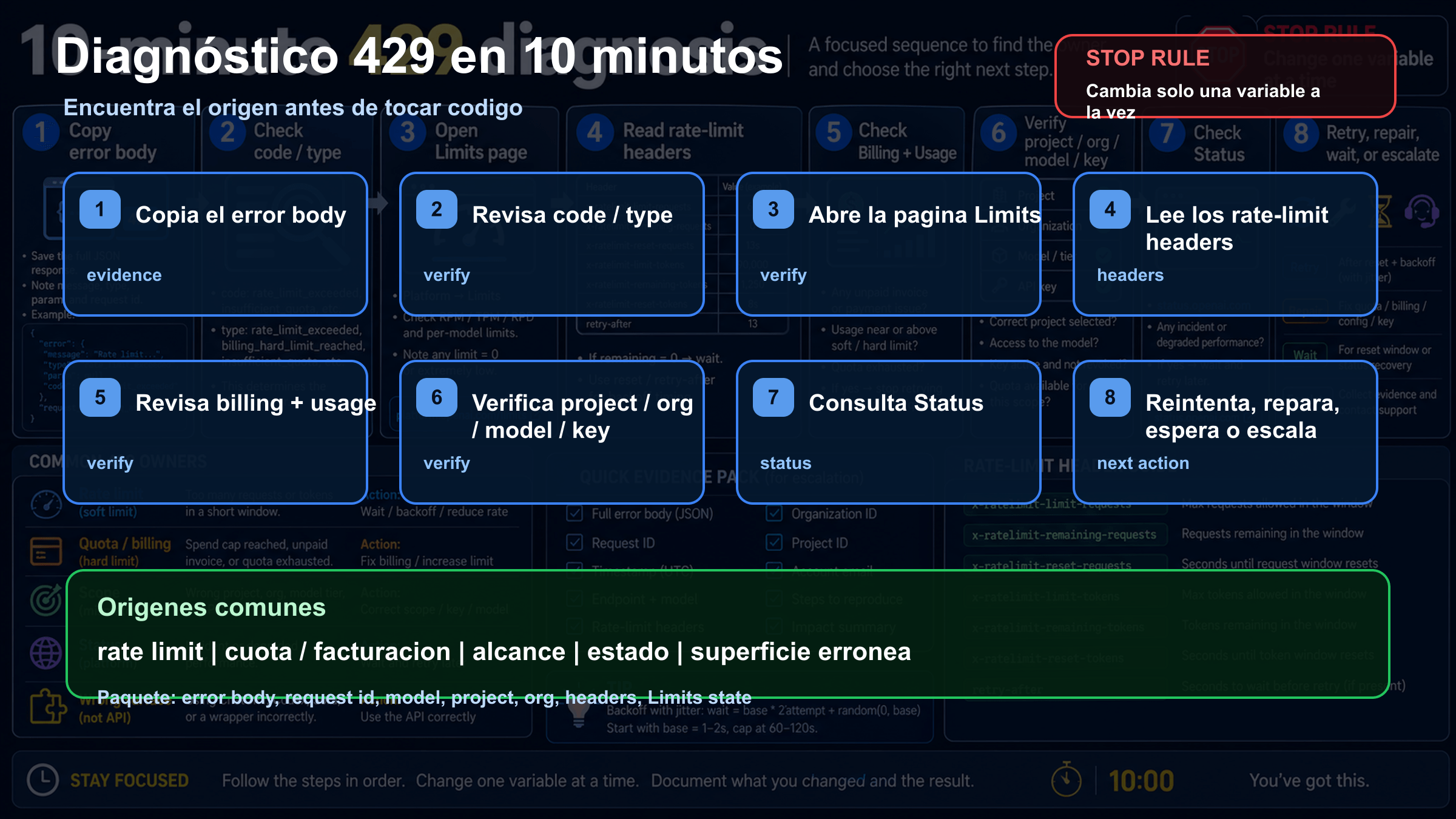

Ten-minute recovery route

Use the first ten minutes to identify who owns the failure, not to experiment at random. Copy the raw body and headers, open Limits, Billing, and Usage for the same project and organization, confirm the model family, check OpenAI Status, then send one smaller controlled request. That sequence keeps the evidence readable.

| Minutes | Action | What it proves |
|---|---|---|
| 0-1 | Save body and headers | whether the branch is rate, quota, billing or unknown |
| 1-3 | Check Limits, Usage and Billing | whether the account has capacity or billing state problems |
| 3-5 | Compare model and endpoint | whether a stricter or shared model family is involved |
| 5-7 | Check OpenAI Status | whether a public incident changes the response |
| 7-10 | Send one smaller controlled request | whether workload size or account state is likely |

If the smaller request works, investigate concurrency, token size, image throughput or fan-out. If it fails with quota wording, stop retrying. If unrelated endpoints fail during a declared incident, preserve evidence and wait rather than rotating accounts.
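
The three outcomes above can be written as a small decision function. This is a hypothetical sketch: its inputs (whether the probe succeeded, the probe's error message, whether Status declares an incident, whether unrelated endpoints also fail) are assumptions about what the operator has already gathered, and the enum names are illustrative.

```python
from enum import Enum
from typing import Optional


class NextStep(Enum):
    INVESTIGATE_WORKLOAD = "investigate concurrency, token size, image throughput or fan-out"
    STOP_RETRYING = "stop retrying; check Billing, Usage and Limits"
    WAIT_FOR_INCIDENT = "preserve evidence and wait for the incident to clear"
    KEEP_COLLECTING = "hold the route steady and keep collecting evidence"


def next_step_after_probe(probe_succeeded: bool,
                          probe_error_message: Optional[str],
                          incident_declared: bool,
                          unrelated_endpoints_failing: bool) -> NextStep:
    if probe_succeeded:
        # A smaller request passing points at workload shape, not account state.
        return NextStep.INVESTIGATE_WORKLOAD
    message = (probe_error_message or "").lower()
    if "insufficient_quota" in message or "current quota" in message:
        return NextStep.STOP_RETRYING
    if incident_declared and unrelated_endpoints_failing:
        # Rotating keys or accounts during an incident only blurs the evidence.
        return NextStep.WAIT_FOR_INCIDENT
    return NextStep.KEEP_COLLECTING
```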

When retry and backoff are correct

Retry and backoff are correct only for temporary request or token pressure. The useful signals are rate-limit wording, low remaining values, reset timing, and a traffic pattern that exceeds the current project/model budget. Retry is not a magic repair; it is a pacing tool.

Use exponential backoff with jitter, cap retry count, and add a central limiter per project and model family. A limiter inside each worker is not enough if workers do not share state. Estimate token size before dispatch, because reducing prompt size or max output can remove TPM pressure before the API rejects the request.
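
Exponential backoff with jitter and a retry cap can be sketched without any SDK. This assumes the caller raises the hypothetical RetryableRateLimit only for 429s classified as retryable, so quota errors propagate immediately; the "full jitter" variant used here is one common choice, not an OpenAI requirement, and the defaults are placeholders.

```python
import random
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class RetryableRateLimit(Exception):
    """Hypothetical exception: raise it only for a 429 classified as retryable."""

    def __init__(self, reset_seconds: Optional[float] = None):
        super().__init__("retryable 429")
        self.reset_seconds = reset_seconds  # parsed from reset headers, if any


def call_with_backoff(send: Callable[[], T], max_retries: int = 5,
                      base_delay: float = 1.0, max_delay: float = 60.0,
                      sleep: Callable[[float], None] = time.sleep,
                      rng: Optional[random.Random] = None) -> T:
    if max_retries < 0:
        raise ValueError("max_retries must be >= 0")
    if rng is None:
        rng = random.Random()
    for attempt in range(max_retries + 1):
        try:
            return send()  # any other exception (e.g. quota) propagates at once
        except RetryableRateLimit as exc:
            if attempt == max_retries:
                raise  # retry cap reached: surface the error, do not loop forever
            # Full jitter: uniform delay between 0 and an exponential ceiling,
            # so a fleet of workers does not retry in lockstep.
            ceiling = min(max_delay, base_delay * (2 ** attempt))
            delay = rng.uniform(0, ceiling)
            # Never come back before the reset window the headers reported.
            if exc.reset_seconds is not None:
                delay = max(delay, exc.reset_seconds)
            sleep(delay)
    raise AssertionError("unreachable")
```

Injecting sleep and rng keeps the function testable; in production the defaults apply.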

Failed requests can still count toward per-minute limits, so a fleet that retries every second can keep itself inside the failure window. A good system slows down, queues, sheds non-urgent work, or uses Batch for asynchronous work.
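
The central limiter per project and model family, combined with the point that failed requests still count, suggests charging a token budget before dispatch rather than after a response. This is an in-process sketch under stated assumptions: tokens_per_minute would come from the Limits page, the 4-characters-per-token estimate is a rough heuristic, and workers in separate processes would need the same state in a shared store. All names are hypothetical.

```python
import math
import threading
import time
from typing import Callable, Dict, Tuple

# (organization, project, model_family). The API key is deliberately not part
# of the scope: a second key in the same scope does not add capacity.
ScopeKey = Tuple[str, str, str]


class TokenBucket:
    def __init__(self, capacity: float, refill_per_second: float,
                 clock: Callable[[], float]):
        self.capacity = capacity
        self.refill_per_second = refill_per_second
        self.clock = clock
        self.level = capacity
        self.updated = clock()

    def try_take(self, amount: float) -> bool:
        now = self.clock()
        elapsed = now - self.updated
        self.level = min(self.capacity, self.level + elapsed * self.refill_per_second)
        self.updated = now
        if amount <= self.level:
            self.level -= amount
            return True
        return False


class CentralLimiter:
    """One token budget per scope, shared by every worker holding this object."""

    def __init__(self, tokens_per_minute: float,
                 clock: Callable[[], float] = time.monotonic):
        self.tokens_per_minute = tokens_per_minute
        self.clock = clock
        self._buckets: Dict[ScopeKey, TokenBucket] = {}
        self._lock = threading.Lock()

    def admit(self, key: ScopeKey, estimated_tokens: int) -> bool:
        """Return False when the request should wait or be queued instead of sent."""
        with self._lock:
            bucket = self._buckets.get(key)
            if bucket is None:
                bucket = TokenBucket(self.tokens_per_minute,
                                     self.tokens_per_minute / 60.0, self.clock)
                self._buckets[key] = bucket
            return bucket.try_take(estimated_tokens)


def estimate_tokens(prompt: str, max_output_tokens: int) -> int:
    # Rough assumption: about 4 characters per token for English text.
    # The requested output is budgeted too, as a conservative choice.
    return math.ceil(len(prompt) / 4) + max_output_tokens
```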

When continued retrying is a mistake

Retry is wrong when the error points to insufficient_quota, current quota, billing, monthly spend or account state. Waiting a few seconds does not add quota. The correct path is Billing, Usage, Limits, spend cap, organization, project and model access.

Many "I have credits but still get 429" cases are scope problems. The credit can be in another organization. The request can use another project. A monthly spend cap can be active. A model can be unavailable to that project. A wrapper can be applying its own pool. Keep one minimal request stable while checking each scope.

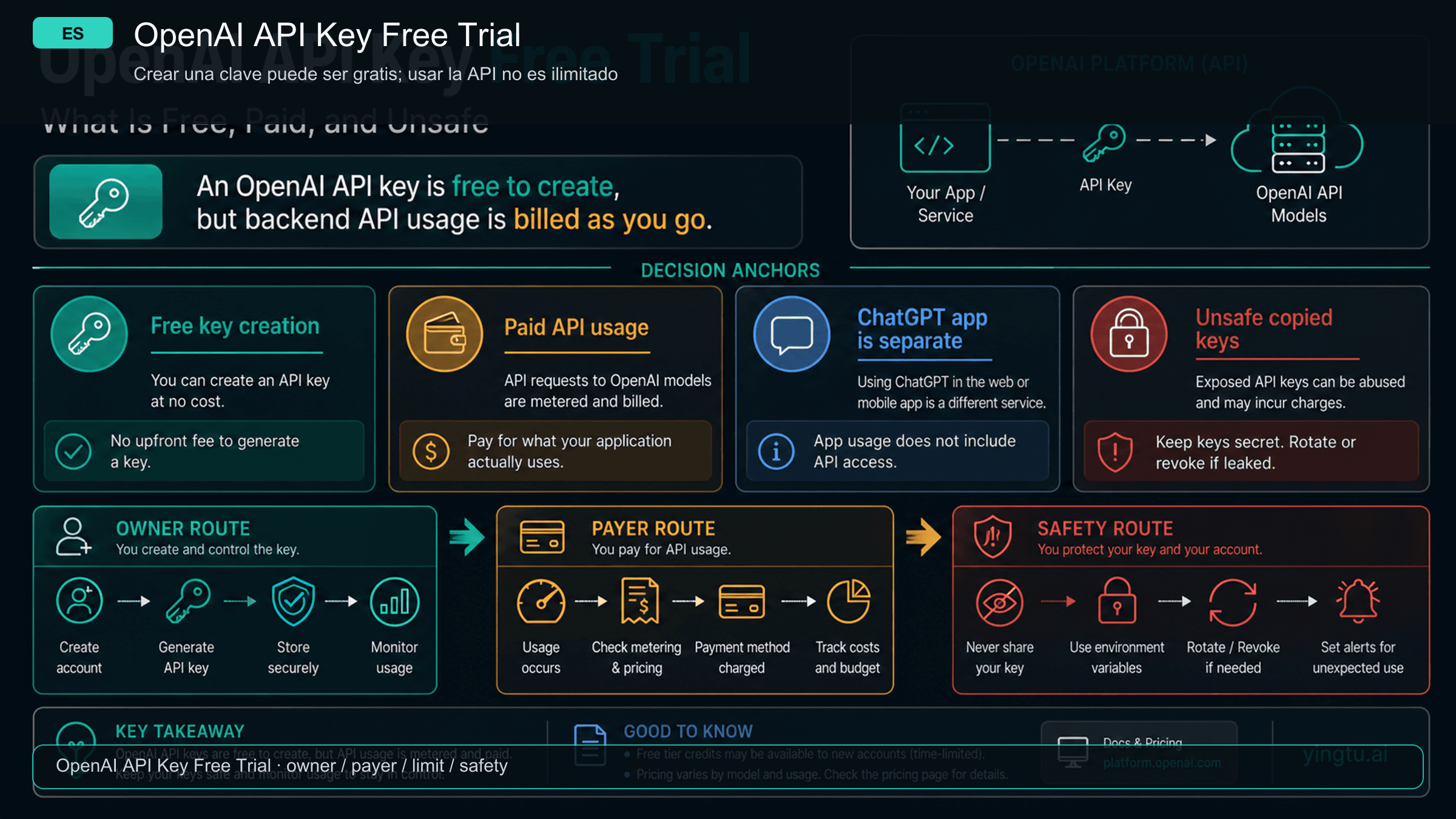

Why a new API key may not help

An API key is not a separate capacity bucket. A new key helps when the old key is revoked, leaked, restricted or attached to the wrong project. It does not create capacity if organization, project, model family and billing owner remain the same.

| Scope | Check | Failure pattern |
|---|---|---|
| Organization | request uses the intended org | personal and team orgs have different billing or limits |
| Project | key belongs to the inspected project | Limits checked in one project, traffic sent from another |
| Model family | selected model has access and headroom | stricter or shared family limit is exhausted |
| Team workload | other services share capacity | batch job or another app consumes the pool |

If one model fails, test a small request to a model the project can access. If every model fails with quota wording, inspect account state first. If the key works elsewhere, inspect concurrency and request shape in the failing service.

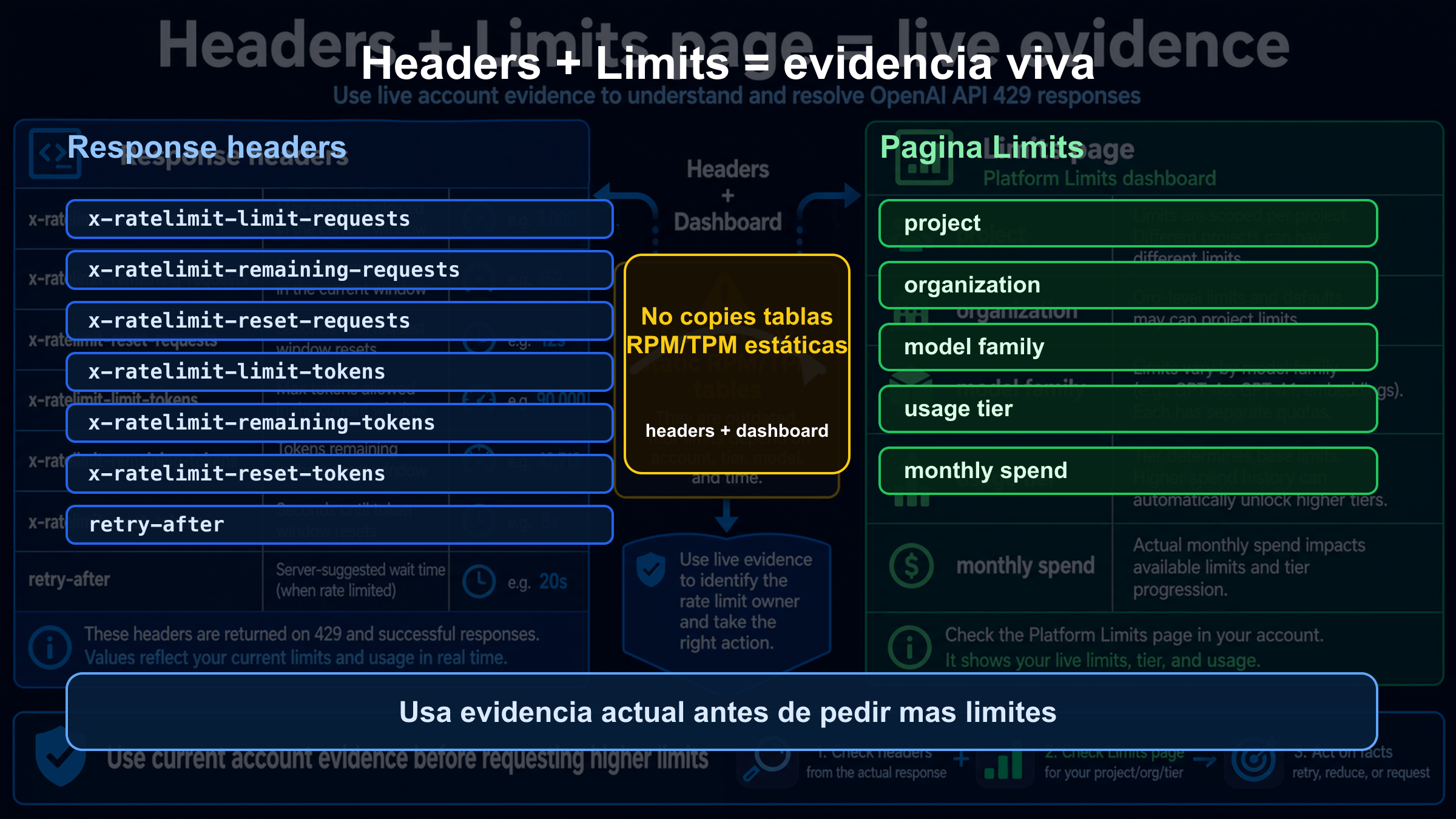

Use headers and Limits as live evidence

The live evidence is the response plus the account. The body gives the branch. Headers can show limit, remaining and reset timing. The Limits page gives the current project, organization and model context. Any static table is weaker than the reader's own live evidence.

| Evidence | Why it matters |
|---|---|
| status and body | separates retryable rate pressure from quota or billing |
| request id | gives support a lookup handle |
| rate-limit headers | shows limit, remaining and reset timing |
| project and organization | confirms who owns the request |
| model and endpoint | exposes stricter model or wrong endpoint |
| Limits and Usage state | records account state during failure |
| Status snapshot | separates incident from account-local failure |
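
The rate-limit headers in the table can be turned into numbers before they go into the evidence record. This sketch assumes the x-ratelimit-limit-*, x-ratelimit-remaining-* and x-ratelimit-reset-* names from OpenAI's rate-limit guide and reset values written as durations such as "1s" or "6m0s"; wrappers may rename or drop these headers, in which case the snapshot simply comes back empty.

```python
import re
from typing import Any, Dict, Mapping, Optional

# Durations such as "1s", "6m0s" or "20ms". "ms" is listed before "m" so the
# alternation does not read "20ms" as 20 minutes followed by a stray "s".
_DURATION = re.compile(r"(?:\d+(?:\.\d+)?(?:ms|h|m|s))+")
_PART = re.compile(r"(\d+(?:\.\d+)?)(ms|h|m|s)")
_UNIT_SECONDS = {"h": 3600.0, "m": 60.0, "s": 1.0, "ms": 0.001}


def parse_reset(value: str) -> Optional[float]:
    """Convert a reset duration into seconds, or None if the format is unknown."""
    value = value.strip()
    if not _DURATION.fullmatch(value):
        return None
    return sum(float(num) * _UNIT_SECONDS[unit] for num, unit in _PART.findall(value))


def _as_int(raw: Optional[str]) -> Optional[int]:
    return int(raw) if raw and raw.isdigit() else None


def rate_limit_snapshot(headers: Mapping[str, str]) -> Dict[str, Dict[str, Any]]:
    lowered = {name.lower(): value for name, value in headers.items()}
    snapshot: Dict[str, Dict[str, Any]] = {}
    for kind in ("requests", "tokens"):
        limit = lowered.get(f"x-ratelimit-limit-{kind}")
        remaining = lowered.get(f"x-ratelimit-remaining-{kind}")
        reset = lowered.get(f"x-ratelimit-reset-{kind}")
        if limit is None and remaining is None and reset is None:
            continue  # this header family is absent
        snapshot[kind] = {
            "limit": _as_int(limit),
            "remaining": _as_int(remaining),
            "reset_seconds": parse_reset(reset) if reset else None,
        }
    return snapshot
```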

On 2026-04-29 the public OpenAI Status page did not show a broad active incident. That snapshot does not guarantee future health: whenever a 429 appears, check Status live, and if it is green, continue through account scope, headers and workload shape.

Reduce the next 429 in production

After the immediate recovery, move the lesson into production controls. The application should know its budget before OpenAI rejects it: project/model limiters, tenant budgets, token estimates, queue alerts, retry counters and reset-window observations.

Interactive traffic and background jobs should not compete blindly. Queue non-urgent jobs. Split tenants. Reduce prompt size when possible. Route simpler work to cheaper or lower-pressure models when that is an approved product decision. Use Batch when latency is not urgent and the workload fits.
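
One way to keep interactive traffic and background jobs from competing blindly is a priority queue that serves interactive work first and sheds background work once a cap is reached. This is a hypothetical in-process sketch; the two priority levels, the max_background cap, and the shed counter are illustrative choices rather than a prescribed design.

```python
import heapq
import itertools
from dataclasses import dataclass, field
from typing import Any, List, Optional

INTERACTIVE, BACKGROUND = 0, 1  # lower value is served first


@dataclass(order=True)
class Job:
    priority: int
    seq: int  # tie-breaker keeps FIFO order within a priority
    payload: Any = field(compare=False)


class WorkQueue:
    def __init__(self, max_background: int):
        self.max_background = max_background
        self.shed_count = 0  # worth exporting as a metric and alerting on
        self._heap: List[Job] = []
        self._seq = itertools.count()
        self._background = 0

    def submit(self, payload: Any, interactive: bool) -> bool:
        priority = INTERACTIVE if interactive else BACKGROUND
        if priority == BACKGROUND:
            if self._background >= self.max_background:
                self.shed_count += 1
                return False  # shed non-urgent work instead of adding API pressure
            self._background += 1
        heapq.heappush(self._heap, Job(priority, next(self._seq), payload))
        return True

    def next_job(self) -> Optional[Any]:
        if not self._heap:
            return None
        job = heapq.heappop(self._heap)
        if job.priority == BACKGROUND:
            self._background -= 1
        return job.payload
```

Shed background work is a natural candidate for Batch, where latency is not urgent.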

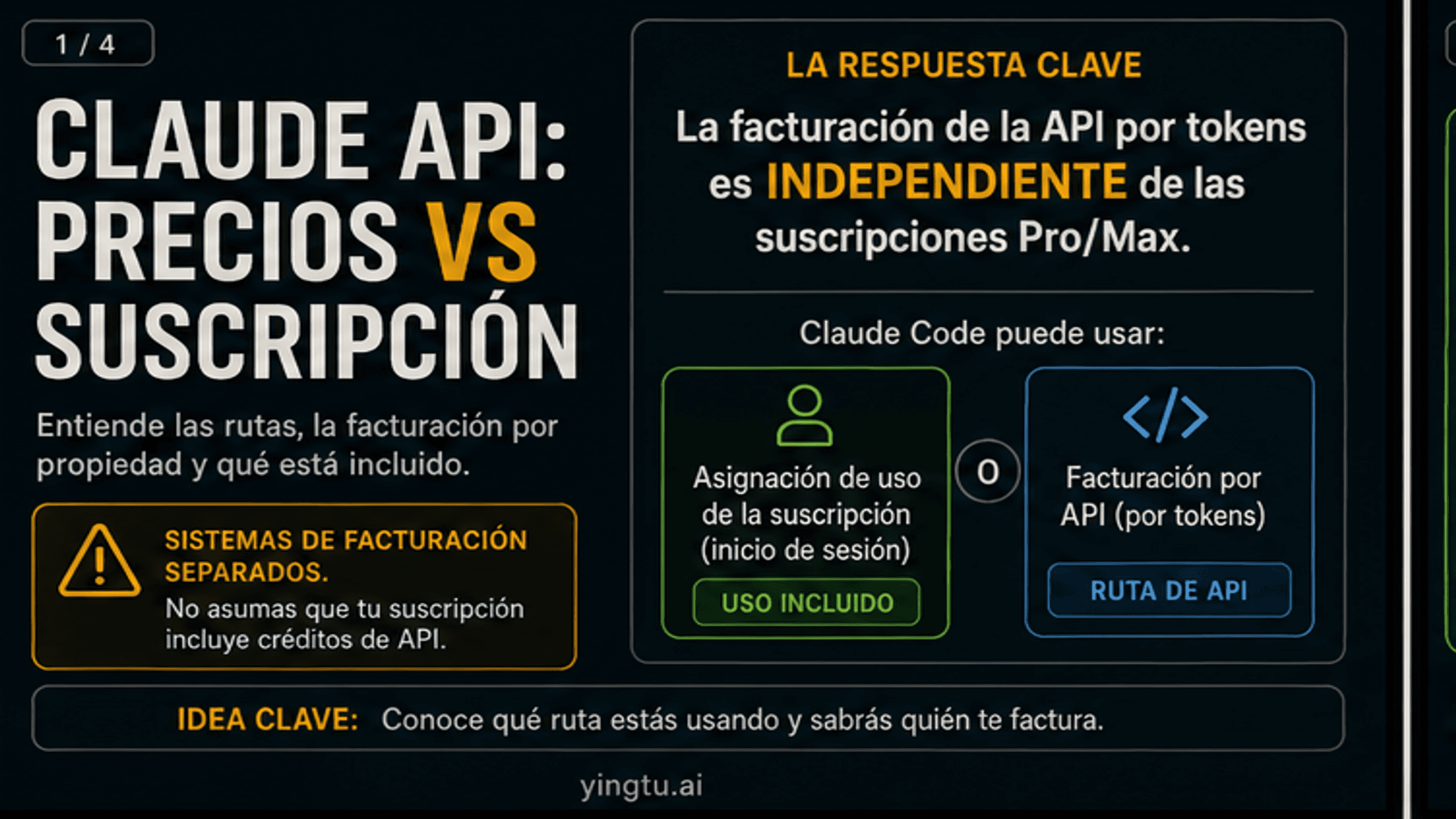

Rule out the wrong surface first

"OpenAI API 429" should mean a Platform API call made by code. ChatGPT, Codex, Sora, Azure OpenAI and wrappers can show limit messages too, but the owner and fix are different.

| Surface | Do not assume | Check instead |
|---|---|---|
| ChatGPT | consumer plan changes API quota | ChatGPT product limits and account state |
| Codex | coding-agent limits equal API RPM/TPM | Codex product contract and status |
| Sora | video capacity equals text API limits | Sora route, queue, plan and video status |
| Azure OpenAI | OpenAI Platform Limits owns deployment | Azure quota, deployment, region and subscription |
| Wrapper | OpenAI headers always pass through | provider dashboard, docs, route id and upstream evidence |

If the request is not sent directly to api.openai.com, identify the provider boundary first. The wrapper may be full, may translate an upstream 429, or may enforce its own account cap.
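
Identifying the provider boundary can start from the client's configured base URL. This sketch assumes two host patterns: api.openai.com for the Platform API and the classic *.openai.azure.com resource endpoint for Azure OpenAI; other Azure endpoint forms and every gateway or wrapper fall into the last bucket and need their own docs. The labels are hypothetical.

```python
from urllib.parse import urlparse


def provider_boundary(base_url: str) -> str:
    """Classify which contract owns a 429, based only on the request host."""
    host = (urlparse(base_url).hostname or "").lower()
    if host == "api.openai.com":
        return "openai-platform"  # Platform Limits, Billing and Usage apply
    if host.endswith(".openai.azure.com"):
        return "azure-openai"  # Azure quota, deployment, region, subscription
    # Anything else may enforce its own cap or translate an upstream 429.
    return "wrapper-or-proxy"
```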

Escalate with clean evidence

Escalate only after the branch is stable and secrets are removed. A compact packet should include timestamp, timezone, request id, endpoint, model, SDK version, organization, project, billing owner, safe body, safe headers, Limits and Usage state, Status state, retry count, concurrency, prompt size, queue depth and recent changes.

Do not post API keys, bearer tokens, card details, private prompts or user data in public places. Clean evidence is faster for support and safer for users.
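
An allowlist is safer than trying to spot every secret. The sketch below keeps only the packet fields listed above, masks credential headers, and scrubs key-shaped strings. The field names, the sk- key pattern, and the header set (including Azure's api-key header) are assumptions; regexes cannot detect private prompts or user data, so those are dropped by omission rather than filtered.

```python
import json
import re
from typing import Any, Dict, Mapping

SECRET_HEADERS = {"authorization", "api-key", "cookie", "set-cookie"}
_BEARER = re.compile(r"(?i)bearer\s+\S+")
_KEY_LIKE = re.compile(r"sk-[A-Za-z0-9_\-]{8,}")

# Hypothetical field names for the escalation packet; anything else is dropped.
ALLOWED_FIELDS = (
    "timestamp", "timezone", "request_id", "endpoint", "model", "sdk_version",
    "organization", "project", "billing_owner", "body", "headers",
    "limits_usage", "status_page", "retry_count", "concurrency",
    "prompt_size_tokens", "queue_depth", "recent_changes",
)


def redact_text(text: str) -> str:
    text = _BEARER.sub("Bearer [REDACTED]", text)
    return _KEY_LIKE.sub("sk-[REDACTED]", text)


def safe_headers(headers: Mapping[str, str]) -> Dict[str, str]:
    return {name: ("[REDACTED]" if name.lower() in SECRET_HEADERS else redact_text(value))
            for name, value in headers.items()}


def support_packet(evidence: Mapping[str, Any]) -> Dict[str, Any]:
    packet: Dict[str, Any] = {}
    for name in ALLOWED_FIELDS:
        if name not in evidence:
            continue
        value = evidence[name]
        if name == "headers":
            value = safe_headers(value)
        elif isinstance(value, (dict, list)):
            value = redact_text(json.dumps(value))  # serialize, then scrub
        elif isinstance(value, str):
            value = redact_text(value)
        packet[name] = value
    return packet
```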

Frequently asked questions

Are all OpenAI API 429s retryable?

No. Retry only when the body and headers point to temporary request or token pressure. insufficient_quota calls for checking Billing, Usage, Limits, project, organization and model access.

What does insufficient_quota mean?

It means a quota, billing, spend cap or account-state problem, not a traffic spike that clears after waiting a few seconds.

Why do I get a 429 after adding credit?

The credit may sit in another organization or project, a spend cap may be active, billing state may still be pending, or the cause may be model access, a shared model-family limit, or a wrapper with its own quota.

Do multiple API keys raise the limit?

Not if they belong to the same project and organization. A new key fixes credentials; it does not create new quota.

Which headers should I check?

The limit, remaining and reset headers for requests and tokens when they are present, together with the Limits page.

Should I check OpenAI Status?

Yes. An incident changes the answer to waiting and saving evidence; if Status is green, continue with account, headers, Limits and workload.

Does ChatGPT Plus share quota with the API?

No. ChatGPT consumer plans and OpenAI Platform API billing are separate surfaces.

What should I send to support?

Timestamp, timezone, request id, endpoint, model, project, organization, safe error body, safe headers, Limits/Usage, Status, retry count, workload and recent changes.

Operational review template

Log every 429 in the same format: time, timezone, endpoint, model, project, organization, error body, headers, Limits, Usage, Billing, Status, retry count, concurrency, prompt size, queue depth and recent changes. That format ends debates about whether something "looks like a limit" and forces a clear separation between rate pressure, quota, billing, scope, status and provider route.

In the retrospective, ask three questions. Which branch gave the first strong signal? Did we change the key, model, project or billing before saving evidence? Which production control should act before the API rejects the request: a central limiter, tenant budget, token estimate, queue, Batch, or an alert on remaining/reset? With those answers, the next 429 will be shorter and cheaper.
