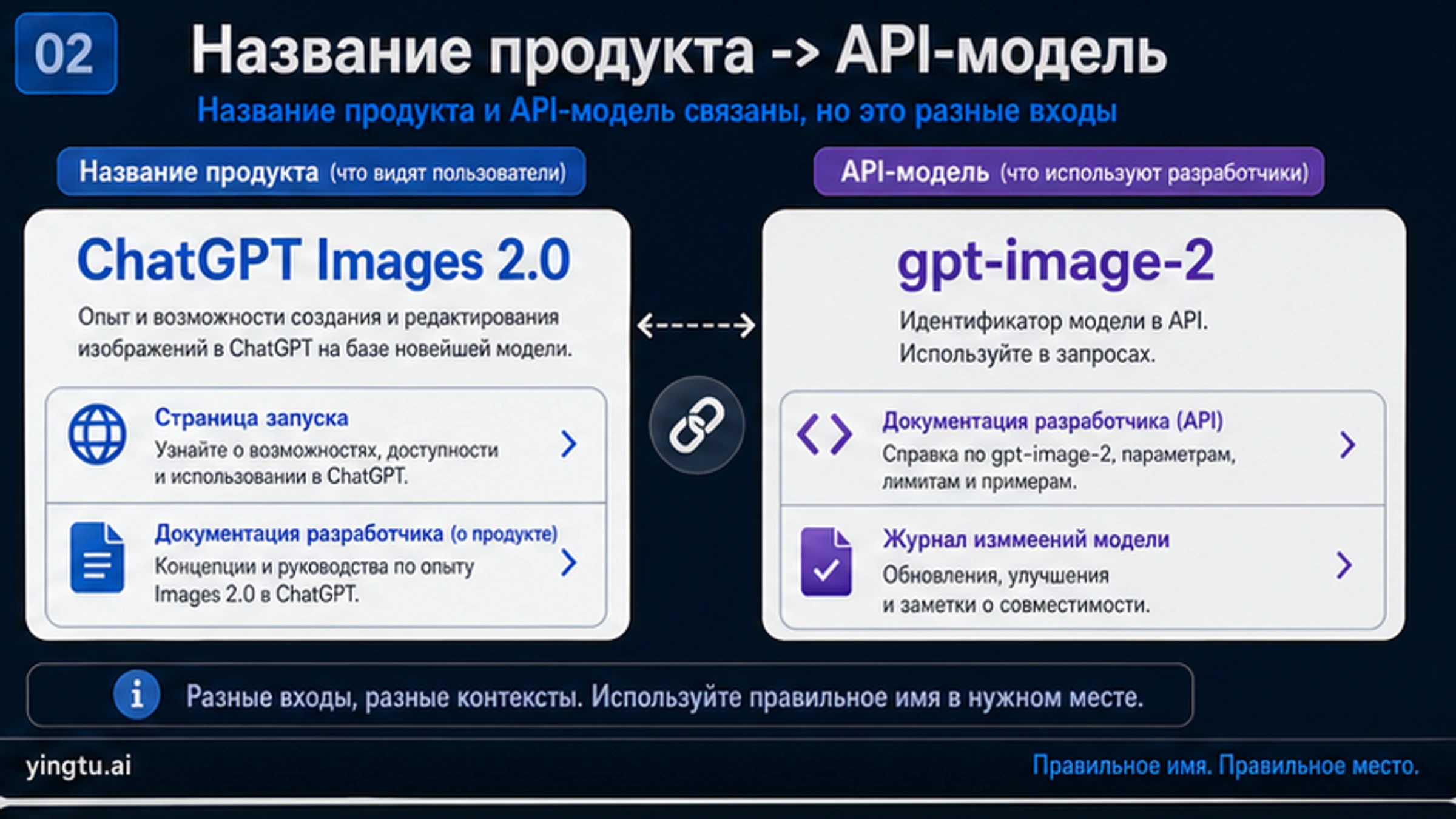

As of April 25, 2026, the practical question for a Russian-speaking team is not "what is new in image generation" but "which route should our task start from". ChatGPT Images 2.0 is the product interface inside ChatGPT. gpt-image-2 is the model ID for developers. These routes belong to the same family of capabilities, but they differ in access rights, control, logging, cost, and expectations about the result.

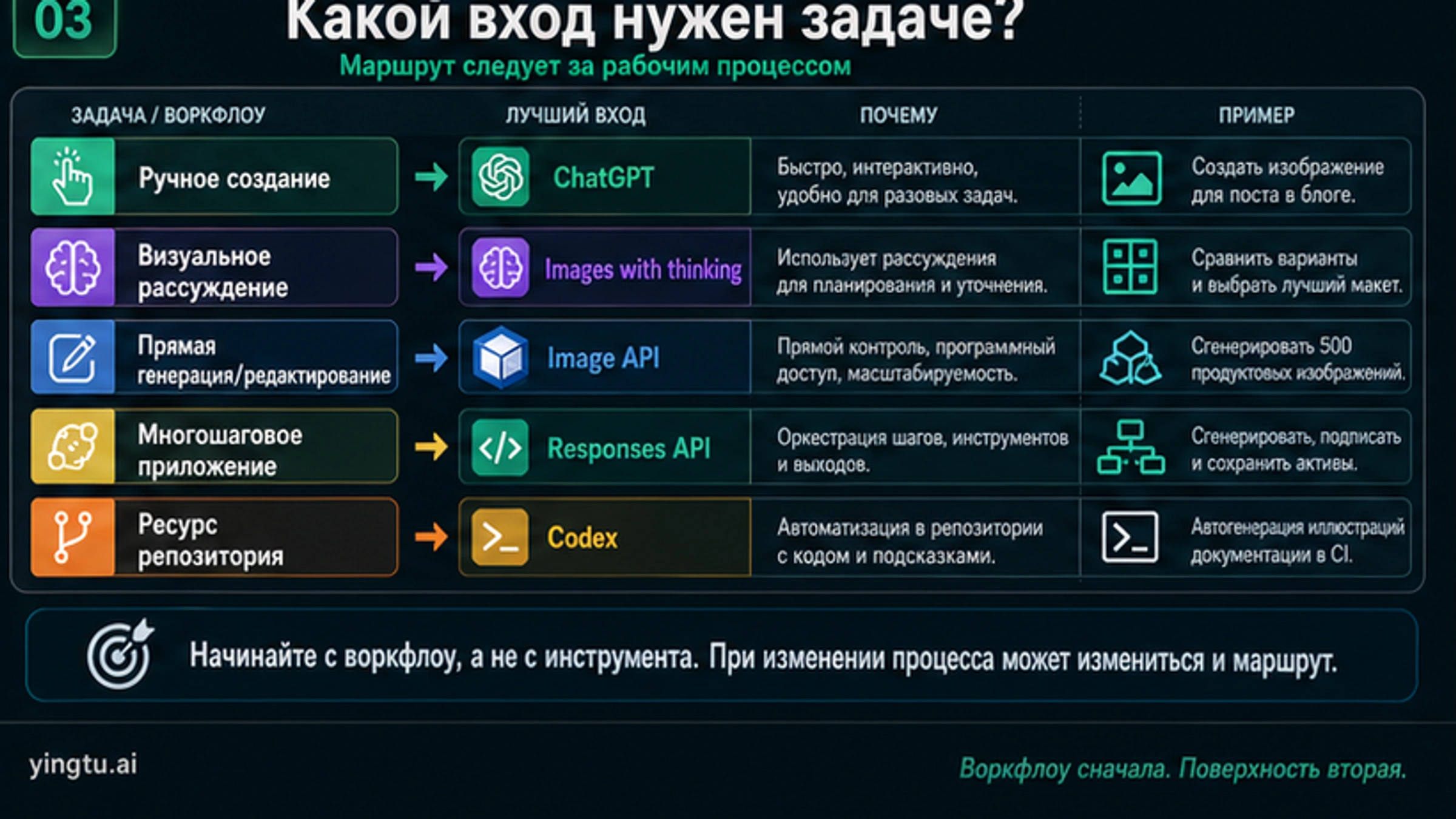

| What you need now | Where to start | When it fits | When not to choose it |
|---|---|---|---|
| One person creates or edits an image by hand | ChatGPT Images 2.0 | You need to write a prompt quickly, look at the result, refine it, and export. | You need backend logs, retries, storage, or cost tracking. |
| The visual task needs reasoning first | Images with thinking | The model has to compare layouts, account for context, use information, or check several variants. | The request is already precise and you need a repeatable API operation. |
| The product must generate or edit images directly | Image API with gpt-image-2 | The application controls the prompt, input files, size, quality, storage, and errors. | The image is one step inside a broader assistant workflow. |
| One flow needs text, tools, and images | Responses API image generation tool | Generation is one tool in a multi-step application or agent process. | You only need a direct generate/edit call. |
| An article, documentation, or UI needs traceable assets | Codex image workflow | Images must live next to the files, prompt, review, alt text, and localization. | Using ChatGPT by hand is enough. |
| The real question is price, free API, 4K, provider, or model comparison | A separate narrow guide | The general route is clear and a more specific question remains. | It is still unclear which interface fits at all. |

Short answer: choose the route first. For manual creative work use ChatGPT, for complex visual reasoning use Images with thinking, for direct programmatic generation use the Image API, for multi-step applications use the Responses API, and for assets inside a repository use Codex. If the question is really about cost, free access, 4K, a provider, or a model comparison, it needs a separate narrow guide.
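The routing rule above can be sketched as a small decision function. This is only an illustration: the flag names and the priority order between them are assumptions made for the example, not something the product or API defines.

```python
from enum import Enum


class Route(Enum):
    CHATGPT = "ChatGPT Images 2.0"
    THINKING = "Images with thinking"
    IMAGE_API = "Image API with gpt-image-2"
    RESPONSES_API = "Responses API image generation tool"
    CODEX = "Codex image workflow"
    NARROW_GUIDE = "Separate narrow guide"


def choose_route(
    *,
    narrow_question: bool = False,       # price, free API, 4K, provider, comparison
    asset_in_repo: bool = False,         # image must be traceable inside a repository
    multi_step_flow: bool = False,       # text, tools, and images in one flow
    programmatic: bool = False,          # product calls generate/edit directly
    needs_visual_reasoning: bool = False,
) -> Route:
    """Map a described need to a starting route (hypothetical priority order)."""
    if narrow_question:
        return Route.NARROW_GUIDE
    if asset_in_repo:
        return Route.CODEX
    if multi_step_flow:
        return Route.RESPONSES_API
    if programmatic:
        return Route.IMAGE_API
    if needs_visual_reasoning:
        return Route.THINKING
    # Default: one person working on an image by hand.
    return Route.CHATGPT
```

A team could call `choose_route(programmatic=True)` during planning and record the returned route next to the task description.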

First, separate the names: ChatGPT Images 2.0, GPT Image 2, and gpt-image-2

In Russian-language discussions you may see "ChatGPT Image 2", "GPT Image 2", "OpenAI image 2.0", and gpt-image-2 side by side. They all point to the same wave of capabilities, but they do not mean the same working contract. ChatGPT Images 2.0 is the product interface. GPT Image 2 is the colloquial name for the model generation. gpt-image-2 is the exact model identifier for the API. If the task is engineering, the model ID matters. If the task is to explain the user interface to the team, the product name matters more. If the task is about price, no name replaces the billing route.

| Name | How to read it | What not to use it for |
|---|---|---|
| ChatGPT Images 2.0 | Access through ChatGPT: manual creation, editing, Images with thinking. | API billing, SDK parameters, backend quotas. |
| GPT Image 2 | General discussion of the model generation and its capabilities. | Exact API calls, where a model ID is required. |
| gpt-image-2 | Generation and editing through the Image API or Responses API. | Promises about ChatGPT plans or consumer-app limits. |
| Images with thinking | A product mode in which the image can be planned and self-checked. | Claiming that every API call has the same thinking switch. |

This separation prevents two expensive mistakes. The first is reading a piece about the ChatGPT launch and deciding that the API integration is already understood. The second is seeing gpt-image-2 and assuming that the interface, plans, tools, and availability are the same as in ChatGPT. The surfaces are related, but the route changes control, logging, and the promises you can make to users.

What changed in ChatGPT Images 2.0

The public description of ChatGPT Images 2.0 emphasizes stronger handling of text, multilingual lettering, infographics, slides, maps, comics, product mockups, and flexible formats. This matters because real production images rarely reduce to "make it look nice". They need readable words, hierarchy, layout, captions, diagrams, interface elements, and the local language. For Russian-speaking teams this is especially noticeable in presentations, marketplace visuals, study cards, Telegram creatives, and product explainers.

Images with thinking changes not only image quality but also the working process. In the product's logic, this mode can reason first, use tools, take web context into account, produce several variants from one prompt, and check the result before delivering it. That does not make the model error-free, but it helps where a structure has to be chosen before rendering: a campaign, a dense infographic, a map, a multilingual poster, a product comparison, or a series of consistent visuals.

Do not turn this into a promise of perfection. Stronger text does not mean flawless Russian typography. More accurate lettering does not mean that dates, prices, product names, legal lines, and small captions are always correct. The more convincing an image looks, the more carefully you need to check facts, rights, likeness, sensitive context, and final files.

| Task | Where Images 2.0 helps | What still needs checking |
|---|---|---|
| Advertising posters and banners | More stable text, composition, and visual hierarchy. | Spelling, punctuation, small text, prices, names. |
| Infographics and slides | Better grouping of blocks, icons, captions, and structure. | Data, ordering, units, and the meaning of captions and sources. |
| Multilingual assets | Better mixed scripts and local layout. | Naturalness of phrasing, line breaks, fonts, glyphs. |
| Product mockup | Better scenes, detail, realism, and polish. | Product facts, brands, rights, compliance. |
| Comics and storyboards | Better scene sequencing and a unified style. | Character consistency, tone, safety, rights. |

The practical improvement is conditional. ChatGPT Images 2.0 makes more complex visual tasks achievable, but the review checklist becomes more important: a convincing image can hide a small but critical factual error.

Choose the route by workflow

The fastest route is not always the best one for production. A manual creative task, thinking-mode exploration, a direct API call, a multi-step agent workflow, and an asset inside a repository each require a different process.

Use ChatGPT when a person works on the image by hand. It is a good path for quick visual experiments, campaign drafts, social images, client mockups, first slides, and prompt iterations. Its strength is fast feedback. Its weakness is production control: the backend does not get durable logs, retries, storage paths, or cost tracking on its own.

Use Images with thinking when the difficulty lies in the visual decision. If the model has to compare layouts, understand a map, take context into account, propose several directions, or self-check a dense infographic, the extra reasoning can justify the latency. If the request is already precise and you need a repeatable programmatic call, look at API-level control instead.

Use the Image API when the product needs direct generation or editing through gpt-image-2. This is the route for teams that need to store inputs, outputs, errors, retries, accepted results, and user actions. The user clicks a button, uploads a reference, or asks for an edit; the system manages the lifecycle.

Use the Responses API when the image is one tool inside a larger flow. The assistant can gather context, call tools, reason over the brief, generate an image, explain the result, and ask a clarifying question. The image is then not a standalone endpoint result but a step in an interactive process.

Use Codex when the image belongs to work in a repository. Article covers, documentation boards, UI explainers, localized images, and reviewable prompts should sit next to the source files. This matters when the result goes through review, build checks, alt text, and multilingual publication.

API details to verify before development

The developer route starts with the exact model ID: gpt-image-2. The current snapshot on the models page is recorded as gpt-image-2-2026-04-21. If the team needs reproducible tests, record the snapshot and date in internal notes, not just the phrase "new image model".

Account readiness is part of the plan too. The documentation states that organization verification may be required for GPT Image models. This is not a minor footnote but an early implementation blocker. The product schedule should not assume that the API can already be called from an unverified organization.

Treat sizes as engineering constraints. gpt-image-2 supports flexible custom sizes, but the documented boundaries include a longest side of at most 3840px, both sides being multiples of 16px, a long-to-short side ratio of at most 3:1, and a total pixel count that stays within the documented range. If the real task is 4K, an exact size, or upscaling, use the separate GPT Image 2 4K guide rather than turning this routing page into a full sizing manual.
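A pre-flight check for these boundaries might look like the sketch below. It encodes only the three numeric limits named in this section. The total pixel range is left as an optional parameter because its numbers are not given here, and the function name and return shape are hypothetical.

```python
from typing import List, Optional, Tuple

MAX_SIDE_PX = 3840        # longest side limit stated above
SIDE_MULTIPLE_PX = 16     # both sides must be multiples of this
MAX_ASPECT_RATIO = 3.0    # long side : short side


def size_problems(
    width: int,
    height: int,
    pixel_range: Optional[Tuple[int, int]] = None,  # (min, max) taken from the docs, if known
) -> List[str]:
    """Return human-readable problems; an empty list means the size passes these checks."""
    if width <= 0 or height <= 0:
        return ["both sides must be positive"]
    problems = []
    long_side, short_side = max(width, height), min(width, height)
    if long_side > MAX_SIDE_PX:
        problems.append(f"longest side {long_side}px exceeds {MAX_SIDE_PX}px")
    if width % SIDE_MULTIPLE_PX or height % SIDE_MULTIPLE_PX:
        problems.append(f"both sides must be multiples of {SIDE_MULTIPLE_PX}px")
    if long_side > MAX_ASPECT_RATIO * short_side:
        problems.append("aspect ratio exceeds 3:1")
    if pixel_range is not None:
        low, high = pixel_range
        if not low <= width * height <= high:
            problems.append("total pixel count is outside the given range")
    return problems
```

Running such a check before the request is sent keeps size errors out of the retry and cost logs.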

Pricing calls for the same discipline. The cost of an image depends on token categories, input images, quality, output size, route, and retries. There is no universal fixed price per image. If the real question is a cheap API or a provider route, go to the guide on cheap GPT Image 2 API access. If the question is whether an official free API exists, you need the answer on the free GPT Image 2 API.

Transparent backgrounds are a separate boundary. The current image generation guide does not list transparent-background output as a direct capability of gpt-image-2. For logos, stickers, UI cutouts, and transparent PNGs, plan for compositing or post-processing, and check the final file, not just the generation preview.

Where Codex fits

Codex does not replace image access in ChatGPT and is not a cheap way around the API. It is useful when the visual is part of a repository task: an article cover, an explanatory board, an image for docs, a UI state diagram, a localized asset set, or a file that has to be traceable through its prompt, path, review, and build.

The operating model is different. In ChatGPT, the final image is often the deliverable itself. In Codex, the image is one artifact inside a change set. What matters is the prompt, the evidence, the selected image, the resized publish asset, the alt text, the reference in the text, the localization, and the final review. For a publication workflow, that traceability is worth more than a decorative hero image.

The Codex route is appropriate when visuals teach: when they show the route owner, the difference between names, the workflow choice, and production safety. A generic launch image would not help the reader choose a path. A dense routing board does.

Price, free access, 4K, providers, and comparisons

ChatGPT Images 2.0 immediately raises several follow-up questions, but they are different reader jobs. A strong routing page should send each question to the right surface rather than absorb everything into one overloaded article.

If the question is about price, first identify the contract owner and the billing unit. Direct OpenAI billing, Batch-style discounts, flat per-call pricing from a provider, marketplace credits, and bundled app access may all refer to the same model capability yet differ in support, privacy, failure handling, and limits. Comparing them before the contract owner is identified is pointless.

If the question is about free access, separate access in the ChatGPT app from official API billing. A user can try generation in ChatGPT, but that does not create backend API credit. Provider trials, browser tests, local promotions, and official billing each need to be checked separately.

If the question is about 4K, it is implementation work. You need allowed dimensions, the saved file, compression, resize behavior, native generation versus upscale, and visual QA. That is a sizing guide, not a launch recap.

If the question is about comparison, define the workload first. Text-heavy posters, Russian text, product mockups, reference edits, diagrams, and cinematic images cannot be judged with one generic prompt. Compare the models on the prompt classes the product actually needs.

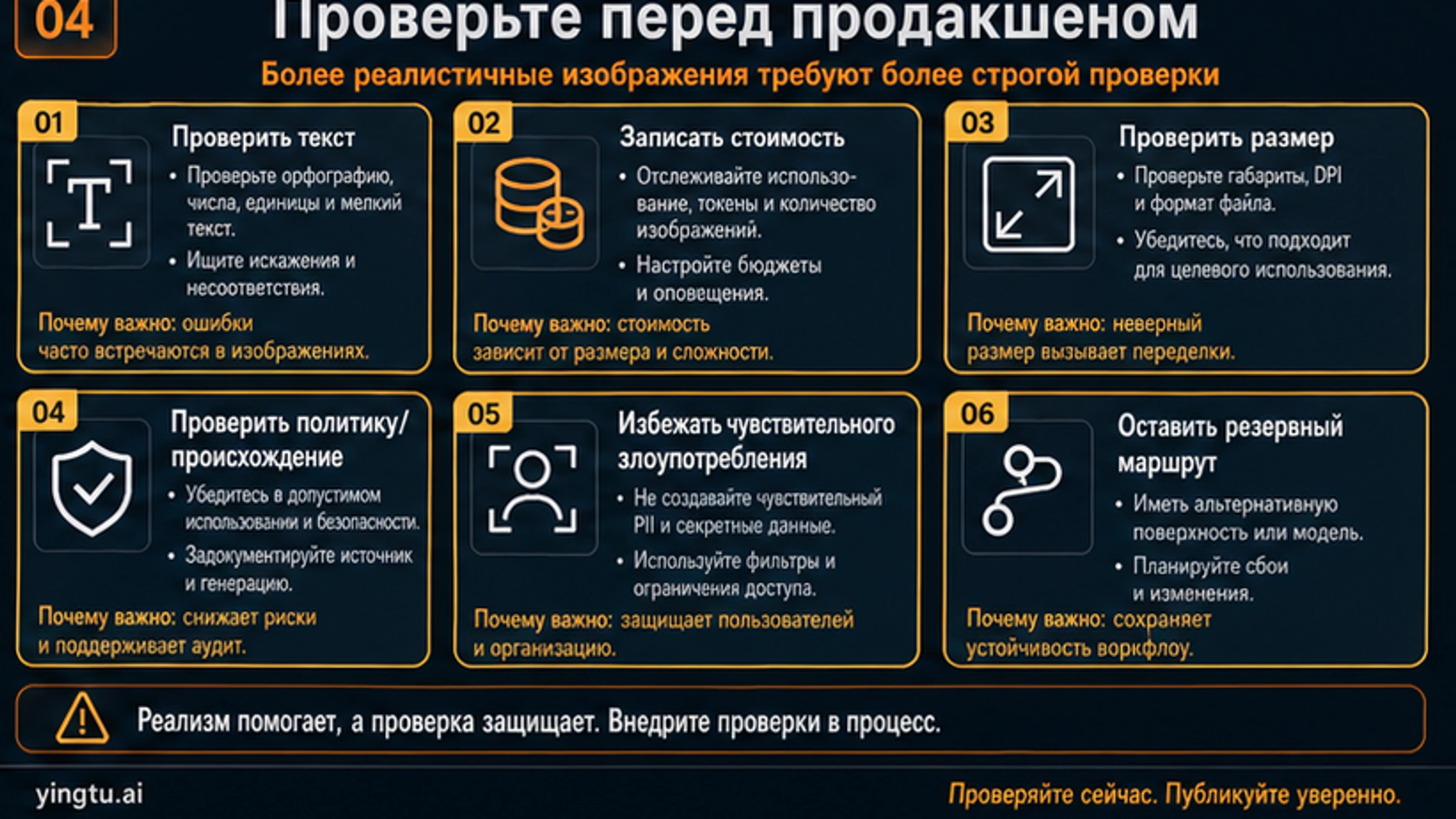

Checks before publishing

Higher quality does not remove the need for review; it changes what the review has to catch. A realistic, polished image can make a wrong word, date, number, or claim look plausible. That is more dangerous than an obviously broken result.

Start with the text. Check spelling, capitalization, punctuation, dates, names, prices, units, labels, product claims, buttons, chart axes, and fine print. Russian-language and multilingual assets need a reader who understands the language and looks at the line breaks. Do not approve an image just because it looks professional.

Then check dimensions and format. Save the API output and inspect the actual width, height, file type, compression, and downstream resizing. Social cards, covers, slides, ads, and in-app previews have different constraints. The generation preview is not a final asset test.
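One way to inspect the real width and height of a saved file without extra dependencies is to read the PNG header directly. The sketch below assumes the output was saved as PNG. Other formats need a different parser or an imaging library, and the function name is hypothetical.

```python
import struct
from typing import Tuple

PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"


def png_dimensions(data: bytes) -> Tuple[int, int]:
    """Read (width, height) from the IHDR chunk at the start of a PNG file."""
    # Layout: 8-byte signature, 4-byte chunk length, 4-byte chunk type "IHDR",
    # then width and height as big-endian unsigned 32-bit integers.
    if len(data) < 24 or data[:8] != PNG_SIGNATURE or data[12:16] != b"IHDR":
        raise ValueError("not a PNG file or the header is truncated")
    width, height = struct.unpack(">II", data[16:24])
    return width, height
```

For a file on disk, `png_dimensions(open(path, "rb").read(24))` is enough, since only the first 24 bytes are inspected.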

Log cost behavior before increasing volume. Record prompt length, input images, quality, output size, blocked requests, retry count, accepted output rate, and final file handling. A route that looks cheap in a single test can become expensive with high quality, edits, and retries.
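The fields listed above can be captured in a simple per-request record and rolled up before volume grows. This is a minimal sketch: the record shape, field names, and the example values for quality and size are assumptions for illustration, not an API schema.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class GenerationRecord:
    prompt_chars: int     # prompt length in characters
    input_images: int     # number of reference or edit inputs
    quality: str          # quality label as sent by the application
    output_size: str      # e.g. "1024x1024" (hypothetical label)
    retries: int          # retries needed for this request
    blocked: bool         # request was blocked
    accepted: bool        # a human or downstream check accepted the output


def summarize(records: List[GenerationRecord]) -> Dict[str, float]:
    """Aggregate the rates that tend to drive real cost."""
    total = len(records)
    if total == 0:
        return {"requests": 0, "accepted_rate": 0.0, "blocked_rate": 0.0, "avg_retries": 0.0}
    return {
        "requests": total,
        "accepted_rate": sum(r.accepted for r in records) / total,
        "blocked_rate": sum(r.blocked for r in records) / total,
        "avg_retries": sum(r.retries for r in records) / total,
    }
```

Grouping these summaries by quality and output size would show where a route that looked cheap in a single test starts to cost more.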

Policy and provenance checks belong next to visual QA. Keep prompts, source references, rights assumptions, approvals, safety reviews, and fallback decisions. The production pipeline has to know what happens when a route is slow, blocked, too expensive, or unsuitable for the prompt.

Migration rule for existing image workflows

Do not replace a stable image workflow just because ChatGPT Images 2.0 is newer. Test the tasks that matter to the product: a dense poster with text, a product mockup, a multilingual visual, a diagram, a reference edit, and a final-size asset. Compare the new and old routes on accepted quality, editability, cost, latency, safety review, handoff friction, and fallback behavior.

Test ChatGPT Images 2.0 first if the current workflow is weak at text, layout, or deliberate visual reasoning. Direct gpt-image-2 API calls fit when the product needs programmatic output. The Responses API fits when images are part of a broader tool flow. Codex fits when the visual is part of a repository publication pipeline. Keep the old route if it is cheaper, safer, or more predictable for the specific task.

This migration rule is less exciting than a launch headline, but it prevents unnecessary engineering work. A stronger image model gets production traffic only when it beats the current baseline on the prompts that matter.
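The gate can be expressed as a comparison per prompt class. The sketch below assumes each route has already been reduced to one score per class (for example, accepted output rate). A real evaluation would also weigh cost, latency, and safety review, and the function and class names are hypothetical.

```python
from typing import Dict, List


def losing_classes(
    baseline: Dict[str, float],
    candidate: Dict[str, float],
    important: List[str],
) -> List[str]:
    """Prompt classes where the candidate does not strictly beat the baseline."""
    # A class missing from either side is scored 0.0, so an untested candidate loses.
    return [c for c in important if candidate.get(c, 0.0) <= baseline.get(c, 0.0)]


def should_migrate(
    baseline: Dict[str, float],
    candidate: Dict[str, float],
    important: List[str],
) -> bool:
    """Move traffic only if the candidate wins on every important class."""
    return not losing_classes(baseline, candidate, important)
```

Returning the losing classes, not just a boolean, tells the team which prompts to keep on the old route.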

FAQ

Is "OpenAI Images 2.0" the official name?

For the product route it is safer to use ChatGPT Images 2.0. The phrase "OpenAI Images 2.0" may appear as a colloquial label for the same wave of capabilities, but the working split is this: ChatGPT Images 2.0 for the product, gpt-image-2 for API calls.

Is ChatGPT Images 2.0 already available?

The Help Center describes the availability of ChatGPT Images 2.0 by ChatGPT plan and lists the availability of Images with thinking separately. Use dated wording and do not promise exact quotas unless the current plan details have been checked before publication or release.

Does ChatGPT Images 2.0 have an API?

A developer needs the model ID gpt-image-2. For direct generation or editing, use the Image API. If the image is part of a broader app or agent workflow, use the image tool in the Responses API.

Is gpt-image-2 free?

Do not assume that a supported free official API exists. Access in the ChatGPT app, provider trials, browser tests, and API billing are different contracts. Free access has to be checked separately.

Which should I choose: the Image API or the Responses API?

The Image API fits when the application needs a direct generation or editing call. The Responses API fits when image generation is one tool among reasoning, text, tools, and follow-up explanation.

When should I use Codex for images?

Use Codex when the image is part of a change in a repository: visuals for articles, docs assets, UI boards, localized publish images, or any assets that need a prompt, a file path, and review traceability.

Does ChatGPT Images 2.0 produce perfect Russian text in images?

There is no production workflow in which this can be treated as a guarantee. The model is stronger at text-heavy and multilingual visuals, but words, line breaks, glyphs, dates, names, and claims still require human review.

What should I test before moving production traffic?

Test the prompt classes the product depends on: dense text, Russian text, multilingual text, product images, edits, diagrams, and final-size assets. Measure accepted output rate, retries, cost, latency, safety review, and fallback behavior.