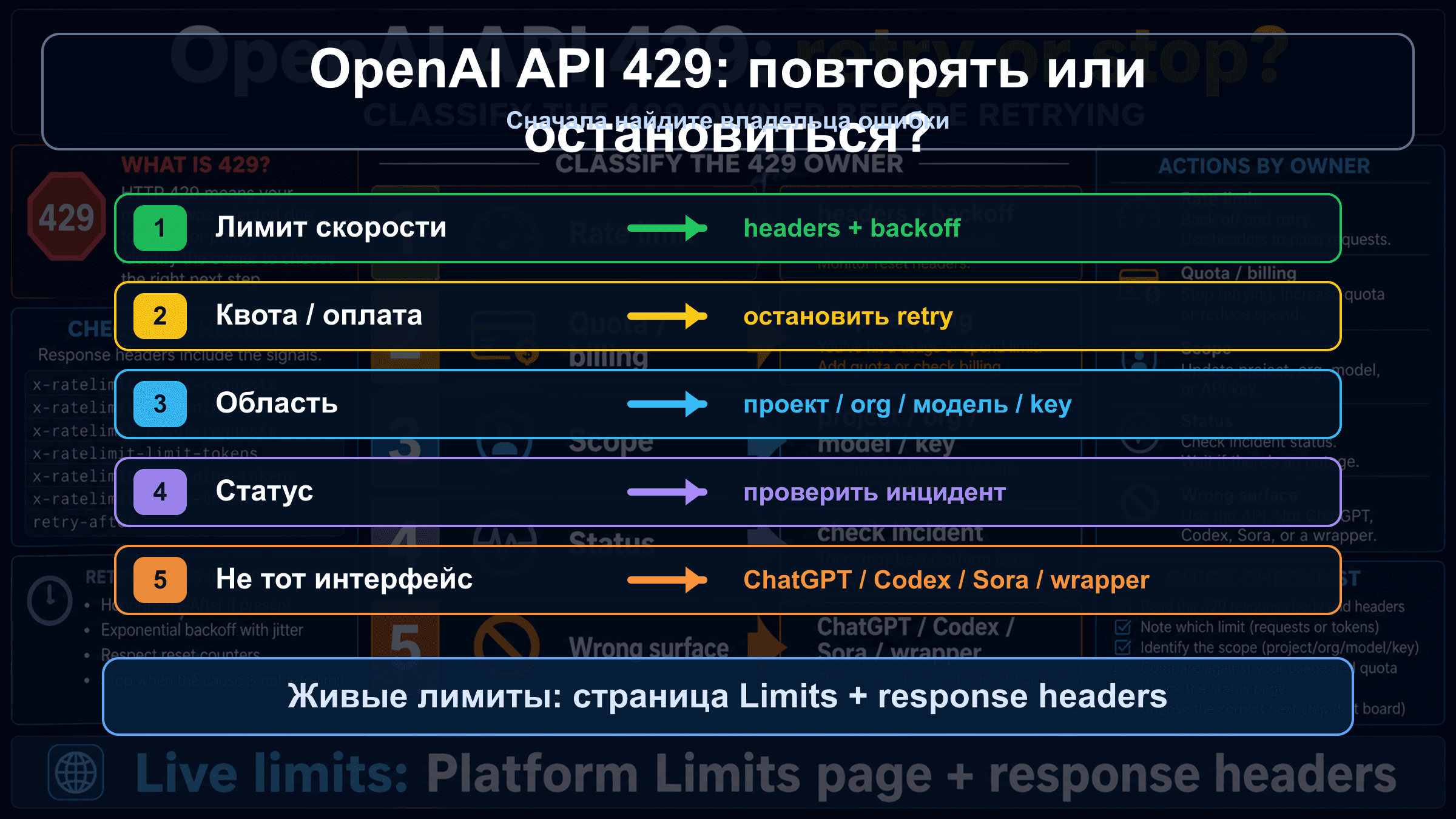

If the OpenAI Platform API returns 429, do not automatically raise the retry count. Read the error body first: temporary pressure on requests or tokens calls for backoff, throttling and a queue; quota, billing, project scope, model access, a status incident or a wrapper limit each call for a different check.

| Signal | Likely owner | First check | Retry or stop |
|---|---|---|---|
| rate limit reached, too many requests, remaining headers near zero | request or token rate limit | headers, Limits, model family, reset window | retry with backoff and jitter, then throttle or queue |
| You exceeded your current quota or insufficient_quota | quota, billing or spend cap | Billing, Usage, Limits, monthly spend, account state | stop until account state changes |
| a new key fails the same way, or only one project/model fails | project, organization, model or key scope | selected project, organization, model access | fix scope before traffic changes |
| many calls fail and Status shows an incident | platform status or capacity | OpenAI Status, timestamp, request id | wait, preserve evidence |
| error comes from ChatGPT, Codex, Sora, Azure or a wrapper | wrong surface | product surface, provider docs, route, headers | switch to that surface's contract |

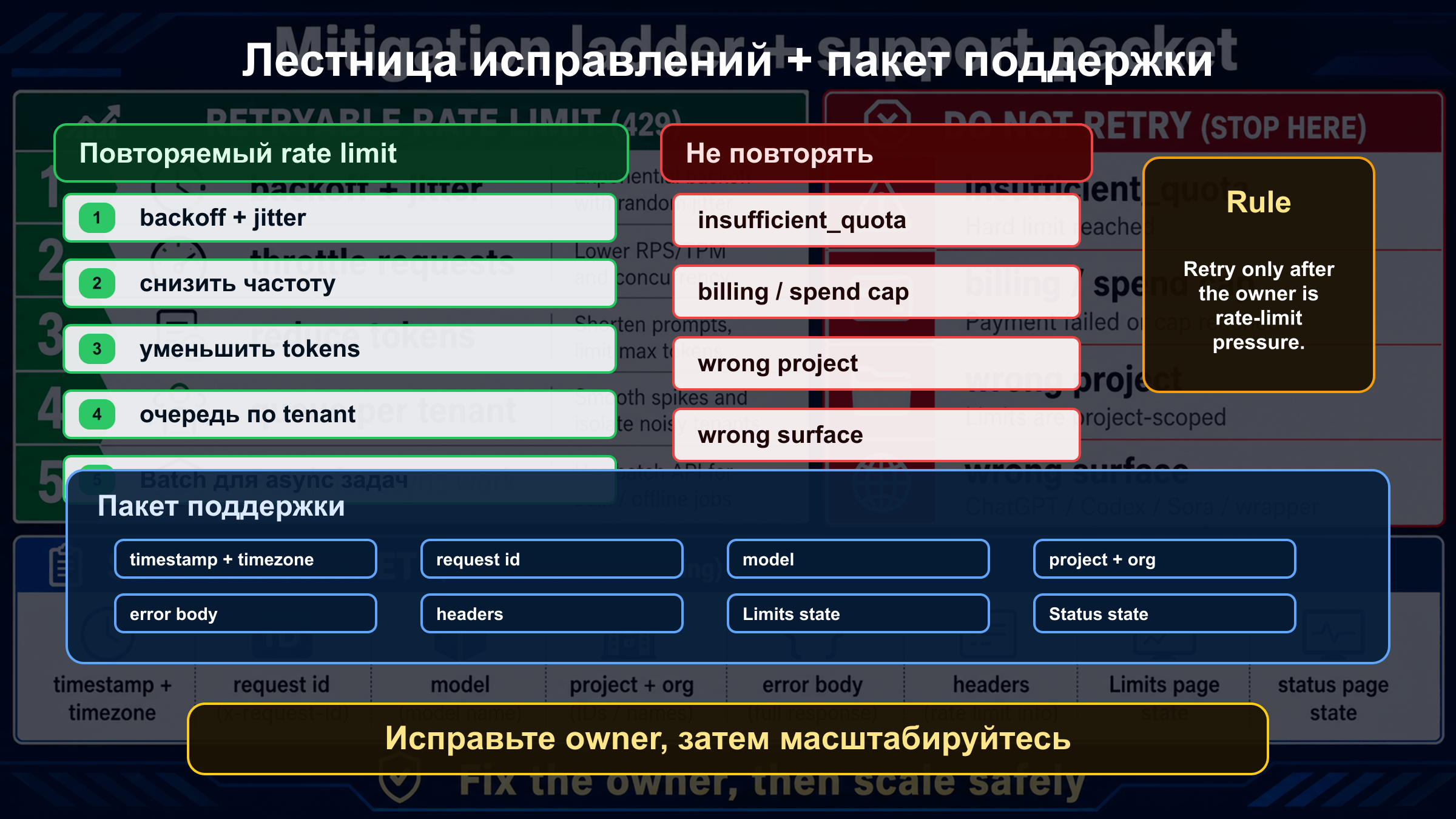

The stop rule is simple: retry only when the owner of the error is request/token pressure and there is a reset signal. If the body points to quota, billing, wrong project, wrong model, wrong surface or a status incident, repeating the same request does not fix the problem.

Read the 429 body first, then change the code

OpenAI's official docs distinguish at least two 429 families: traffic arriving too fast, and the current quota being exhausted. Developer searches usually collapse both into one phrase, so the error body should drive the first decision. Record message, code, type, endpoint, model, project, organization, timestamp, and request id before changing retry policy.

The safe classification is conservative. If insufficient_quota or current quota wording appears, treat it as a quota or billing stop. If the body says rate limit or too many requests and the headers show remaining/reset information, treat it as retryable pressure. If neither branch is clear, hold the route steady and collect evidence instead of changing five variables at once.
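The conservative classification above can be sketched as a small function. This is a minimal illustration, not an official client: the function name `classify_429`, the marker strings, and the assumption that the error arrives as a JSON object with an `error` field containing `code`, `type` and `message` are all hypothetical choices made for this example.

```python
from typing import Any, Dict, Mapping

# Marker strings are assumptions drawn from the wording quoted in this article.
QUOTA_MARKERS = ("insufficient_quota", "exceeded your current quota")
RATE_MARKERS = ("rate limit", "rate_limit", "too many requests")


def classify_429(body: Dict[str, Any], headers: Mapping[str, str]) -> str:
    """Return 'quota_stop', 'rate_retry' or 'unknown' for a 429 response."""
    err = body.get("error") or {}
    if not isinstance(err, dict):
        err = {"message": str(err)}
    text = " ".join(str(err.get(k) or "") for k in ("code", "type", "message")).lower()

    # Quota wording wins: the conservative branch is to stop.
    if any(marker in text for marker in QUOTA_MARKERS):
        return "quota_stop"

    # Rate wording alone is not enough; require a remaining/reset signal too.
    names = {name.lower() for name in headers}
    has_reset_signal = "retry-after" in names or any(
        n.startswith("x-ratelimit-reset") or n.startswith("x-ratelimit-remaining")
        for n in names
    )
    if has_reset_signal and any(marker in text for marker in RATE_MARKERS):
        return "rate_retry"

    # Neither branch is clear: hold the route steady and collect evidence.
    return "unknown"
```

Returning `unknown` rather than guessing keeps the caller from retrying on a body it has not understood.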

This matters in real operations because an ambiguous 429 can tempt teams into the wrong repair. A tight retry loop can consume more minute capacity. A new key can hide the fact that the same project is still blocked. A billing change in the wrong organization can leave the production request untouched.

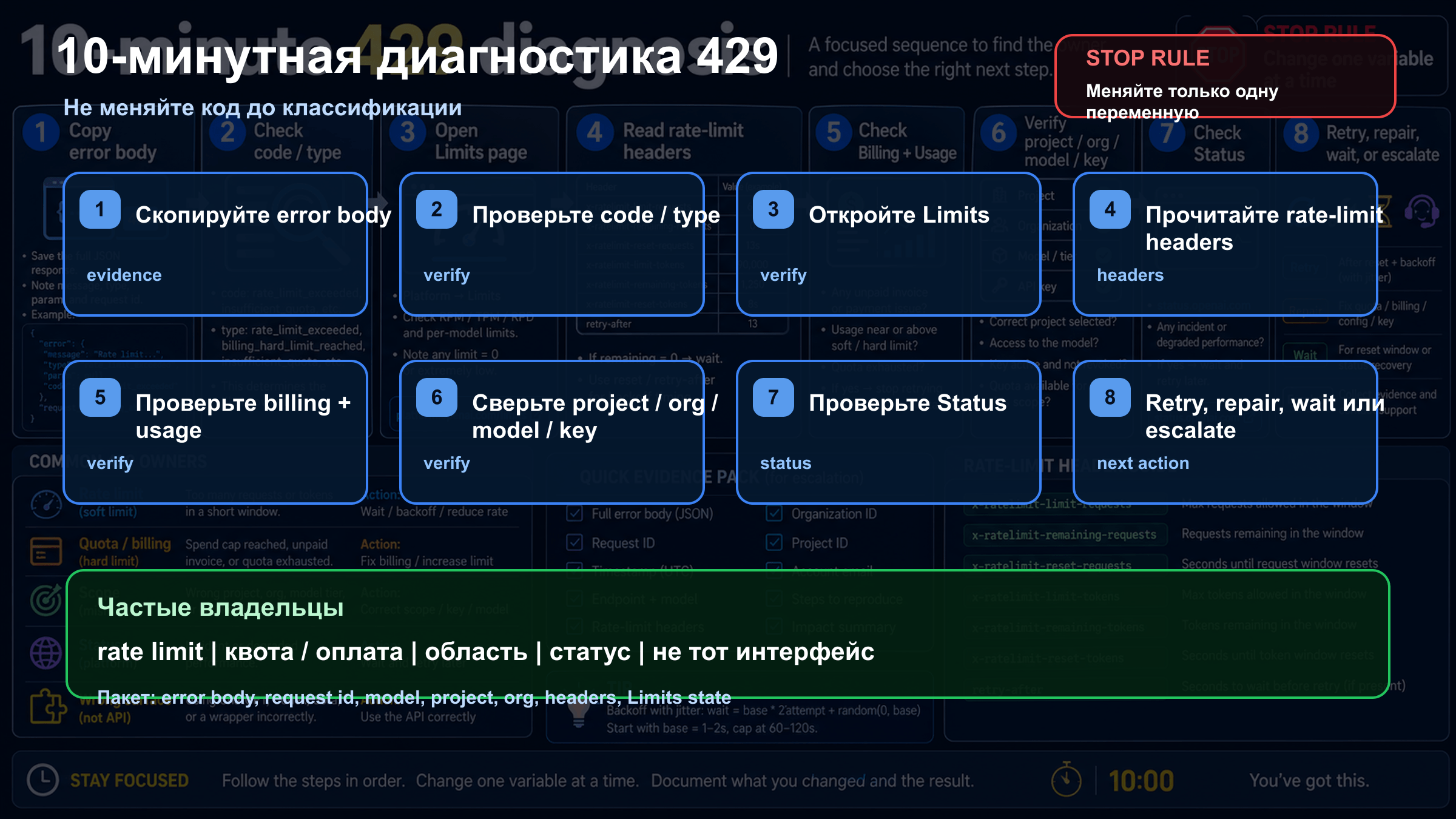

A 10-minute recovery route

Use the first ten minutes to classify the owner, not to experiment randomly. Copy the raw body and headers, open Limits, Billing and Usage for the same project and organization, confirm the model family, check OpenAI Status, then send one smaller controlled request. That sequence keeps the evidence readable.

| Minute | Action | What it proves |
|---|---|---|
| 0-1 | Save body and headers | whether the branch is rate, quota, billing or unknown |
| 1-3 | Check Limits, Usage and Billing | whether the account has capacity or billing state problems |
| 3-5 | Compare model and endpoint | whether a stricter or shared model family is involved |
| 5-7 | Check OpenAI Status | whether a public incident changes the response |
| 7-10 | Send one smaller controlled request | whether workload size or account state is likely |

If the smaller request works, investigate concurrency, token size, image throughput or fan-out. If it fails with quota wording, stop retrying. If unrelated endpoints fail during a declared incident, preserve evidence and wait rather than rotating accounts.

When retry and backoff are actually needed

Retry and backoff are correct only for temporary request or token pressure. The useful signals are rate-limit wording, low remaining values, reset timing, and a traffic pattern that exceeds the current project/model budget. Retry is not a magic repair; it is a pacing tool.

Use exponential backoff with jitter, cap retry count, and add a central limiter per project and model family. A limiter inside each worker is not enough if workers do not share state. Estimate token size before dispatch, because reducing prompt size or max output can remove TPM pressure before the API rejects the request.
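A capped exponential backoff with full jitter might look like the sketch below. The names `RetryableRateLimit`, `backoff_delays` and `call_with_backoff` are hypothetical, and the defaults (5 retries, 1 s base, 30 s cap) are illustrative values, not recommendations from OpenAI. The caller is assumed to raise `RetryableRateLimit` only after classifying the 429 as rate pressure.

```python
import random
import time
from typing import Callable, Iterator, TypeVar

T = TypeVar("T")


class RetryableRateLimit(Exception):
    """Raised by caller code when a 429 was classified as rate pressure."""


def backoff_delays(max_retries: int = 5, base: float = 1.0, cap: float = 30.0,
                   rng: Callable[[], float] = random.random) -> Iterator[float]:
    """Yield one jittered delay per retry: uniform in [0, min(cap, base * 2**n))."""
    for attempt in range(max_retries):
        ceiling = min(cap, base * (2 ** attempt))
        yield rng() * ceiling


def call_with_backoff(send: Callable[[], T], max_retries: int = 5, base: float = 1.0,
                      cap: float = 30.0, sleep: Callable[[float], None] = time.sleep) -> T:
    """Call send(); on RetryableRateLimit wait and retry, at most max_retries times."""
    for delay in backoff_delays(max_retries, base, cap):
        try:
            return send()
        except RetryableRateLimit:
            sleep(delay)
    # Last allowed attempt: let the exception propagate to the caller.
    return send()
```

Any other exception, including a quota or billing error, passes straight through without a retry, which matches the stop rule.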

Failed requests can still count toward minute limits. A fleet that retries every second can keep itself inside the failure window. A good system slows down, queues, sheds non-urgent work, or uses Batch for async work.
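One way to slow down before the API rejects traffic is a token-bucket limiter keyed by project and model family. The sketch below is in-process only, so it illustrates the bookkeeping rather than a production design: workers in separate processes would need the same state in a shared store. `TokenBucket` and `CentralLimiter` are hypothetical names, and the capacity and refill values are assumptions the operator would set from the live Limits page.

```python
import threading
import time
from typing import Callable, Dict, Tuple


class TokenBucket:
    """Classic token bucket: refills continuously, rejects when empty."""

    def __init__(self, capacity: float, refill_per_sec: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.capacity = float(capacity)
        self.refill_per_sec = float(refill_per_sec)
        self._clock = clock
        self._tokens = float(capacity)
        self._last = clock()
        self._lock = threading.Lock()

    def try_acquire(self, cost: float = 1.0) -> bool:
        with self._lock:
            now = self._clock()
            elapsed = now - self._last
            self._tokens = min(self.capacity, self._tokens + elapsed * self.refill_per_sec)
            self._last = now
            if cost <= self._tokens:
                self._tokens -= cost
                return True
            return False


class CentralLimiter:
    """One bucket per (project, model_family) pair, shared by all callers."""

    def __init__(self, capacity: float, refill_per_sec: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._args = (capacity, refill_per_sec, clock)
        self._buckets: Dict[Tuple[str, str], TokenBucket] = {}
        self._lock = threading.Lock()

    def try_acquire(self, project: str, model_family: str, cost: float = 1.0) -> bool:
        key = (project, model_family)
        with self._lock:
            if key not in self._buckets:
                self._buckets[key] = TokenBucket(*self._args)
            bucket = self._buckets[key]
        return bucket.try_acquire(cost)
```

When `try_acquire` returns `False`, the caller can queue the job or shed non-urgent work instead of sending a request that is likely to fail.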

When retry only makes things worse

Retry is wrong when the error points to insufficient_quota, current quota, billing, monthly spend or account state. Waiting a few seconds does not add quota. The correct path is Billing, Usage, Limits, spend cap, organization, project and model access.

Many "I have credits but still get 429" cases are scope problems. The credit can be in another organization. The request can use another project. A monthly spend cap can be active. A model can be unavailable to that project. A wrapper can be applying its own pool. Keep one minimal request stable while checking each scope.

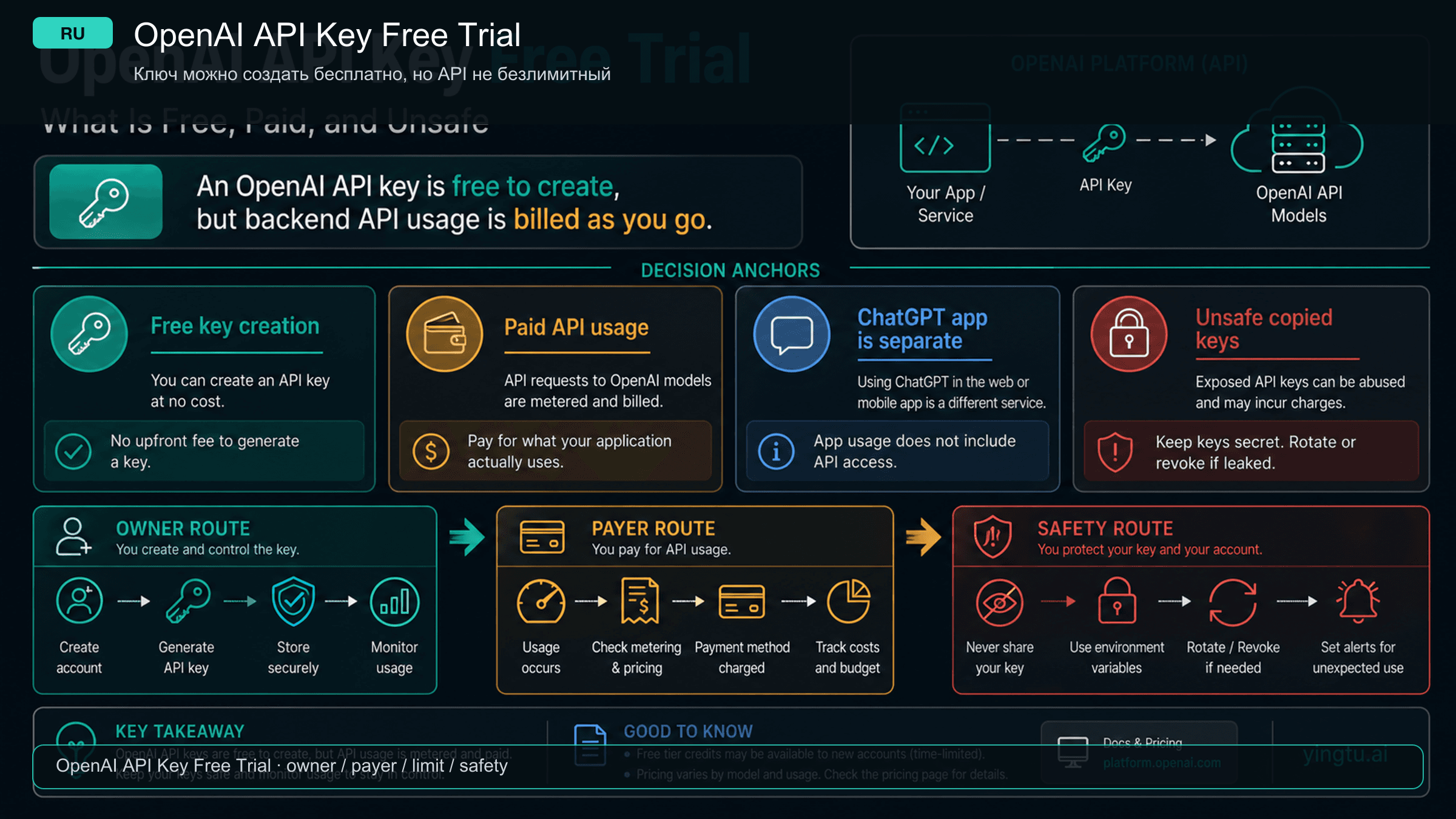

Why a new API key may not help

An API key is not a separate capacity bucket. A new key helps when the old key is revoked, leaked, restricted or attached to the wrong project. It does not create capacity if organization, project, model family and billing owner remain the same.

| Scope | Check | Failure pattern |
|---|---|---|
| Organization | request uses the intended org | personal and team orgs have different billing or limits |
| Project | key belongs to the inspected project | Limits checked in one project, traffic sent from another |
| Model family | selected model has access and headroom | stricter or shared family limit is exhausted |
| Team workload | other services share capacity | batch job or another app consumes the pool |

If one model fails, test a small request to a model the project can access. If every model fails with quota wording, inspect account state first. If the key works elsewhere, inspect concurrency and request shape in the failing service.

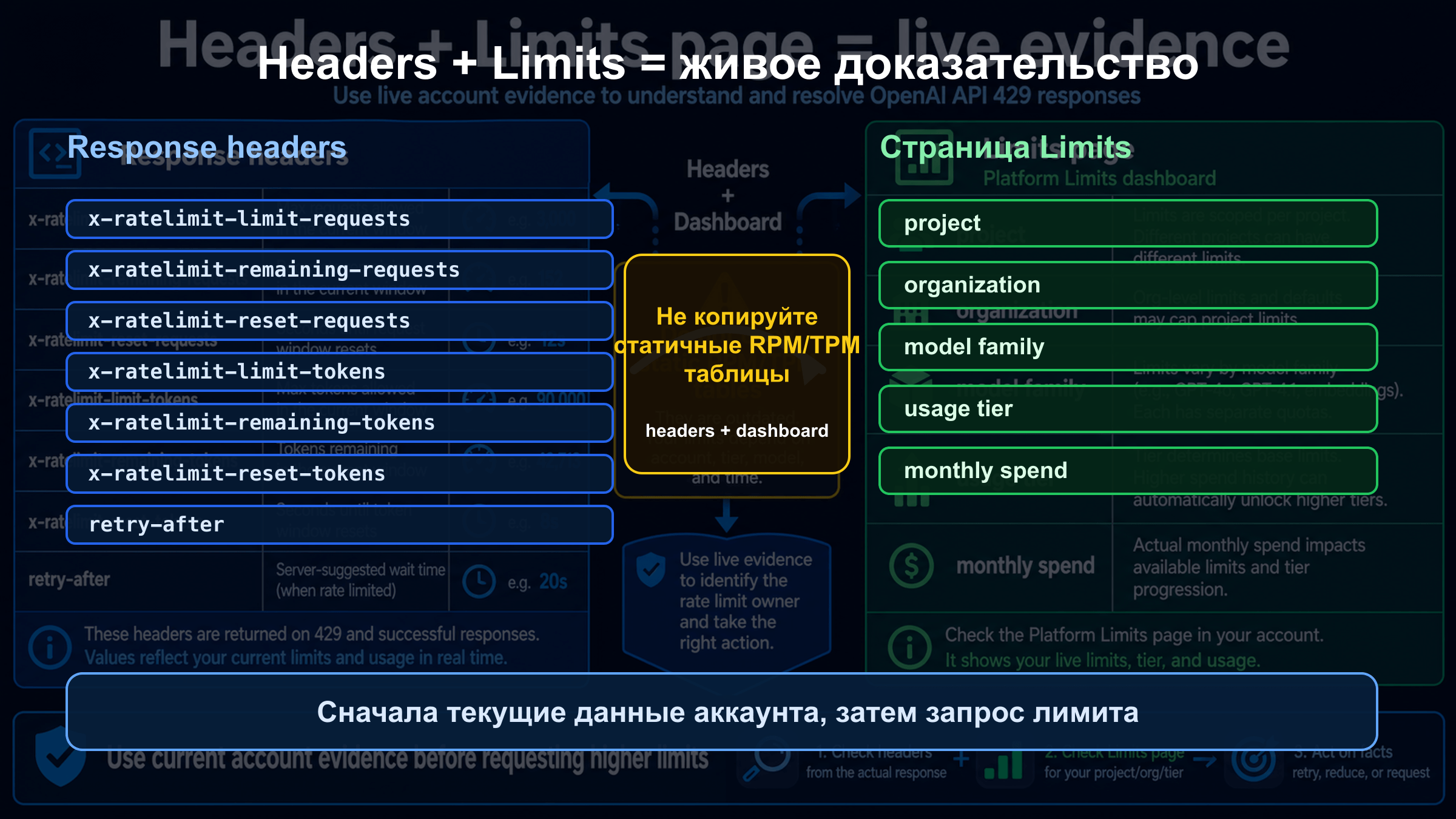

Use headers and Limits as live evidence

The live evidence is the response plus the account. The body gives the branch. Headers can show limit, remaining and reset timing. The Limits page gives the current project, organization and model context. Any static table is weaker than the reader's own live evidence.

| Evidence | Why it matters |
|---|---|
| status and body | separates retryable rate pressure from quota or billing |
| request id | gives support a lookup handle |
| rate-limit headers | shows limit, remaining and reset timing |
| project and organization | confirms who owns the request |
| model and endpoint | exposes stricter model or wrong endpoint |
| Limits and Usage state | records account state during failure |
| Status snapshot | separates incident from account-local failure |
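Reading the rate-limit headers into a structured snapshot makes them easier to log next to the body. The sketch below assumes the `x-ratelimit-limit-*`, `x-ratelimit-remaining-*` and `x-ratelimit-reset-*` header names for `requests` and `tokens`, and assumes reset values formatted as durations such as `1s` or `6m0s`. Verify both against a live response, because a wrapper may drop or rename them. The function names are hypothetical.

```python
import re
from typing import Dict, Mapping, Optional

_PART = re.compile(r"(\d+(?:\.\d+)?)(ms|h|m|s)")
_WHOLE = re.compile(r"(?:\d+(?:\.\d+)?(?:ms|h|m|s))+")
_SECONDS = {"h": 3600.0, "m": 60.0, "s": 1.0, "ms": 0.001}


def parse_reset(value: Optional[str]) -> Optional[float]:
    """Convert a duration such as '6m0s' to seconds; None if it does not parse."""
    value = (value or "").strip()
    if not _WHOLE.fullmatch(value):
        return None
    return sum(float(num) * _SECONDS[unit] for num, unit in _PART.findall(value))


def _to_int(value: Optional[str]) -> Optional[int]:
    try:
        return int(value)  # type: ignore[arg-type]
    except (TypeError, ValueError):
        return None


def rate_limit_snapshot(headers: Mapping[str, str]) -> Dict[str, Dict[str, Optional[float]]]:
    """Collect limit, remaining and reset for requests and tokens, when present."""
    lower = {k.lower(): v for k, v in headers.items()}
    snapshot: Dict[str, Dict[str, Optional[float]]] = {}
    for scope in ("requests", "tokens"):
        snapshot[scope] = {
            "limit": _to_int(lower.get(f"x-ratelimit-limit-{scope}")),
            "remaining": _to_int(lower.get(f"x-ratelimit-remaining-{scope}")),
            "reset_seconds": parse_reset(lower.get(f"x-ratelimit-reset-{scope}")),
        }
    return snapshot
```

Missing headers become `None` rather than zero, so an absent signal is never mistaken for an exhausted limit.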

On 2026-04-29 the public OpenAI Status check did not show a broad active incident. That does not guarantee future health. During an incident, check Status live; if it is green, continue through account scope, headers and workload shape.

How to reduce the risk of the next 429 in production

After the immediate recovery, move the lesson into production controls. The application should know its budget before OpenAI rejects it: project/model limiters, tenant budgets, token estimates, queue alerts, retry counters and reset-window observations.
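A pre-dispatch token check is one of the simpler controls to add. The sketch below uses a rough characters-per-token heuristic, which is an assumption and not a tokenizer: real counts depend on the model and language, so treat the estimate as a pessimistic guard rather than an exact figure. `estimate_tokens`, `fits_budget` and the 10% safety margin are hypothetical.

```python
import math


def estimate_tokens(prompt: str, max_output_tokens: int,
                    chars_per_token: float = 4.0) -> int:
    """Rough upper-bound estimate: prompt tokens plus the requested output cap."""
    prompt_tokens = math.ceil(len(prompt) / chars_per_token)
    return prompt_tokens + max_output_tokens


def fits_budget(estimated_tokens: int, remaining_tokens: int,
                safety_margin: float = 0.1) -> bool:
    """True if the request fits in the remaining budget, keeping some headroom."""
    usable = remaining_tokens * (1.0 - safety_margin)
    return estimated_tokens <= usable
```

If `fits_budget` returns `False`, the application can shrink the prompt, lower the output cap, or queue the request until the window resets.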

Interactive traffic and background jobs should not compete blindly. Queue non-urgent jobs. Split tenants. Reduce prompt size when possible. Route simpler work to cheaper or lower-pressure models when that is an approved product decision. Use Batch when latency is not urgent and the workload fits.

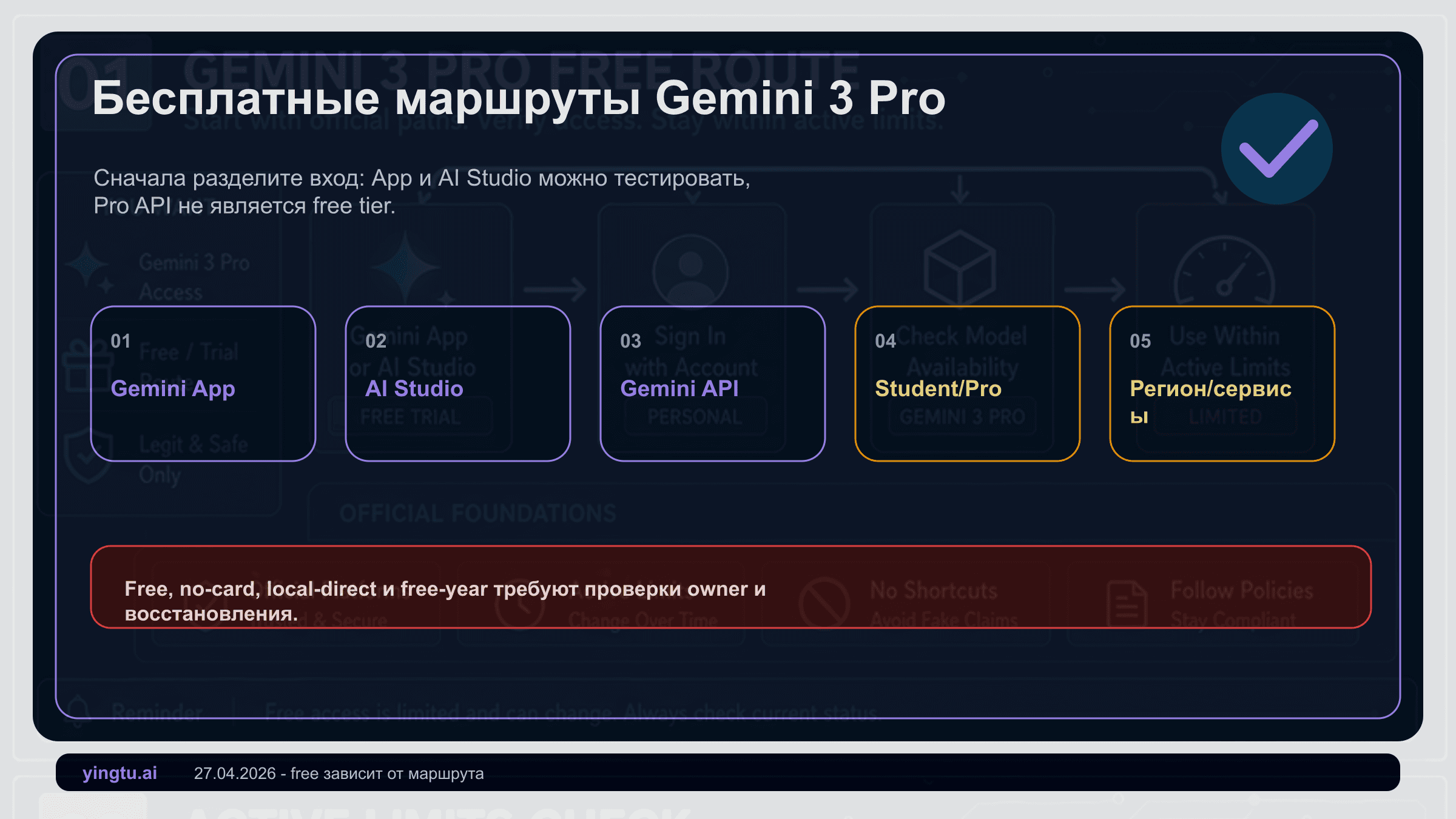

Rule out the wrong surface first

"OpenAI API 429" should mean a Platform API call made by code. ChatGPT, Codex, Sora, Azure OpenAI and wrappers can show limit messages too, but the owner and fix are different.

| Surface | Do not assume | Check instead |
|---|---|---|
| ChatGPT | consumer plan changes API quota | ChatGPT product limits and account state |
| Codex | coding-agent limits equal API RPM/TPM | Codex product contract and status |
| Sora | video capacity equals text API limits | Sora route, queue, plan and video status |
| Azure OpenAI | OpenAI Platform Limits owns deployment | Azure quota, deployment, region and subscription |
| Wrapper | OpenAI headers always pass through | provider dashboard, docs, route id and upstream evidence |

If the request is not sent directly to api.openai.com, identify the provider boundary first. The wrapper may be full, may translate an upstream 429, or may enforce its own account cap.
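A quick check of the configured base URL can flag the provider boundary before any other debugging. The sketch below is a heuristic under stated assumptions: it treats `api.openai.com` as the Platform API, treats hosts ending in `.openai.azure.com` as Azure OpenAI (Azure may also serve from other domains, which this does not cover), and labels everything else as a wrapper or other provider. The function name and return labels are hypothetical.

```python
from urllib.parse import urlparse


def provider_boundary(base_url: str) -> str:
    """Label the surface a request is sent to, based only on the hostname."""
    host = (urlparse(base_url).hostname or "").lower()
    if host == "api.openai.com":
        return "openai_platform"
    if host.endswith(".openai.azure.com"):
        return "azure_openai"
    # Unknown host: assume a wrapper or another provider owns the limit.
    return "wrapper_or_other"
```

For example, `provider_boundary("https://llm-proxy.example.com/v1")` returns `"wrapper_or_other"`, which is the cue to open that provider's dashboard instead of the OpenAI Limits page.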

Escalating with evidence

Escalate only after the branch is stable and secrets are removed. A compact packet should include timestamp, timezone, request id, endpoint, model, SDK version, organization, project, billing owner, safe body, safe headers, Limits and Usage state, Status state, retry count, concurrency, prompt size, queue depth and recent changes.

Do not post API keys, bearer tokens, card details, private prompts or user data in public places. Clean evidence is faster for support and safer for users.
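Redaction can be automated before evidence leaves the team. The sketch below masks a few common secret shapes: sensitive header names, `Bearer` tokens, and strings that start with `sk-`. The patterns and header list are assumptions and are not exhaustive, so private prompts and user data still need a manual review. `redact_text` and `safe_headers` are hypothetical names.

```python
import re
from typing import Dict, Mapping

# Header names whose values are always masked (an illustrative, incomplete list).
SENSITIVE_HEADERS = {"authorization", "api-key", "cookie", "set-cookie"}

_BEARER = re.compile(r"(?i)bearer\s+[A-Za-z0-9._\-]+")
_SK_KEY = re.compile(r"sk-[A-Za-z0-9_\-]{8,}")


def redact_text(text: str) -> str:
    """Mask bearer tokens and sk-style keys that appear inside free text."""
    text = _BEARER.sub("Bearer [REDACTED]", text)
    return _SK_KEY.sub("[REDACTED_KEY]", text)


def safe_headers(headers: Mapping[str, str]) -> Dict[str, str]:
    """Return a copy of the headers that is safer to attach to a support ticket."""
    cleaned: Dict[str, str] = {}
    for name, value in headers.items():
        if name.lower() in SENSITIVE_HEADERS:
            cleaned[name] = "[REDACTED]"
        else:
            cleaned[name] = redact_text(str(value))
    return cleaned
```

The request id and rate-limit headers pass through unchanged, so the packet keeps the fields support needs.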

Frequently asked questions

Can every OpenAI API 429 be retried?

No. Retry only helps with temporary request/token pressure. insufficient_quota and "current quota exceeded" call for checking Billing, Usage, Limits, project, organization and model access.

What does insufficient_quota mean?

It points to quota, billing, a spend cap or account state, not a short traffic burst. Check the same project and organization the request was sent from.

Why does the 429 persist after adding funds?

Possible causes are a different organization or project, a spend cap, delayed billing state, model access, a shared model-family limit or a provider wrapper pool.

Do new API keys raise the limit?

No, not if they stay in the same project and organization. A new key fixes a credential problem but does not create a new capacity pool.

Which headers matter?

Limit, remaining and reset for requests/tokens, when they are present. Read them together with the live Limits page.

Do I need to check OpenAI Status?

Yes. An incident changes the strategy to waiting and preserving evidence; a green status sends you back to account, headers, Limits and workload shape.

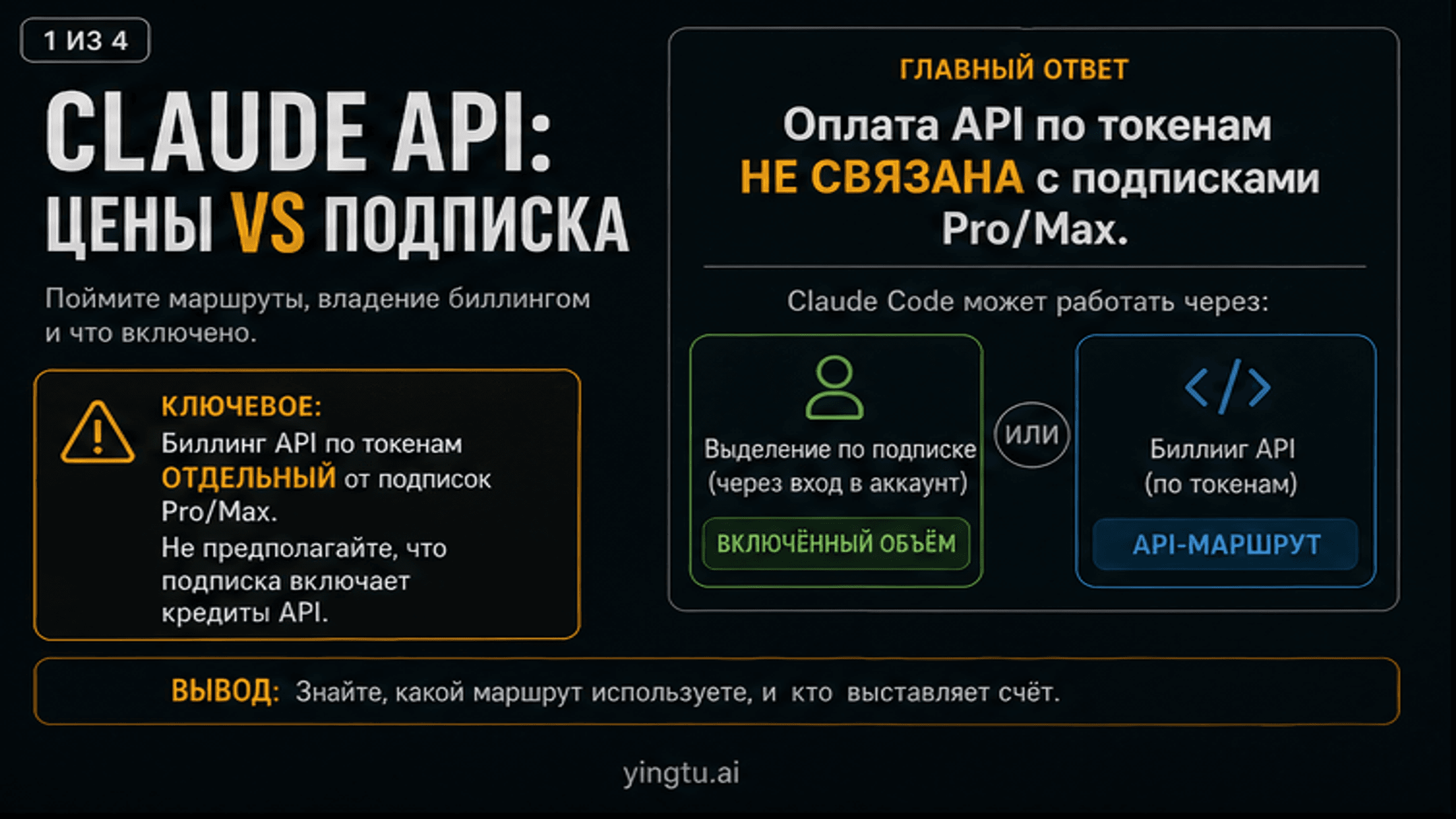

Are ChatGPT Plus and API quota the same?

No. A consumer subscription and Platform API billing are different surfaces.

What should I send to support?

Timestamp, timezone, request id, endpoint, model, project, organization, safe error body, safe headers, Limits/Usage, Status, retry count, workload shape and recent changes.

Local incident review template

Record every 429 the same way: time, timezone, endpoint, model, project, organization, error body, headers, Limits, Usage, Billing, Status, retry count, concurrency, prompt size, queue depth and recent deploy. This format turns a "looks like a limit" argument into a comparison of branches: rate pressure, quota, billing, scope, status or provider route.

In the review, ask three questions. Which branch gave the first strong signal? Did we change key, model, project and billing before capturing evidence? Which production control should fire before the API rejection: central limiter, tenant budget, token estimate, queue, Batch or an alert on remaining/reset? If the answers are written down, the next 429 will be shorter and cheaper.
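The review template can be captured as a fixed record so every 429 is logged with the same fields. The sketch below is one possible shape: the class name `Incident429`, the field names and the branch labels are hypothetical and simply mirror the lists in this section.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Any, Dict

# Branch labels mirror the comparison named in the template.
BRANCHES = ("rate_pressure", "quota", "billing", "scope", "status",
            "provider_route", "unknown")


@dataclass
class Incident429:
    timestamp: str
    timezone: str
    endpoint: str
    model: str
    project: str
    organization: str
    error_body: Dict[str, Any]
    headers: Dict[str, str]          # store redacted headers only
    branch: str = "unknown"
    retry_count: int = 0
    concurrency: int = 0
    prompt_tokens: int = 0
    queue_depth: int = 0
    recent_deploy: str = ""
    # Free-form notes on Limits, Usage, Billing and Status at failure time.
    account_state: Dict[str, str] = field(default_factory=dict)

    def __post_init__(self) -> None:
        if self.branch not in BRANCHES:
            raise ValueError(f"unknown branch: {self.branch!r}")

    def to_json(self) -> str:
        """Serialize with sorted keys so records diff cleanly between incidents."""
        return json.dumps(asdict(self), sort_keys=True)
```

Rejecting unlisted branch names forces the reviewer to pick one of the known owners or to record `unknown` explicitly.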
