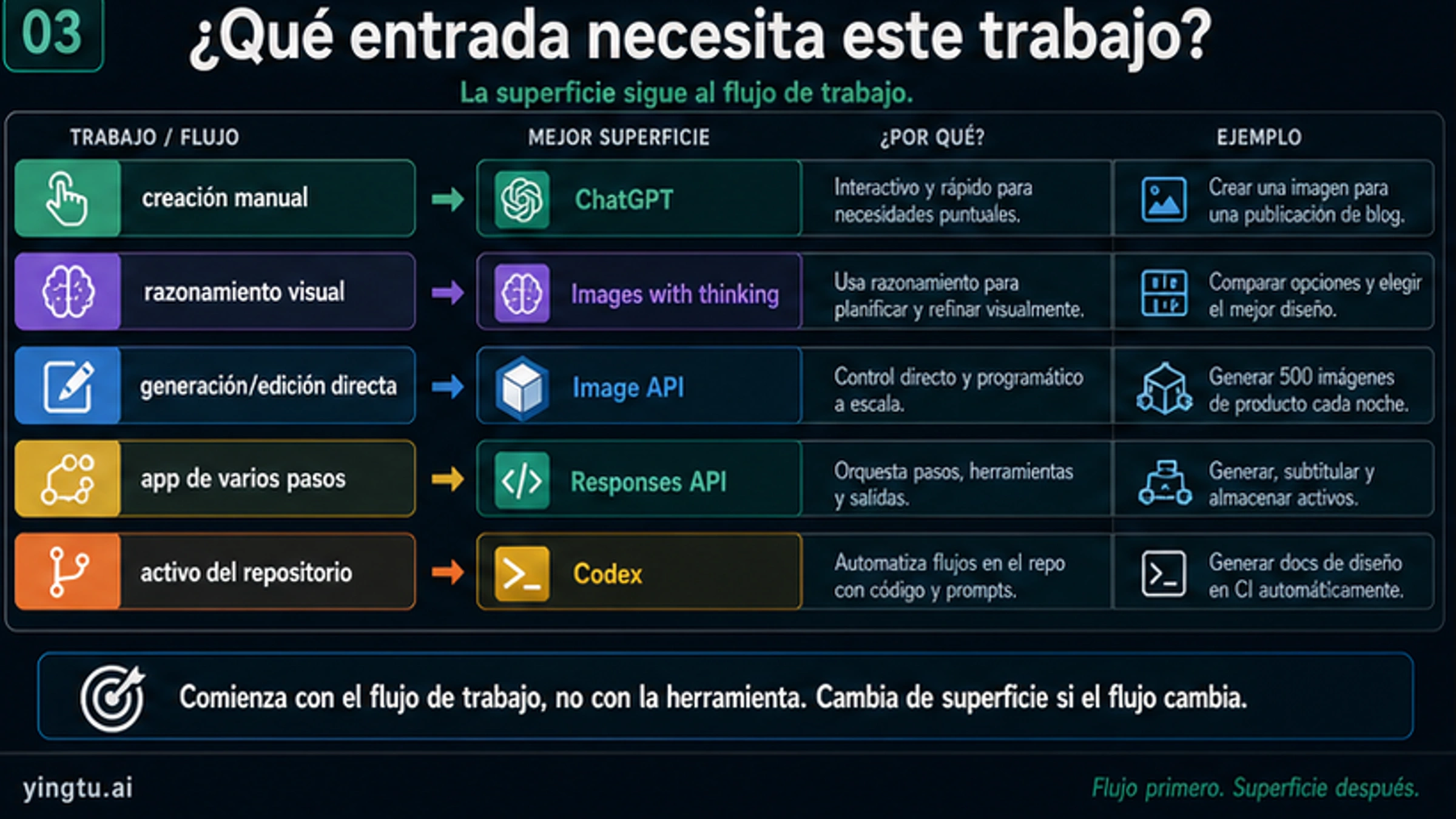

As of April 25, 2026, the useful answer for Spanish-speaking readers is not another launch recap. The practical question is which route to use. ChatGPT Images 2.0 is the product surface a person sees inside ChatGPT; gpt-image-2 is the model ID a developer calls through the API. Product access, API implementation, provider promises, videos, free access, and pricing each change what you can control, log, pay for, review, and publish.

| What you need now | Start with | Use it when | Avoid it for now if |
|---|---|---|---|
| A person needs to create or edit an image manually | ChatGPT Images 2.0 | You can write the prompt, review, adjust, and export inside ChatGPT. | You need backend automation, logs, retries, storage, or cost control. |
| The visual task needs planning before rendering | Images with thinking | The model must reason, compare layouts, use context, or self-check. | You need a simple, repeatable API call. |
| A product needs to generate or edit images directly | Image API with gpt-image-2 | Your app controls prompts, inputs, size, quality, output files, and retries. | The image is only one step in a broader assistant flow. |
| A workflow needs text, tools, and images together | Responses API image generation tool | The image is generated inside a multi-step product or agent. | You only need a direct generate or edit endpoint. |
| An article, doc, or UI project needs traceable assets | Codex image workflow | Images must live alongside files, prompts, alt text, review, and localization. | You only want consumer access in ChatGPT. |
| The real question is price, free API, 4K, provider, or model comparison | Specific guide | The general route is already clear and the next decision is narrower. | You still do not know which surface fits your task. |

The answer should start from that map: use ChatGPT for manual creation, Images with thinking for deliberate visual reasoning, the Image API for direct calls to gpt-image-2, the Responses API when the image is part of a broader app flow, Codex when the asset belongs to a repository, and a specific guide when the real question is price, free access, 4K, providers, or model comparison.

Names: ChatGPT Images 2.0, GPT Image 2, and gpt-image-2

In Spanish, "ChatGPT Images 2.0", "ChatGPT Imagen 2.0", "GPT Image 2", "OpenAI Images 2.0", and gpt-image-2 are used interchangeably. They point to the same launch family, but they do not carry the same operational meaning. ChatGPT Images 2.0 is the product name. GPT Image 2 is a way of referring to the model generation. gpt-image-2 is the exact model ID that appears in API calls.

| Name | Clearest use | Do not use it for |
|---|---|---|
| ChatGPT Images 2.0 | Product access, manual creation, the ChatGPT interface, Images with thinking. | API billing, SDK parameters, or backend model IDs. |
| GPT Image 2 | General explanation of the model family or generation. | Exact API calls where a model ID is required. |
| gpt-image-2 | Generation or editing through the Image API and Responses API. | Promises about ChatGPT plans or consumer app quotas. |
| Images with thinking | Product mode in ChatGPT with more reasoning before the result. | Claiming that every API workflow exposes the same mode. |

This separation prevents two common mistakes. The first is treating a ChatGPT feature announcement as if it were already an API integration guide. The second is seeing the model ID and assuming that the same interface, plan access, tools, and quotas apply to ChatGPT users. The model family is connected, but the route changes the contract.

When "OpenAI Images 2.0" comes up in a conversation, map it back to the clearer split: ChatGPT Images 2.0 for the product surface and gpt-image-2 for API calls.

What changed in ChatGPT Images 2.0

OpenAI presents ChatGPT Images 2.0 as a stronger image generation system for visuals with text, structure, multiple languages, and context. For Spanish-speaking readers this matters because many real tasks are not just "make a pretty image": they involve legible text, layout, hierarchy, labels, slides, infographics, maps, comics, product mockups, social media assets, and marketing claims that must be correct.

Images with thinking is the biggest workflow change. The system card describes an image flow that can reason, use tools, draw on web data, generate several images from one prompt, and self-check before the final result. That pays off when the task needs planning before pixels: a campaign, a dense infographic, a map, a multilingual poster, a comparison table, or a slide where the first draft has to interpret the brief.

Do not turn those improvements into promises of perfection. Better multilingual text does not mean flawless Spanish typography. Better rendering does not guarantee that every price, date, legal notice, product name, chart axis, or small label is correct. The more realistic the result looks, the more review matters, because a small error can look trustworthy.

| Workload | How Images 2.0 helps | What still needs review |
|---|---|---|
| Ads and posters in Spanish | Better text layout, hierarchy, and visual finish. | Spelling, accent marks, prices, dates, fine print, and brand terms. |
| Infographics and slides | Better grouping, structure, icons, and explainer boards. | Data, ordering, units, sources, and chart labels. |
| Multilingual assets | Better handling of mixed scripts and local layout. | Native phrasing, line breaks, glyphs, and regional terminology. |
| Product mockups | Better scene control and realism. | Product accuracy, rights, claims, and compliance. |
| Comics and storyboards | Better sequencing and style consistency. | Character consistency, tone, safety, and rights. |

The improvement is real but conditional. It helps with harder visual tasks and makes the QA checklist more specific.

Choose the route by workflow

"How to use ChatGPT Images 2.0" can mean different jobs. A creator wants to produce an image. A developer wants an endpoint. A founder wants to know the cost. A marketer needs Spanish text inside an ad. A documentation team needs traceable images in a repository. They should not all get the same answer.

Use ChatGPT when the work belongs to a person at the keyboard. It is the right route for creative exploration, social posts, campaign drafts, product concepts, client mockups, and slides where fast iteration matters. Its strength is feedback. Its weakness is production control: it does not automatically deliver durable logs, retries, storage paths, or cost reports to your backend.

Use Images with thinking when the hard part is the visual decision. If the model must compare layouts, reason about a map, read context, plan an infographic, or produce several candidates before choosing, the extra deliberation can help. Avoid it when the request is already exact and you need repeatable programmatic output.

Use the Image API when an application needs direct generation or editing with gpt-image-2. This route suits software teams because prompts, inputs, size, quality, errors, retries, storage, and cost can all stay under the app's control. A typical case: a user clicks to generate a product image, edit a reference, or save the result inside a product flow.
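
As a rough illustration of what "under the app's control" can look like, here is a minimal Python sketch. It assumes the official `openai` package and its `images.generate` call. The helper names `build_image_request` and `generate_image` are hypothetical. The `size` and `quality` values, and the base64 response field, are assumptions carried over from earlier GPT Image models, so verify them against the current documentation before relying on them.

```python
from typing import Any


def build_image_request(
    prompt: str, size: str = "1024x1024", quality: str = "medium"
) -> dict[str, Any]:
    """Collect every parameter the app controls into one loggable dict."""
    if not prompt.strip():
        raise ValueError("prompt must not be empty")
    return {
        "model": "gpt-image-2",  # exact model ID named in this guide
        "prompt": prompt,
        "size": size,            # assumed value; check the documented limits
        "quality": quality,      # assumed value; check the current docs
    }


def generate_image(request: dict[str, Any]) -> bytes:
    """Send the request through the OpenAI SDK and return raw image bytes."""
    import base64

    # Lazy import: needs the `openai` package and OPENAI_API_KEY in the env.
    from openai import OpenAI

    client = OpenAI()
    result = client.images.generate(**request)
    # Assumption: the response carries base64 image data in `b64_json`.
    return base64.b64decode(result.data[0].b64_json)
```

Keeping the request builder separate from the network call means the same dict can be logged, retried, or stored next to the output file.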

Use the Responses API when image generation is one tool inside a broader flow. A product can gather user context, use tools, reason about a brief, generate the image, and return an explanation or a follow-up question. In that shape, the image belongs to a multi-step response.

Use Codex when the image belongs to repository work: article covers, technical boards, documentation assets, UI explainers, localized images, prompts, files, alt text, and review evidence. That traceability is worth more than a standalone decorative image when the asset must ship with content or code.

API details worth verifying before you build

The development route starts with the exact model ID: gpt-image-2. The current models page lists the verified snapshot as gpt-image-2-2026-04-21. If your team needs reproducible tests, record the date and the model ID rather than writing only "the new image model".
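
One way to keep that record is a small structured entry written alongside each test run. The sketch below is only an illustration: the `ImageRunRecord` class, its field names, and the `make_record` helper are hypothetical, and the snapshot string is the one quoted above.

```python
import json
from dataclasses import asdict, dataclass
from datetime import date


@dataclass(frozen=True)
class ImageRunRecord:
    """Hypothetical log entry that pins a test run to a model and a date."""
    model_id: str
    snapshot: str
    run_date: str
    prompt: str


def make_record(prompt: str, run_date: date) -> ImageRunRecord:
    return ImageRunRecord(
        model_id="gpt-image-2",
        snapshot="gpt-image-2-2026-04-21",  # snapshot cited in this guide
        run_date=run_date.isoformat(),
        prompt=prompt,
    )


record = make_record("dense Spanish poster test", date(2026, 4, 25))
print(json.dumps(asdict(record), ensure_ascii=False))
```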

Account readiness is part of the plan too. The documentation states that GPT Image models may require organization verification. Treat it as an implementation check. A product team should not promise an image feature until it has confirmed that the account can actually call the model.

Size constraints are also part of implementation. gpt-image-2 supports flexible custom sizes, but the documented limits include a longest side of at most 3840px, both dimensions as multiples of 16px, a long-to-short ratio of at most 3:1, and total pixels within the documented range. If the real problem is 4K, exact dimensions, or native generation versus upscaling, use the GPT Image 2 4K guide.
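
The three numeric limits listed above can be checked before a request is ever sent. The following is a minimal sketch with a hypothetical function name, `check_size`. It deliberately skips the total-pixel check because this guide does not state that range, so add it from the official documentation.

```python
MAX_SIDE = 3840   # longest side limit quoted above
STEP = 16         # both dimensions must be multiples of this
MAX_RATIO = 3     # long side may be at most 3x the short side


def check_size(width: int, height: int) -> list[str]:
    """Return a list of constraint violations; an empty list means OK.

    The total-pixel range is not checked: its bounds are not given here.
    """
    if width <= 0 or height <= 0:
        return ["dimensions must be positive"]
    problems = []
    if max(width, height) > MAX_SIDE:
        problems.append(f"longest side exceeds {MAX_SIDE}px")
    if width % STEP or height % STEP:
        problems.append(f"both dimensions must be multiples of {STEP}px")
    if max(width, height) > MAX_RATIO * min(width, height):
        problems.append(f"aspect ratio exceeds {MAX_RATIO}:1")
    return problems


for size in [(3840, 2160), (4096, 2160), (1000, 1000), (3840, 1024)]:
    print(size, check_size(*size) or "ok")
```

Returning every violation at once, rather than raising on the first, makes the result easier to show in a UI or a log line.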

Pricing needs the same separation. Image cost depends on token categories, input images, output size, quality, route, and retries. It is not a universal per-image rate. If the task is cheap API access or a provider route, use the cheap GPT Image 2 API guide. If the question is whether an official free API exists, use the answer on the free GPT Image 2 API.

Transparent backgrounds are another practical boundary. The current image generation guide does not make transparent-background generation a direct capability of gpt-image-2. If you need logos, stickers, UI cutouts, or transparent PNGs, plan for compositing or post-processing and check the final file.

Where Codex fits

Codex does not replace image access in ChatGPT, and it is not a pricing shortcut for the API. It is useful when the visual is part of a repository task: an article cover, an explainer board, a docs asset, a UI diagram, a set of localized images, or an asset that must stay traceable through prompts, files, and reviews.

The operating model changes. In ChatGPT, the final visual is usually the deliverable. In Codex, the image is part of a change set. What matters is the prompt, the chosen image, the resized publish asset, the alt text, the article reference, the localization, and the final review. For technical publishing, that traceability is a quality feature.

Codex fits here because the images teach decisions: route ownership, product and API names, workflow selection, and production safety. A generic hero image would not help the reader choose.

Price, free access, 4K, providers, and comparisons

ChatGPT Images 2.0 raises obvious questions, but they are not all the same job. A routing guide should not try to be a pricing page, a free-access page, a provider page, and a model comparison all at once.

If the question is price, first identify who owns the contract and what the billing unit is. OpenAI direct pricing, Batch-style asynchronous discounts, flat-rate provider calls, marketplace credits, and ChatGPT plan access can all refer to the same model family while charging under different contracts. Compare them only once you know who controls billing, support, privacy, failures, and rate limits.

If the question is free access, separate use in the ChatGPT app from official API billing. Being able to try generation in ChatGPT does not create backend API credits. Provider trials, browser demos, local promotions, and official API billing are different routes.

If the question is 4K, treat it as implementation work. The reader needs dimensions, output file, compression, resizing, native generation versus upscaling, and visual QA. The launch name does not settle any of that on its own.

If the question is model comparison, define the workload first. Posters with Spanish text, multilingual ads, product mockups, reference edits, diagrams, and photorealistic images require different tests. A generic "which model is better" rarely helps.

Production checks before publishing

Higher quality does not remove review; it changes what review has to catch. A polished image can make an incorrect price, date, product claim, or legal line look credible.

Start with the text. Check spelling, accent marks, numbers, dates, names, units, labels, buttons, product claims, and fine print. If the image is localized, a native speaker should review wording and line breaks. Do not approve a visual because it looks professional if nobody has read its words.

Then check dimensions and format. Save the output and inspect width, height, file type, compression, and any later resizing. Article covers, social cards, slides, ads, and in-app previews have different requirements.

Record cost behavior before scaling volume. Track prompt length, input images, quality, output size, blocked requests, retries, accepted output rate, and final storage. A route that looks cheap in a test can become expensive with high quality, edits, and retries.
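
A small summary over logged attempts is often enough to expose that effect. The sketch below is an assumption-heavy illustration: the `Attempt` record, its fields, and the dollar amounts are hypothetical, and real costs must come from your own billing data.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Attempt:
    """Hypothetical log entry for one generation or edit request."""
    cost_usd: float          # billed cost for this attempt (made-up values)
    accepted: bool           # did a reviewer accept the output?
    blocked: bool = False    # was the request blocked?
    is_retry: bool = False   # was this a retry of an earlier attempt?


def summarize(attempts: list[Attempt]) -> dict[str, Optional[float]]:
    total = len(attempts)
    accepted = sum(a.accepted for a in attempts)
    spend = sum(a.cost_usd for a in attempts)
    return {
        "attempts": total,
        "accepted_rate": accepted / total if total else 0.0,
        "blocked": sum(a.blocked for a in attempts),
        "retries": sum(a.is_retry for a in attempts),
        # Spend divided by accepted outputs, not by attempts:
        # rejected and blocked requests still cost money.
        "cost_per_accepted": spend / accepted if accepted else None,
    }


log = [
    Attempt(0.25, accepted=True),
    Attempt(0.25, accepted=False),
    Attempt(0.25, accepted=True, is_retry=True),
    Attempt(0.25, accepted=False, blocked=True),
]
print(summarize(log))
```

Dividing spend by accepted outputs rather than by attempts is the design choice that matters here: it is the number that grows when quality settings, edits, and retries pile up.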

Policy and provenance should sit next to visual QA. Keep prompts, source references, rights assumptions, approvals, safety review, and the fallback route. A production pipeline must know what happens when the route is slow, gets blocked, exceeds budget, or does not fit a prompt.

Migration rule for existing image workflows

Do not replace a stable workflow just because ChatGPT Images 2.0 is new. Test the tasks that matter: a dense poster in Spanish, a product mockup, a multilingual visual, a diagram, a reference edit, and a final-size asset. Compare the new route against the current baseline on accepted quality, editability, cost, latency, safety review, delivery friction, and fallback.
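
A comparison like this can be reduced to a simple gate over per-task results. The sketch below is one possible policy, not a prescribed one: the function names, the task labels, and the accepted-rate numbers are all hypothetical, and a real gate would also weigh cost, latency, and safety review.

```python
def classes_won(baseline: dict[str, float], candidate: dict[str, float]) -> set[str]:
    """Task classes where the candidate's accepted rate beats the baseline."""
    return {
        name
        for name, base_rate in baseline.items()
        if candidate.get(name, 0.0) > base_rate
    }


def should_migrate(
    baseline: dict[str, float],
    candidate: dict[str, float],
    must_win: set[str],
) -> bool:
    """Migrate only if the candidate wins every task class that matters."""
    return must_win <= classes_won(baseline, candidate)


# Made-up accepted-output rates per task class, for illustration only.
current_route = {"spanish_poster": 0.50, "product_mockup": 0.75, "diagram": 0.75}
new_route = {"spanish_poster": 0.75, "product_mockup": 0.75, "diagram": 0.50}

print(should_migrate(current_route, new_route, {"spanish_poster"}))
print(should_migrate(current_route, new_route, {"spanish_poster", "diagram"}))
```

A tie counts as a loss for the candidate here, which keeps the stable route in place unless the new one is clearly better on the tasks named in `must_win`.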

Try ChatGPT Images 2.0 first when the current workflow fails on text, layout, or deliberate visual reasoning. Use direct calls to gpt-image-2 when the product needs programmatic output. Use the Responses API when the image is part of a broader tool flow. Use Codex when the visual belongs to a repository publishing pipeline. Keep the previous route if it is still cheaper, safer, or more predictable for the exact job.

This rule is less eye-catching than the launch headline, but it avoids wasted engineering work. A stronger model earns production traffic when it beats the current route on the prompts that matter.

FAQ

Is "OpenAI Images 2.0" the official name?

The safest product name is ChatGPT Images 2.0. In Spanish, OpenAI Images 2.0 may appear as shorthand, but the clean split is ChatGPT Images 2.0 for the product and gpt-image-2 for API calls.

Is ChatGPT Images 2.0 available yet?

The Help Center describes ChatGPT Images 2.0 availability by ChatGPT tier and lists Images with thinking separately by plan availability. Use dated wording and avoid promising exact quotas unless you have verified the current plan details.

Does ChatGPT Images 2.0 have an API?

The model for developers is gpt-image-2. Use the Image API for direct generation or editing, and use the image tool in the Responses API when the image step belongs to a broader app or agent workflow.

Is gpt-image-2 free?

Do not assume there is an official, supported free route. ChatGPT app access, provider trials, browser demos, and API billing are separate contracts. Treat free access as something to verify case by case.

Should I use the Image API or the Responses API?

Use the Image API when the app needs a direct generation or edit call. Use the Responses API when image generation is one tool among reasoning, text, other tools, and follow-up explanation.

When should I use Codex for images?

Use Codex when the image is part of a repository change: article visuals, documentation assets, UI boards, localized publishable images, or any asset that needs prompt, file, and review traceability.

Can ChatGPT Images 2.0 create perfect text in Spanish?

No production workflow should assume perfection. The model is stronger at visuals with text and multiple languages, but generated words, accent marks, line breaks, dates, names, and claims still need human review.

What should I test before moving production traffic?

Test the prompt classes your product depends on: dense text, Spanish text, multilingual text, product images, edits, diagrams, and final-size assets. Measure accepted output rate, retries, cost, latency, safety review, and fallback behavior.