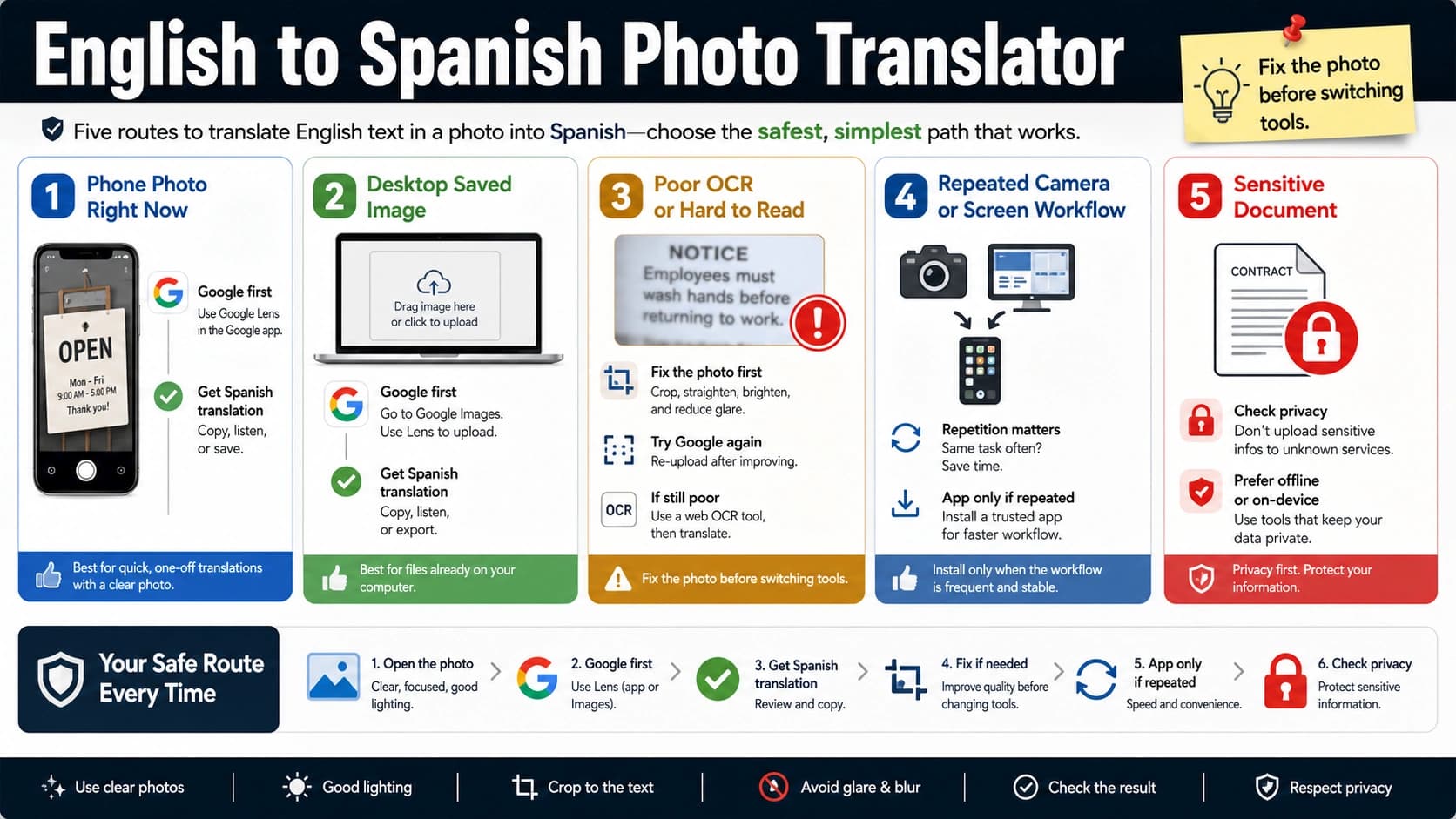

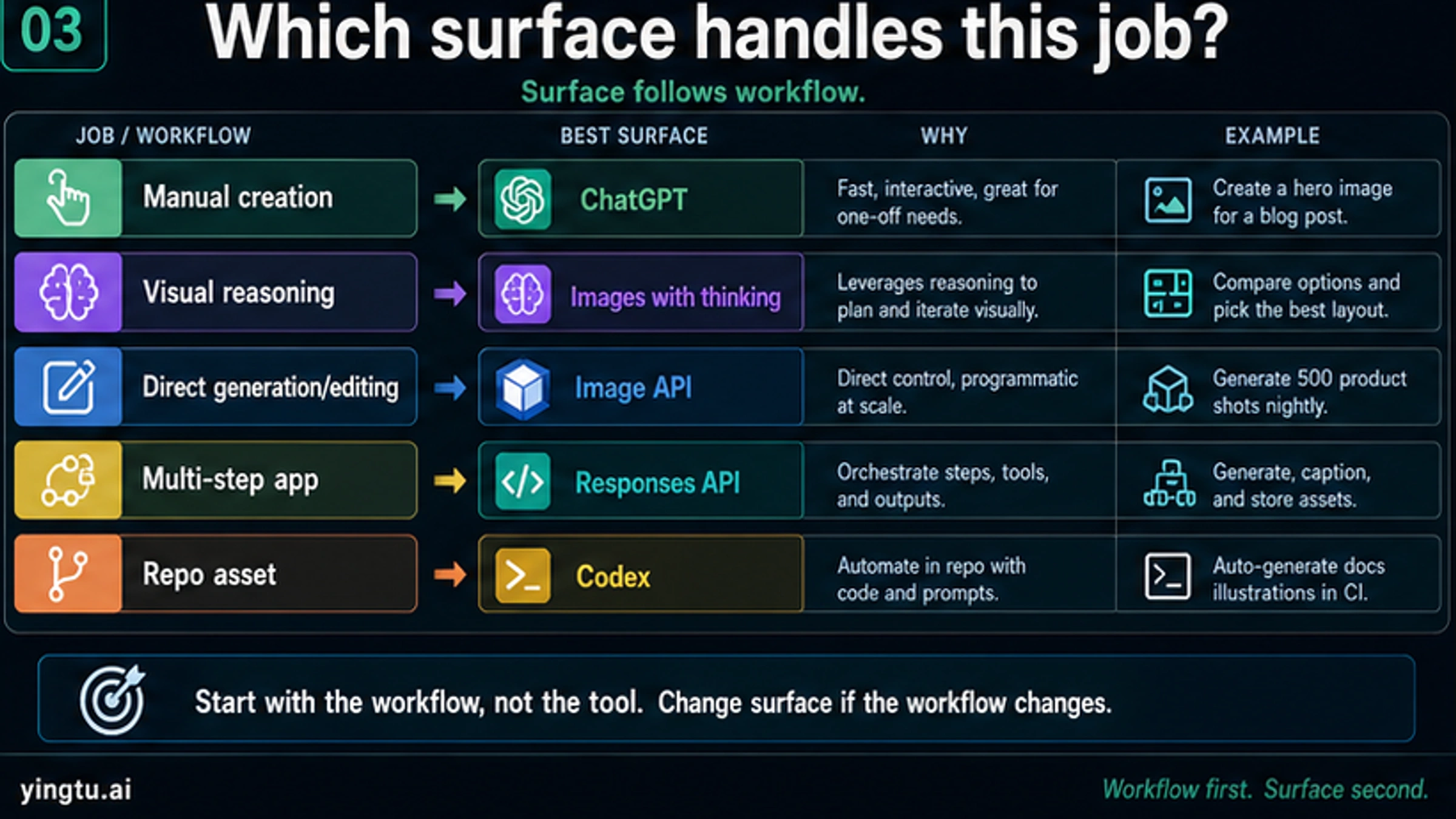

As of April 25, 2026, ChatGPT Images 2.0 is the product surface most users see, and gpt-image-2 is the model ID developers call through OpenAI's API. The phrase "OpenAI Images 2.0" usually refers to the same launch. The first decision is the route: ChatGPT for manual creation, Images with thinking for deliberate visual reasoning, the Image API for direct generation or editing, the Responses API for multi-step apps, and Codex when the image is part of repo work.

| Need now | Start with | Use it when | Hold back when |
|---|---|---|---|
| A creator or marketer wants a polished image by hand | ChatGPT Images 2.0 in ChatGPT | You can prompt, inspect, iterate, and export manually. | You need backend automation or exact API logging. |
| The visual task needs planning, comparison, or context gathering | Images with thinking | You want the model to reason, compare options, or self-check before producing the image. | You need a simple one-shot API call. |
| An app needs direct image generation or editing | Image API with gpt-image-2 | You control prompts, inputs, size, quality, and output handling in code. | The image step is part of a longer conversational workflow. |
| A product flow needs text, tools, and image generation together | Responses API image generation tool | The image is one step inside a multi-step app or agent. | You only need a direct generate/edit endpoint. |
| A repository needs article, docs, or UI assets | Codex image workflow | The image belongs with code, drafts, assets, and review artifacts. | You need consumer ChatGPT access rather than repo-local work. |
| The real question is price, free access, 4K, providers, or model comparison | Focused sibling guide | The broad launch answer is already clear and the next decision is narrower. | You still do not know which surface fits the job. |

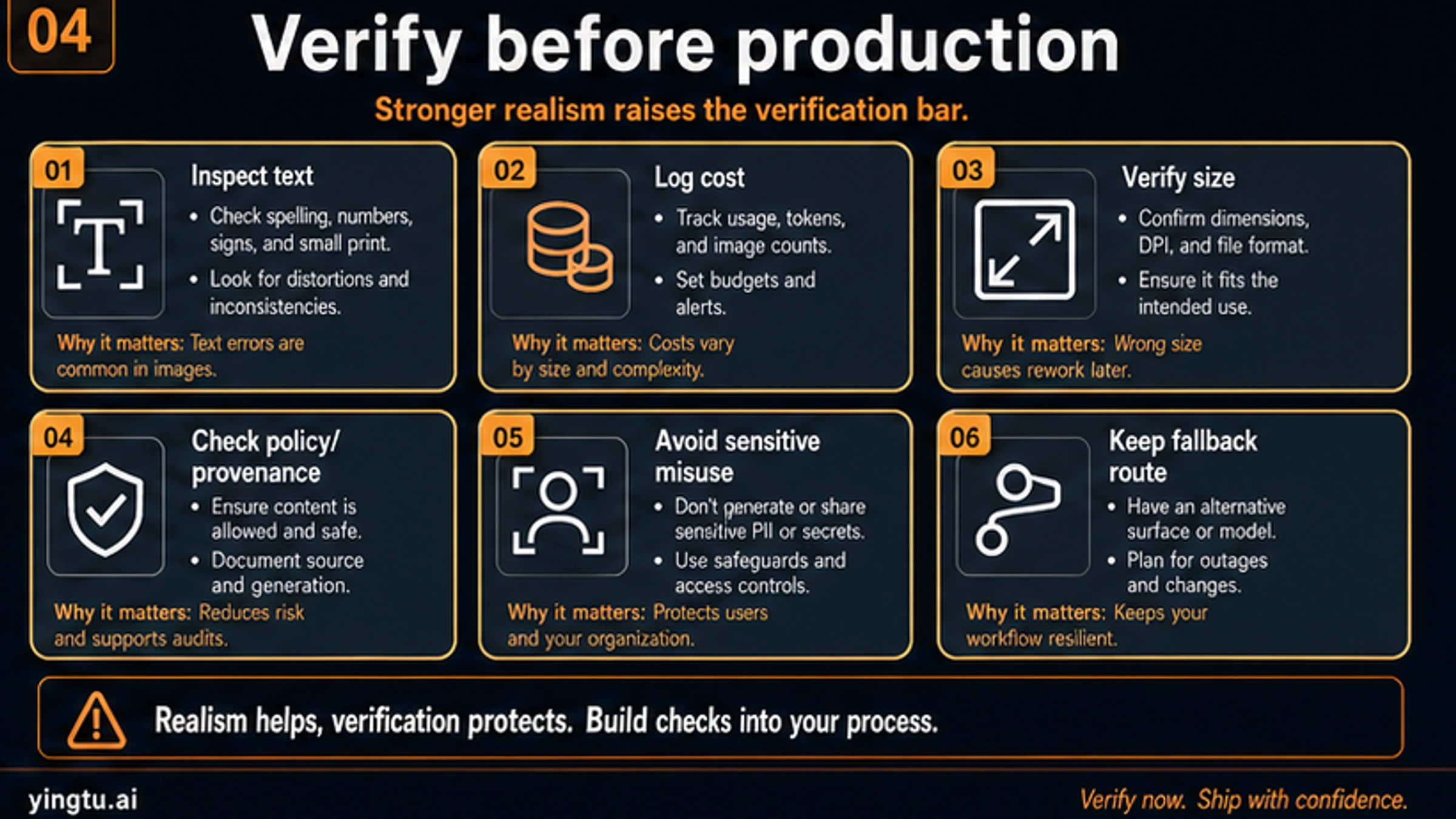

Treat the launch as a route map, not a universal price or capability promise. If the next question is cheapest API access, free API status, exact 4K sizes, provider gateways, or GPT Image 2 versus another model, move to the focused decision path for that job. For production, verify generated text, dimensions, cost behavior, policy fit, provenance, and fallback route before you ship.

What the names mean

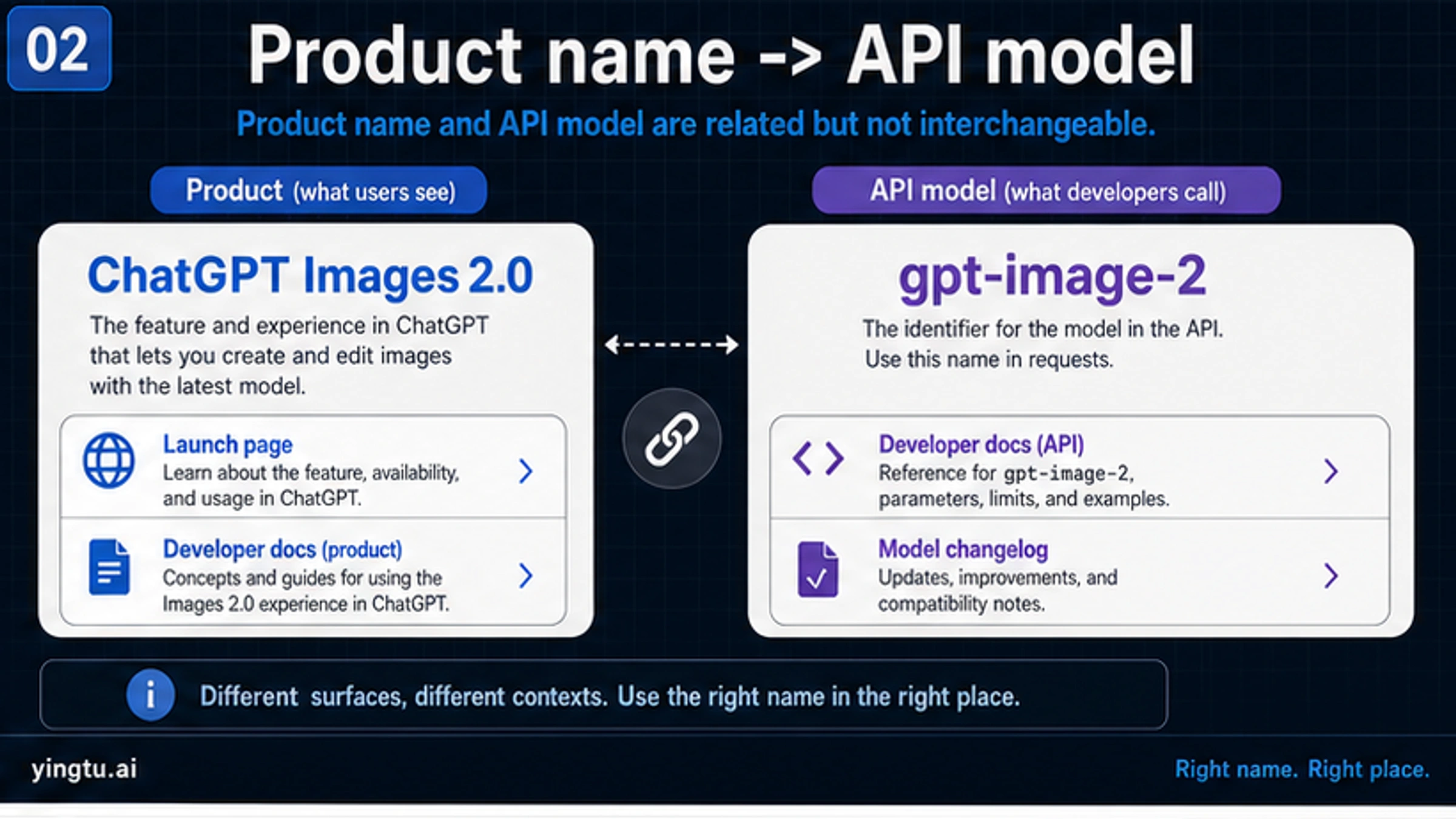

The clean naming split is simple: ChatGPT Images 2.0 is the app-facing launch name, and gpt-image-2 is the developer model ID. GPT Image 2 can describe the model family, but a developer still needs the exact ID in API calls, and a ChatGPT user still experiences the product through the app surface.

That distinction prevents two common mistakes. The first mistake is treating a ChatGPT feature announcement as an API setup tutorial. The second is treating an API model ID as proof of the same tools, quotas, or interface that a ChatGPT user sees. They are connected surfaces, but the contract changes when the route changes.

Use the names this way:

| Name | Best use | Do not use it for |
|---|---|---|
| ChatGPT Images 2.0 | Product access, app workflows, manual creation, Images with thinking | API billing or backend model IDs |
| GPT Image 2 | General model-family discussion | Exact SDK calls when the model ID is needed |
| gpt-image-2 | API generation and editing requests | ChatGPT plan promises or consumer-app quotas |
| Images with thinking | ChatGPT mode with more deliberate reasoning and tool use | A blanket claim that every API workflow has the same mode |

This naming bridge matters only because many people describe the launch in shorthand. Once the names are mapped, the useful question is which route owns the job.

What changed in ChatGPT Images 2.0

OpenAI presents ChatGPT Images 2.0 as a stronger image-generation model for text-heavy, structured, and context-aware visuals. The launch materials emphasize better text rendering, multilingual text, infographics, slides, maps, comics, product mockups, and flexible formats. Those examples matter because the hardest practical image tasks are rarely just "make a pretty picture." They ask the model to preserve words, layout, visual hierarchy, object relationships, and the intent behind a prompt.

Thinking mode is the bigger product-shape change. OpenAI's system card describes a version of the image workflow that can reason, use tools, draw on live web data, produce multiple images from one prompt, and self-check before final output. That makes sense for tasks where the model should compare options before committing: a campaign visual, a diagram, a localized poster, a slide, or a complex layout where the first draft often needs interpretation before rendering.

Do not turn those launch claims into perfection claims. Improved multilingual rendering is not the same as flawless non-English typography. Stronger text rendering is not a promise that every tiny label, legal disclaimer, or chart axis will be correct. More realistic output is useful, but it also increases the need to inspect sensitive likenesses, source context, and provenance before release.

The practical upgrade is therefore conditional:

| Workload | Why Images 2.0 helps | What still needs human review |
|---|---|---|
| Text-heavy posters and ads | Better chance of readable copy and composed layout | Spelling, small type, claims, brand terms |
| Infographics and slides | More structure and visual hierarchy | Data accuracy, ordering, units, source language |
| Multilingual visual assets | Stronger script handling and localized layout | Native phrasing, line breaks, non-Latin glyphs |
| Product mockups | Better scene control and polish | Product accuracy, rights, compliance |
| Comics and storyboards | Better sequence and style coherence | Character consistency, tone, safety |

Choose the route by workflow

The fastest way to use ChatGPT Images 2.0 is not always the best way to build with it. A one-off creative asset, a deliberate thinking-mode exploration, a backend API call, an agentic product workflow, and a repo-local article image should not share the same operating path.

Use ChatGPT when the work belongs to a person at a keyboard: prompt, inspect, revise, export. This is the right place for quick creative exploration, client-facing mockups, ad concepts, social posts, draft slide visuals, and prompts that benefit from visual back-and-forth.

Use Images with thinking when the hard part is not the final pixels but the visual decision. If the model should research context, compare several layout options, reason about a map or infographic, or generate several related outputs from one prompt, the extra deliberation can be worth the time. Hold back when the job is a simple one-shot generation that you already know how to specify.

Use the Image API when an application needs direct generation or editing with gpt-image-2. That route makes each request easier to log, retry, store, and keep under cost control. It is also the cleaner route when a user action should generate one image or edit one input image and your app owns the request lifecycle.

Use the Responses API when image generation is one tool inside a broader workflow. A product might gather user context, reason over a brief, generate an image, then return a written explanation or ask for a revision. In that shape, the image step is not isolated; it belongs inside a tool-using response.

Use Codex when the image is part of repo work. Article covers, visual explainers, docs assets, UI illustrations, and product screenshots often need to live with prompts, source files, reviews, and build artifacts. Keeping those assets inside the same authoring flow makes review and localization more tractable.
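The routing advice above can be condensed into a small decision helper. This is a minimal sketch, not an OpenAI tool: the `ImageJob` fields and the precedence order (repo work first, then multi-step workflows, then manual work) are assumptions chosen to mirror the route table at the top of this guide.

```python
from dataclasses import dataclass


@dataclass
class ImageJob:
    """Hypothetical description of an image task; field names are illustrative only."""
    lives_in_repo: bool = False           # asset ships with code, docs, or an article
    multi_step_workflow: bool = False     # image is one tool among text and other tools
    manual_iteration: bool = False        # a person prompts, inspects, and exports by hand
    needs_visual_reasoning: bool = False  # compare layouts, gather context, self-check


def choose_route(job: ImageJob) -> str:
    """Return a starting route; the precedence order is an assumption, not a rule."""
    if job.lives_in_repo:
        return "Codex image workflow"
    if job.multi_step_workflow:
        return "Responses API image generation tool"
    if job.manual_iteration:
        # Images with thinking is a ChatGPT mode, so it only applies to manual work here.
        if job.needs_visual_reasoning:
            return "Images with thinking"
        return "ChatGPT Images 2.0"
    # Everything else is an app that owns a single generate or edit request.
    return "Image API with gpt-image-2"


print(choose_route(ImageJob(manual_iteration=True, needs_visual_reasoning=True)))
```

A real team would probably add budget and policy inputs; the point of the sketch is to make the routing decision explicit and reviewable rather than implicit in whoever builds the feature.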

API details that matter before building

The developer route starts with the exact model ID: gpt-image-2. OpenAI's current model page records the snapshot as gpt-image-2-2026-04-21, which is useful when you need to document what was tested. The image generation guide also separates direct image calls from image generation inside Responses workflows, so choose the endpoint based on the surrounding app design, not just the model name.

API access can also involve account readiness. OpenAI's docs note that organization verification may be required for GPT Image models. Treat that as an implementation check, not as a prose footnote: verify account status before promising a launch date to a product team.

Size and output settings belong in the API plan. gpt-image-2 supports flexible sizes within documented constraints: the maximum edge is no more than 3840px, both edges must be multiples of 16px, the long-to-short ratio must be no more than 3:1, and total pixels must remain within the documented range. If exact 4K output is the real problem, use the focused GPT Image 2 4K guide rather than compressing all size logic into a launch overview.
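The stated size rules are mechanical enough to check before a request leaves your app. This is a minimal sketch assuming the limits quoted above; the total-pixel bounds are left as `None` placeholders because this guide does not quote the documented range, so fill them in from OpenAI's current docs. Passing this local check does not guarantee the API will accept a size.

```python
from typing import List, Optional


def size_problems(
    width: int,
    height: int,
    max_edge: int = 3840,
    multiple: int = 16,
    max_ratio: float = 3.0,
    min_pixels: Optional[int] = None,  # placeholder: copy from current docs
    max_pixels: Optional[int] = None,  # placeholder: copy from current docs
) -> List[str]:
    """List the reasons a custom size breaks the constraints; an empty list means it passes."""
    if width <= 0 or height <= 0:
        return ["both edges must be positive"]
    problems = []
    long_edge, short_edge = max(width, height), min(width, height)
    if long_edge > max_edge:
        problems.append(f"longest edge {long_edge}px exceeds {max_edge}px")
    if width % multiple or height % multiple:
        problems.append(f"both edges must be multiples of {multiple}px")
    if long_edge / short_edge > max_ratio:
        problems.append(f"long-to-short ratio exceeds {max_ratio:g}:1")
    pixels = width * height
    if min_pixels is not None and pixels < min_pixels:
        problems.append(f"{pixels} total pixels is below the minimum {min_pixels}")
    if max_pixels is not None and pixels > max_pixels:
        problems.append(f"{pixels} total pixels is above the maximum {max_pixels}")
    return problems


print(size_problems(3840, 2160))  # passes the edge, multiple, and ratio checks
print(size_problems(1000, 1000))  # 1000 is not a multiple of 16
```

Returning every problem at once, rather than raising on the first, makes the helper useful for showing users why a requested size was rejected.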

Pricing also belongs in the API plan. OpenAI's docs and pricing surfaces express image cost through tokens, quality, size, inputs, and route, which is not the same as one flat price per image. If the real problem is cheapest paid API access or a provider route, use the focused cheap GPT Image 2 API guide. If the problem is whether there is an official free API route, use the GPT Image 2 free API answer.

Where Codex fits

Codex is not a replacement for ChatGPT image access or a pricing shortcut for the API. It is useful when images are part of a repository task: a cover for an article, an explanatory board for documentation, a localized visual set, a UI asset that needs review, or a generated image that should be traceable through files and prompts.

The operating difference is important. In ChatGPT, the end product is usually the visual output. In Codex, the visual output is part of a larger change set. The prompt, selected image, resized publish asset, source evidence, alt text, article reference, and final review all matter because the image must survive the same publication workflow as the prose.

For a launch article like this one, Codex is the right image route because the visuals teach decisions: route ownership, product/API naming, workflow selection, and production safety. A decorative hero image would not help a reader choose. A dense board does.

Price, free access, 4K, providers, and comparisons

ChatGPT Images 2.0 creates several obvious follow-up questions, but they are not the same job. Keeping them separate protects the reader from mixing contracts.

If the question is price, identify the owner and billing unit before comparing. OpenAI direct pricing, Batch-style async discounts, flat per-call provider pricing, and third-party marketplaces can all describe the same model family while charging through different contracts. Use the cheap GPT Image 2 API guide for that route decision.
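One way to compare contracts that bill in different units is to convert each to a cost per accepted image. This is a minimal sketch: `token_cost` assumes a simple per-million-token rate for input and output, which may not capture every factor OpenAI's pricing uses (quality, size, image inputs), and every number in the example is made up for illustration, not a real price.

```python
def token_cost(input_tokens: int, output_tokens: int,
               input_rate_per_million: float, output_rate_per_million: float) -> float:
    """Cost of one call billed by tokens; the rates are inputs, not real prices."""
    return (input_tokens * input_rate_per_million
            + output_tokens * output_rate_per_million) / 1_000_000


def cost_per_accepted_image(cost_per_attempt: float, acceptance_rate: float) -> float:
    """Spread the cost of rejected attempts over the images that actually ship."""
    if not 0 < acceptance_rate <= 1:
        raise ValueError("acceptance_rate must be in (0, 1]")
    return cost_per_attempt / acceptance_rate


# Illustrative numbers only: replace every value with current prices and your own logs.
token_route = cost_per_accepted_image(token_cost(1_000, 4_000, 5.0, 40.0), 0.5)
flat_route = cost_per_accepted_image(0.20, 0.4)
print(f"token-billed route: {token_route:.3f} per accepted image")
print(f"flat per-call route: {flat_route:.3f} per accepted image")
```

Dividing by the acceptance rate is what turns a cheap-looking per-call price into a fair comparison: a route that needs more retries pays for them in every shipped image.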

If the question is free access, separate ChatGPT app access from official API billing and provider trials. A user may be able to try image generation in an app surface, but that does not create backend API credit. Use the free GPT Image 2 API guide when the task is free-status verification.

If the question is resolution, treat it as an API implementation problem. Exact size values, custom-size validation, and native 4K versus upscale decisions need their own checks. Use the 4K generation guide when dimensions are the blocker.

If the question is whether GPT Image 2 beats another model, define the workload first. For broad OpenAI versus Google route selection, use the GPT Image 2 vs Nano Banana Pro comparison. For text rendering specifically, use the Nano Banana Pro vs GPT Image text-rendering comparison after you know the text, language, and layout constraints you need to test.

Production checks before shipping

Higher quality does not remove review; it changes what review must catch. The more realistic and text-capable the image, the more likely a small error will look believable enough to slip through.

Start with text. Inspect spelling, numbers, dates, names, product claims, units, labels, and small print. If the image is localized, ask a native reviewer to check line breaks and wording. A visually convincing poster with one wrong legal or pricing claim is not production-ready.
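Part of the text check can be automated once the words in the image have been transcribed. This is a minimal sketch, assuming you already have the image text from an OCR tool or a human reader (this code cannot read pixels) and a list of phrases that must appear exactly. It flags missing or altered copy, but it does not replace the native-reviewer pass for line breaks and wording.

```python
import re
from typing import List


def _collapse_whitespace(text: str) -> str:
    # Line breaks inside the image should not count as missing copy, but case does count:
    # brand terms and legal phrases are compared exactly.
    return re.sub(r"\s+", " ", text).strip()


def missing_copy(extracted_text: str, required_phrases: List[str]) -> List[str]:
    """Return the required phrases that do not appear verbatim in the extracted text."""
    haystack = _collapse_whitespace(extracted_text)
    return [p for p in required_phrases if _collapse_whitespace(p) not in haystack]


# extracted_text would come from an OCR tool or a human transcription of the image.
print(missing_copy("Spring SALE\nUp to 40% off", ["Spring SALE", "Up to 40% off", "Ends May 3"]))
```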

Then check dimensions and format. API output should be saved and inspected, not assumed. Confirm width, height, file type, compression, and whether a downstream resize step changed the asset. Remember that gpt-image-2 does not currently support transparent-background generation, so transparent final assets need a separate compositing route.
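Dimension checks can run on the saved file rather than on the request you sent. This is a minimal sketch using only the Python standard library, assuming the output was saved as a PNG; other formats would need their own header parsing or an imaging library. It reads the fixed-position IHDR fields defined by the PNG specification.

```python
import struct
from typing import Dict, List

PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"


def png_header(data: bytes) -> Dict[str, object]:
    """Read width, height, and color type from the IHDR chunk at the start of a PNG."""
    if len(data) < 26 or data[:8] != PNG_SIGNATURE or data[12:16] != b"IHDR":
        raise ValueError("not a PNG file with a leading IHDR chunk")
    width, height = struct.unpack(">II", data[16:24])
    color_type = data[25]
    # Color types 4 and 6 carry an alpha channel; this says nothing about whether
    # any pixel is actually transparent.
    return {"width": width, "height": height, "has_alpha_channel": color_type in (4, 6)}


def output_problems(data: bytes, expected_width: int, expected_height: int) -> List[str]:
    """Compare the saved file's real dimensions with the size the pipeline expected."""
    info = png_header(data)
    problems = []
    if (info["width"], info["height"]) != (expected_width, expected_height):
        problems.append(
            f"got {info['width']}x{info['height']}, expected {expected_width}x{expected_height}"
        )
    return problems


# Usage with a hypothetical saved file:
# with open("output.png", "rb") as f:
#     print(output_problems(f.read(), 1536, 1024))
```

Running the same check after any downstream resize step catches the case where the generated master was correct but the published asset was not.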

Cost behavior should be logged before volume increases. Track prompt length, image inputs, quality, output size, number of retries, blocked requests, and final accepted images. A route that feels cheap during a single test can become expensive when retries and high-resolution outputs enter the loop.
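The fields listed above map naturally onto one log row per call. This is a minimal sketch with illustrative field names; it assumes `cost` comes from your own billing or usage data, and the quality labels and sizes in any test data are placeholders rather than a list of supported values.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Attempt:
    """One logged generation or edit call; field names are illustrative."""
    job_id: str          # the user request this attempt belongs to
    prompt_chars: int
    image_inputs: int
    quality: str
    size: str
    cost: float          # as reported by your billing or usage data
    blocked: bool = False
    accepted: bool = False


def summarize(attempts: List[Attempt]) -> Dict[str, object]:
    """Roll attempts up into the numbers that reveal retry and resolution costs."""
    total_cost = sum(a.cost for a in attempts)
    accepted = sum(1 for a in attempts if a.accepted)
    jobs = {a.job_id for a in attempts}
    cost_by_size: Dict[str, float] = {}
    for a in attempts:
        cost_by_size[a.size] = cost_by_size.get(a.size, 0.0) + a.cost
    cost_per_accepted: Optional[float] = total_cost / accepted if accepted else None
    return {
        "attempts": len(attempts),
        "retries": len(attempts) - len(jobs),  # attempts beyond the first per job
        "blocked": sum(1 for a in attempts if a.blocked),
        "accepted": accepted,
        "total_cost": total_cost,
        "cost_per_accepted": cost_per_accepted,
        "cost_by_size": cost_by_size,
    }
```

Grouping cost by size is a deliberate choice: it is the quickest way to see whether high-resolution outputs, rather than prompt count, are driving spend.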

Policy and provenance checks belong beside visual QA. OpenAI's ChatGPT Images 2.0 system card emphasizes safety work because stronger realism increases risks around sensitive imagery and convincing generated media. Keep records of prompts, source references, rights assumptions, output approvals, and moderation outcomes when the image is used in a customer-facing workflow.
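Those records are easier to audit when each one is tied to the exact file that shipped. This is a minimal sketch of one possible record shape, not an OpenAI format: the fields follow the list above, the model string reuses the snapshot ID quoted earlier, and the brief filename, reviewer name, and placeholder bytes are hypothetical.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import List, Optional


@dataclass
class ProvenanceRecord:
    """Illustrative audit record; adapt the fields to your own review process."""
    model: str                      # e.g. the snapshot you tested
    prompt: str
    output_sha256: str              # ties the record to the exact file that shipped
    source_references: List[str] = field(default_factory=list)
    rights_assumptions: List[str] = field(default_factory=list)
    moderation_outcome: str = "not recorded"
    approved_by: Optional[str] = None
    recorded_at: str = field(default_factory=lambda: datetime.now(timezone.utc).isoformat())


def make_record(model: str, prompt: str, image_bytes: bytes, **extra) -> ProvenanceRecord:
    """Hash the output bytes and build a record; extra keyword args fill optional fields."""
    digest = hashlib.sha256(image_bytes).hexdigest()
    return ProvenanceRecord(model=model, prompt=prompt, output_sha256=digest, **extra)


record = make_record(
    "gpt-image-2-2026-04-21",
    "Spring sale poster, headline 'Up to 40% off'",
    b"...image bytes...",                  # hypothetical placeholder for the saved file
    source_references=["brief-2026-04.md"],  # hypothetical brief
    approved_by="design-review",             # hypothetical reviewer
)
print(json.dumps(asdict(record), indent=2))
```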

Finally, keep a fallback route. A production pipeline should know what happens when the image route is slow, blocked, over budget, or unsuitable for a prompt. The fallback might be a smaller master plus upscale, a direct API route instead of a multi-step workflow, a manual ChatGPT pass, or a held release until review clears.
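The fallback order can be written down as code so it is explicit rather than improvised during an incident. This is a minimal sketch, assuming each route is wrapped in a hypothetical function that takes a prompt and raises `RouteUnavailable` when it times out, is blocked, exceeds budget, or is unsuitable; the timeout and budget checks themselves are left to those wrappers.

```python
from typing import Callable, List, Optional, Sequence, Tuple


class RouteUnavailable(Exception):
    """Raised by a route wrapper when it is slow, blocked, over budget, or unsuitable."""


Route = Tuple[str, Callable[[str], object]]


def run_with_fallback(
    prompt: str, routes: Sequence[Route]
) -> Tuple[str, Optional[object], List[Tuple[str, str]]]:
    """Try each route in order and return (route_name, result, failures).

    If every route declines, return ("hold_release", None, failures) so the asset
    waits for review instead of shipping from an unplanned path.
    """
    failures: List[Tuple[str, str]] = []
    for name, generate in routes:
        try:
            return name, generate(prompt), failures
        except RouteUnavailable as exc:
            failures.append((name, str(exc)))
    return "hold_release", None, failures
```

A manual ChatGPT pass cannot be automated this way; in practice it shows up as the "hold_release" outcome, which hands the job to a person along with the recorded failures.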

Migration rule for existing image workflows

Do not replace a working image workflow only because the launch is new. Test the tasks that matter: a dense text poster, a product mockup, a multilingual visual, a diagram, a reference edit, and a final-size asset. Compare the new route against the current baseline on quality, editability, cost, latency, safety review, and handoff friction.
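The comparison can be reduced to a per-prompt-class decision rule. This is a minimal sketch, assuming you have already measured acceptance rate, cost per accepted image, and median latency for both routes; safety review and handoff friction are not captured here and still need human judgment. Requiring the candidate to be accepted more often is one possible policy, not a recommendation from OpenAI, and the tolerance parameters are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, Tuple


@dataclass
class RouteResult:
    """Measured results for one route on one prompt class (from your own tests)."""
    accepted_rate: float       # share of outputs that passed review
    cost_per_accepted: float
    median_latency_s: float


def should_switch(baseline: RouteResult, candidate: RouteResult,
                  max_cost_increase: float = 0.0,
                  max_latency_increase: float = 0.0) -> bool:
    """Switch only if the candidate is accepted more often and stays within the
    allowed fractional increases in cost and latency."""
    return (
        candidate.accepted_rate > baseline.accepted_rate
        and candidate.cost_per_accepted <= baseline.cost_per_accepted * (1 + max_cost_increase)
        and candidate.median_latency_s <= baseline.median_latency_s * (1 + max_latency_increase)
    )


def migration_plan(results: Dict[str, Tuple[RouteResult, RouteResult]],
                   **tolerances: float) -> Dict[str, str]:
    """Decide per prompt class, since one route can win on posters and lose on edits."""
    return {
        prompt_class: "switch" if should_switch(base, cand, **tolerances) else "keep baseline"
        for prompt_class, (base, cand) in results.items()
    }
```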

Use ChatGPT Images 2.0 first when the current workflow struggles with text, layout, or deliberate visual reasoning. Use direct gpt-image-2 API calls when the product needs programmatic output. Use Responses API when the image is part of a larger tool flow. Use Codex when the visual belongs to a repo-local publication pipeline. Keep the older route when it is cheaper, safer, or more predictable for the exact job.

That migration rule is less exciting than a launch headline, but it is what prevents wasted build work. A stronger image model earns production traffic by beating the existing route on the prompts that matter to your product.

FAQ

Is OpenAI Images 2.0 the official name?

The official launch name is ChatGPT Images 2.0. "OpenAI Images 2.0" is common shorthand for the same launch, but use ChatGPT Images 2.0 for the product and gpt-image-2 for API calls.

Is ChatGPT Images 2.0 available now?

OpenAI's Help Center currently describes ChatGPT Images 2.0 availability across ChatGPT tiers and separates Images with thinking by plan availability. Use dated wording and avoid exact quota promises unless you verify the current plan details before publishing or shipping.

Does ChatGPT Images 2.0 have an API?

The developer model is gpt-image-2. Use the Image API for direct generation or editing, and use Responses API image generation when the image step belongs inside a multi-step app or agent workflow.

Is gpt-image-2 free?

Do not assume a free official API route. ChatGPT app access, provider trials, browser tests, and official API billing are different contracts. Use the focused free-status article when free access is the actual decision.

Should I use Image API or Responses API?

Use Image API when the application needs a direct image generation or edit call. Use Responses API when the image is one tool inside a broader workflow that also needs reasoning, text, tools, or follow-up explanation.

When should I use Codex for images?

Use Codex when the image is part of a repository change: article visuals, documentation assets, UI boards, localized publish images, or any asset that should stay traceable through prompts, files, and review.

Can ChatGPT Images 2.0 create perfect multilingual text?

No production workflow should assume perfection. The model is stronger on multilingual layouts and text-heavy visuals, but generated words, line breaks, glyphs, dates, names, and claims still need human review before release.

What should I test before switching production traffic?

Test the exact prompt classes your workflow depends on: dense text, localized text, product images, edits, diagrams, and final-size assets. Measure accepted output rate, retry count, cost, latency, safety review, and fallback behavior before replacing a stable route.