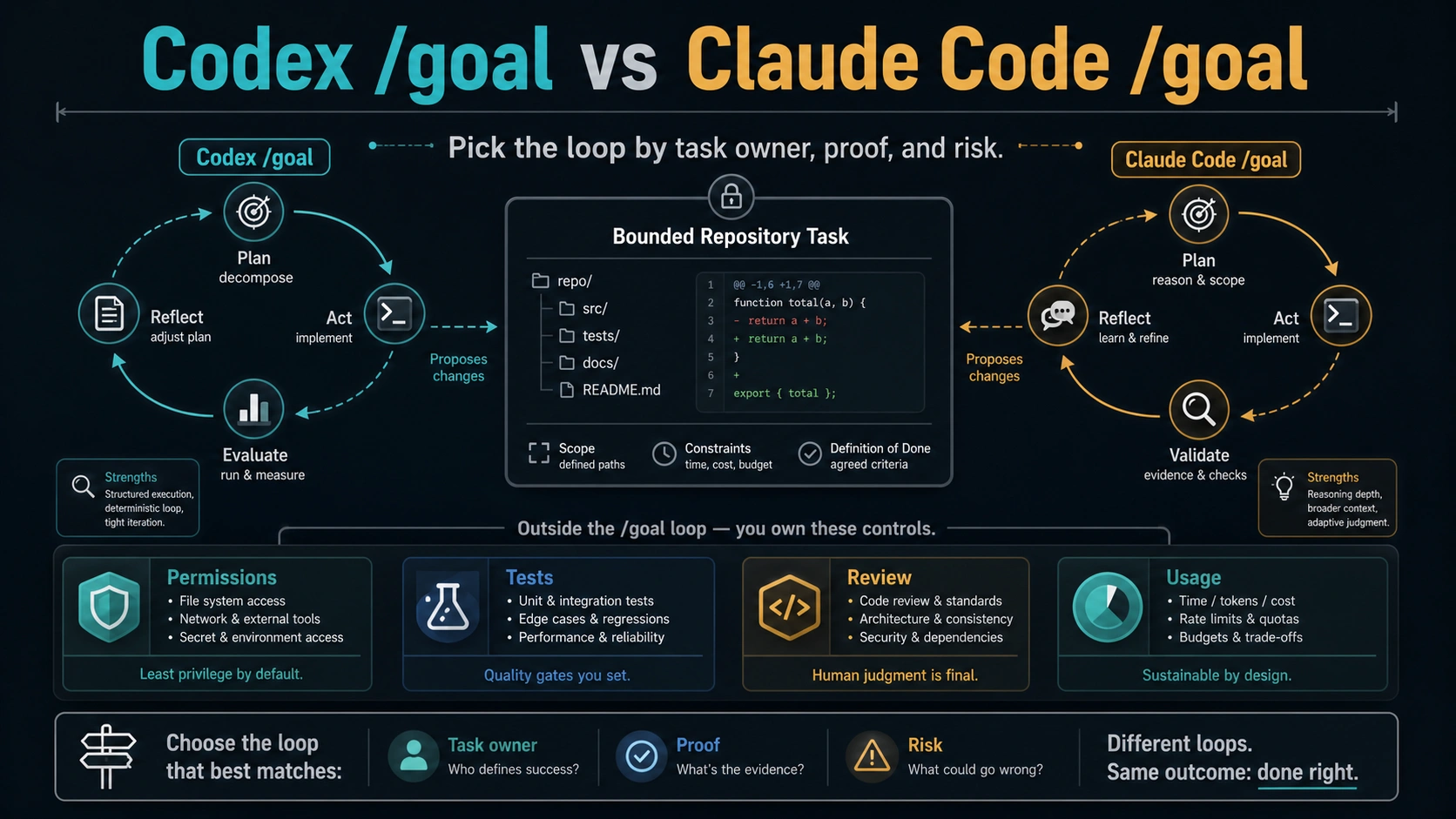

Codex /goal and Claude Code /goal are not interchangeable even though both let an agent keep working toward a target. Use Codex /goal when the task already belongs in a Codex thread or CLI flow, the repository scope is bounded, and a proof command or reviewable diff can show completion. Use Claude Code /goal when you are already steering work in Claude Code, local permission mode matters, and the evaluator can judge the end state from surfaced evidence.

If the work is judgment-heavy, vague, recurring, tied to production data, or missing an abort rule, skip both goal loops. Run a normal guided session for live judgment, or move the loop into CI/scheduler when timing and repeatability are the real owner. /goal defines a completion condition; it does not approve commands, pass tests, cap usage, protect secrets, or remove review.

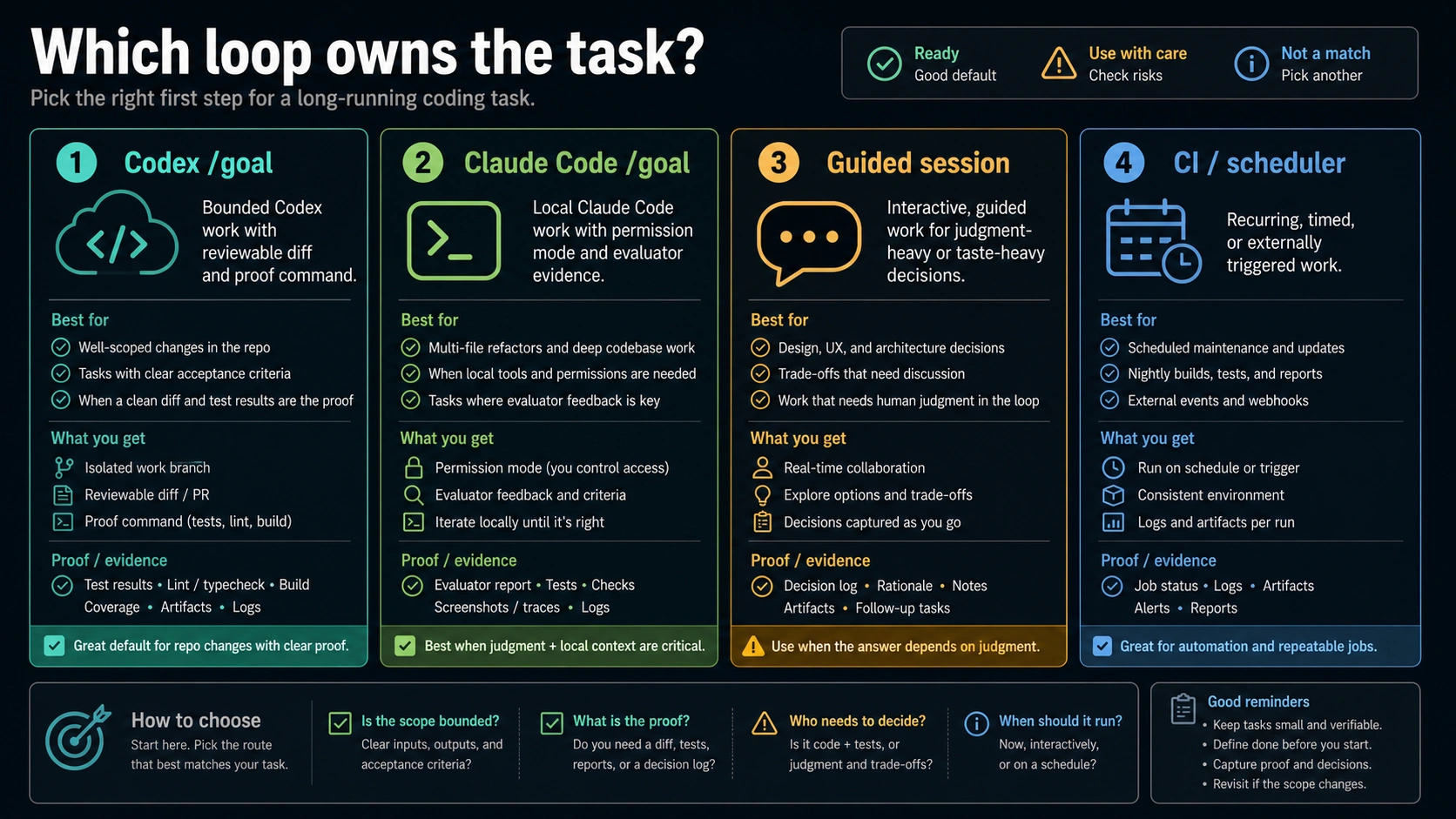

Quick Decision Board

The useful comparison is not "which agent is better?" It is "which loop should own the task until a measurable end state is reached?"

| Route | Use it when | Proof before you start | Stop rule |
|---|---|---|---|
| Codex /goal | The task already fits Codex local or CLI work, has bounded repo scope, and should produce a reviewable diff. | Test, lint, typecheck, snapshot, or diff inspection can prove completion. | Pause, resume, or clear the goal when the target changes or the diff grows beyond scope. |
| Claude Code /goal | The work is already in Claude Code, local steering matters, and permission mode should stay visible. | The evaluator can judge the surfaced conversation and tool evidence. | Clear or replace the active goal when the condition becomes ambiguous. |
| Guided session | You need live judgment, changing requirements, design taste, or step-by-step negotiation. | A human decision is still needed after each discovery. | Keep the agent in plan or normal chat mode rather than asking it to loop. |
| CI or scheduler | The loop is recurring, time-based, externally triggered, or belongs outside an interactive agent session. | A deterministic job, cron, queue, or workflow has its own logs and retry policy. | Let automation own timing; use coding agents only to change the workflow. |

That board is the safest starting point. A goal loop is useful when completion can be judged from concrete evidence. It is weak when the desired result is taste, negotiation, ongoing monitoring, or a broad migration that should be split into reviewed work units.
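The board can be read as a small decision function. The sketch below is one way to encode it, assuming a task can be reduced to a handful of yes/no flags; the `Task` fields and `choose_route` are hypothetical names, not part of either product, and real routing still needs judgment.

```python
from dataclasses import dataclass


@dataclass
class Task:
    """Hypothetical task profile; field names are illustrative only."""
    recurring_or_scheduled: bool  # time-based, externally triggered, or repeating
    needs_live_judgment: bool     # taste, negotiation, or shifting requirements
    touches_production: bool      # production data, secrets, irreversible actions
    has_proof: bool               # test, lint, typecheck, or diff can show completion
    has_abort_rule: bool          # an explicit "stop and report if..." condition
    current_tool: str             # "codex" or "claude-code"


def choose_route(task: Task) -> str:
    # Timing and repetition belong to automation, not an interactive session.
    if task.recurring_or_scheduled:
        return "CI or scheduler"
    # Judgment-heavy or production-touching work stays under live human control.
    if task.needs_live_judgment or task.touches_production:
        return "Guided session"
    # Without proof and an abort rule, a loop has nothing reliable to stop on.
    if not (task.has_proof and task.has_abort_rule):
        return "Guided session"
    # Otherwise, run the goal loop in the tool that already owns the work.
    return "Codex /goal" if task.current_tool == "codex" else "Claude Code /goal"


# A bounded Codex task with proof and an abort rule:
print(choose_route(Task(recurring_or_scheduled=False, needs_live_judgment=False,
                        touches_production=False, has_proof=True,
                        has_abort_rule=True, current_tool="codex")))  # Codex /goal
```

Under these assumptions, the same task without an abort rule falls back to a guided session, which matches the board's bias toward keeping a human in the loop when the stop condition is missing.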

What /goal Actually Changes

OpenAI's Codex slash-command docs describe Codex /goal as an experimental command for setting or viewing a goal for a long-running task. The checked docs say it requires the `goals` feature flag, can be enabled through the experimental controls or `goals = true` under `[features]`, and includes `/goal <objective>`, `/goal`, `/goal pause`, `/goal resume`, and `/goal clear`.

That wording matters. Codex /goal gives the thread a persistent target while the larger task runs. It does not turn every Codex surface into unattended production automation. Codex itself can appear through app, IDE, CLI, and cloud routes; OpenAI's Codex quickstart separates those surfaces. A goal set in a local CLI flow should not be described as the same operational route as a background cloud task unless the owner docs say so.
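To make the lifecycle concrete, here is a toy state model of the five documented commands. It is not Codex's implementation: the class, its states, and the choice that a new `/goal <objective>` replaces an existing one are assumptions. What it illustrates is that pausing or resuming changes whether the target is pursued, not what the agent is allowed to do.

```python
from enum import Enum
from typing import Optional


class GoalState(Enum):
    NONE = "none"
    ACTIVE = "active"
    PAUSED = "paused"


class ThreadGoal:
    """Hypothetical model of a thread-scoped goal. It tracks a target only;
    it does not run commands, widen a sandbox, or grant approvals."""

    def __init__(self) -> None:
        self.objective: Optional[str] = None
        self.state = GoalState.NONE

    def set(self, objective: str) -> None:   # /goal <objective>
        # Assumption: setting a new objective replaces the current one.
        self.objective = objective
        self.state = GoalState.ACTIVE

    def view(self) -> str:                   # /goal
        if self.objective is None:
            return "no goal"
        return f"{self.state.value}: {self.objective}"

    def pause(self) -> None:                 # /goal pause
        if self.state is GoalState.ACTIVE:
            self.state = GoalState.PAUSED

    def resume(self) -> None:                # /goal resume
        if self.state is GoalState.PAUSED:
            self.state = GoalState.ACTIVE

    def clear(self) -> None:                 # /goal clear
        self.objective = None
        self.state = GoalState.NONE
```

The objective survives a pause and resume unchanged, which is the "persistent target" idea; only `clear` drops it.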

Anthropic's Claude Code /goal docs frame the feature around a completion condition. Claude works across turns until the condition is met. The checked docs say a small fast model evaluates after each turn whether the condition holds, one goal can be active per session, the goal clears automatically once satisfied, and the evaluator sees surfaced conversation evidence rather than independently calling tools. The same page also describes non-interactive and Remote Control use, plus version and trusted-workspace requirements.
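The evaluator detail is the one that most affects how you write the condition. The sketch below models the documented contract under stated assumptions: one goal per session, a check after each turn that sees only surfaced text, and automatic clearing once satisfied. `GoalSession` and `make_keyword_evaluator` are hypothetical, and the keyword stub is a crude stand-in for the small model, not how Claude Code evaluates.

```python
from typing import Callable, List, Optional

# An evaluator sees the condition and the surfaced evidence, nothing else.
Evaluator = Callable[[str, List[str]], bool]


class GoalSession:
    """Hypothetical model: one active goal, checked after every turn."""

    def __init__(self, evaluator: Evaluator) -> None:
        self.evaluator = evaluator
        self.condition: Optional[str] = None
        self.surfaced: List[str] = []  # only what turns put into the conversation

    def set_goal(self, condition: str) -> None:
        # One goal per session; assumption: a new condition replaces the old one.
        self.condition = condition
        self.surfaced = []

    def end_turn(self, surfaced_evidence: str) -> bool:
        """Record a turn's visible output; return True if the goal was met and cleared."""
        if self.condition is None:
            return False
        self.surfaced.append(surfaced_evidence)
        if self.evaluator(self.condition, self.surfaced):
            self.condition = None  # clears automatically once satisfied
            return True
        return False


def make_keyword_evaluator(required: str) -> Evaluator:
    """Stub evaluator: looks for a keyword in surfaced text and never runs tools.
    A real evaluator would interpret the condition itself."""
    def evaluate(condition: str, evidence: List[str]) -> bool:
        return any(required in item for item in evidence)
    return evaluate


session = GoalSession(make_keyword_evaluator("0 errors"))
session.set_goal("docs lint passes for docs/api/authentication.md")
session.end_turn("Edited two heading anchors.")  # no proof surfaced yet
session.end_turn("lint output: 0 errors")       # hypothetical lint output
```

The practical consequence: if the proof command's output never appears in the conversation, the evaluator has nothing to judge, so ask for the output to be shown, not summarized.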

The shared idea is simple: write a condition, let the agent continue until the condition appears satisfied, then return control. The contracts around that idea differ. Codex emphasizes a persistent target in the Codex task/thread context. Claude Code emphasizes a completion condition evaluated inside a Claude Code session. Treating those as identical is where bad delegation starts.

Mechanics That Matter Before You Walk Away

The difference between a useful goal and a risky goal is usually not the wording of /goal itself. It is the surrounding operating route.

| Decision | Codex /goal | Claude Code /goal | Why it matters |
|---|---|---|---|
| Enablement | Experimental in the checked Codex docs and tied to `features.goals`. | Requires a supported Claude Code version and a trusted workspace in the checked docs. | Availability is a live owner-source fact, not a permanent assumption. |
| Work surface | Codex app, IDE, CLI, or cloud routes may carry different repo and review boundaries. | Claude Code session, including local and documented remote/non-interactive routes. | Do not write a goal that assumes a route the agent is not using. |
| Completion evidence | A proof command, reviewable diff, or bounded artifact should show the target was met. | The evaluator judges surfaced evidence after turns; the goal should be easy to evaluate. | A vague target creates false confidence or endless continuation. |
| Permission boundary | Goal tracking is separate from repo access, sandboxing, and command approval. | Permission modes remain separate from goal completion. | A loop does not authorize risky edits by itself. |
| Usage and billing | Long-running Codex work can consume more usage; exact plan and API-key details are volatile. | Claude Code subscription/API-key routing can change allocation and charges. | Check the active route before leaving a task running. |

Claude Code's permission modes are especially important here. Plan mode, default approvals, accept-edits, auto mode, and bypass-style modes are different trust contracts. /goal does not choose the right one for you. If a task needs read-only exploration, keep it in plan mode. If it needs edits, choose the permission mode deliberately before asking Claude Code to keep working toward a condition.
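One way to keep the two controls separate is to model the approval decision as a function that never consults the goal. This is a simplification for illustration: the mode names echo Claude Code's, but the allow/ask/deny table below is an assumption, not the product's actual rules, and auto mode is left out entirely.

```python
from enum import Enum


class Mode(Enum):
    # Simplified, hypothetical stand-ins for Claude Code permission modes.
    PLAN = "plan"
    DEFAULT = "default"
    ACCEPT_EDITS = "acceptEdits"
    BYPASS = "bypassPermissions"


class Action(Enum):
    READ = "read"
    EDIT = "edit"
    SHELL = "shell"


def decide(mode: Mode, action: Action, goal_active: bool) -> str:
    """Return 'allow', 'ask', or 'deny'.

    goal_active is accepted but intentionally never consulted: in this model,
    an active goal changes nothing about approvals."""
    if action is Action.READ:
        return "allow"
    if mode is Mode.PLAN:
        return "deny"   # plan mode: analysis only, no edits or commands
    if mode is Mode.BYPASS:
        return "allow"
    if mode is Mode.ACCEPT_EDITS and action is Action.EDIT:
        return "allow"
    return "ask"        # everything else still needs a human
```

Every result is identical with `goal_active=True` or `False`, which is the whole point: choose the mode first, then set the goal.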

The same discipline applies to Codex. A goal can help Codex track a target, but the repo state, branch, setup commands, sandbox behavior, and review path still define whether the work is safe to accept.

Goal Contract Before You Start

Use a goal only after six pieces are explicit.

| Contract item | Good enough | Too vague |
|---|---|---|
| End state | "All failing tests in packages/billing pass after fixing the date parsing regression." | "Clean up billing." |
| Proof command | "`pnpm test packages/billing -- --runInBand` passes, and no unrelated files change." | "Make sure it works." |
| Scope boundary | "Only touch parser, fixture, and regression test files." | "Refactor whatever is needed." |
| Permission mode | "Ask before shell commands that modify data; edits are allowed in the branch." | "Do whatever is necessary." |
| Usage check | "Stop after two failed proof-command attempts or if the diff exceeds the target files." | "Keep trying until done." |
| Abort rule | "Pause and report if the failure moves outside billing date parsing." | "Figure it out." |

A strong goal reads more like an acceptance contract than a prompt. It names the success state, the evidence, the allowed scope, and the moment when the agent should stop. That makes it easier for Codex to keep the target stable and easier for Claude Code's evaluator to decide whether the condition has actually been met.
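If you write goals often, the six items can be enforced as a checklist before any goal text is produced. A minimal sketch; `GoalContract` and its field names are hypothetical, and the rendered text is just one possible layout, not a format either tool requires.

```python
from dataclasses import dataclass, fields


@dataclass
class GoalContract:
    """Hypothetical checklist mirroring the six contract items in the table above."""
    end_state: str = ""
    proof_command: str = ""
    scope_boundary: str = ""
    permission_mode: str = ""
    usage_check: str = ""
    abort_rule: str = ""

    def missing(self) -> list[str]:
        # Any blank item means the goal is not ready to hand to a loop.
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]

    def to_goal_text(self) -> str:
        gaps = self.missing()
        if gaps:
            raise ValueError(f"incomplete goal contract: {', '.join(gaps)}")
        return (
            f"Goal: {self.end_state} Proof: {self.proof_command} "
            f"Scope: {self.scope_boundary} Permissions: {self.permission_mode} "
            f"Budget: {self.usage_check} Abort: {self.abort_rule}"
        )


draft = GoalContract(
    end_state="All failing tests in packages/billing pass after fixing the date parsing regression.",
    proof_command="pnpm test packages/billing -- --runInBand passes, and no unrelated files change.",
    scope_boundary="Only touch parser, fixture, and regression test files.",
)
print(draft.missing())  # ['permission_mode', 'usage_check', 'abort_rule']
```

Refusing to render an incomplete contract is the design choice here: a half-written goal fails loudly before it ever reaches an agent.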

Here are two goals written to that standard:

```text
Goal: In the Codex CLI, update only the checkout discount tests and the discount rounding helper so `pnpm test packages/checkout -- discount` passes. Stop if the fix requires changing payment provider code.
```

```text
Goal: In Claude Code, finish the docs typo sweep in `docs/api/authentication.md`; the goal is met when the file has no broken heading anchors and `pnpm docs:lint docs/api/authentication.md` passes. Ask before editing any file outside `docs/api/`.
```

Both examples are narrow enough to evaluate. They include proof, scope, and abort language. They also make it clear that the human still owns review.

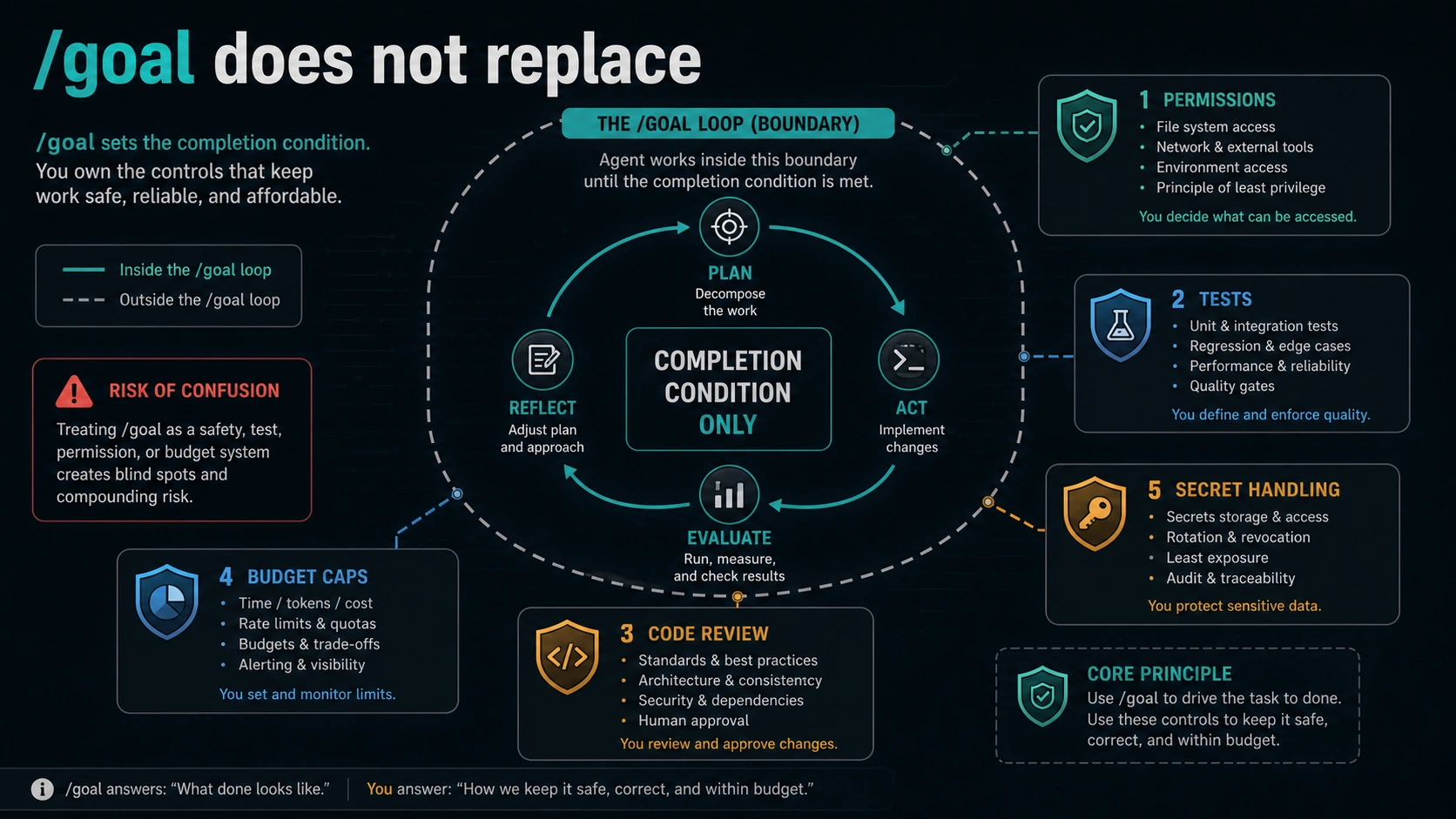

What /goal Does Not Replace

The easiest mistake is to treat a goal loop as a safety layer. It is not. It is a way to keep the agent working toward a condition.

/goal does not replace permissions. If Claude Code is in a mode that asks before actions, the goal does not mean every future action should be accepted. If Codex is operating in a route with sandbox or repo-access settings, the goal does not expand that boundary.

/goal does not replace tests. A completion condition can include "tests pass," but the actual proof still has to be run, visible, and relevant. A passing narrow test does not prove unrelated production risk is gone.
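Running the proof yourself, with a hard failure budget, covers both the "proof has to be visible" point and the contract's "stop after two failed proof-command attempts" rule. A minimal sketch using the standard `subprocess` module; `ProofGate` is a hypothetical helper, and it assumes the agent (or you) changes code between calls, since rerunning an unchanged failing command proves nothing.

```python
import subprocess
import sys


class ProofGate:
    """Runs one proof command per call and stops after too many failures."""

    def __init__(self, cmd: list[str], max_failures: int = 2, timeout_s: float = 600):
        self.cmd = cmd
        self.max_failures = max_failures
        self.timeout_s = timeout_s
        self.failures = 0
        self.last_output = ""

    def check(self) -> str:
        """Return 'pass', 'retry', or 'stop'."""
        if self.failures >= self.max_failures:
            return "stop"  # budget already spent; do not run again
        proc = subprocess.run(self.cmd, capture_output=True, text=True,
                              timeout=self.timeout_s)
        self.last_output = proc.stdout + proc.stderr  # keep it for review
        if proc.returncode == 0:
            return "pass"
        self.failures += 1
        return "stop" if self.failures >= self.max_failures else "retry"


if __name__ == "__main__":
    # Real use would pass the contract's command, for example
    # ["pnpm", "test", "packages/billing", "--", "--runInBand"].
    demo = ProofGate([sys.executable, "-c", "print('proof ok')"])
    print(demo.check(), demo.last_output.strip())
```

A "pass" here only means the named command exited with status 0; it is still a narrow proof, not evidence that unrelated risk is gone.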

/goal does not replace review. A diff created under a goal loop is still a diff. Check the files changed, read the reasoning, rerun the important commands, and reject broad edits that were not part of the contract.
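Part of that review can be mechanical. The sketch below lists changed files with `git diff --name-only` and flags anything outside the directories the goal allowed. The helper names and the example paths are hypothetical, and the check does not replace reading the diff.

```python
import subprocess
from pathlib import PurePosixPath


def changed_files(base: str = "HEAD") -> list[str]:
    """Tracked files changed relative to `base` (staged or not).
    Untracked new files are NOT listed by `git diff`; check `git status` too."""
    out = subprocess.run(
        ["git", "diff", "--name-only", base],
        capture_output=True, text=True, check=True,
    ).stdout
    return [line for line in out.splitlines() if line]


def out_of_scope(files: list[str], allowed_dirs: list[str]) -> list[str]:
    """Return changed files that are not under any allowed directory.
    Path-component matching means 'packages/authz' is not inside 'packages/auth'."""
    allowed = [PurePosixPath(d) for d in allowed_dirs]
    return [
        f for f in files
        if not any(PurePosixPath(f).is_relative_to(a) for a in allowed)
    ]


# Example with a hard-coded list; in a repo you would pass changed_files().
print(out_of_scope(["packages/auth/session.ts", "packages/billing/rates.ts"],
                   ["packages/auth"]))  # ['packages/billing/rates.ts']
```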

/goal does not cap spend or usage. OpenAI's Codex pricing docs say usage depends on model, task complexity, and local versus cloud route, and long-running work can use more allowance. Claude Code support docs describe subscription allocation and API-key routing as separate billing paths. Exact limits and plan windows can change, so the safe advice is to check the active owner surface before running a long session.

/goal does not protect secrets or production data. Do not put secrets, customer records, live credentials, or irreversible production actions inside a goal loop. If the task needs those boundaries, design a controlled workflow first.
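A cheap pre-flight check can at least catch obvious credentials pasted into goal text before it is submitted. This is a minimal sketch with a few illustrative regular expressions; the patterns are assumptions, far from exhaustive, and an empty result is not proof that the text is safe. A dedicated secret scanner is the real tool.

```python
import re

# Illustrative patterns only; real secret scanners cover far more formats.
SECRET_PATTERNS = {
    "AWS access key ID": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private key block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    "inline credential": re.compile(
        r"(?i)\b(password|secret|token|api[_-]?key)\s*[:=]\s*\S+"
    ),
}


def secret_findings(goal_text: str) -> list[str]:
    """Return names of matching patterns; an empty list is NOT a safety guarantee."""
    return [name for name, pat in SECRET_PATTERNS.items() if pat.search(goal_text)]


print(secret_findings("Goal: Fix the failing `auth/session-expiry` test."))  # []
print(secret_findings("Log in with password=hunter2 first"))  # ['inline credential']
```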

When Normal Prompting Or CI Is Better

Use a normal guided session when the target will change while the agent learns. Architecture decisions, ambiguous UI polish, messy incident triage, migration planning, and "make this nicer" work need human judgment between steps. A goal loop can keep moving after the moment when a human should have stopped and re-decided.

Use Claude Code plan mode when you want analysis before edits. A completion goal is premature if the important work is still reading, mapping, and asking what should change.

Use CI, scheduled jobs, queues, or repository automation when timing and repetition are the real problem. A nightly dependency audit, recurring documentation check, or deployment verification should not depend on an interactive coding session staying alive. The agent can help write the workflow, but the workflow should own the loop.

Use the broad Codex vs Claude Code route comparison when the question is still which coding agent to adopt. Use this /goal decision only after you already know the task is a candidate for long-running agent work.

For billing context on Claude Code and API routes, the sibling Claude API pricing vs subscription guide is a better next stop than trying to memorize quota numbers inside a goal-mode decision. For Codex, prefer the current OpenAI Codex docs and account UI for plan, API-key, and cloud-task behavior because those surfaces can change quickly.

Practical Goal Patterns

Test repair goal

Use a goal loop when a failing test has a known boundary.

```text
Goal: Fix the failing `auth/session-expiry` test without changing public API behavior. The goal is complete when `pnpm test auth/session-expiry` passes and the diff only touches session expiry code and tests.
```

This works because the proof command is narrow, the allowed files are predictable, and the scope limit can be checked directly from the diff.

Bounded refactor goal

Use a goal loop when a refactor can be verified mechanically.

```text
Goal: Rename the internal `legacyToken` helper to `sessionToken` in `packages/auth` only. The goal is complete when typecheck passes and no file outside `packages/auth` changes.
```

This is a good Codex /goal candidate if the repo is ready for a reviewable diff. It can also fit Claude Code /goal if the local permission mode and evaluator evidence are clear.
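Typecheck catches broken references, but a quick scan for leftovers makes the rename's completion easier to show as evidence. A minimal sketch, assuming a TypeScript/JavaScript package; `remaining_references` is a hypothetical helper, and plain substring matching will also flag longer names such as `legacyTokenCache`, so treat hits as items to inspect.

```python
from pathlib import Path


def remaining_references(root: str, identifier: str,
                         suffixes: tuple[str, ...] = (".ts", ".tsx", ".js")) -> list[str]:
    """Return source files under `root` that still mention `identifier`."""
    hits = []
    for path in sorted(Path(root).rglob("*")):
        if path.is_file() and path.suffix in suffixes:
            if identifier in path.read_text(encoding="utf-8", errors="ignore"):
                hits.append(path.as_posix())
    return hits


# In the repo: remaining_references("packages/auth", "legacyToken") should be [].
```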

Documentation consistency goal

Use a goal loop when the output is constrained.

```text
Goal: Update the three OAuth setup pages so each contains the same redirect URI warning and all docs lint checks pass. Stop if the change requires editing product behavior.
```

The proof is docs lint plus a visible content checklist. The abort rule keeps the agent from turning a docs task into product work.
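The content-checklist half of that proof can be scripted. A minimal sketch, assuming the warning must appear verbatim on each page; the three paths and the warning sentence are hypothetical placeholders, and docs lint still runs separately.

```python
from pathlib import Path


def pages_missing_text(pages: list[str], required: str) -> list[str]:
    """Return pages that are absent or do not contain `required` verbatim."""
    missing = []
    for page in pages:
        path = Path(page)
        if not path.is_file():
            missing.append(page)  # a missing page fails the checklist too
            continue
        if required not in path.read_text(encoding="utf-8"):
            missing.append(page)
    return missing


# Hypothetical page paths and warning text; substitute the real ones.
OAUTH_PAGES = [
    "docs/oauth/setup-web.md",
    "docs/oauth/setup-mobile.md",
    "docs/oauth/setup-cli.md",
]
REDIRECT_WARNING = "The redirect URI must exactly match the value registered for your client."

# The checklist item is done when this returns an empty list:
# pages_missing_text(OAUTH_PAGES, REDIRECT_WARNING)
```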

Bad goal: broad polish

```text
Goal: Make the dashboard better.
```

That is not a completion condition. Split it into a guided design session, a concrete issue list, and then smaller goals such as fixing one empty state or one accessibility violation.

Bad goal: risky production path

```text
Goal: Fix the production billing migration and keep going until it works.
```

That belongs behind a human-controlled runbook, staging checks, backups, and explicit approval. A goal loop should not be the owner of irreversible operational risk.

FAQ

Are Codex /goal and Claude Code /goal the same feature?

No. They share the idea of working toward a completion condition, but the product contracts differ. Codex /goal is described in OpenAI's checked docs as an experimental Codex slash command tied to the goals feature flag. Claude Code /goal is documented around a completion condition, evaluator behavior, one active goal per session, and trusted-workspace requirements.

Which one should I try first?

Use Codex /goal first for bounded Codex-native work that should produce a reviewable diff and can be proved with commands. Use Claude Code /goal first when the work is already in Claude Code, local steering matters, and permission mode should stay visible. Use neither when the goal is vague or risky.

Does /goal approve shell commands or edits?

No. Goal completion and permission approval are different controls. Claude Code permission modes still matter. Codex route, sandbox, repo access, and review settings still matter. Keep approvals explicit.

Can /goal reduce cost?

Do not assume that. A better goal can reduce wasted turns by making completion clearer, but long-running tasks can also consume more allowance. Check the active Codex or Claude Code route before leaving a task running, especially if an API key or subscription allocation is involved.

What makes a good goal?

A good goal has a measurable end state, a proof command or review artifact, a narrow scope, a known permission mode, a usage check, and an abort rule. If any of those are missing, write a normal prompt first and ask the agent to plan.

When is CI or a scheduler better than /goal?

Use CI or scheduler automation when the work is recurring, time-based, or should run without an interactive agent session. A coding agent can help build the workflow, but the workflow should own repetition, logs, retries, and escalation.