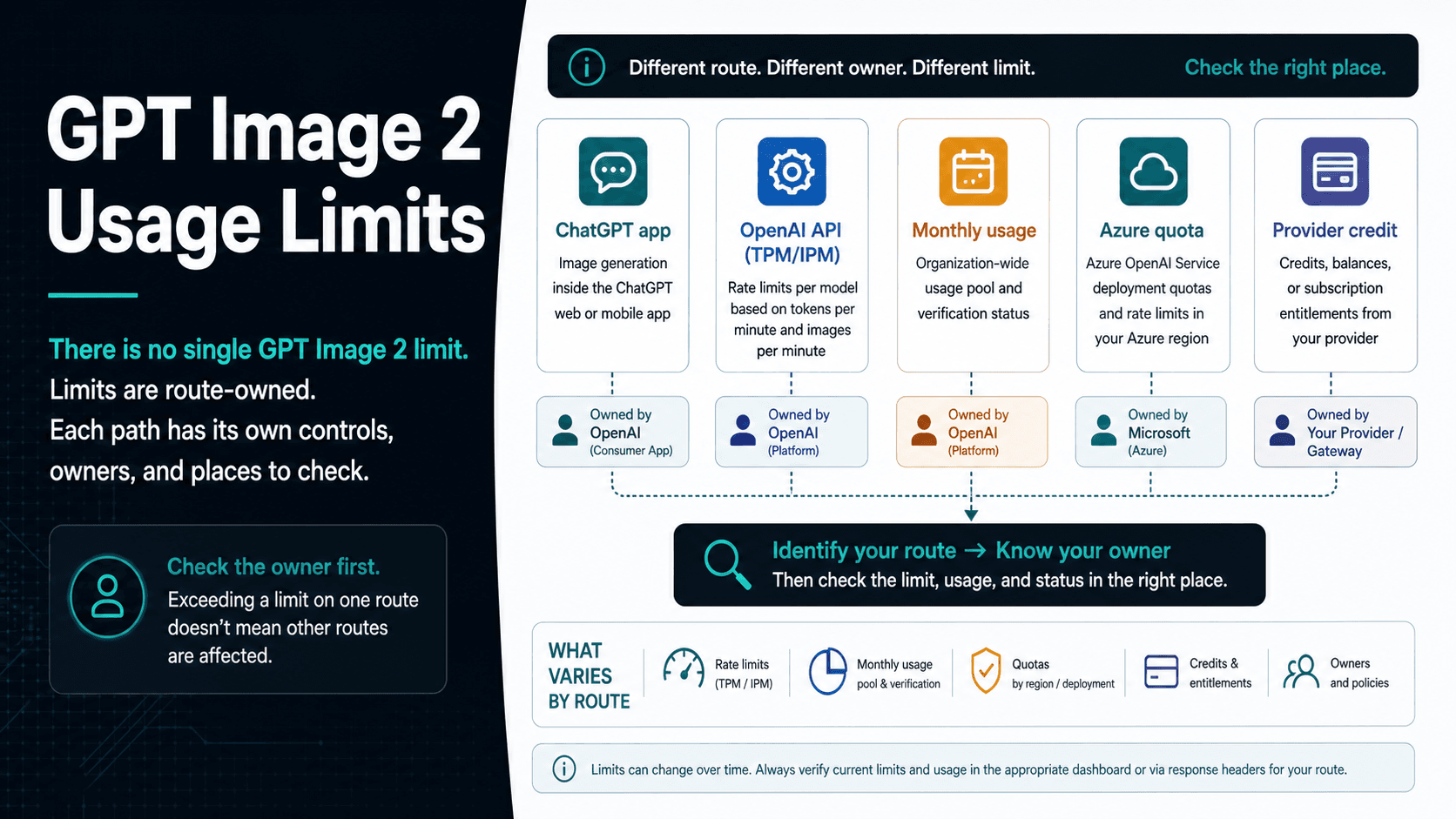

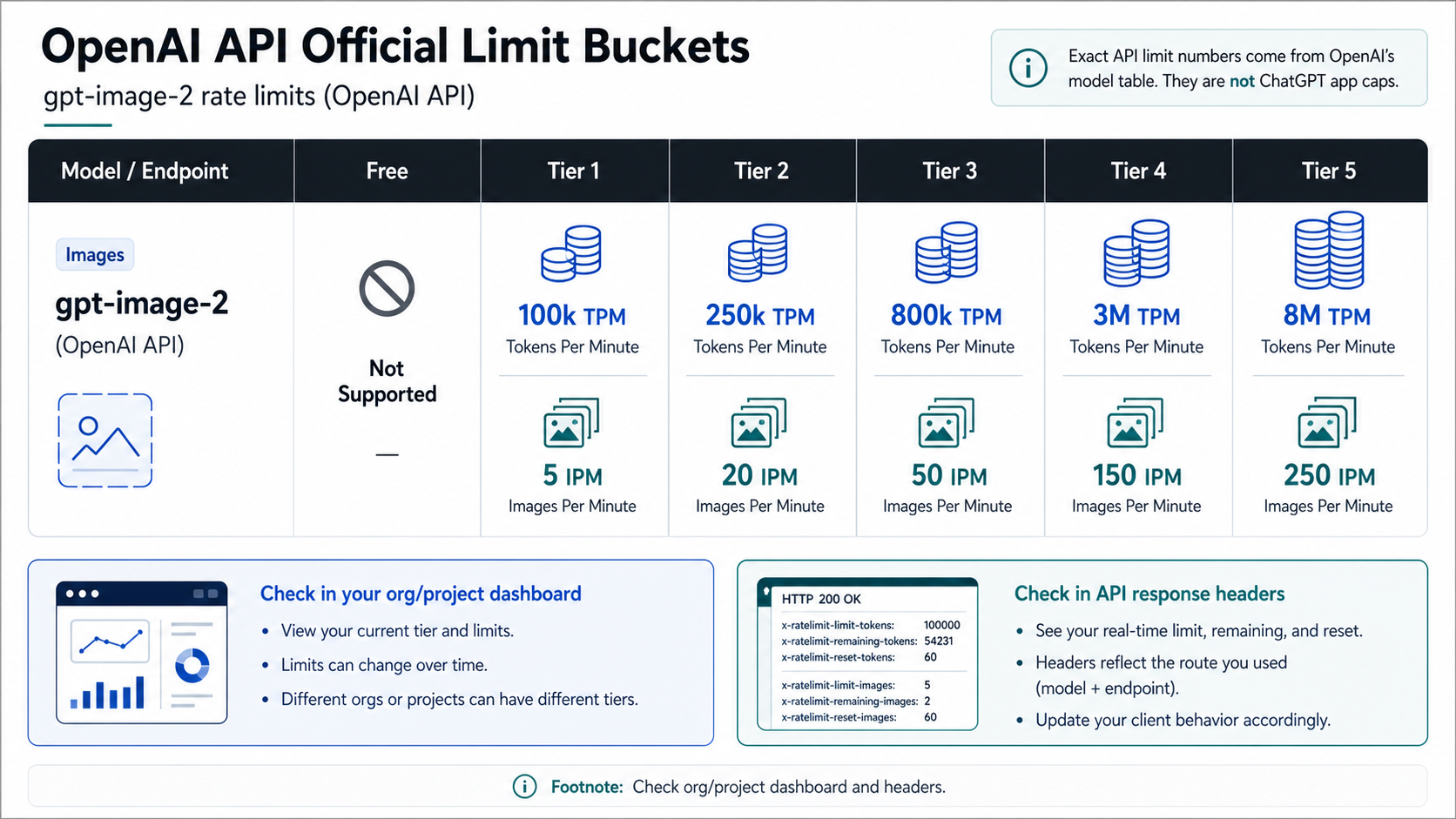

GPT Image 2 has no single usage limit. ChatGPT app caps, OpenAI API rate limits, monthly API spend limits, Azure quotas, and provider credits are separate controls with separate owners. For direct OpenAI API calls, the official gpt-image-2 table checked on May 5, 2026 lists Free as not supported, Tier 1 at 100,000 tokens per minute (TPM) / 5 images per minute (IPM), Tier 2 at 250,000 TPM / 20 IPM, Tier 3 at 800,000 TPM / 50 IPM, Tier 4 at 3,000,000 TPM / 150 IPM, and Tier 5 at 8,000,000 TPM / 250 IPM. Your organization or project dashboard can still show tighter live limits than the published table.

| If you are using... | The limit owner is... | Check this first | Smallest responsible next step |
|---|---|---|---|
| ChatGPT image generation | ChatGPT app, plan, account, and current system conditions | The app message, plan page, and Help Center wording | Wait for reset or reduce demand; do not copy API tier numbers into the app |
| OpenAI API with gpt-image-2 | OpenAI API organization/project and model table | Model limits page, Limits dashboard, response headers, monthly usage | Reduce request pressure, respect reset headers, or request a higher tier |
| Monthly API usage stop | OpenAI billing and usage ceiling | Usage dashboard and billing status | Raise the monthly limit or pause work; retries do not solve a spend ceiling |
| Azure OpenAI | Microsoft subscription, region, deployment, and quota system | Azure portal and Microsoft Learn quota docs | Treat it as an Azure quota change, not an OpenAI direct API fix |
| Third-party provider or gateway | That provider's credits, dashboard, and terms | Provider dashboard, pricing, retry policy, and model route | Verify owner-specific limits before moving production traffic |

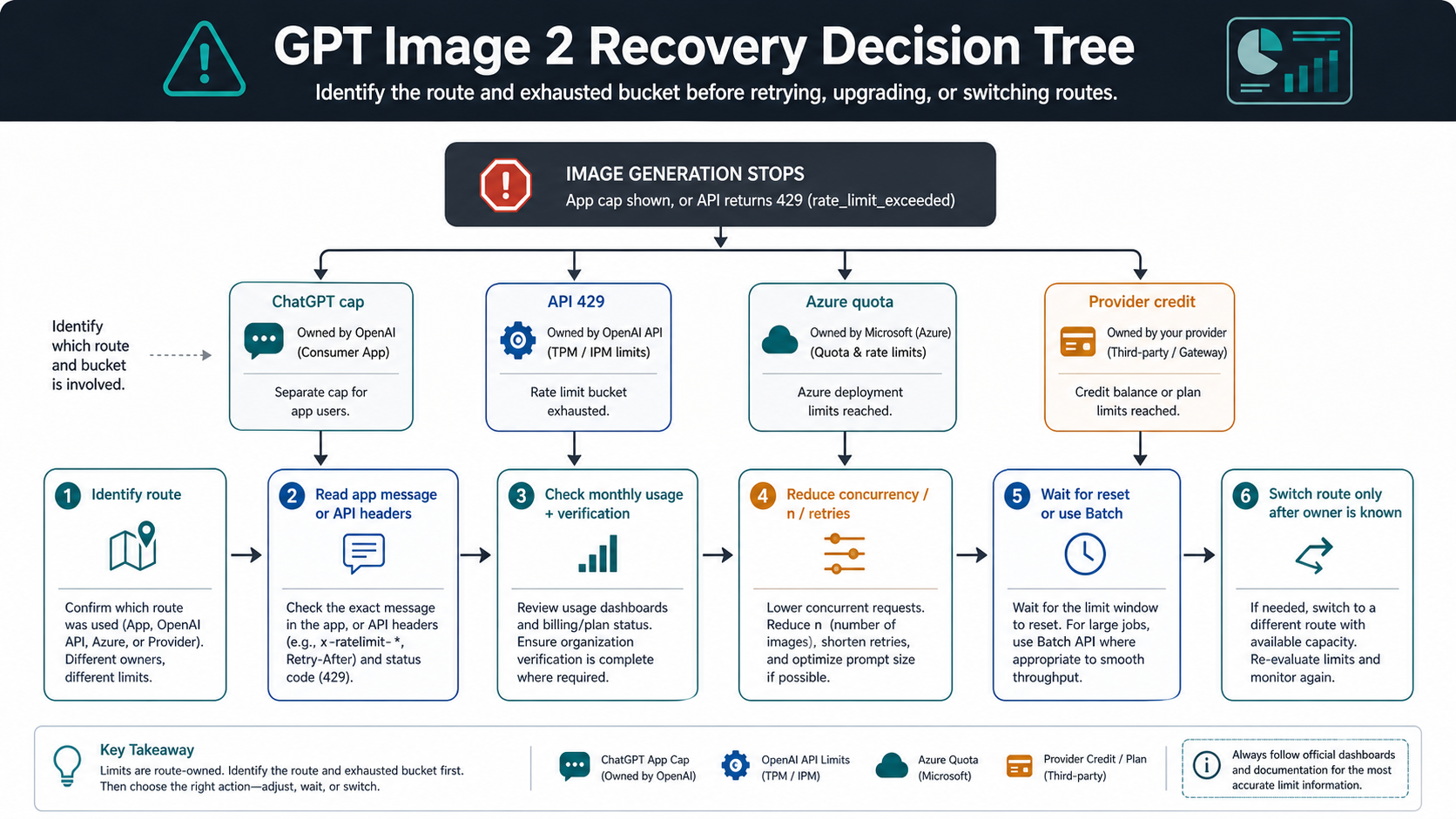

Stop before you retry, upgrade, or reroute: identify which owner blocked you, which bucket was exhausted, and whether the live source says to wait, reduce throughput, raise quota, or change routes.

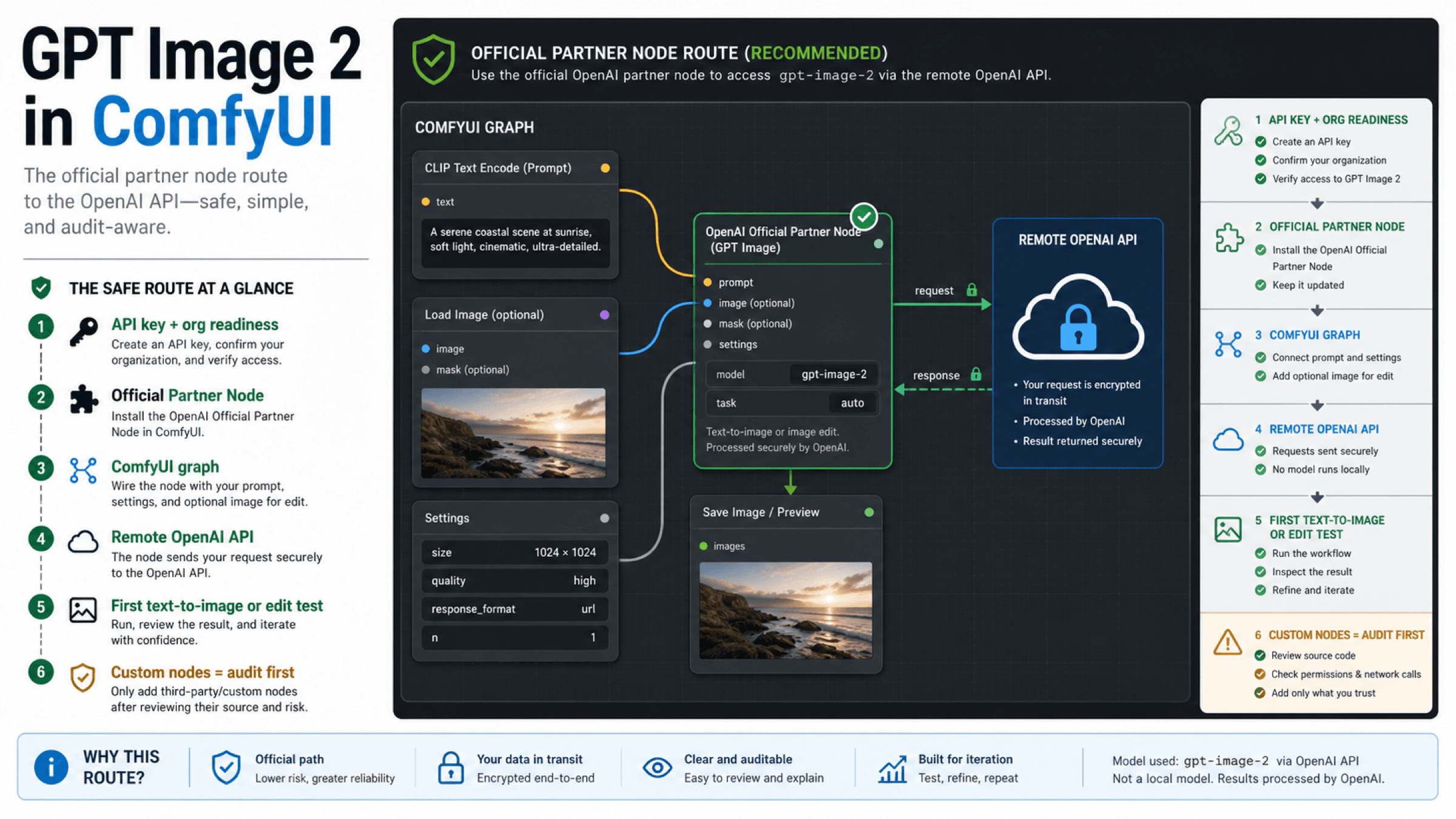

Official OpenAI API limits

For API users, the anchor is OpenAI's gpt-image-2 model page, not a ChatGPT plan rumor or a third-party daily-count table. The model page owns the official developer model ID, the supported image-generation route, the Free-tier boundary, and the published TPM/IPM values. Those numbers answer only direct OpenAI API throughput. They do not answer ChatGPT app caps, Azure deployment quota, or a provider's credit balance.

| OpenAI API tier for gpt-image-2 | TPM | IPM | What the number means |
|---|---|---|---|
| Free | Not supported | Not supported | There is no supported official Free-tier API lane for this model. |
| Tier 1 | 100,000 | 5 | A low-throughput API project can still be blocked by image-per-minute pressure. |
| Tier 2 | 250,000 | 20 | More token room and more image requests, still bounded by the first exhausted bucket. |
| Tier 3 | 800,000 | 50 | Better for queue-backed product tests and internal tools. |
| Tier 4 | 3,000,000 | 150 | Higher sustained throughput, but still project/org scoped. |
| Tier 5 | 8,000,000 | 250 | The largest published table value, not a guarantee that every request shape clears. |

OpenAI's rate-limit guide also says limits are applied at the organization and project level. That is why two developers on the same project can affect the same pool, and why moving a request from one script to another does not create new capacity. The dashboard and response headers matter because the published table is the general model ceiling, while the live project can have tighter account, verification, spend, or temporary controls.
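As a rough illustration of what the published IPM column means for pacing, the sketch below hard-codes the table values quoted above and derives an even request spacing. The dictionary keys and function names are hypothetical, and the arithmetic assumes IPM is the only binding bucket, which a live project with tighter controls may not match.

```python
# Published gpt-image-2 values quoted in this article (checked May 5, 2026).
# The live project dashboard can be tighter; treat these as ceilings only.
# The Free tier is omitted on purpose: the table lists it as not supported.
PUBLISHED_LIMITS = {
    "tier1": {"tpm": 100_000, "ipm": 5},
    "tier2": {"tpm": 250_000, "ipm": 20},
    "tier3": {"tpm": 800_000, "ipm": 50},
    "tier4": {"tpm": 3_000_000, "ipm": 150},
    "tier5": {"tpm": 8_000_000, "ipm": 250},
}


def min_seconds_between_images(tier: str) -> float:
    """Even spacing that stays at or under the tier's published IPM value."""
    return 60.0 / PUBLISHED_LIMITS[tier]["ipm"]


def minutes_for_batch(tier: str, image_count: int) -> float:
    """Lower bound on wall-clock minutes for a batch, from IPM alone."""
    return image_count / PUBLISHED_LIMITS[tier]["ipm"]


print(min_seconds_between_images("tier1"))  # 12.0
print(minutes_for_batch("tier2", 100))      # 5.0
```

Because the pool is shared at the organization and project level, this spacing applies to the combined traffic of every caller in the project, not to each script separately.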

The image guide adds one more access boundary: GPT Image models may require organization verification before use. A verification block is not the same as a rate limit. If the API refuses access before it starts processing image requests, do not fix it with retries or a higher queue delay. Fix the organization, billing, model access, or project setup first.

Rate limit, usage limit, and app cap are not the same thing

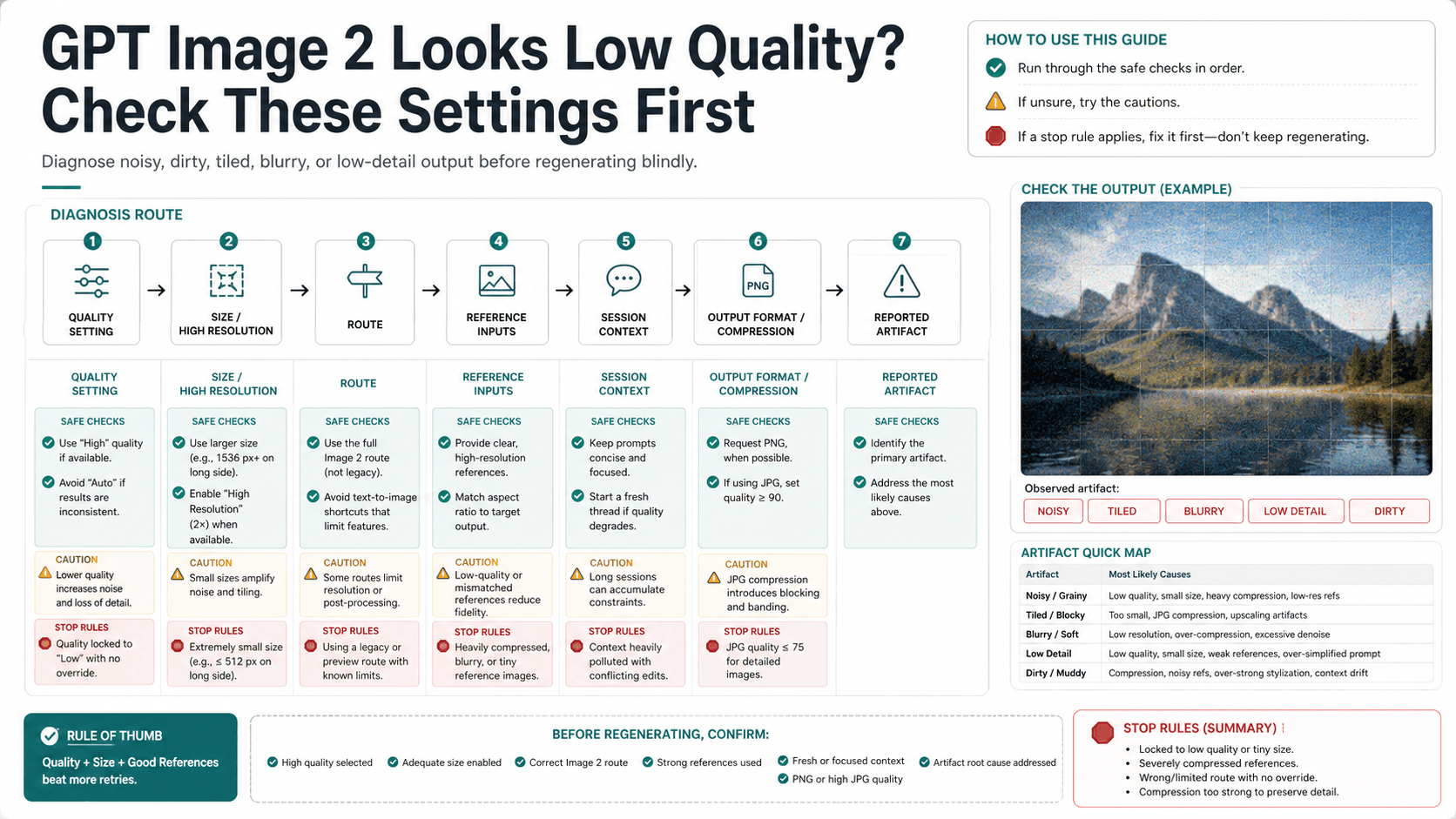

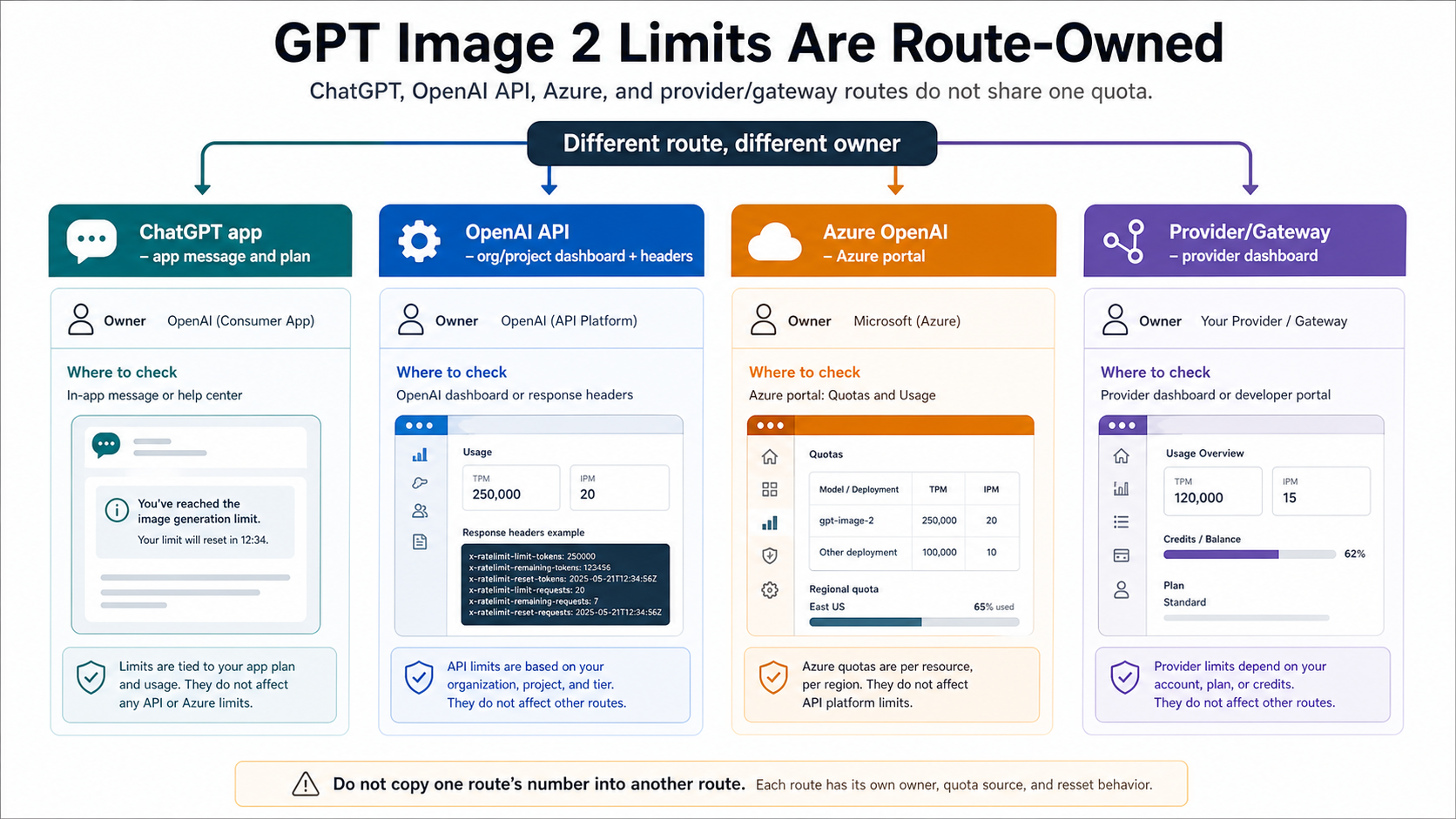

The phrase "usage limit" causes trouble because it can mean several different controls. A developer asking about GPT Image 2 usage limits might mean images per minute, tokens per minute, requests per day, monthly spend, organization verification, a ChatGPT app cooldown, or a provider credit balance. Those are not different labels for one counter.

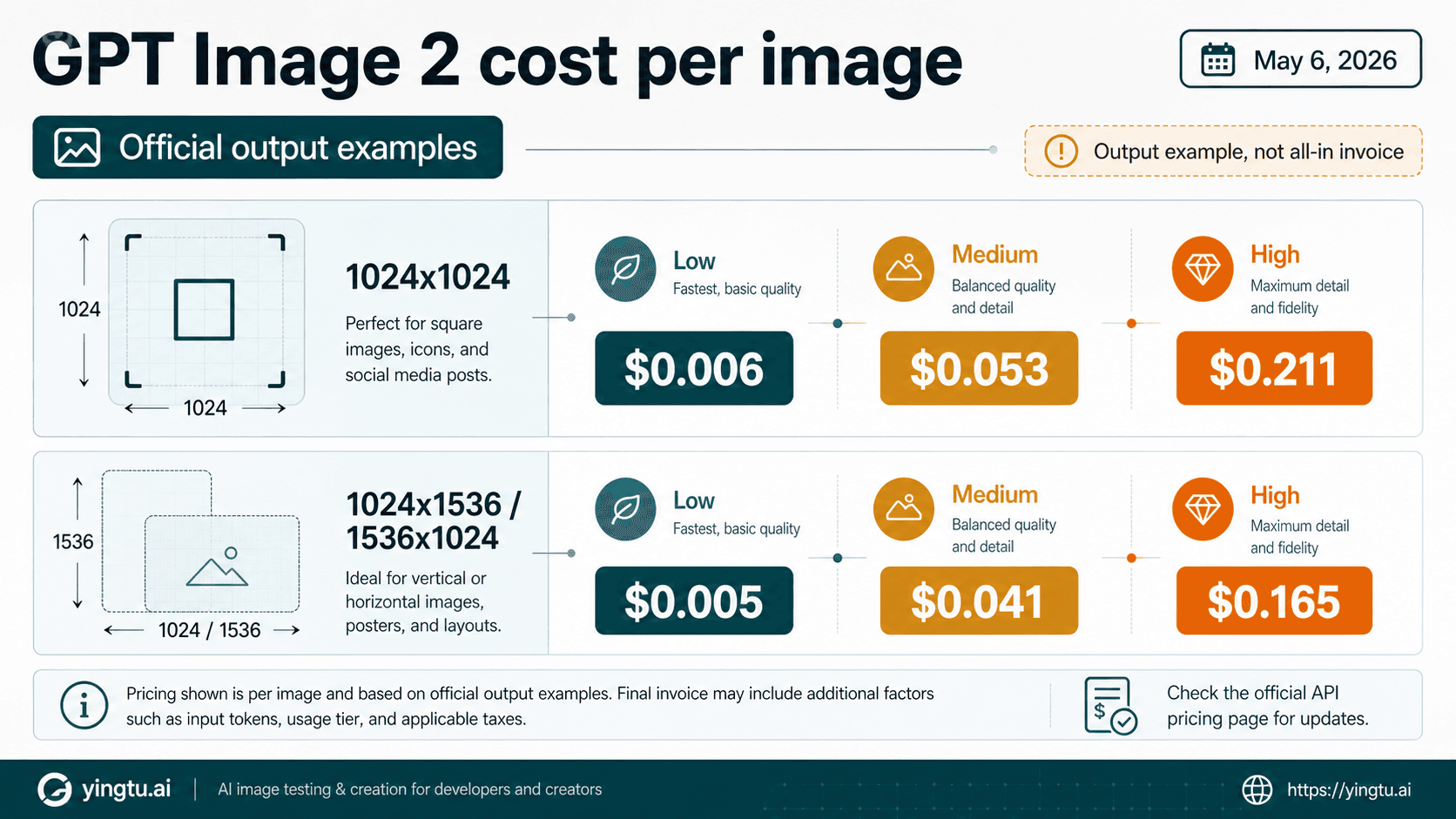

For the OpenAI API, rate limits are time-window throughput controls. TPM counts token pressure. IPM counts image request pressure. RPM, RPD, and TPD can also apply on API surfaces, depending on model and account. The useful debugging question is not "how many GPT Image 2 images do I get?" It is "which bucket did this request exhaust, and at which owner scope?"
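One way to keep a client under a per-minute image bucket is a local sliding-window guard. The sketch below is a minimal, single-process example; the class name and methods are hypothetical, and it is only an approximation of whatever accounting the server actually applies, so the response headers and dashboard remain the real source.

```python
import time
from collections import deque


class SlidingWindowLimiter:
    """Client-side guard: at most `limit` events per `window` seconds."""

    def __init__(self, limit: int, window: float = 60.0, clock=time.monotonic):
        self.limit = limit
        self.window = window
        self.clock = clock          # injectable so the guard can be tested
        self._events = deque()      # timestamps of accepted events

    def _evict(self, now: float) -> None:
        # Drop timestamps that have aged out of the window.
        while self._events and now - self._events[0] >= self.window:
            self._events.popleft()

    def try_acquire(self) -> bool:
        """Record one image request if there is room; return whether it fit."""
        now = self.clock()
        self._evict(now)
        if len(self._events) < self.limit:
            self._events.append(now)
            return True
        return False

    def seconds_until_free(self) -> float:
        """How long until the oldest recorded event leaves the window."""
        now = self.clock()
        self._evict(now)
        if len(self._events) < self.limit:
            return 0.0
        return self.window - (now - self._events[0])
```

A queue worker could call `try_acquire()` before each image request and sleep for `seconds_until_free()` when it returns `False`. Several workers sharing one project would need a shared limiter, since the server-side pool is shared too.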

Monthly usage limits are different. A monthly spend ceiling can stop otherwise-valid requests even when the per-minute rate limit is not the issue. If billing or monthly usage is the exhausted bucket, repeated retries are the wrong response. They can add more failed-request pressure and still never create budget. The right response is to inspect usage, billing, project ownership, and the monthly cap.
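The distinction can be made explicit in code by sorting a failed response into an owner bucket before anything retries. The sketch below is illustrative only: the function name and the returned labels are hypothetical, and the error field names and the `insufficient_quota` string are assumptions about the error body shape that should be checked against a live response.

```python
def classify_block(status: int, error: dict) -> str:
    """Sort a failed API response into a coarse bucket.

    `error` is assumed to be the parsed "error" object from the response
    body, with optional "code", "type", and "message" fields.
    """
    code = (error.get("code") or "").lower()
    etype = (error.get("type") or "").lower()
    message = (error.get("message") or "").lower()

    # Spend and billing problems come first: they can arrive with a 429
    # status, but waiting for a per-minute reset does not fix them.
    if "insufficient_quota" in (code, etype) or "billing" in message:
        return "spend_or_billing"
    if status == 429:
        return "rate_limit"
    # Verification, permissions, or a wrong model ID are setup issues.
    if status in (401, 403, 404):
        return "access_or_setup"
    return "other"


print(classify_block(429, {"code": "insufficient_quota"}))   # spend_or_billing
print(classify_block(429, {"code": "rate_limit_exceeded"}))  # rate_limit
```

Checking the spend branch before the status code is the design choice that matters here: it keeps a budget stop from being treated as a cooldown.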

ChatGPT app caps are another contract. They are controlled by the ChatGPT app, the user's account, the plan, current system conditions, and product-side rules. OpenAI's ChatGPT pricing and Help Center surfaces make clear that image generation availability varies by plan and feature, while some "unlimited" wording remains subject to guardrails and temporary restrictions. That is a reason to check the live app message and official plan wording, not a reason to publish third-party exact counts as if they were official.

ChatGPT image caps need live app evidence

If ChatGPT stops generating images, do not paste the API TPM/IPM table into the app. The app does not expose a developer gpt-image-2 organization/project pool to the user. It is a consumer surface with plan-specific availability, account state, current load, safety systems, and temporary restrictions.

The first app-side check is the message that ChatGPT gives you when generation stops. It usually tells you whether to wait, reduce the request, change the prompt, or try later. The second check is the current plan surface. OpenAI's ChatGPT Images FAQ and pricing page are the first-party sources for availability language, but they do not turn every account into a fixed public image/day table. Some plan or region-specific behavior can change faster than a static public count can responsibly track.

That is also why "unlimited" needs a stop rule. ChatGPT Pro and Enterprise-style unlimited language can still be subject to abuse guardrails, temporary restrictions, or system conditions. If an app route says wait, wait. If it says a prompt or content policy is the problem, changing accounts or hammering retries does not solve it. If the work must be automated or logged, move the job to an API route and evaluate the API's own model, billing, and rate-limit contract.

How to recover from a GPT Image 2 limit

Start recovery with the block you actually hit.

| Symptom | Most likely owner | What to inspect | What not to do |
|---|---|---|---|
| ChatGPT says you have reached an image limit | ChatGPT app | App message, plan wording, current account state | Do not copy API tier values or use wrapper sites as a bypass. |
| API returns 429 with rate-limit language | OpenAI API project/org | Response body, headers, model, project, org, retry-after/reset, request shape | Do not retry in a tight loop; failed retries can count. |
| API says quota, billing, or usage is exhausted | OpenAI billing/project | Usage dashboard, billing status, monthly limit, project owner | Do not treat it as a per-minute cooldown. |
| API model is unavailable before generation | OpenAI account/model access | Model ID, organization verification, endpoint, project permissions | Do not raise throughput before proving access exists. |
| Azure deployment stops | Azure OpenAI | Azure portal, subscription, region, deployment quota | Do not open an OpenAI direct API ticket for a Microsoft-owned quota. |
| Provider playground stops | Provider/gateway | Provider dashboard, credits, route, status, retry policy, terms | Do not call it an OpenAI official cap. |

For OpenAI API 429s, keep a small incident packet. Record the route, model ID, organization, project, request size, quality setting, endpoint, response body, response headers, reset time, retry count, and whether monthly usage is close to the cap. That packet tells you whether to reduce concurrency, queue requests, lower pressure, wait for reset, request a higher tier, or fix billing. It also helps support reproduce the issue if the error does not match the documented limit.
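The incident packet can be captured as a small record so that every 429 is logged in the same shape. This is a minimal sketch: the class and field names are hypothetical and simply mirror the list above, and the example values (organization, project, endpoint path, size, quality) are placeholders rather than values taken from any real account.

```python
import json
from dataclasses import dataclass, field, asdict
from typing import Optional


@dataclass
class IncidentPacket:
    """One record per blocked request, mirroring the checklist above."""
    route: str
    model_id: str
    organization: str
    project: str
    endpoint: str
    request_size: str
    quality: str
    response_body: str
    response_headers: dict = field(default_factory=dict)
    reset_time: Optional[str] = None          # as reported by the response
    retry_count: int = 0
    near_monthly_cap: Optional[bool] = None   # None means "not checked yet"

    def to_json(self) -> str:
        return json.dumps(asdict(self), indent=2, sort_keys=True)


packet = IncidentPacket(
    route="openai-direct",
    model_id="gpt-image-2",
    organization="org-example",        # placeholder
    project="proj-example",            # placeholder
    endpoint="/v1/images/generations", # placeholder path
    request_size="1024x1024",          # placeholder
    quality="high",                    # placeholder
    response_body='{"error": {"message": "example"}}',
    retry_count=2,
)
print(packet.to_json())
```

Leaving `near_monthly_cap` as `None` until someone has looked at the usage dashboard keeps an unchecked value from being read as "budget is fine".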

For ChatGPT app limits, keep the workflow human. Save the app message, check whether the same account can generate a smaller or simpler image later, and avoid route-jumping before you know whether the block is plan quota, temporary load, policy, or prompt complexity. If you need batch generation, monitoring, persistent output storage, or customer-facing automation, that is a signal to evaluate the API route, not to look for a consumer-app workaround.

For monthly usage, stop retries and fix the spend owner. OpenAI's usage tiers and monthly limits are budget controls. If a project has hit the spend ceiling, a smarter backoff strategy will not produce images. The next step is a billing or usage-limit decision, not a 429 retry decision. For deeper OpenAI-wide quota handling, the sibling OpenAI API rate-limit guide covers generic 429, insufficient quota, billing, and support escalation branches.
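The rule "back off for rate limits, stop for spend" can be written as a small retry decision. The sketch below assumes the caller has already labeled the failure with a hypothetical `kind` string such as `"rate_limit"` or `"spend_or_billing"`; the function name, defaults, and labels are illustrative, and the jittered exponential backoff is a common general-purpose choice rather than a documented requirement.

```python
import random
from typing import Optional


def next_delay(kind: str, attempt: int, retry_after: Optional[float] = None,
               base: float = 2.0, cap: float = 60.0,
               max_attempts: int = 5) -> Optional[float]:
    """Return seconds to wait before the next retry, or None to stop.

    kind        -- caller-supplied label for the failure (hypothetical)
    attempt     -- number of retries already made, starting at 0
    retry_after -- wait hint in seconds taken from the response, if any
    """
    # Spend, billing, and access problems are never fixed by waiting.
    if kind != "rate_limit":
        return None
    if attempt >= max_attempts:
        return None
    # A server-provided hint wins over any local guess.
    if retry_after is not None:
        return max(0.0, retry_after)
    # Exponential backoff with full jitter, capped.
    ceiling = min(cap, base * (2 ** attempt))
    return random.uniform(0.0, ceiling)


print(next_delay("spend_or_billing", attempt=0))              # None
print(next_delay("rate_limit", attempt=0, retry_after=12.0))  # 12.0
```

Returning `None` instead of a very long delay makes the stop explicit, so the caller has to hand the problem to whoever owns billing rather than leave a job sleeping.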

Azure, providers, and gateways have separate quota owners

Azure OpenAI is not the same owner as the direct OpenAI API. Microsoft documents Azure quotas and limits by Azure subscription, region, deployment, and model route. That can be the right enterprise surface, but its limits belong in Azure portal and Microsoft Learn evidence, not in OpenAI's direct gpt-image-2 table. If an Azure deployment is blocked, start with Azure quota, region, deployment, and support path.

Third-party providers and gateways also own their own quota contracts. A provider can have a daily credit, trial balance, routing label, queue, retry policy, quality default, data rule, and failure-billing behavior that differs from OpenAI direct. That does not make the provider wrong. It means the provider is the source of truth for provider limits. Use the provider dashboard to answer provider capacity, and use OpenAI docs to answer first-party API capacity.

The safest way to compare providers is to keep the route label honest. If the reader's problem is official API entitlement, use OpenAI direct evidence. If the problem is cost or multi-route access, treat that as a provider comparison and verify every volatile claim with the provider's current docs or dashboard. Do not turn provider credits, speed claims, or "no limit" marketing into official GPT Image 2 usage limits.

Sibling decisions that should stay separate

The usage-limit decision is narrow: which owner controls the cap, which bucket is exhausted, and what the smallest responsible recovery step is. Several nearby GPT Image 2 questions should remain separate because they solve different reader jobs.

Use "Is GPT Image 2 API Free?" when the only question is the official OpenAI API Free-tier boundary. Use "Can You Use GPT Image 2 for Free?" when the question is broader free-to-try routes, browser tests, providers, wrappers, and unlimited claims. Use cheap GPT Image 2 API options when cost comparison is the real problem. Use the GPT Image 2 4K guide when image size, resolution, or output workflow is the decision. Use ChatGPT Images 2.0 when the reader needs the product and route map rather than quota troubleshooting.

Keeping those jobs apart makes the limit answer sharper. Free/unlimited route checks can audit risky wrapper promises. Cheap-provider comparisons can handle route cost. 4K workflow decisions can focus on size and output control. Usage-limit troubleshooting should keep returning to owner, bucket, live source, and recovery.

FAQ

What is the official GPT Image 2 API usage limit?

For gpt-image-2 on the direct OpenAI API, the official model table checked on May 5, 2026 lists Free as not supported, Tier 1 at 100,000 TPM / 5 IPM, Tier 2 at 250,000 TPM / 20 IPM, Tier 3 at 800,000 TPM / 50 IPM, Tier 4 at 3,000,000 TPM / 150 IPM, and Tier 5 at 8,000,000 TPM / 250 IPM. Your org/project dashboard and response headers can still be tighter.

How many GPT Image 2 images can I create in ChatGPT?

Use the current ChatGPT app message and official plan wording. Public OpenAI Help and pricing surfaces describe image availability by plan, but exact app-side image counts can vary by account, feature, region, system conditions, and temporary restrictions. Do not treat third-party exact counts as official.

Does the API limit reset every day?

Not as one universal daily image counter. API rate limits are measured through buckets such as TPM, IPM, RPM, RPD, and TPD, at project/org scope. The reset behavior depends on the exhausted bucket and the response headers. Monthly usage limits are billing ceilings, not daily image resets.
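If a response reports its reset as a short duration string, a small parser can turn it into seconds for a scheduler. This is a sketch under assumptions: it expects values shaped like `1s`, `6m0s`, or `250ms`, and both that format and whichever header carries it for a given model should be confirmed from a live response. The function name is hypothetical.

```python
import re

# One numeric part followed by a unit; "ms" is listed first so that
# "250ms" is not read as minutes followed by a stray "s".
_PART = re.compile(r"(\d+(?:\.\d+)?)(ms|h|m|s)")
_UNIT_SECONDS = {"h": 3600.0, "m": 60.0, "s": 1.0, "ms": 0.001}


def parse_reset(value: str) -> float:
    """Convert a duration string such as '6m0s' into seconds."""
    value = value.strip()
    parts = _PART.findall(value)
    # Reject anything that is not made up entirely of number+unit parts.
    if not parts or "".join(n + u for n, u in parts) != value:
        raise ValueError(f"unrecognized reset duration: {value!r}")
    return sum(float(n) * _UNIT_SECONDS[u] for n, u in parts)


print(parse_reset("6m0s"))  # 360.0
print(parse_reset("1s"))    # 1.0
```

Raising on an unrecognized value is deliberate: guessing a reset time would hide exactly the evidence the answer above says to read.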

Why do I get a 429 if I am below the monthly limit?

Because monthly usage and per-window throughput are different. You can have monthly budget left and still exceed IPM, TPM, RPM, or another live bucket. Read the error body and headers before changing billing or rerouting traffic.

Why do I get a quota or billing error if my RPM looks fine?

Because the exhausted bucket may be monthly usage, project billing, organization verification, or model access rather than requests per minute. Stop retries and inspect Usage, Billing, Limits, project ownership, and model access.

Can I bypass ChatGPT image limits with the API?

No. The API is a separate developer contract with billing, limits, verification, logging, and support responsibilities. Use it when you need a product API route, not as a bypass for a consumer app cap.

Are Azure GPT Image 2 limits the same as OpenAI API limits?

No. Azure OpenAI quotas are owned by Microsoft and depend on Azure subscription, region, deployment, and quota settings. Use Azure portal and Microsoft Learn for Azure limits, and OpenAI docs for direct OpenAI API limits.

Do provider credits increase my OpenAI API tier?

No. Provider credits belong to the provider route. They can help evaluation or routing, but they do not change your direct OpenAI organization/project tier. Traffic that a provider carries runs under that provider's own documented contract, which is separate from your direct OpenAI limits.

What should I do first when GPT Image 2 stops working?

Identify the owner and bucket. For ChatGPT, read the app message. For OpenAI API, read the response body, headers, Limits dashboard, usage, billing, model access, and project/org scope. For Azure or providers, use that owner dashboard. Then wait, reduce pressure, raise quota, or reroute only when the live source supports that move.