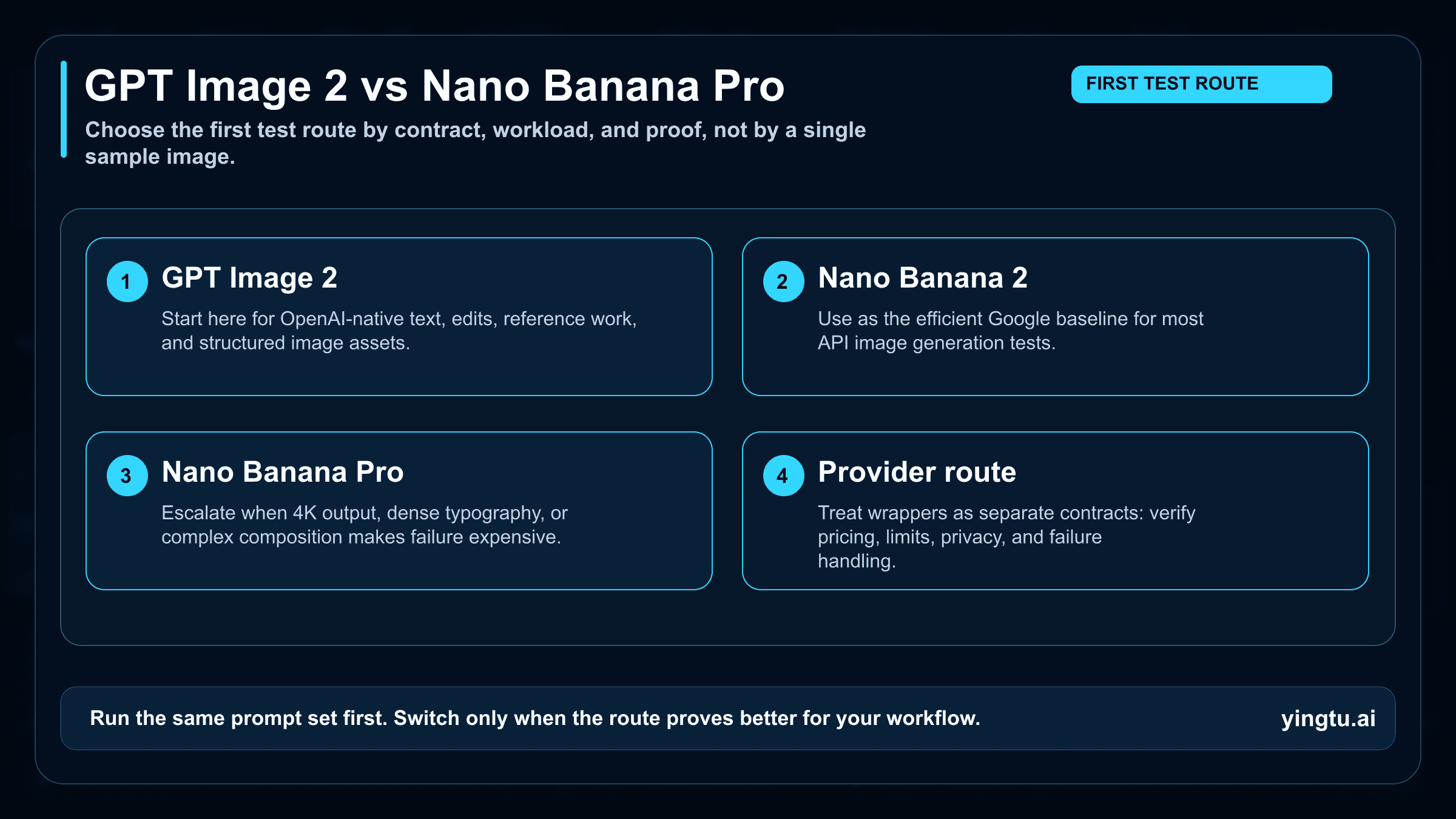

GPT Image 2 is the first route to test when your workflow depends on OpenAI-native image generation, text-heavy layouts, edits, or structured assets. Nano Banana 2 is the efficient Google lane to try before paying for Pro on most API jobs. Nano Banana Pro belongs in the test plan when 4K output, dense typography, complex composition, or failure cost makes the premium lane worth proving.

The useful question is not which model wins every prompt. It is which route should earn your first controlled test, which official contract you are using, and what proof is enough before you change a production pipeline.

| Route | Test first when | Hold back when |
|---|---|---|
| GPT Image 2 | You want OpenAI-native text, edit, reference, or structured image work through the Image API. | Your workflow is already Google-side and Nano Banana 2 meets the quality bar. |
| Nano Banana 2 | You need an efficient Google API default for broad image generation tests. | You specifically need Pro-level 4K, dense text, or complex composition. |
| Nano Banana Pro | The job has premium output requirements, hard typography, 4K delivery, or high failure cost. | Nano Banana 2 passes the same prompt set with acceptable retries. |
| Optional provider route | You need access convenience or multi-model routing. | You have not verified provider terms, limits, privacy, and failure handling. |

Run the same six prompts before you switch: a dense text poster, a product shot, a reference edit, a diagram or UI board, a 4K hero image, and a multilingual copy test. If one route does not beat your current baseline on the prompts that matter, keep your current pipeline.

Start with the route, not the brand

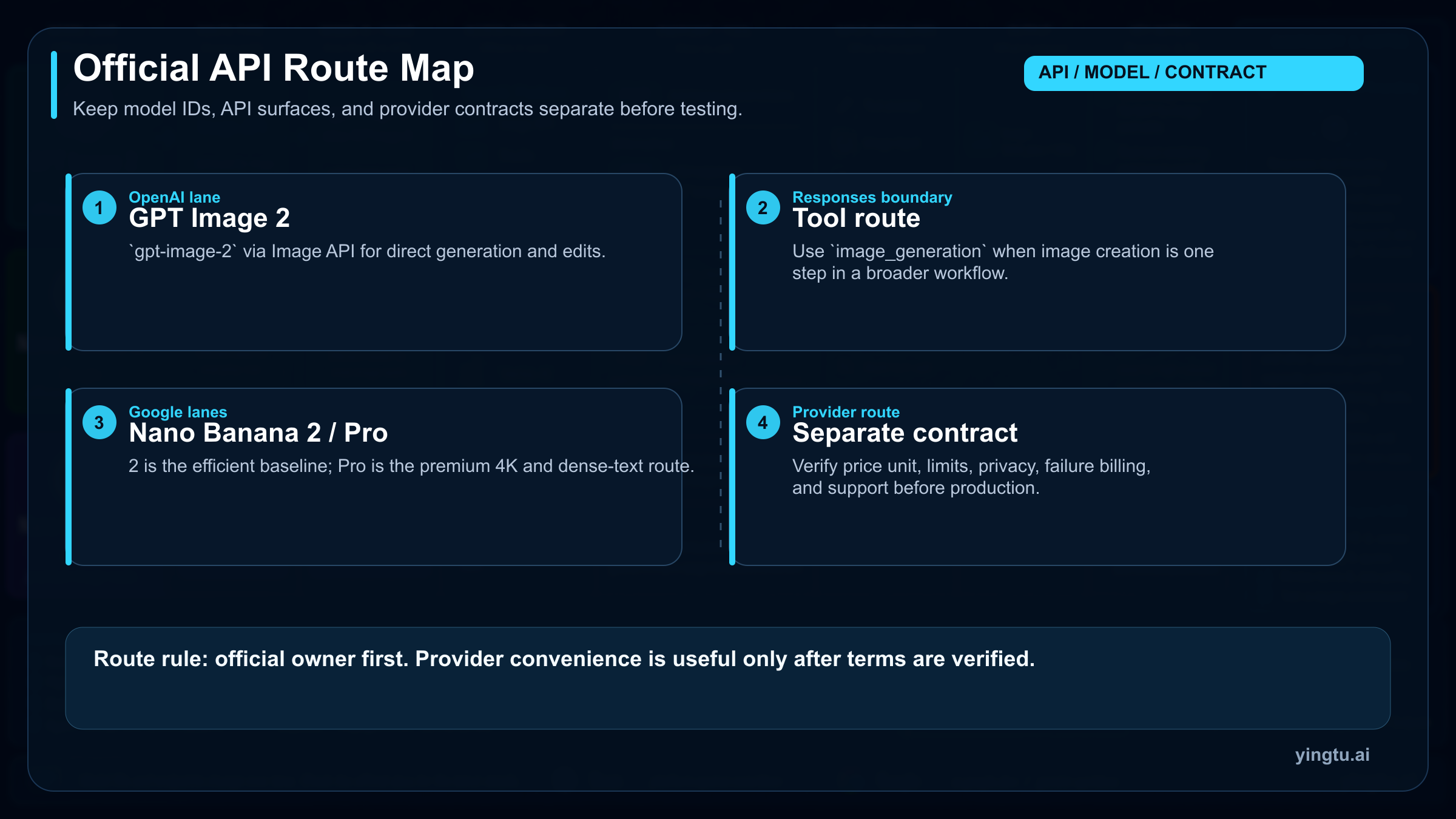

The first practical split is the contract owner. OpenAI's official model page lists gpt-image-2 as the model ID for GPT Image 2, and OpenAI's image generation documentation shows the Image API as the direct route for generation and edits with that model. Use that route when your existing stack already depends on OpenAI tooling, when you want structured edits, or when you need one official owner for model behavior, billing, and support.

Google's side has two lanes, not one. Nano Banana 2 maps to gemini-3.1-flash-image-preview; Nano Banana Pro maps to gemini-3-pro-image-preview. Google positions Nano Banana 2 as the high-efficiency image route and Nano Banana Pro as the highest-quality image route for studio-grade output, 4K assets, complex layouts, and precise text rendering. That split changes the comparison: many API teams should test Nano Banana 2 before Pro, even when the public discussion focuses on the Pro label.
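To keep the contract owner visible in every test log, it can help to pin each route to its owner and model ID in one place. The following is a minimal Python sketch, assuming the model IDs named above are still current; `Route`, `ROUTES`, and `routes_for_owner` are hypothetical names for illustration, not part of any SDK.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    name: str
    owner: str        # who owns billing, terms, and support for this route
    api: str          # first-party API surface the route is tested through
    model_id: str
    positioning: str


# Model IDs as named in this article; re-check the official model pages before use.
ROUTES = {
    "gpt-image-2": Route("GPT Image 2", "OpenAI", "Image API", "gpt-image-2",
                         "OpenAI-native generation and edits"),
    "nano-banana-2": Route("Nano Banana 2", "Google", "Gemini API",
                           "gemini-3.1-flash-image-preview", "high-efficiency lane"),
    "nano-banana-pro": Route("Nano Banana Pro", "Google", "Gemini API",
                             "gemini-3-pro-image-preview", "premium, highest-quality lane"),
}


def routes_for_owner(owner: str) -> list[str]:
    """Route keys for one contract owner, in registry order (efficient lane first)."""
    return [key for key, route in ROUTES.items() if route.owner == owner]
```

Ordering the Google entries with Nano Banana 2 first encodes the "test the efficient lane before Pro" default.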

Image API access, Responses API access, and ChatGPT app access should not be collapsed into the same lane. In OpenAI's current docs, the Responses API uses an image_generation tool as part of a broader model workflow; the Image API is the direct image endpoint for GPT Image models. On Google's side, Gemini app experiences, AI Studio testing, and Gemini API model IDs also have different contracts. If a wrapper or provider offers a convenient unified endpoint, treat that as a separate access contract rather than proof of OpenAI or Google first-party terms.
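The difference between these contracts shows up in where the model ID goes. The sketch below only builds request bodies (no network calls) so the shapes can be compared side by side. The endpoint paths and field names reflect our reading of the public OpenAI and Gemini REST docs and should be verified before use; `text_model` is a placeholder for whichever mainline model OpenAI's Responses docs list, and size or aspect-ratio fields for Gemini are left out because they vary by model.

```python
def image_api_request(prompt: str, size: str = "1024x1024", quality: str = "medium") -> dict:
    """Direct Image API call: the GPT Image model ID goes in `model`."""
    return {
        "url": "https://api.openai.com/v1/images/generations",
        "body": {"model": "gpt-image-2", "prompt": prompt, "size": size, "quality": quality},
    }


def responses_api_request(prompt: str, text_model: str) -> dict:
    """Responses API: a text model runs the workflow and calls image generation as a tool."""
    return {
        "url": "https://api.openai.com/v1/responses",
        "body": {"model": text_model, "input": prompt, "tools": [{"type": "image_generation"}]},
    }


def gemini_request(prompt: str, model_id: str) -> dict:
    """Gemini API generateContent call: the model ID is part of the URL path."""
    return {
        "url": f"https://generativelanguage.googleapis.com/v1beta/models/{model_id}:generateContent",
        "body": {
            "contents": [{"parts": [{"text": prompt}]}],
            "generationConfig": {"responseModalities": ["TEXT", "IMAGE"]},
        },
    }
```

Note that gpt-image-2 never appears as the Responses `model` value here, which matches the caution in the FAQ below.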

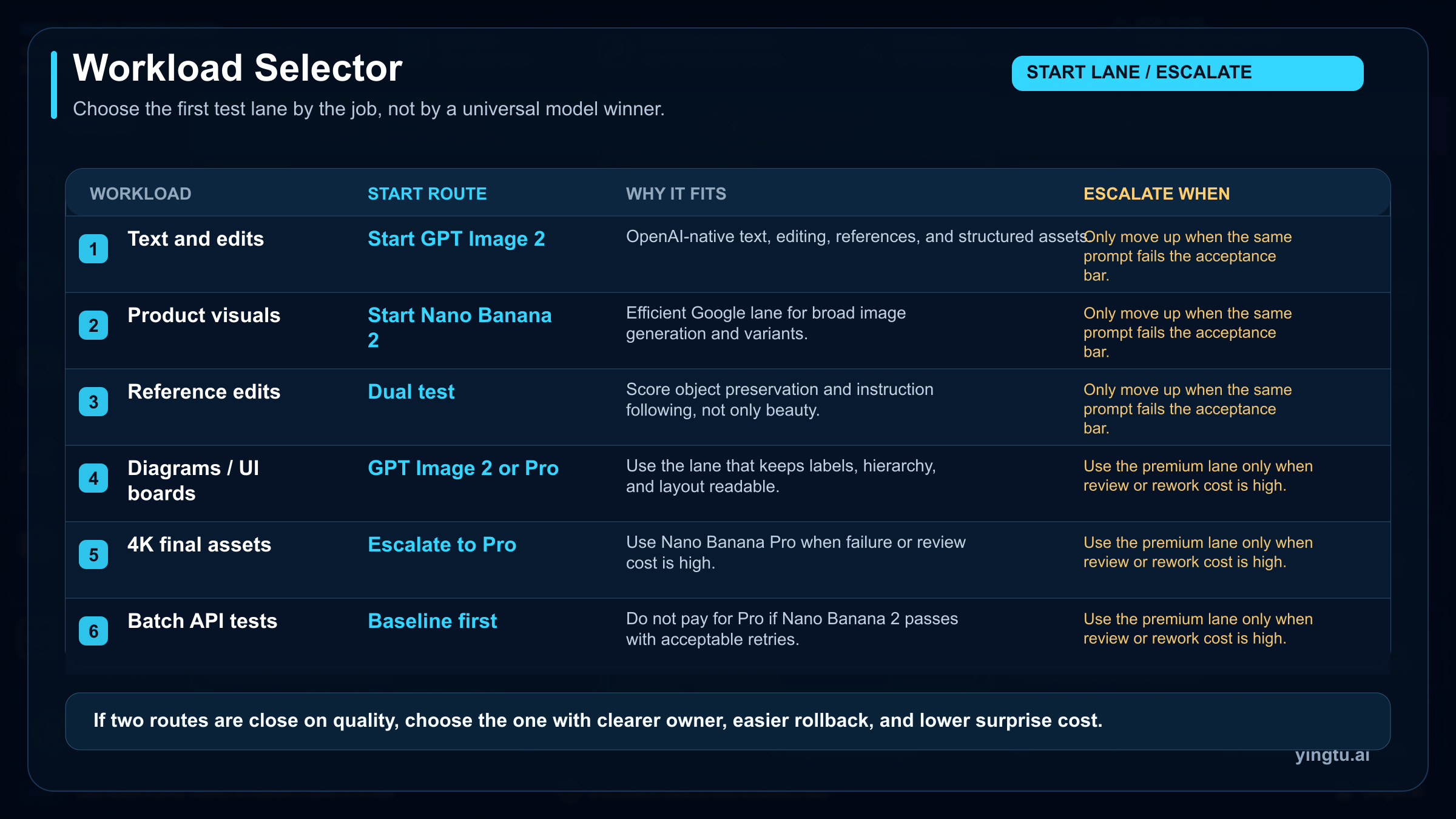

The workload matrix decides the first test

Text-heavy layouts are the strongest reason to test GPT Image 2 early when your stack is already OpenAI-native. Poster copy, annotated diagrams, UI boards, product callouts, and reference-guided edits are all places where the output has to survive review as a design object, not just as an attractive image. The right proof is a prompt set with real copy and real constraints, then a review of spelling, hierarchy, object placement, and editability.

Nano Banana 2 deserves the first Google-side test when the job is broad image generation, high-volume creative variants, ordinary product visuals, or internal experimentation where efficiency matters more than Pro-level polish. If Nano Banana 2 gets acceptable results with a modest retry budget, moving straight to Pro can add cost without changing the business outcome.

Nano Banana Pro becomes the better first test when the workload is expensive to retry or hard to approve manually: 4K hero images, dense multilingual typography, complex product scenes, high-fidelity reference work, or brand assets where a small composition error creates rework. Pro is not a moral upgrade over Nano Banana 2. It is a premium lane that should earn its place by reducing failure cost on the jobs where failure is expensive.

For reference edits, separate "looks close" from "preserves the instruction." A model that makes a beautiful new image but loses the reference object, label, pose, or background constraint may be worse than a less dramatic model that follows the edit. For product work, score consistency across several prompts, not the best single output. For multilingual copy, include at least one non-English text prompt in the proof run, because an English poster result does not prove Japanese, Korean, Chinese, Russian, or Spanish layout behavior.
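Scoring consistency rather than the best single output can be as simple as reporting the worst case and pass rate next to the best case. A minimal sketch, assuming reviewers give each prompt a score on a 0-1 scale and you pick an acceptance bar; `consistency_summary` is a hypothetical helper.

```python
from statistics import mean


def consistency_summary(scores: list[float], bar: float) -> dict:
    """Summarize one route's per-prompt review scores (assumed 0-1 scale)."""
    return {
        "best": max(scores),     # what a public side-by-side usually shows
        "mean": mean(scores),
        "worst": min(scores),    # what your reviewers will eventually hit
        "pass_rate": sum(s >= bar for s in scores) / len(scores),
    }
```

For example, a route scoring `[0.95, 0.4, 0.5, 0.45]` has the best single output but passes only a quarter of prompts at a 0.7 bar, while a route scoring `[0.75, 0.72, 0.7, 0.74]` passes all of them.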

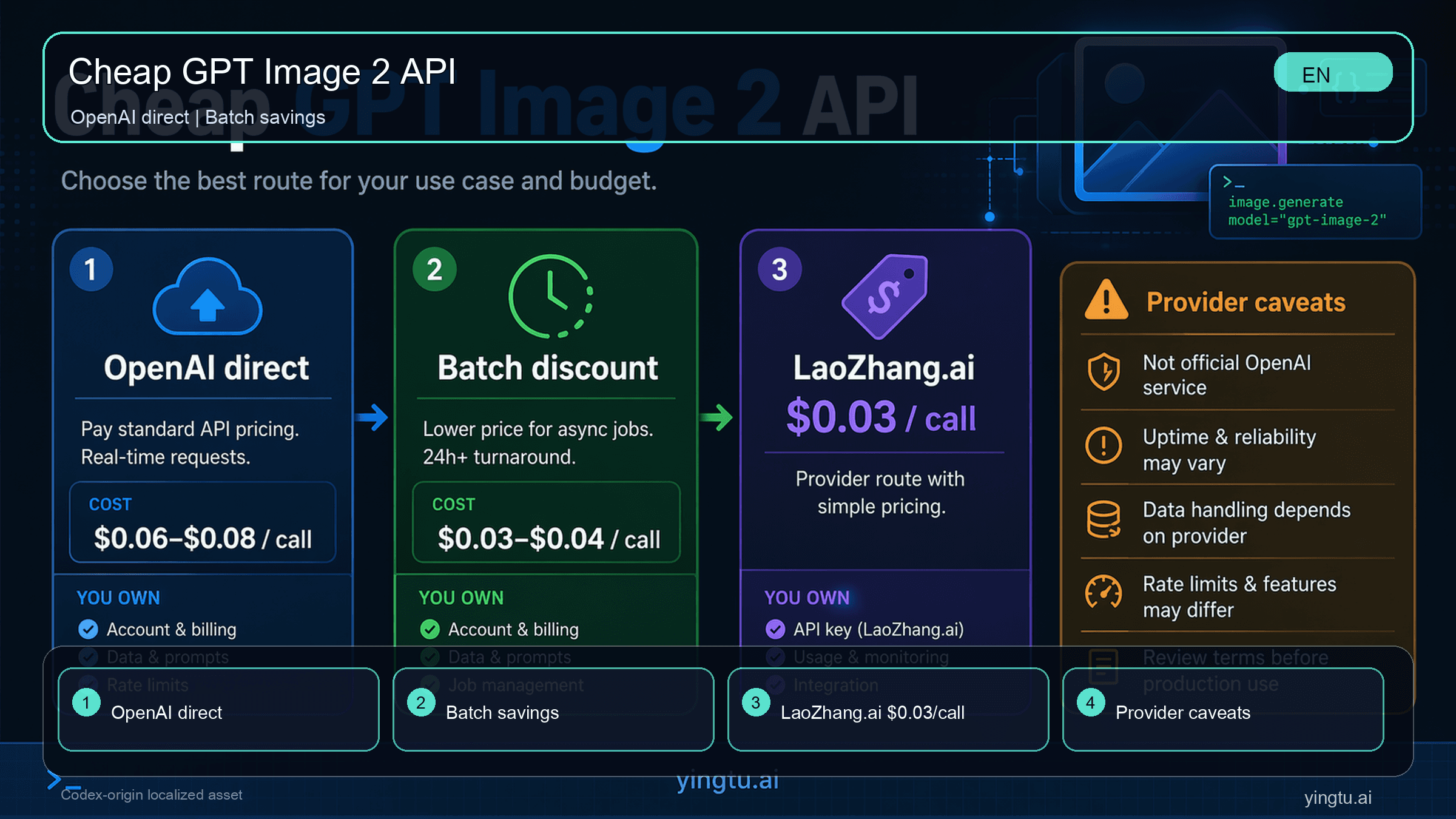

Price comparison only works with visible owners

As of April 25, 2026, OpenAI's GPT Image 2 cost examples show that price can move sharply by output size and quality. The official examples list a 1024x1024 output at about $0.006 on low quality, $0.053 on medium quality, and $0.211 on high quality. A 1024x1536 or 1536x1024 output is shown at about $0.005, $0.041, and $0.165 for low, medium, and high quality. Those examples are not a flat per-image promise; image inputs, text inputs, output tokens, quality, edits, retries, and caching can change the final bill.

Google's developer pricing table needs the same owner label. As checked on April 25, 2026, the Google API pricing table lists Nano Banana Pro with no Free Tier in the developer API. It lists standard output at $0.134 for 1K or 2K and $0.24 for 4K, with Batch/Flex output at $0.067 for 1K or 2K and $0.12 for 4K. The same table lists Nano Banana 2 standard output at $0.045 for 0.5K, $0.067 for 1K, $0.101 for 2K, and $0.151 for 4K.

The comparison changes when the workload changes. A low-quality GPT Image 2 output can cost far less than a flat per-image estimate would suggest, while a high-quality OpenAI output can cost more than a Nano Banana 2 output at some sizes. Nano Banana Pro can be cheaper than a high-quality OpenAI example for a single prompt and still be the wrong route if your workflow needs OpenAI-native edits, Responses orchestration, or a particular output style. Cost is a route constraint, not the whole decision.
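Because retries multiply the output price, a cost per accepted asset is usually more useful than a list price. A minimal sketch using a subset of the example output prices quoted above (as checked on April 25, 2026); `PRICES` and `cost_per_accepted_asset` are hypothetical names, and the estimate deliberately ignores input tokens, image inputs, edits, and caching.

```python
# Subset of the example per-image output prices (USD) quoted in this article.
# Keys are (route, size, tier); verify against the official pricing pages before use.
PRICES = {
    ("gpt-image-2", "1024x1024", "low"): 0.006,
    ("gpt-image-2", "1024x1024", "medium"): 0.053,
    ("gpt-image-2", "1024x1024", "high"): 0.211,
    ("nano-banana-2", "1K", "standard"): 0.067,
    ("nano-banana-2", "4K", "standard"): 0.151,
    ("nano-banana-pro", "1K", "standard"): 0.134,
    ("nano-banana-pro", "4K", "standard"): 0.24,
    ("nano-banana-pro", "4K", "batch"): 0.12,
}


def cost_per_accepted_asset(route: str, size: str, tier: str, attempts_per_accept: float) -> float:
    """Output price times the average number of generations needed per accepted asset."""
    return PRICES[(route, size, tier)] * attempts_per_accept
```

With these inputs, a Nano Banana 2 1K output that needs three attempts per accepted asset (about $0.201) still costs slightly less than a high-quality 1024x1024 GPT Image 2 output accepted on the first try ($0.211), while four attempts (about $0.268) cost more than a first-try Nano Banana Pro 1K output ($0.134).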

For deeper Google-side quotas and pricing boundaries, keep a separate reference like the Nano Banana Pro pricing and quota guide open while you test. For OpenAI-specific cheap-route testing, a separate GPT Image 2 API price route guide is useful only when it clearly labels provider pricing as provider-owned. Do not mix provider prices, wrapper credits, or marketplace claims into an official OpenAI or Google price row.

Run a proof matrix before switching production

The proof set should be small enough to run repeatedly and hard enough to expose the failure modes that matter. Use the same prompt text, the same reference assets, and the same output targets for every route. Store the prompt, settings, seed or run metadata when available, output images, retry count, and final accepted asset. A public side-by-side screenshot can inspire a test, but it cannot replace your own controlled run.
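One way to store those fields is an append-only JSON Lines log with one record per route and prompt. A minimal sketch; `ProofRun` and `to_jsonl` are hypothetical names, and the `settings` dictionary is free-form because each route exposes different parameters.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Optional


@dataclass
class ProofRun:
    route: str
    prompt_id: str
    prompt: str
    settings: dict                                    # size, quality, aspect ratio, and so on
    seed: Optional[int] = None                        # only when the route exposes one
    outputs: list[str] = field(default_factory=list)  # paths or URLs of generated images
    retries: int = 0
    accepted_asset: Optional[str] = None              # None if nothing passed review


def to_jsonl(runs: list[ProofRun]) -> str:
    """One JSON object per line, so runs can be appended and diffed across test rounds."""
    return "\n".join(json.dumps(asdict(run), sort_keys=True) for run in runs)
```

Keeping the exact prompt text in each record makes it easy to confirm later that every route really saw the same input.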

Use six prompts as a baseline:

| Proof prompt | What it should reveal | Default route to include |
|---|---|---|
| Dense text poster | Spelling, layout, hierarchy, and brand-safe typography | GPT Image 2 and Nano Banana Pro |
| Product shot | Realism, object consistency, lighting, and controllability | Nano Banana 2 and Nano Banana Pro |
| Reference edit | Whether the route preserves the source object and edit instruction | GPT Image 2 plus the relevant Google lane |
| Diagram or UI board | Structured composition, labels, and clean visual hierarchy | GPT Image 2 and Nano Banana Pro |
| 4K hero image | Resolution, detail stability, and final-asset polish | Nano Banana Pro plus one baseline |
| Multilingual copy test | Non-English text accuracy and layout behavior | All routes still under consideration |
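The table can also drive the run plan directly. A sketch, assuming short route keys such as gpt-image-2, nano-banana-2, and nano-banana-pro; the tokens `GOOGLE_LANE`, `BASELINE`, and `ALL` are hypothetical stand-ins for "the relevant Google lane", "one baseline", and "all routes still under consideration".

```python
# Routes each proof prompt should include, mirroring the table above.
# Placeholder tokens are resolved per test campaign.
PROOF_MATRIX = {
    "dense_text_poster": {"gpt-image-2", "nano-banana-pro"},
    "product_shot": {"nano-banana-2", "nano-banana-pro"},
    "reference_edit": {"gpt-image-2", "GOOGLE_LANE"},
    "diagram_or_ui_board": {"gpt-image-2", "nano-banana-pro"},
    "hero_4k": {"nano-banana-pro", "BASELINE"},
    "multilingual_copy": {"ALL"},
}


def plan_runs(candidates: set[str], google_lane: str, baseline: str) -> list[tuple[str, str]]:
    """Expand the matrix into (prompt_id, route) pairs, keeping only routes still in play."""
    placeholders = {"GOOGLE_LANE": {google_lane}, "BASELINE": {baseline}, "ALL": candidates}
    plan = []
    for prompt_id, routes in PROOF_MATRIX.items():
        resolved: set[str] = set()
        for route in routes:
            resolved |= placeholders.get(route, {route})
        # Dropping routes that are no longer candidates keeps reruns cheap.
        plan.extend((prompt_id, route) for route in sorted(resolved & candidates))
    return plan
```

If Nano Banana Pro has already been ruled out, for example, it simply disappears from every row instead of needing a separate matrix.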

Score each output on task fit, instruction following, text accuracy, reference preservation, retry count, and final cost. The winning route is the one that meets the acceptance bar with the least operational friction for your exact workload. If two routes are close on quality, choose the one with the clearer contract owner, easier rollback, and lower surprise cost.
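The scoring and tie-break rule can be written down so every review round applies it the same way. A minimal sketch, assuming per-criterion scores on a 0-1 scale, a route must clear the bar on every quality criterion, and "close on quality" means within a chosen margin of the best mean score; `RouteResult`, `quality`, `pick_winner`, and the 1-3 `contract_clarity` rating are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

CRITERIA = ("task_fit", "instruction_following", "text_accuracy", "reference_preservation")


@dataclass
class RouteResult:
    route: str
    scores: dict[str, float]   # per-criterion score on an assumed 0-1 scale
    retries: int
    total_cost: float          # USD for the whole proof run, retries included
    contract_clarity: int      # hypothetical 1-3 rating: owner, rollback, surprise-cost risk


def quality(result: RouteResult) -> float:
    """Mean score across the quality criteria."""
    return sum(result.scores[c] for c in CRITERIA) / len(CRITERIA)


def pick_winner(results: list[RouteResult], bar: float, close: float = 0.05) -> Optional[str]:
    """Return the winning route key, or None if no route meets the acceptance bar."""
    # A route must clear the bar on every criterion, not just on average.
    passing = [r for r in results if all(r.scores[c] >= bar for c in CRITERIA)]
    if not passing:
        return None
    top = max(quality(r) for r in passing)
    # Routes within `close` of the best mean quality are treated as tied on quality.
    contenders = [r for r in passing if top - quality(r) <= close]
    # Tie-break: clearer contract first, then lower cost, then fewer retries.
    best = min(contenders, key=lambda r: (-r.contract_clarity, r.total_cost, r.retries))
    return best.route
```

A `None` result is the "keep your current pipeline" outcome: no candidate met the bar, so nothing should switch.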

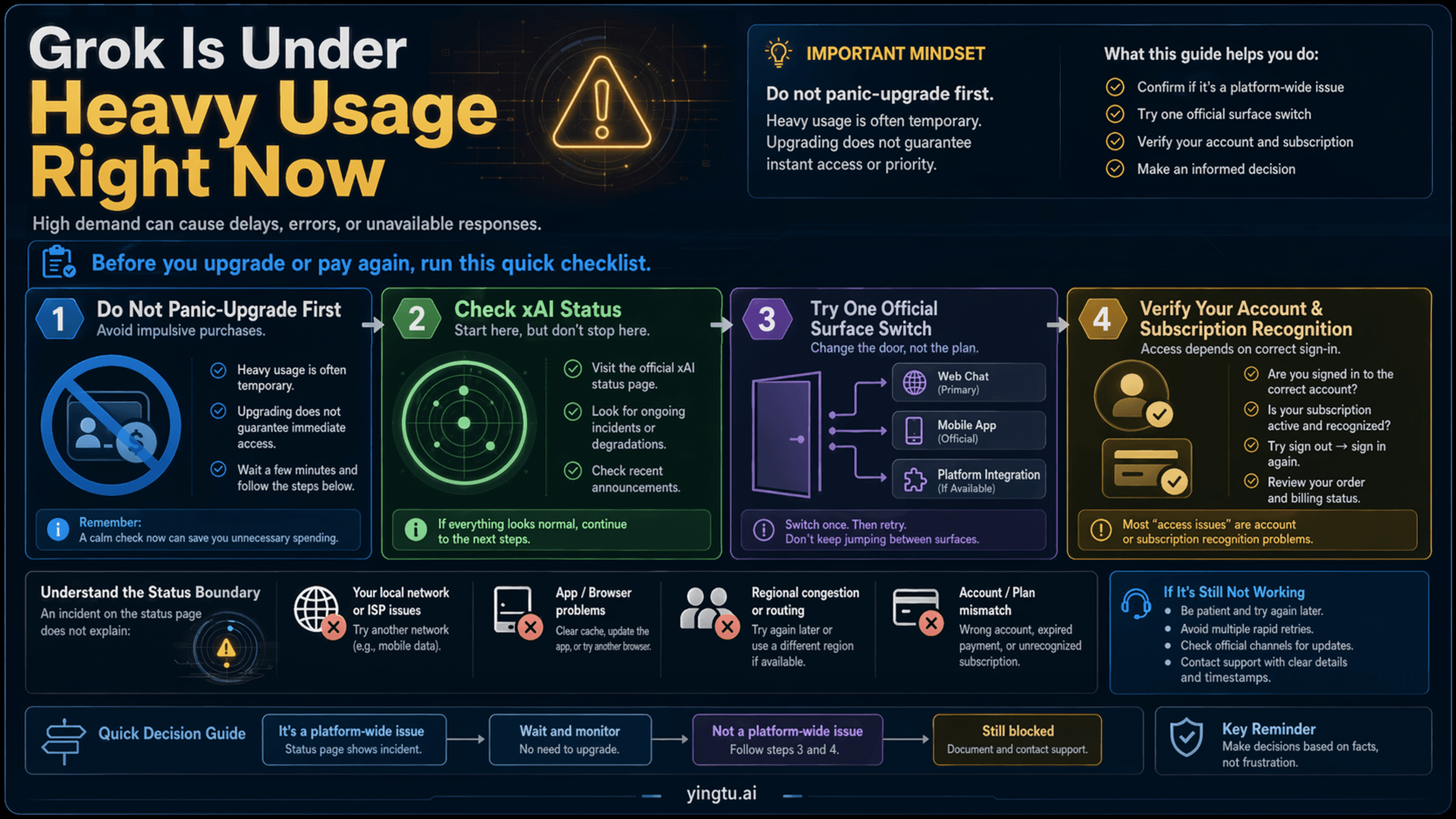

Stop rules for each route

Stop using GPT Image 2 as the first route when the workflow is already Google-side, the prompt set is mostly general image generation, and Nano Banana 2 passes with fewer retries or a lower total cost. Keep GPT Image 2 in the plan when OpenAI-native edits, structured image workflows, Responses orchestration, or direct OpenAI accountability are part of the product.

Stop using Nano Banana 2 as the default when the proof set repeatedly fails on 4K detail, dense text, complex layout, or high-fidelity reference requirements. That is the point where Nano Banana Pro has a concrete job. Do not upgrade to Pro merely because a public comparison says it looks better; upgrade when it reduces the failures your reviewers actually reject.

Stop using Nano Banana Pro as the default when Nano Banana 2 passes the same prompt set with acceptable retries, or when the official route cost and latency do not fit the workload. Pro is the better route only when its output quality or lower failure cost changes the final decision.
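The three stop rules above reduce to a couple of small decisions. A minimal sketch, assuming "acceptable retries" is measured as a pass rate (the share of proof prompts accepted within your retry budget) and that cost and latency fit is a yes/no judgment you make separately; the function names and boolean inputs are hypothetical.

```python
def keep_gpt_image_2_first(google_side_stack: bool, mostly_general_generation: bool,
                           nb2_wins_on_retries_or_cost: bool, needs_openai_native: bool) -> bool:
    """Stop rule for GPT Image 2 as the first route to test."""
    if needs_openai_native:
        # OpenAI-native edits, structured workflows, Responses orchestration, or accountability.
        return True
    return not (google_side_stack and mostly_general_generation and nb2_wins_on_retries_or_cost)


def google_default(nb2_pass_rate: float, pro_pass_rate: float,
                   required_pass_rate: float, pro_fits_cost_and_latency: bool) -> str:
    """Pick the Google-side default lane from proof-set pass rates."""
    if nb2_pass_rate >= required_pass_rate:
        return "nano-banana-2"            # Pro would add cost without changing the outcome
    if pro_pass_rate >= required_pass_rate and pro_fits_cost_and_latency:
        return "nano-banana-pro"          # Pro fixes failures reviewers actually reject
    return "keep-current-pipeline"        # neither lane clears the bar at acceptable cost
```

Checking Nano Banana 2 first mirrors the article's position that Pro is a premium override rather than the automatic Google default.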

Stop using an optional provider route when terms are unclear. Before production, verify the price unit, output count, failure charging, rate limits, privacy terms, support path, and fallback plan. Convenience is valuable in testing, but production risk belongs in the same scorecard as visual quality.
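The provider checklist works best as an explicit gate that lists what is still unverified. A minimal sketch; the check names paraphrase the list above, and `provider_gaps` is a hypothetical helper.

```python
# Items to verify before an optional provider route goes to production.
PROVIDER_CHECKS = (
    "price_unit", "output_count", "failure_charging", "rate_limits",
    "privacy_terms", "support_path", "fallback_plan",
)


def provider_gaps(verified: dict[str, bool]) -> list[str]:
    """Return checks that are missing or unverified; an empty list means the gate passes."""
    return [check for check in PROVIDER_CHECKS if not verified.get(check, False)]
```

Treating a missing entry as unverified means a checklist nobody filled in fails the gate instead of silently passing.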

FAQ

Is GPT Image 2 better than Nano Banana Pro?

It depends on the workload. GPT Image 2 is the better first test for OpenAI-native image generation, edits, structured assets, and workflows that already use OpenAI APIs. Nano Banana Pro is the better first test when the job needs premium Google-side output, 4K delivery, dense typography, complex composition, or lower failure cost. Use the same prompt matrix before making a production switch.

Where does Nano Banana 2 fit if the comparison is framed around Nano Banana Pro?

Nano Banana 2 should stay in the decision. It maps to Google's gemini-3.1-flash-image-preview and is positioned as the efficient image lane. For many API jobs, Nano Banana 2 is the Google route to test before Pro. Nano Banana Pro is the premium override, not the automatic Google default.

Can I call GPT Image 2 through Responses API?

Use the Image API when you want the direct GPT Image route with model: "gpt-image-2". Use the Responses API when image generation is part of a conversational or multi-step workflow that uses the image_generation tool. Do not treat GPT Image model IDs as the main Responses model value unless OpenAI's docs explicitly describe that route.

Is Nano Banana Pro free in the Gemini API?

Google's developer API pricing table, as checked on April 25, 2026, lists Nano Banana Pro with no Free Tier. Consumer app access, AI Studio testing behavior, wrapper credits, and provider promotions can have different rules. Keep those contracts separate from the official developer API price table.

Should I use a provider or wrapper instead of official OpenAI or Google APIs?

Use a provider route only when it solves a real access, routing, or testing problem and you can verify the terms. For production, compare provider convenience against official support, privacy, failure charging, rate limits, output quality, and fallback options. If the provider cannot answer those questions, keep the official route as the production baseline.