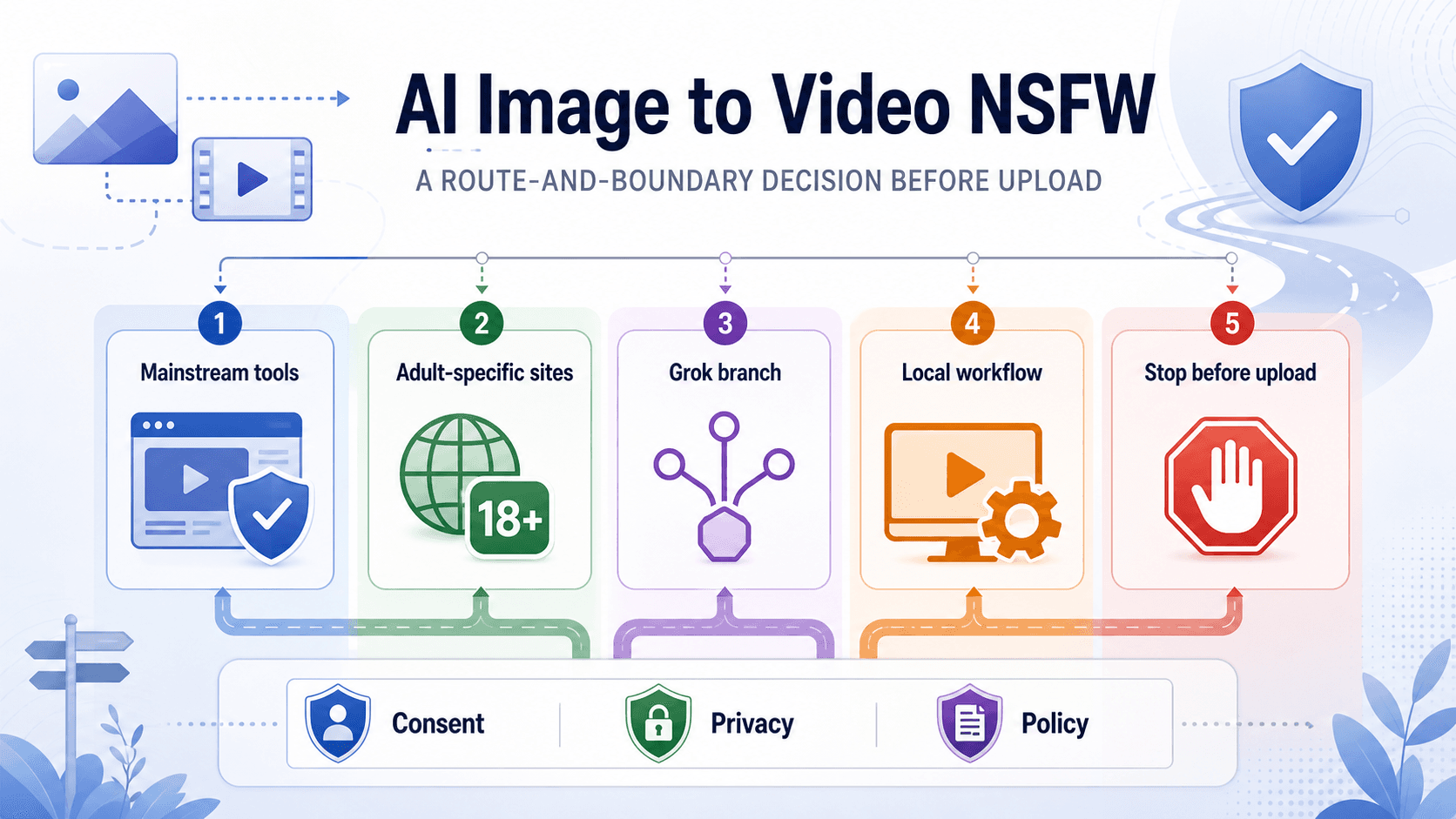

As of May 8, 2026, NSFW AI image-to-video work is not a single tool choice. Mainstream image-to-video tools usually restrict explicit or sensitive jobs, while adult-specific sites may advertise fewer filters but call for stricter consent, privacy, and policy checks before any upload. Start with the source image, not the generator, because an uploaded photo can turn a tool test into a real-person, privacy, or non-consensual intimate imagery (NCII) problem.

| Route | Best fit | What can break | Check before upload |
|---|---|---|---|
| Mainstream image-to-video tools | SFW animation, product demos, avatars, motion tests | Explicit or sensitive jobs may be rejected by policy filters | Current usage policy, account risk, and whether a non-NSFW version solves the job |
| Adult-specific image-to-video sites | Lawful fictional or fully consented adult creative work | Privacy, storage, payment, public-gallery, and "no filters" claims may be weak | Terms, deletion controls, source-image handling, support path, and proof of consent |
| Grok Imagine branch | Questions about Grok, Spicy Mode, or xAI policy | Consumer access and policy boundaries are separate from general image-to-video tools | Use the Grok access and xAI policy guides instead of treating Grok as this whole topic |
| Local or open-source workflow | Experiments where you control files and infrastructure | Local control does not erase consent, legal, host, or model-card duties | Rights, age, identity, host rules, and whether the setup is actually safer |
| Stop before upload | Real person without explicit consent, minors, private/intimate images, harassment, NCII, or safeguard bypasses | The request is unsafe or unlawful, not merely unsupported | Do not generate; preserve evidence and use platform or legal reporting paths if harm already exists |

Treat "uncensored", "private", "free", "no filters", and "unlimited" as claims to verify, not facts to trust. A useful route guide should help you choose a lawful path, understand why a tool blocks a request, and stop when the image or intended output crosses consent, child-safety, privacy, or safeguard boundaries.

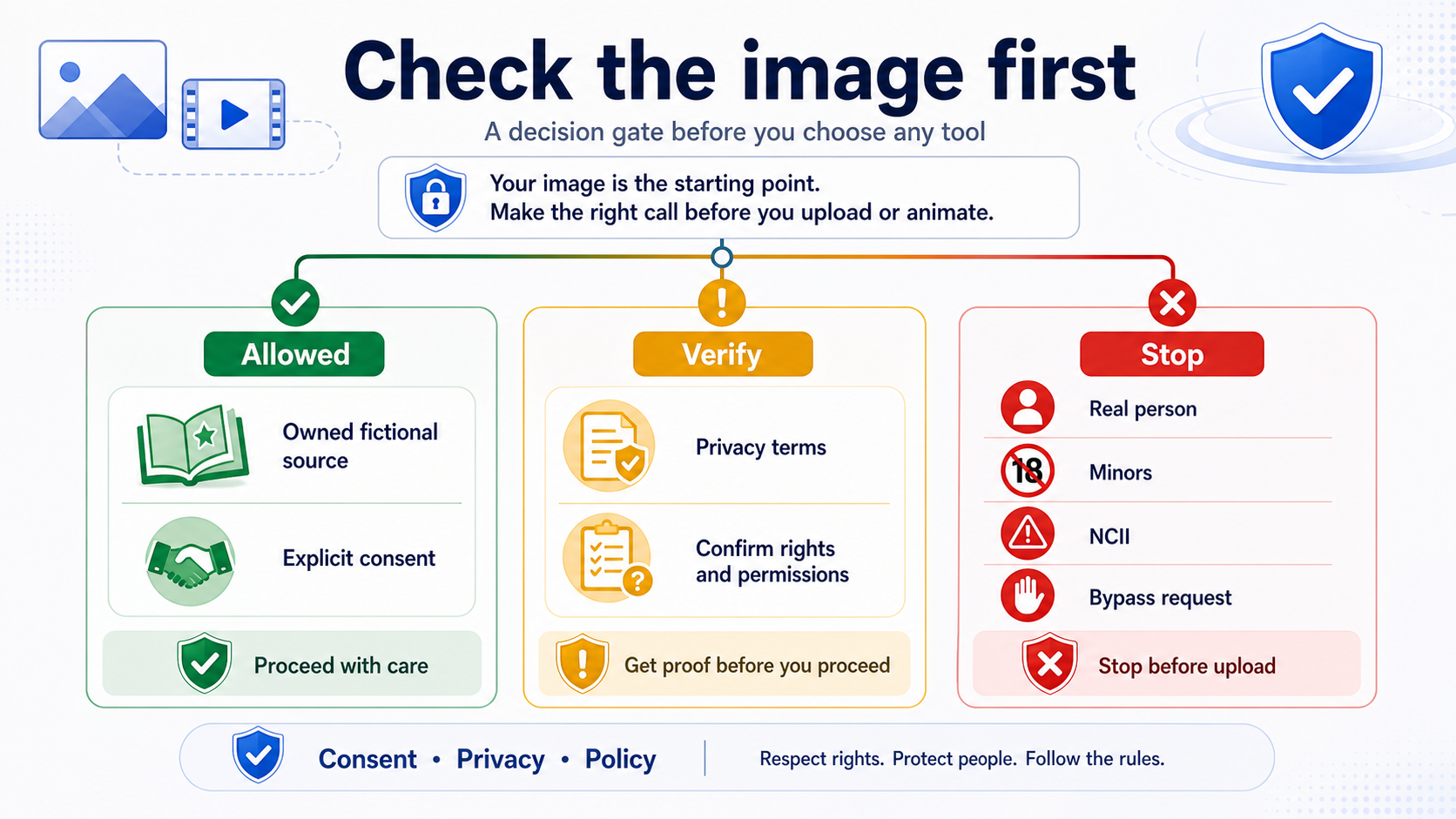

Check the Image Before You Check the Tool

The first decision is whether the image can be used at all. A fictional character, your own non-identifying artwork, or a fully consented adult source is a different case from a photograph of a real person, a private image, a celebrity, a coworker, or someone who never agreed to sexualized animation.

Use a simple gate before upload.

| Source image | Safer reading | Action |
|---|---|---|
| Your own fictional or AI-generated adult character | Lower identity risk, but still check the tool's terms and output rules | Continue only if the site allows the category and does not overclaim privacy |
| A model, performer, or partner with explicit consent for this exact use | Consent must be specific to generation, storage, editing, and distribution | Keep proof of consent and avoid routes with unclear deletion or reuse terms |
| A real person who did not consent | Likeness, privacy, publicity, harassment, and NCII risks converge | Stop before upload |
| A minor or age-ambiguous person | Child-safety boundary | Stop and use reporting channels if harmful content exists |
| A private or intimate image | NCII and privacy risk | Do not generate, upload, share, or test it |
| A request framed around getting around filters | Safeguard circumvention | Stop rather than rewording the request |

The important distinction is not whether the output would be "adult" in a broad sense. It is whether the source image creates rights and identity risk. Image-to-video is sharper than text-only generation because the uploaded image can carry a person's face, body, metadata, private context, or implied identity. If the source image fails the consent gate, changing tools does not fix the request.
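The gate can also be written down as a small decision function. The sketch below is illustrative only: the `SourceImage` fields and the `source_image_gate` name are assumptions made for this example, not part of any tool's API, and the answers still have to come from a person who actually knows the image's provenance.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SourceImage:
    """Hypothetical self-assessment of an image before any upload."""
    depicts_real_person: bool
    explicit_consent_for_this_use: bool
    minor_or_age_ambiguous: bool
    private_or_intimate: bool
    request_seeks_filter_bypass: bool


def source_image_gate(img: SourceImage) -> tuple[bool, str]:
    """Return (may_continue, reason). Hard stops are checked first."""
    if img.minor_or_age_ambiguous:
        return False, "child-safety boundary: stop and use reporting channels"
    if img.private_or_intimate:
        return False, "NCII and privacy risk: do not generate, upload, or share"
    if img.request_seeks_filter_bypass:
        return False, "safeguard circumvention: stop rather than reword"
    if img.depicts_real_person and not img.explicit_consent_for_this_use:
        return False, "real person without explicit consent: stop before upload"
    if img.depicts_real_person:
        return True, "keep proof of consent; check deletion and reuse terms"
    return True, "fictional source: still check the tool's terms and output rules"
```

Every stop condition returns before any "continue" branch, which mirrors the point above: switching tools never changes the result for an image that fails the gate.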

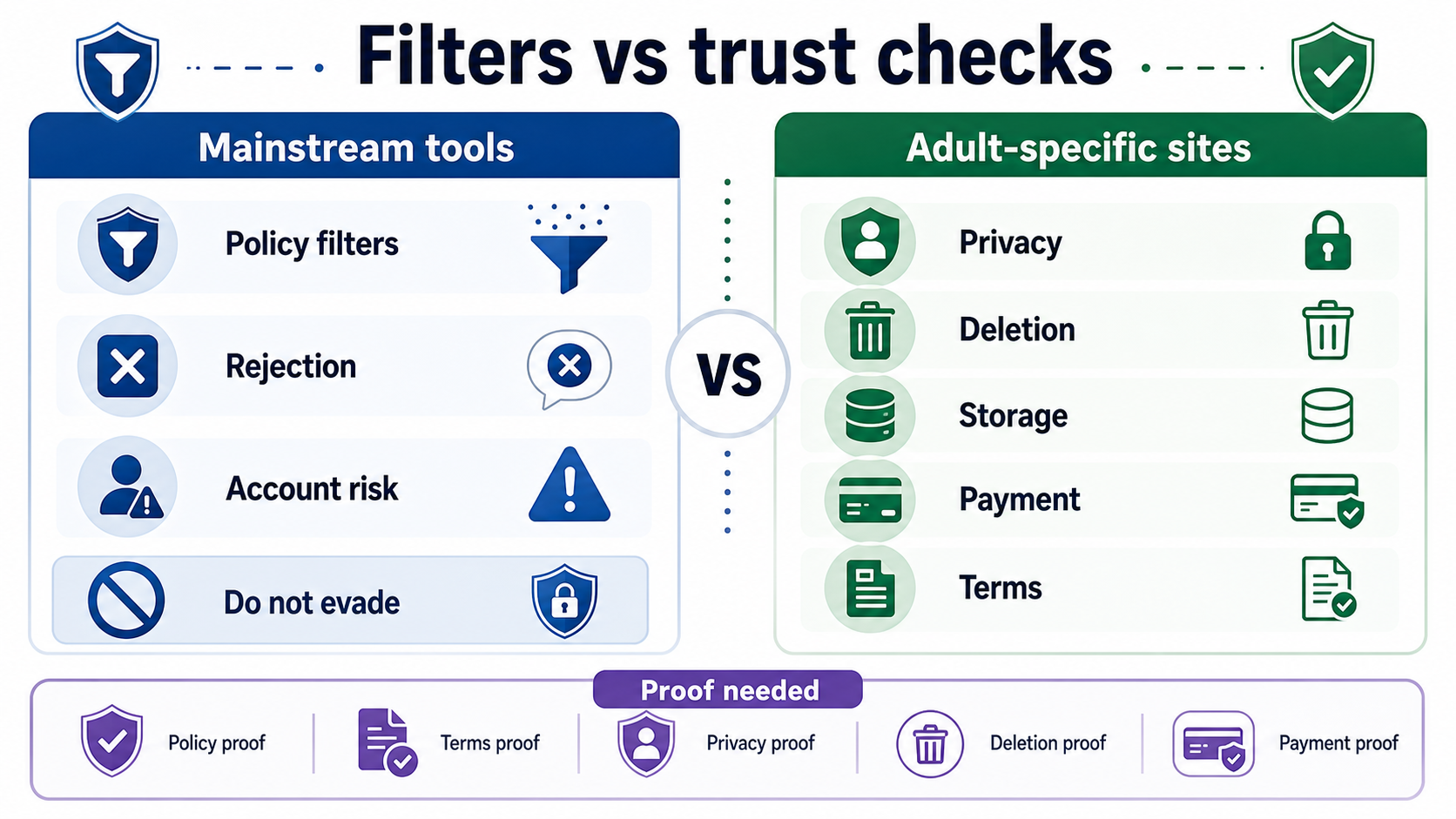

Why Mainstream Image-To-Video Tools Block NSFW Requests

Mainstream tools are built for broad consumer, creator, and business use. They may support image-to-video animation while still restricting explicit sexual content, non-consensual intimate imagery, child sexual abuse material, real-person likeness misuse, harassment, and attempts to bypass safeguards.

Runway's Usage Policy says it uses automated and human review and prohibits sexually explicit content, adult nudity, child sexual abuse content, attempts to create or modify non-consensual intimate imagery, and use of another person's image, video, or audio without permission. HeyGen's ethics page and moderation policy similarly make sexual content and consent boundaries visible for avatar and video generation.

The same pattern appears at model-provider level. OpenAI's usage policies prohibit sexual violence, non-consensual intimate content, child sexual exploitation, certain likeness misuse, and safeguard circumvention. Google's Generative AI prohibited-use policy prohibits content that facilitates child sexual abuse or exploitation, non-consensual intimate imagery, privacy or IP violations, safety-filter circumvention, and sexually explicit content for pornography or sexual gratification. Pika's acceptable-use policy requires rights and consents for images used in video creation and prohibits pornographic or sexual-gratification content.

That does not mean every moderation decision will be predictable. It means a rejected job is usually a boundary signal, not a puzzle. If a mainstream generator refuses an NSFW image-to-video request, the safest next step is to classify why: explicit content restriction, real-person likeness, missing consent, minor-safety risk, private image, or policy-sensitive prompt. Rephrasing the request to make a filter miss the intent moves the workflow in the wrong direction.

Adult-Specific Image-To-Video Sites Need A Trust Check

Adult-specific image-to-video sites are the route that may sound most aligned with the phrase "AI image to video NSFW", but they are also the route where claims require the most scrutiny. A site can say "private", "uncensored", "free", "no filters", or "no limits" without proving how it stores uploads, who can view outputs, whether deleted files are actually removed, how payment descriptors appear, or how abuse reports are handled.

Before uploading any image to an adult-specific generator, look for the contract, not the promise.

| Claim or feature | What to verify | Why it matters |
|---|---|---|
| "Private" | Privacy policy, storage duration, model-training language, public-gallery defaults, and whether outputs are visible to staff or other users | Adult uploads are sensitive even when lawful |
| "Delete anytime" | Whether deletion covers source images, derived videos, thumbnails, logs, and backups | A visible delete button may not mean full retention removal |
| "No filters" | Terms of use, prohibited-content list, reporting channel, and enforcement history | A no-filter pitch can hide weak abuse controls |
| "Free" | Credits, watermarking, resolution, queue time, export limits, and whether payment is required for deletion or privacy | Free trials can be useful, but they are not a long-term contract |
| "Realistic people" | Consent, likeness, NCII, and age policy | Real-person generation is where harm and legal exposure rise fastest |
| "Local or anonymous" | Whether generation really runs locally, what leaves the device, and what logs remain | Privacy depends on architecture, not marketing wording |

If a site does not disclose the basics, do not upload a source image that would be harmful if stored, leaked, reused, or misidentified. For lawful fictional adult work, a less polished but clearer contract may be safer than a glossy page that refuses to say what happens to uploads. For any real-person or private-image scenario, the correct route is still to stop.
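One way to keep the trust check honest is to record which disclosures you actually found and list the ones still missing. This is a minimal sketch: the item names in `REQUIRED_DISCLOSURES` are hypothetical labels loosely derived from the table above, not a standard or a legal checklist.

```python
from __future__ import annotations

# Hypothetical labels for the disclosures discussed above (an assumption,
# not an industry standard). Adjust to the sensitivity of the image.
REQUIRED_DISCLOSURES = (
    "terms_of_use",
    "prohibited_content_list",
    "privacy_policy",
    "storage_duration",
    "deletion_scope",
    "model_training_use",
    "public_gallery_defaults",
    "reporting_channel",
)


def missing_disclosures(found: set[str]) -> list[str]:
    """Return the required items not found, in checklist order."""
    return [item for item in REQUIRED_DISCLOSURES if item not in found]


def contract_is_clear(found: set[str]) -> bool:
    """True only when every required disclosure has been located and read."""
    return not missing_disclosures(found)
```

For example, `missing_disclosures({"terms_of_use", "privacy_policy"})` returns the six remaining items. A complete checklist only shows that the contract is readable. It does not replace the source-image gate.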

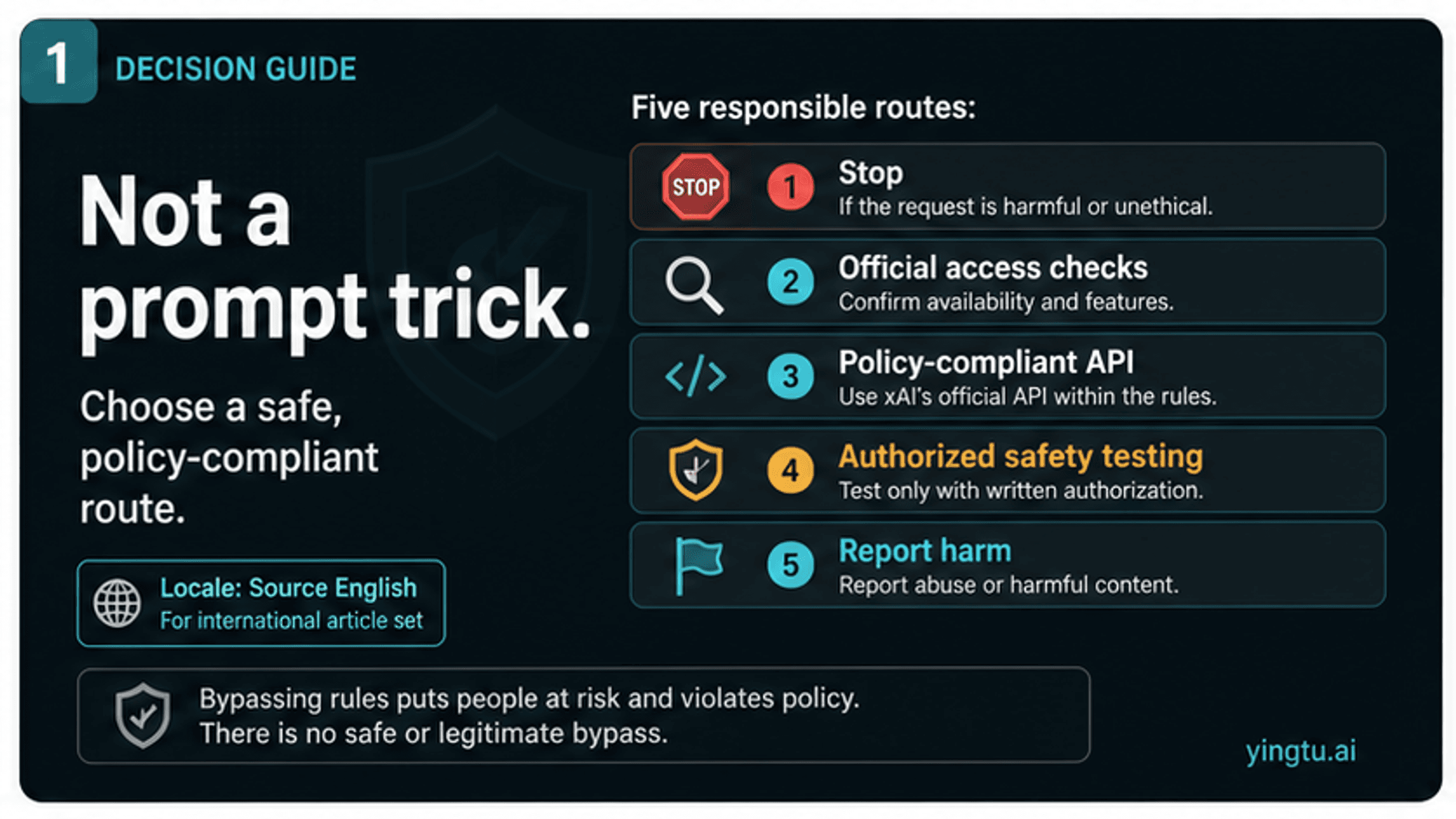

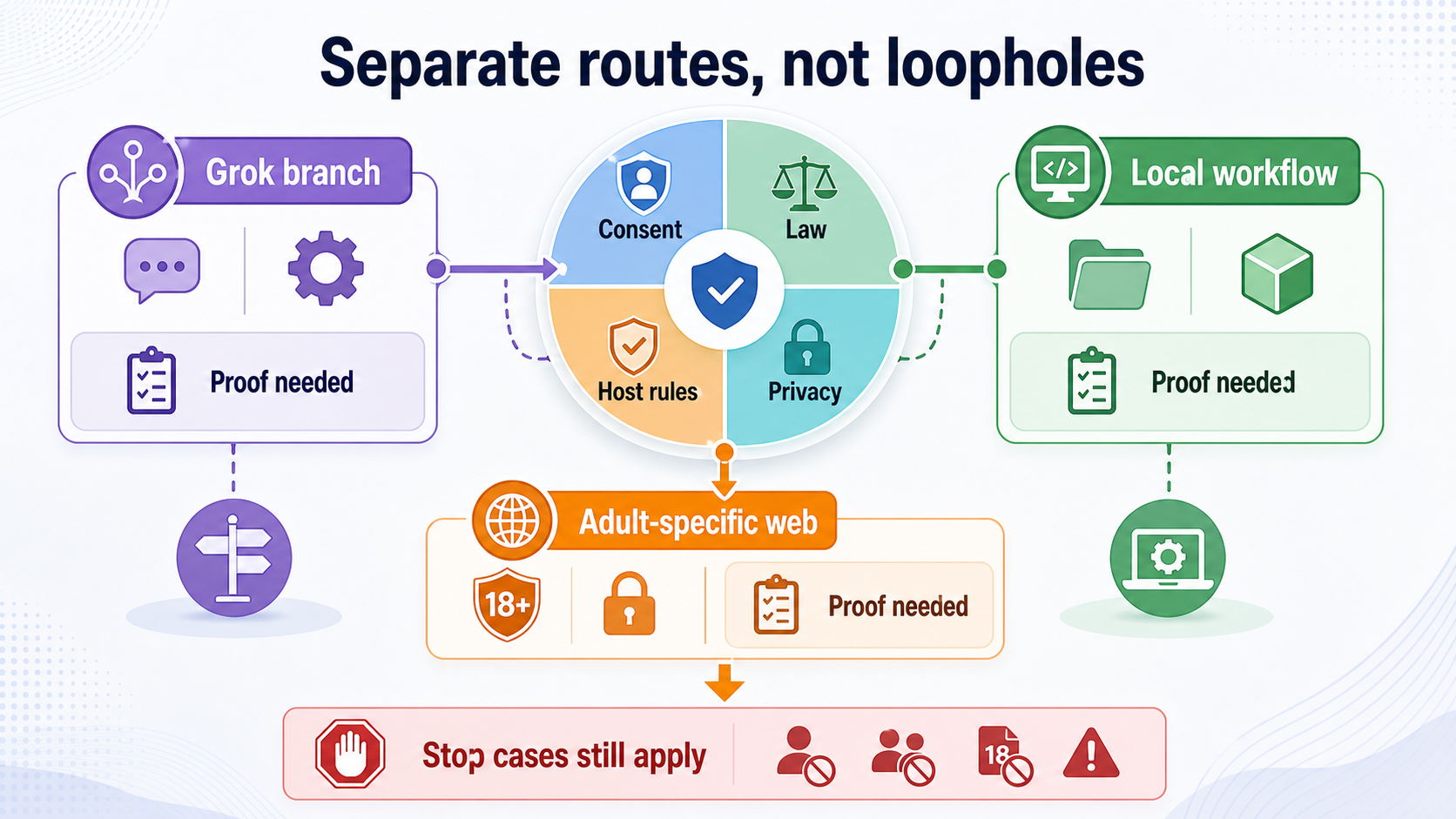

Grok Imagine Is A Separate Branch

Grok appears in many adult-image conversations because of Grok Imagine, Spicy Mode, and xAI's policy changes. That does not make Grok the answer to every AI image-to-video NSFW question.

Use the Grok branch only when the next question is actually about Grok: whether Spicy Mode appears for the current account, why it is missing, whether X sensitive-media settings matter, or how xAI policy treats adult image generation. For account availability and missing-mode triage, use the Grok Imagine Spicy Mode availability guide. For policy, API moderation, regulatory context, real-person, minor, and NCII boundaries, use the Grok xAI NSFW image generation policy guide.

xAI's Acceptable Use Policy applies across consumer, developer, and business use. It prohibits privacy and publicity violations, pornographic depictions of a person's likeness, sexualization or exploitation of children, and safeguard circumvention. That makes Grok a product-specific route with its own account and policy contract, not a general answer to every adult image-to-video workflow.

The clean split is practical: use the general route board to decide whether the workflow belongs in mainstream tools, adult-specific tools, a local setup, the Grok branch, or no tool at all. Use the Grok pages only when the branch is already Grok-specific.

Local And Open-Source Routes Do Not Remove Responsibility

Local or open-source image-to-video workflows can reduce exposure to a third-party web service, but they do not remove consent, law, host rules, or model-card conditions. Running a workflow on your own machine may change who stores the file. It does not make a non-consensual real-person video acceptable, does not make a minor-safety issue disappear, and does not give permission to distribute harmful output.

Think of local generation as a control tradeoff.

| Local advantage | Remaining boundary |
|---|---|
| Source files may stay on your hardware | The source image still needs rights and explicit consent |
| You can inspect some code, model cards, or workflow nodes | You still need to follow licenses, host rules, and local law |
| You can avoid adult-site storage and payment risk | You still own evidence handling, deletion, and misuse prevention |
| You can experiment with fictional material | You should not use local control to sexualize real people or bypass safeguards |

This guide deliberately leaves out model lists, prompt recipes, checkpoint names, and filter-evasion steps for local adult generation. The useful discussion is higher level: local control can be a privacy improvement for lawful fictional or consented work, but it is not a loophole around the source-image gate.

Claims From Old NSFW Image-To-Video Lists Can Age Badly

NSFW image-to-video pages age quickly because moderation behavior, free credits, model access, payment rules, region availability, and privacy terms change quickly. Treat old listicles and social posts as pointers to verify, not as proof.

Use this freshness filter.

| Claim | Trust only when | Safer wording |
|---|---|---|
| "No filters" | The current terms, prohibited-use page, and enforcement behavior support the exact claim | "The site advertises fewer filters, but verify current rules" |
| "Private" | The privacy policy covers source images, outputs, logs, backups, staff access, and model training | "Check storage and deletion before upload" |
| "Free" | The live product shows export, watermark, resolution, and credit limits | "Free-to-try, not guaranteed free for the whole job" |
| "Unlimited" | The current plan and fair-use rules say so plainly | "Limits can change by plan, queue, and abuse controls" |
| "Works with real photos" | The policy clearly allows the specific consented use | "Real-person use requires explicit consent and strong rights checks" |
| "Grok still works" | The current Grok account surface and xAI policy support that route | "Use the Grok-specific guides for that branch" |

This is not caution for its own sake. Uploading an adult source image to the wrong site creates a record. If the image is sensitive, private, or tied to a real person, the risk starts at upload, not at publication.

If Harmful Sexualized AI Content Already Exists

If someone has made or shared harmful AI sexualized content of you, another adult, or a child, the next step is not tool comparison. Preserve evidence, record URLs, account names, timestamps, and platform names, and use platform reporting and removal paths. Do not reshare the content while trying to prove it exists.
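A plain, timestamped log makes it easier to hand consistent details to a platform or an adviser. The sketch below records only metadata (where and when something was seen), never the content itself. The `EvidenceEntry` fields and helper names are assumptions for illustration, not a format that any platform or agency requires.

```python
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
import json


@dataclass
class EvidenceEntry:
    """One observation: where the content appeared and when it was seen."""
    url: str
    platform: str
    account_name: str
    observed_at: str  # ISO 8601 timestamp in UTC
    notes: str = ""


def new_entry(url: str, platform: str, account_name: str, notes: str = "") -> EvidenceEntry:
    """Create an entry stamped with the current UTC time."""
    now = datetime.now(timezone.utc).isoformat(timespec="seconds")
    return EvidenceEntry(url, platform, account_name, now, notes)


def to_json_lines(entries: list[EvidenceEntry]) -> str:
    """Serialize entries as one JSON object per line for easy sharing."""
    return "\n".join(json.dumps(asdict(e), sort_keys=True) for e in entries)
```

Storing URLs and timestamps rather than copies of the material is consistent with the advice not to reshare the content while documenting it.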

For minors or suspected child sexual abuse material, use child-safety reporting resources such as the National Center for Missing and Exploited Children's generative AI guidance and CyberTipline pathways. NCMEC describes AI-created child sexual abuse material, nudify-style harms involving children, sextortion, and reporting/removal resources.

For non-consensual intimate imagery in the U.S., the Congressional Research Service summary of the TAKE IT DOWN Act explains that the law covers certain non-consensual publication of intimate images, including digital forgeries, and gives covered platforms until May 19, 2026, to establish notice-and-removal processes. Local laws vary, so platform reporting, trusted support organizations, and qualified legal advice may all matter.

When harm already exists, the task changes from "which generator should I use" to "how do I document, report, and remove this safely?"

FAQ

Can mainstream image-to-video tools make NSFW video from an image?

They may animate ordinary images, but many mainstream providers restrict explicit or sensitive content, real-person likeness misuse, NCII, child-safety harms, and safeguard circumvention. If your request is rejected, treat the rejection as a boundary signal before trying another route.

Is an adult-specific image-to-video site safer for NSFW work?

Not automatically. It may fit lawful fictional or fully consented adult creative work better than a mainstream tool, but only if the site's terms, privacy policy, deletion controls, source-image handling, payment behavior, and reporting path are clear enough for the sensitivity of the image.

Can I use a real person's photo if the output stays private?

Do not use a real person's image for sexualized animation without explicit consent for that exact use. "Private" does not remove likeness, privacy, NCII, harassment, or legal risk, and many services still process or store uploads.

Is a fictional or AI-generated character different?

Yes, the identity risk is usually lower, but the tool's terms, age depiction rules, content limits, and output-sharing rules still apply. Fictional does not mean every platform will allow the result.

Does local generation make NSFW image-to-video legal?

No. Local generation can change where files are processed and stored, but it does not erase consent, age, privacy, publicity, NCII, license, host, or local-law boundaries.

Is Grok Imagine the best route for this?

Only if the real question is Grok-specific. For Spicy Mode visibility and missing-mode checks, read the Grok Imagine availability guide. For xAI policy, API moderation, and regulatory context, read the Grok/xAI NSFW policy guide.

What should I do if a site advertises "uncensored" or "no filters"?

Treat it as a claim to verify. Check the current terms, prohibited-content rules, privacy policy, source-image storage, deletion controls, payment terms, and support path. If those are unclear, do not upload sensitive images.

What is the safest first move?

Use the source-image gate. If the image is fictional or fully consented and the site contract is clear, choose the route that fits your privacy and policy tolerance. If the image involves a non-consenting real person, a minor, private/intimate material, NCII, harassment, or safeguard bypassing, stop before upload.