A request for a Grok image jailbreak should not be treated as a prompt-trick problem. Grok Imagine access, the xAI image API, xAI policy, and X posting rules are separate surfaces, and none of them turns safeguard evasion, real-person sexualized likeness, minors, or nonconsensual imagery into an acceptable workflow.

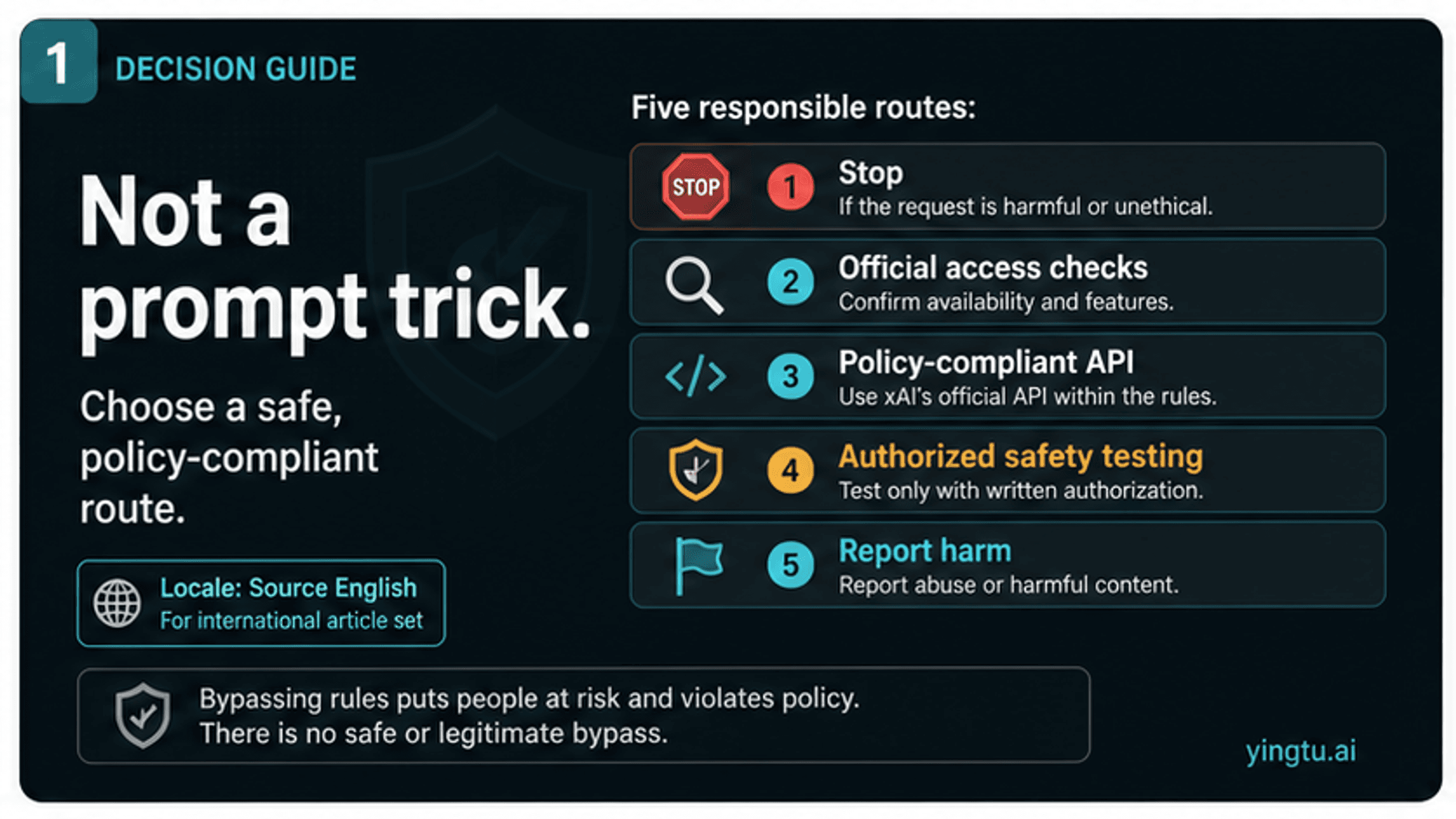

Classify the goal first:

| Goal behind the request | Safer next move |
|---|---|
| Bypass a blocked image prompt | Stop. Do not use bypass prompts, wrappers, or prompt lists as instructions. |
| Find a missing Grok Imagine or Spicy Mode control | Troubleshoot official app access, account state, region, and content settings instead. |
| Build with xAI image generation | Use the documented API inside xAI policy controls; the API is not a moderation bypass. |
| Test safety behavior | Do it only with authorization, logging, scope limits, and non-harmful test cases. |
| Respond to harmful output or abuse | Preserve evidence, report through the platform route, and avoid redistributing the content. |
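
The triage in the table can be sketched as a small lookup. This is a minimal illustration, not an official tool: the `Goal` enum, the `ROUTES` mapping, and the `next_move` function are hypothetical names introduced here, and the route text is paraphrased from the table.

```python
from enum import Enum


class Goal(Enum):
    """Hypothetical labels for the goal behind a request."""
    BYPASS_BLOCKED_PROMPT = "bypass"
    FIND_MISSING_CONTROL = "access"
    BUILD_WITH_API = "develop"
    TEST_SAFETY = "test"
    RESPOND_TO_HARM = "report"


# Route text paraphrased from the table; every goal has exactly one route.
ROUTES = {
    Goal.BYPASS_BLOCKED_PROMPT: "Stop. Do not use bypass prompts, wrappers, or prompt lists.",
    Goal.FIND_MISSING_CONTROL: "Troubleshoot official app access, account state, region, and content settings.",
    Goal.BUILD_WITH_API: "Use the documented API inside xAI policy controls.",
    Goal.TEST_SAFETY: "Proceed only with authorization, logging, scope limits, and non-harmful test cases.",
    Goal.RESPOND_TO_HARM: "Preserve evidence, report through the platform route, and do not redistribute.",
}


def next_move(goal: Goal) -> str:
    """Return the safer next move for an already classified goal."""
    return ROUTES[goal]
```

The lookup deliberately has no fallback: a request that cannot be classified into one of these goals should be reviewed by a person rather than routed by default.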

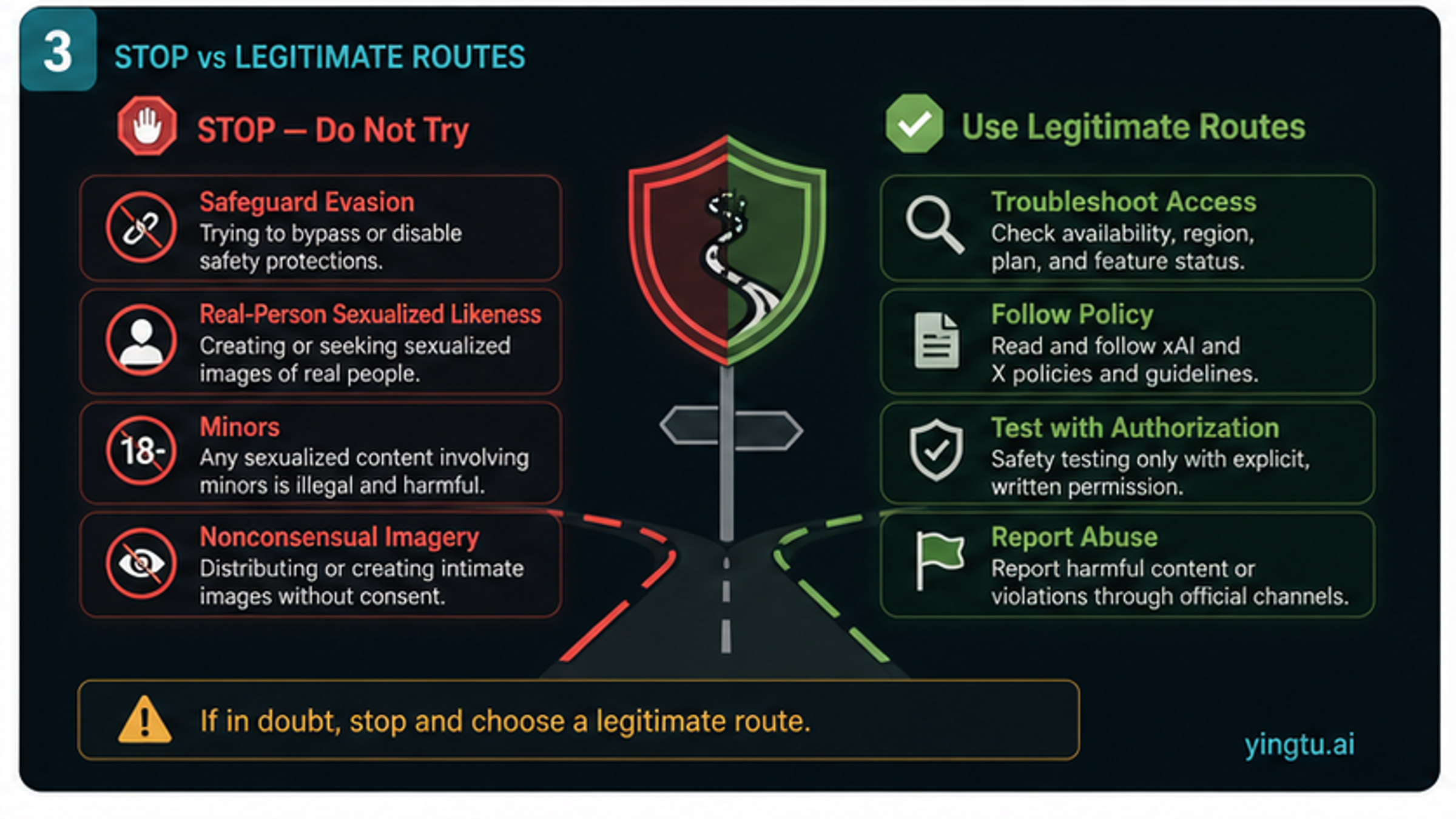

The hard stop cases are straightforward: do not try to evade safeguards, generate sexualized images of real people, involve minors, create or spread nonconsensual intimate imagery, or turn Reddit-style prompt claims into a production route. If the real problem is Grok Imagine visibility or broad xAI/Grok adult-content policy, move to the dedicated access or policy guide; the jailbreak route itself should be treated as a stop-or-reclassify decision.

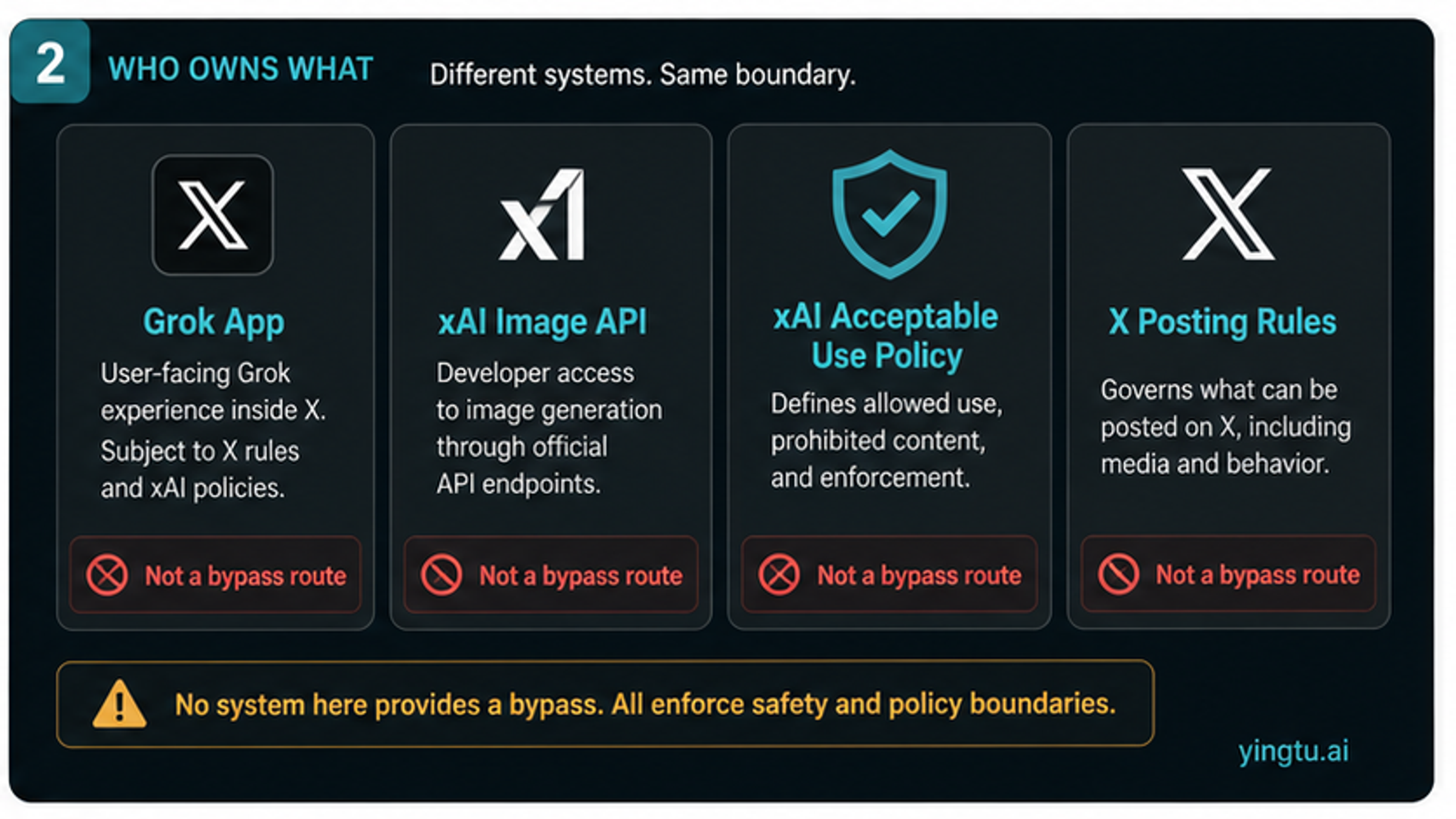

Four Rule Owners Come Before Any Answer

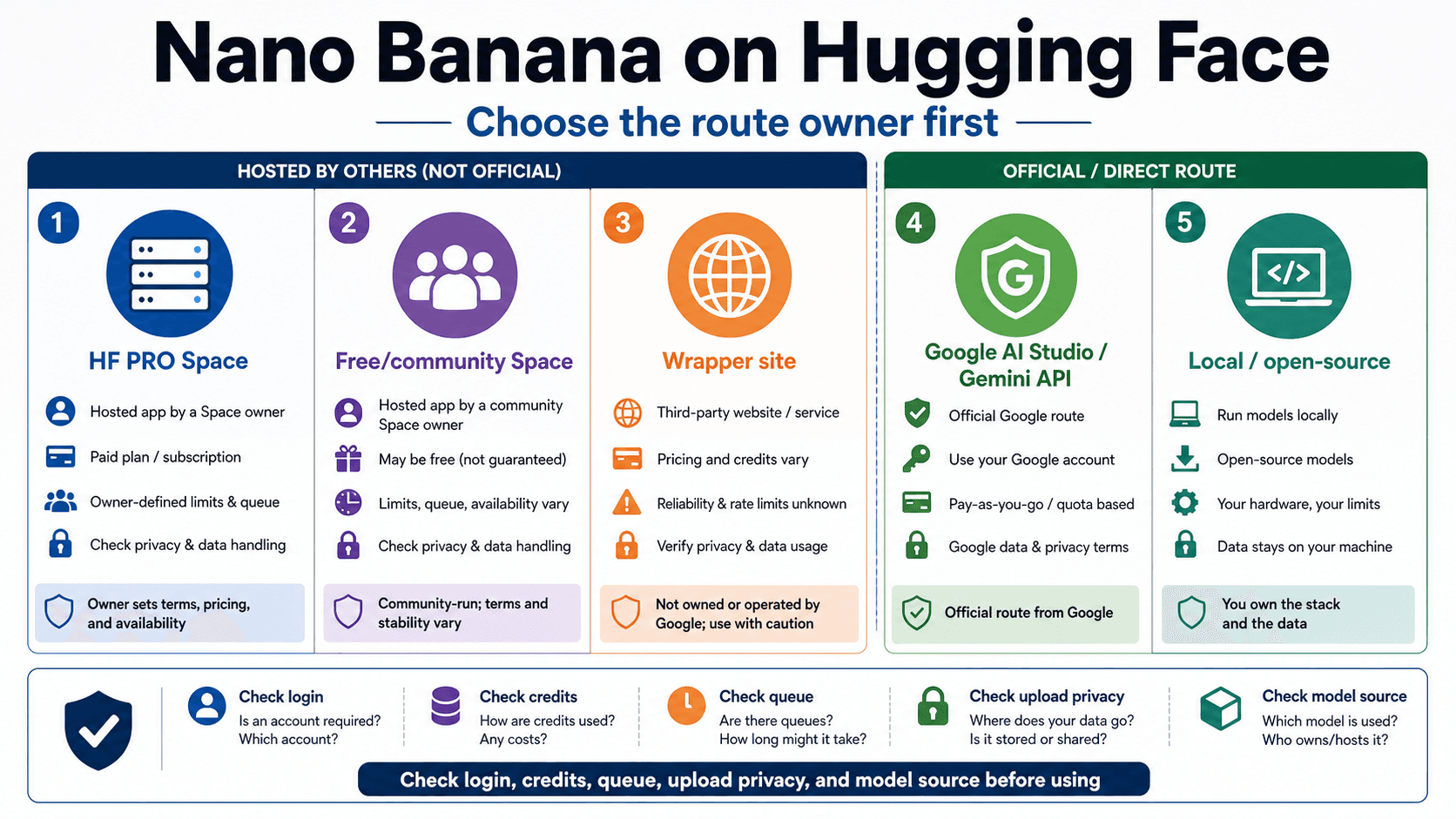

The word "Grok" hides several product and policy surfaces. A safe answer starts by separating those surfaces, because a claim that is true for one surface can be misleading or unsafe on another.

| Owner | What it can prove | What it cannot prove |
|---|---|---|
| Grok or X app | What the signed-in consumer account currently sees, including Grok Imagine or Spicy Mode controls when visible | A universal entitlement, a bypass route, or parity with every app and country |
| xAI image API docs | That xAI documents developer image generation and editing routes, including model IDs, generated outputs, and moderation response handling | Consumer Spicy Mode access or permission to evade content review |
| xAI Acceptable Use Policy | The use boundaries for consumers, developers, and businesses, including safeguards, illegal use, child safety, likeness, privacy, and publicity rights | A prompt-level allowlist or a guarantee that a blocked request can be made acceptable through wording |
| X Adult Content Policy | Rules for labeled consensual adult material on X and prohibited adult-content placements | Permission to generate sexualized imagery with Grok or to ignore xAI service rules |

As of May 9, 2026, xAI's image documentation proves a developer image route, not a jailbreak route. The xAI image overview describes image generation and editing capabilities and says generated images are subject to content policy review. That matters because developer access can make a workflow more programmable, but it does not remove the policy layer.

The xAI Acceptable Use Policy is the stronger boundary for a bypass-seeking reader. It applies across consumers, developers, and businesses, and it prohibits illegal use, privacy or publicity rights violations, child sexualization or exploitation, pornographic depictions of a person's likeness, and safeguard circumvention. The X Adult Content Policy is narrower: it governs adult material posted on X when properly labeled and consensually produced. X posting permission is not the same as Grok generation permission.

What Not To Try

Do not treat a blocked image request as a puzzle to solve. A blocked request may be blocked because the target content is disallowed, because the model cannot safely classify the request, because the account surface does not expose the feature, or because the request is trying to bypass a rule. None of those cases becomes safer by copying prompt packs, multi-turn chains, or wrapper claims.

The non-negotiable stop cases are:

| Stop case | Why it stops | Better action |
|---|---|---|
| Safeguard evasion | xAI policy tells users to respect safeguards unless operating under official red-team authorization | Stop or move into an authorized safety-testing process |
| Real-person sexualized likeness | Likeness, consent, harassment, privacy, publicity, and pornographic depiction risks converge | Do not generate, edit, publish, or redistribute the image |
| Minors or age-ambiguous subjects | Child safety rules and law create a hard stop | Stop immediately and use appropriate reporting routes for suspected abuse |
| Nonconsensual intimate imagery | NCII is a platform, legal, and personal-harm category, not a prompt-engineering challenge | Preserve evidence if needed for reporting; do not recreate or spread the content |
| "No filter", "unblur", or "uncensored" routes | Those phrases usually signal attempts to defeat controls or move through untrusted wrappers | Do not use them as production or personal instructions |

This section is intentionally non-operational. Safety research and abuse reporting can acknowledge that bypass attempts exist, but a public-facing article should not reproduce sequences, examples, prompt fragments, or success recipes. The useful action is to classify the goal and choose a legitimate route.

Legitimate Routes That Still Remain

Rejecting a jailbreak path does not leave every reader with the same answer. The legitimate route depends on the actual job behind the request.

If the real job is consumer access, start with the official Grok or X surface tied to the account. Check the current app, account state, content controls, age state, region, subscription recognition, and feature rollout. The separate Grok Imagine Spicy Mode availability guide owns that access troubleshooting job. Missing controls are not a reason to use prompt bypasses, modified clients, shared accounts, or sellers.

If the real job is development, use the documented xAI image API as a moderated developer surface. Build with logging, content review, user reporting, consent boundaries, and removal handling. Do not design a workflow where users upload another person's photo and request sexualized transformations. That is not a product feature waiting for the right prompt; it is a foreseeable harm pattern.

If the real job is safety testing, the difference is authorization. A security team, trust-and-safety team, model evaluator, or contracted red team can test restricted behavior inside a scoped environment with permission, logging, non-harmful test fixtures, and disclosure rules. A public prompt list or social post is not the same thing as authorized testing.

If the real job is response to harmful content, do not regenerate or amplify it. Preserve only the evidence required for the report, use the platform reporting route, and follow legal or organizational escalation rules when minors or nonconsensual intimate imagery may be involved.

The API Is Not A Moderation Bypass

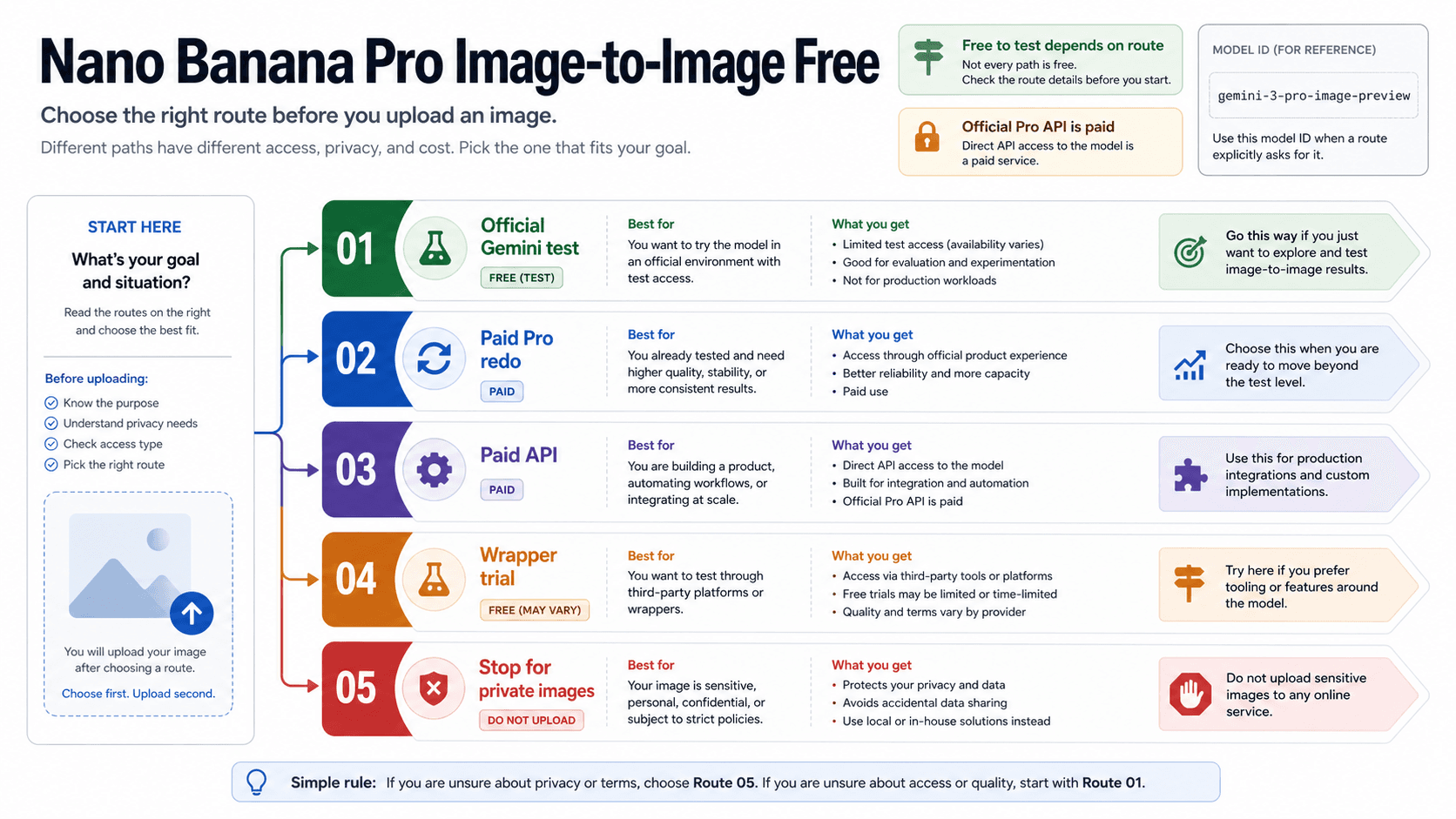

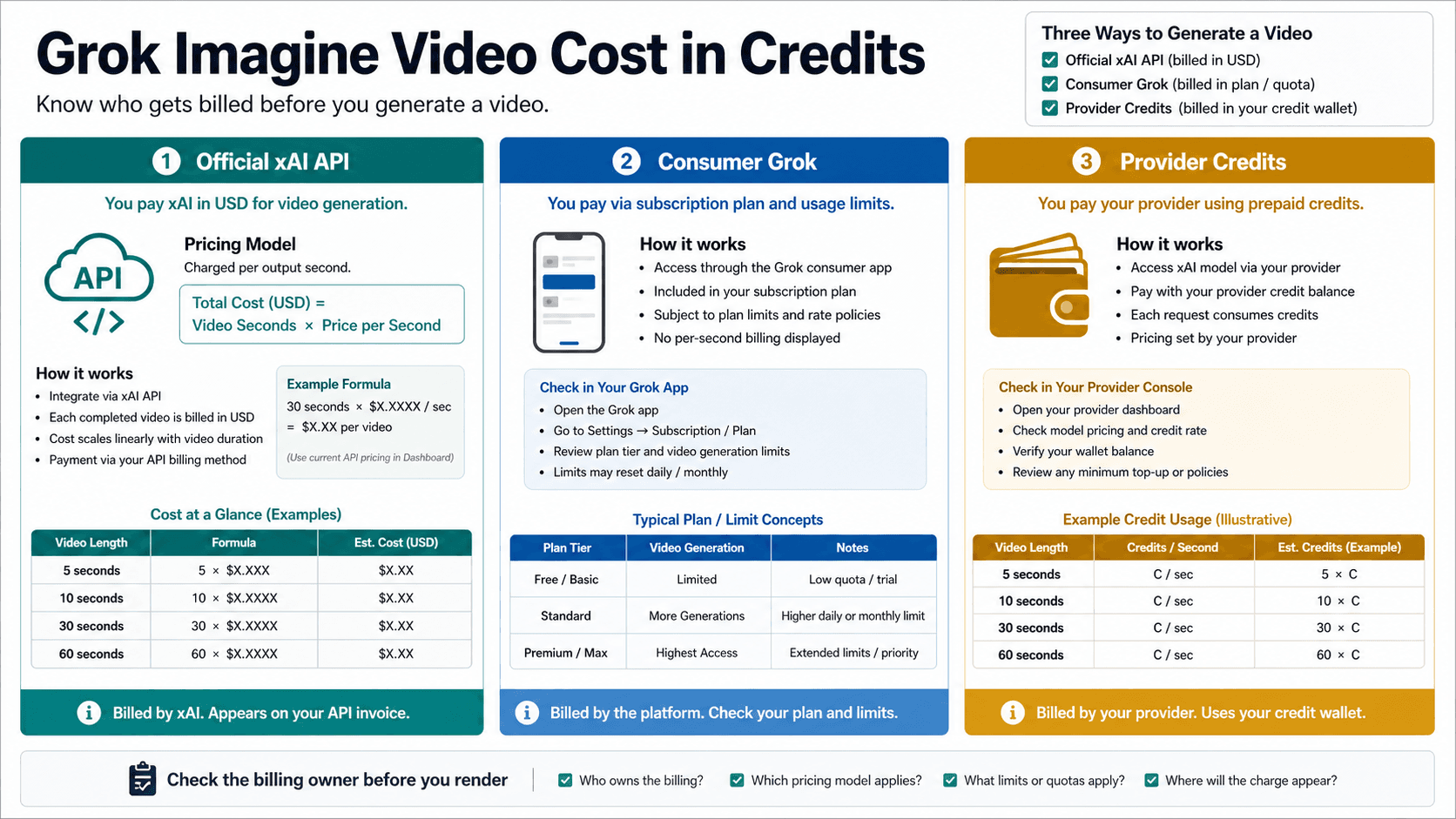

The xAI image API can be attractive because it sounds more controllable than a consumer app. That is the wrong way to read it. An API is a developer contract: it can provide request formats, outputs, model names, billing, and integration behavior, but it does not cancel the acceptable-use policy.

| API question | Safe answer |
|---|---|
| Does xAI document image generation? | Yes. xAI documents image generation and image editing capabilities. |
| Does that mean blocked consumer prompts can be moved to the API? | No. The API is subject to policy review and moderation response handling. |
| Does an API key prove Spicy Mode access? | No. Consumer account controls and developer API routes are separate surfaces. |
| Can the API be used for sexualized real-person edits? | No. Real-person likeness, consent, privacy, publicity, harassment, and NCII risk make that path unsuitable. |
| Should prices, model IDs, and availability be copied from old posts? | No. Treat model names, pricing, limits, plan names, and availability as volatile and check first-party pages on the day they matter. |

This split matters for teams. A production system needs policy review, a reporting path, audit logs, and a refusal strategy. It also needs a product decision about what users are allowed to upload and request. If the only way to satisfy the use case is to defeat moderation, the correct route is not the API. The correct route is to reject the use case.
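
The requirements in the preceding paragraph (a refusal strategy plus audit logs) can be sketched as a pre-submission gate that runs before any image request leaves the product. This is a hedged illustration, not xAI's moderation logic: `ImageRequest`, its flags, `hard_stop_reasons`, `review_request`, and the in-memory `audit_log` are all hypothetical, and the flags are assumed to be set by an upstream policy review step that this sketch does not implement. It makes no API call.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class ImageRequest:
    """Hypothetical request record; flags come from an upstream review step."""
    user_id: str
    seeks_safeguard_bypass: bool = False
    sexualized: bool = False
    real_person_likeness: bool = False
    possible_minor: bool = False


def hard_stop_reasons(req: ImageRequest) -> list[str]:
    """Collect the hard-stop categories this request falls into."""
    reasons = []
    if req.seeks_safeguard_bypass:
        reasons.append("safeguard evasion")
    if req.sexualized and req.possible_minor:
        reasons.append("sexualized minor or age-ambiguous subject")
    if req.sexualized and req.real_person_likeness:
        reasons.append("real-person sexualized likeness")
    return reasons


def review_request(req: ImageRequest, audit_log: list[dict]) -> bool:
    """Record the decision and return True only if no hard stop applies."""
    reasons = hard_stop_reasons(req)
    audit_log.append({
        "at": datetime.now(timezone.utc).isoformat(),
        "user_id": req.user_id,
        "allowed": not reasons,
        "reasons": reasons,
    })
    return not reasons
```

Passing this gate does not make a request acceptable. It only filters the stop cases named in this article; the provider's own content review and the product's reporting path still apply afterward.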

Dated Risk Context

The current public risk context is not hypothetical. On January 16, 2026, the California Attorney General sent a cease-and-desist letter to xAI demanding action over alleged deepfake nonconsensual intimate images and child sexual abuse material connected to Grok. The same office had announced an investigation into Grok sexual AI imagery two days earlier.

In the United Kingdom, Ofcom opened a formal investigation into X over Grok sexualised imagery on January 12, 2026, and later noted that X had reported mitigation steps while the investigation remained open. Those official records should be described carefully: they are dated regulator actions and investigation statuses, not a substitute for final legal findings.

The practical reader takeaway is narrower than a controversy recap. Nonconsensual intimate imagery, child-safety exposure, real-person sexualized likeness, platform reporting, and rapid takedown obligations are live risk categories. A reader who came looking for a bypass should see those risks before any feature background.

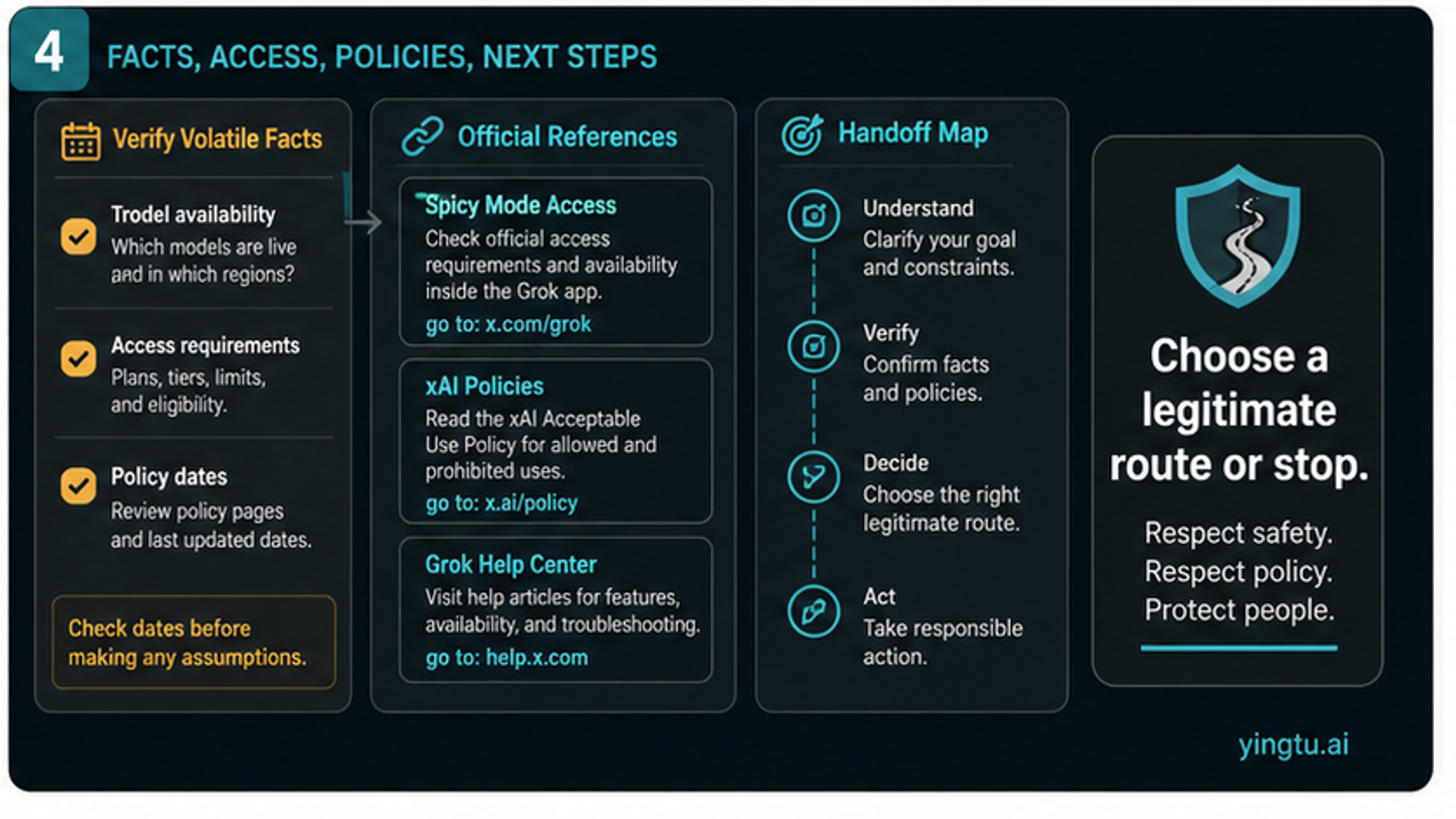

When The Problem Is A Different Grok Question

Not every Grok image question belongs in a jailbreak frame. Reclassify the problem when the actual job is one of these:

| Actual problem | Better owner |
|---|---|
| Spicy Mode does not appear in the current account | Grok Imagine Spicy Mode availability guide |
| The question is broad adult image policy | Grok xAI NSFW image generation policy analysis |
| The question is developer image generation | xAI image API docs plus internal safety and compliance review |
| The question is harmful output already created | Platform reporting, evidence preservation, and legal or organizational escalation |
| The question is current pricing, limits, or regional access | First-party checkout, account console, docs, or policy pages checked on the day of use |

This handoff prevents duplicate and misleading advice. The access article can help with legitimate visibility checks. The policy article can explain the broader xAI/X adult-content boundary. The jailbreak-safety decision remains narrower: do not follow a bypass route when the requested output or method is unsafe.

What Can Change And What Should Not

Several facts around Grok image generation can change quickly: model names, API pricing, output sizes, temporary URL behavior, consumer feature visibility, subscription packaging, regions, account controls, moderation wording, and regulator status. A useful current article should date or omit those claims rather than pretending a static number is durable.
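
One way to keep volatile claims honest is to store each one with the date it was last checked and to treat it as unusable once it is stale. The sketch below is illustrative only: `DatedClaim`, `is_stale`, and the 30-day default are assumptions chosen for the example, not a recommended threshold.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class DatedClaim:
    """A volatile claim paired with the date it was last verified."""
    topic: str
    checked_on: date

    def is_stale(self, today: date, max_age_days: int = 30) -> bool:
        """True when the claim is older than the allowed age in days."""
        return (today - self.checked_on).days > max_age_days


claim = DatedClaim("xAI image documentation", date(2026, 5, 9))
print(claim.is_stale(date(2026, 5, 20)))  # False: checked 11 days earlier
print(claim.is_stale(date(2026, 7, 1)))   # True: checked 53 days earlier
```

A stale claim should be rechecked against the first-party page or dropped, not silently republished.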

The core boundary is less volatile. Safeguard evasion is not a legitimate consumer workflow. Real-person sexualized likeness, minors, and nonconsensual intimate imagery are hard stop cases. X adult-content posting rules are not generation permission. The xAI API is a developer route, not a loophole.

For organizations, that means a purchasing or integration review should not start with "can we make Grok produce it?" Start with the use case, identity and consent risk, user upload flow, reporting path, region, legal review, and whether the workflow can be defended without evading safeguards. If the answer depends on bypassing the system, the project should stop.

FAQ

Is there a safe Grok image jailbreak prompt?

No. A public article should not provide prompt strings, bypass sequences, or wrapper instructions. Treat a jailbreak claim as a boundary warning, then choose one of the legitimate routes: official access troubleshooting, policy-compliant API work, authorized testing, or reporting. If none of them fits, stop.

Does the xAI API bypass Grok Imagine moderation?

No. xAI documents image generation and editing APIs, but those routes remain subject to policy review and moderation response handling. API access is a developer contract, not a way around consumer or policy controls.

Does X allowing adult content mean Grok can generate it?

No. X adult-content rules govern labeled adult material posted on X in allowed contexts. Grok generation and xAI service use still have their own rules, including hard boundaries around nonconsent, minors, real-person likeness, illegal use, and safeguard evasion.

What if Spicy Mode is missing?

Use the official access troubleshooting route. Check the current Grok or X app surface, account state, content controls, age state, subscription recognition, region, and rollout. Do not treat missing controls as a reason to use modified clients, shared accounts, or bypass prompts.

Can safety researchers test Grok image safeguards?

Only inside an authorized scope. Responsible testing needs permission, logging, non-harmful test fixtures, disclosure rules, and clear limits. Public prompt packs and social-media recipes are not authorized safety testing.

What should be reported?

Report suspected nonconsensual intimate imagery, sexualized content involving minors, impersonation, harassment, or harmful real-person likeness misuse through the relevant platform or legal route. Preserve only the evidence needed for reporting and avoid redistributing the content.