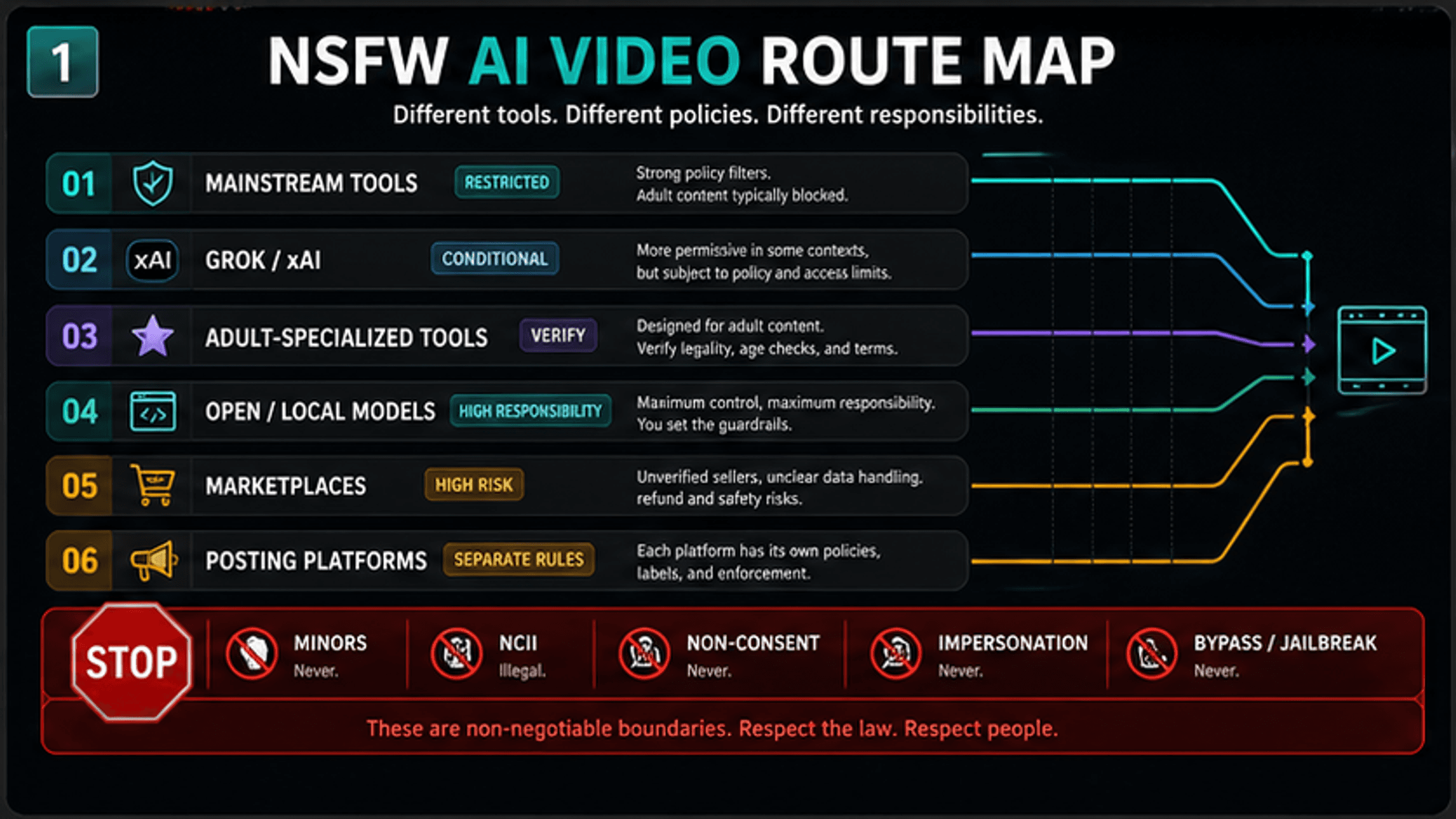

NSFW AI video is possible only in route-specific, policy-bound ways: most mainstream AI video generators block explicit sexual content, while Grok/xAI, adult-specialized platforms, open or local models, marketplaces, and posting platforms each carry different rules and risks. Treat the first decision as route choice, then verify consent, platform terms, privacy, and current access before paying, uploading, or sharing.

| Route | Current posture | What to check before acting |
|---|---|---|
| Mainstream AI video generators | Usually restricted for explicit sexual content | Safety policy, commercial use, account enforcement, input rules |
| Grok / xAI | Conditional and feature-dependent | Current app or API surface, region, age/account state, xAI and X policy boundaries |
| Adult-specialized tools | Possible, but verify before use | Age verification, terms, storage, privacy, deletion, consent workflow |
| Open or local models | More control and more responsibility | Rights to inputs, local law, data security, safeguards, distribution plan |
| Marketplaces and freelancers | High risk unless tightly scoped | Seller identity, rights transfer, private data handling, takedown path |
| Posting platforms | Separate rules from generation | Labels, age gates, non-consensual intimate imagery rules, removal process |

Stop immediately for minors, age-ambiguous subjects, non-consensual intimate imagery, a real person's likeness without clear consent, sexual violence, harassment, impersonation, or attempts to bypass a tool's safeguards. Those are not route-selection problems.

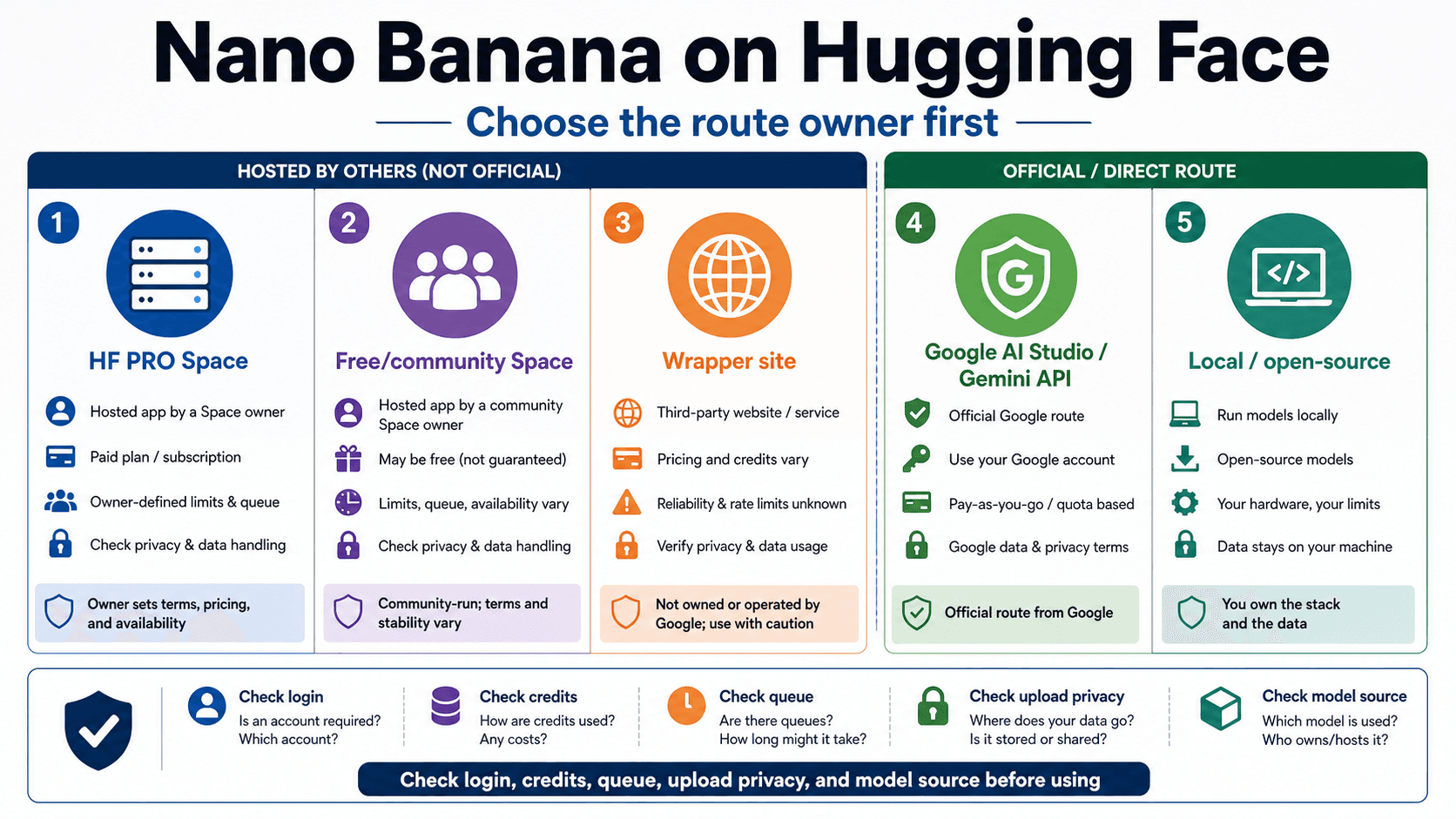

Prices, free credits, daily limits, model names, Grok or Spicy Mode access, region availability, storage promises, privacy claims, "no filter" claims, and "no ban" claims can change quickly. Check current first-party proof from the service's owner before trusting them, and treat forums, directories, and marketplace listings as demand signals rather than permission.

Quick Answer: Can You Make NSFW AI Videos Today?

Yes, but not as one universal workflow. A fictional, adult, consent-cleared project may have possible routes in adult-specialized tools, conditional xAI/Grok surfaces, or local/open-model environments. A request that depends on a real person's likeness without clear consent, any minor or age-ambiguous subject, non-consensual intimate imagery, harassment, impersonation, or safeguard evasion should stop before model choice.

The safest practical move is to ask three questions in order. First, which route are you actually using: mainstream app, Grok/xAI, adult platform, open/local setup, marketplace, or posting platform? Second, what does the current owner of that route allow today? Third, do you have consent, rights, storage controls, and a lawful distribution plan?

That order matters because many people start with tool names and skip the contract. A mainstream video generator can have excellent video quality and still block explicit sexual content. An adult-specialized platform can advertise the use case and still have age, consent, privacy, and deletion rules. A local model can remove a platform filter while increasing the user's legal and security responsibility.

The Six Routes People Confuse

The phrase "NSFW AI video" hides at least six different product and responsibility layers.

| Route | What it can mean | Main risk |
|---|---|---|
| Mainstream generators | Broad AI video apps for marketing, storyboards, product clips, education, and creative work | Their policies usually restrict explicit sexual material, adult nudity, or unsafe uploads |
| Grok / xAI | Consumer Grok features, X-adjacent experiences, or xAI developer video docs | Consumer feature access, X posting rules, and xAI API behavior are separate contracts |
| Adult-specialized tools | Services that position themselves for adult-oriented generation | Marketing claims may outrun policy, privacy, age verification, or deletion guarantees |
| Open or local models | Running or adapting models outside a hosted consumer app | More control also means more responsibility for legality, safeguards, data handling, and distribution |
| Marketplaces and freelancers | Paying a seller to create or edit a clip | Consent, rights transfer, private data handling, and takedown paths can be unclear |
| Posting platforms | X, YouTube, Reddit, hosting providers, storefronts, or private sharing links | Posting rules can block or remove output even if generation happened elsewhere |

Do not treat the route names as recommendations. The point is to prevent category mistakes. A person who wants a consensual fictional adult clip, a developer testing policy-safe synthetic media, and a victim trying to remove a fake intimate video all need different next steps. A single "best tools" answer would make all three readers less safe.

Why Mainstream AI Video Tools Usually Block Explicit Content

Mainstream AI video tools are built for broad commercial, creative, and consumer use. That usually means strict handling of sexual content, real-person likeness, minors, harassment, and unsafe uploads.

OpenAI's Usage Policies prohibit sexual violence, non-consensual intimate content, sexualization or exploitation of minors, and misleading or harmful likeness use. OpenAI's Sora safety page also describes people-related consent and sexual-material safeguards, and it notes that the earlier Sora product page was no longer available as of April 26, 2026. That is not a route for NSFW generation; it is evidence that mainstream video products draw safety boundaries around sexual and real-person content.

Google's Veo responsible AI guidance describes built-in safety filtering and policy assessments for video prompts, images, and outputs, including sexual-content categories. Runway's Usage Policy prohibits sexually explicit content, adult nudity, NCII creation or modification, and use of another person's image, video, or audio without permission. HeyGen's ethics statement bars sexually explicit, illegal, and child-depicting content and requires permission for depicted individuals. Kling's user policy likewise bars use that promotes sexually explicit material and requires rights or authorization for inputs.

The practical result is simple: mainstream video tools are not a reliable adult-content route. They may be appropriate for safe-for-work storyboards, product demos, educational animations, or non-explicit fictional scenes. They should not be prompted, pressured, or worked around to produce explicit adult output.

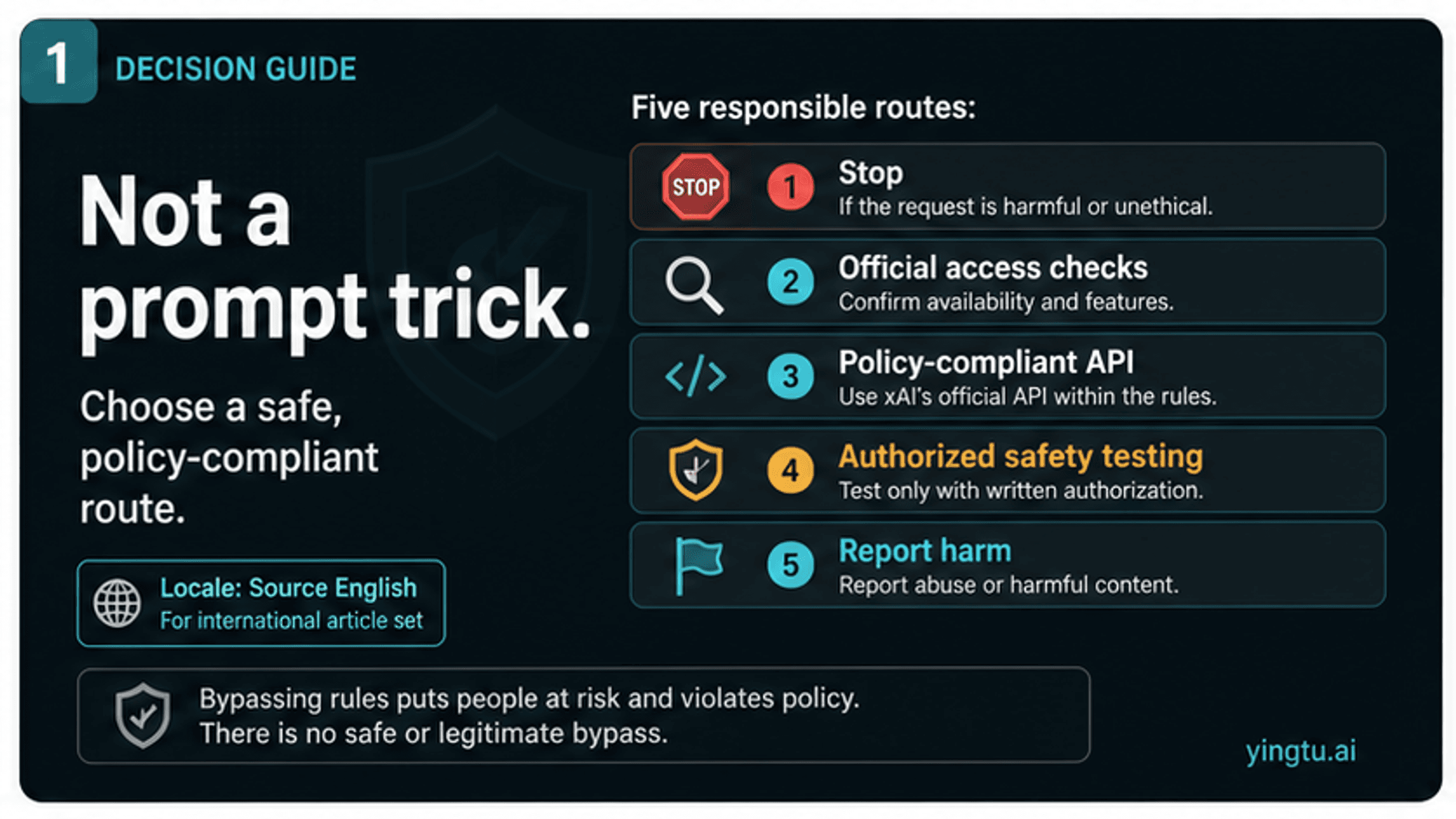

Where Grok / xAI Fits

Grok and xAI deserve a separate branch because public discussion around Grok Imagine and Spicy Mode has made them visible in adult-content searches. That branch is still conditional, not a universal unlock.

xAI's Acceptable Use Policy applies to consumers, developers, and businesses. It requires legal compliance and bars privacy or publicity-rights violations, pornographic depictions of real people's likenesses, child sexualization or exploitation, safeguard circumvention, and other harmful uses. xAI's video documentation describes developer video capabilities such as text-to-video, image-to-video, editing, reference-to-video, extension, temporary URLs, and moderation, but API documentation is not the same thing as consumer Spicy Mode access.

X's Adult Content Policy is another separate contract. X may allow consensually produced adult material, including AI-generated material, when it is properly labeled and not placed in highly visible surfaces. X's Non-consensual Nudity Policy bans intimate media shared or created without consent, including digitally manipulated sexualized material. Posting rules do not prove generation permission, and generation access does not erase posting rules.

If the immediate question is whether Spicy Mode appears for an account, use the Grok Imagine Spicy Mode availability guide. If the question is broader xAI/X policy ownership, use the Grok xAI NSFW image generation policy analysis. For video route planning, keep the answer narrower: verify the current official surface, separate consumer UI from developer API docs, and stop when the use case crosses consent or likeness boundaries.

Consent Is The First Technical Check, Not A Legal Footnote

Before uploading any image for image-to-video, decide who or what the subject is. This decision should happen before model selection, prompt writing, or payment.

| Subject in the input | Safer decision | Why it matters |
|---|---|---|
| Fictional adult character or fully synthetic adult performer | Proceed only if you own or can lawfully use the IP and the platform allows the use case | Copyright, platform rules, and synthetic identity still apply |
| Yourself | Proceed only if you are an adult and understand storage, sharing, and takedown risk | Consent can exist, but privacy and distribution risk remain |
| Consenting adult performer | Proceed only with clear, specific, informed, documented consent and rights | "They sent a photo" is not enough for adult image-to-video use |
| Public figure, creator, coworker, classmate, partner, ex-partner, or private person | Stop unless explicit adult-use consent and rights are clear | Public availability of an image is not permission for sexualized transformation |
| Minor or age-ambiguous person | Stop | Child safety rules and legal exposure override every tool route |
| Stolen, leaked, private, or non-consensual intimate material | Stop, do not store or share, and use reporting paths when appropriate | Non-consensual intimate imagery is a platform, legal, and safety harm |

This is the section most "tool list" pages skip. Consent is not a final disclaimer after generation. It is the gate that determines whether generation should happen at all. A safer workflow records the basis for consent, limits storage, avoids unnecessary copies, and respects revocation or takedown requests.
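For readers building an upload flow, the gate in the table above can be expressed as a check that runs before any model call. The following is a minimal sketch, not a compliance implementation: the names `Subject`, `InputCheck`, and `consent_gate` are hypothetical, the boolean fields stand in for real verification steps (documented consent records, rights review, current first-party terms), and every field defaults to "not verified" so the gate stops unless something has been positively confirmed.

```python
from dataclasses import dataclass
from enum import Enum, auto


class Subject(Enum):
    """Subject categories, mirroring the rows of the consent table."""
    FICTIONAL_ADULT = auto()
    SELF = auto()
    CONSENTING_ADULT = auto()
    OTHER_REAL_PERSON = auto()          # public figure, coworker, ex-partner, etc.
    MINOR_OR_AGE_AMBIGUOUS = auto()
    NON_CONSENSUAL_MATERIAL = auto()    # stolen, leaked, or private material


# Categories that stop regardless of any other answer.
HARD_STOPS = {Subject.MINOR_OR_AGE_AMBIGUOUS, Subject.NON_CONSENSUAL_MATERIAL}


@dataclass
class InputCheck:
    """Hypothetical record of what has been verified; all default to False."""
    subject: Subject
    platform_allows: bool = False       # confirmed in current first-party terms
    has_rights: bool = False            # IP or likeness rights to the input
    is_adult: bool = False              # applies when the subject is yourself
    accepts_storage_risk: bool = False  # storage, sharing, takedown understood
    documented_consent: bool = False    # clear, specific, informed, recorded


def consent_gate(check: InputCheck) -> tuple[bool, str]:
    """Return (may_proceed, reason). Anything unverified results in a stop."""
    if check.subject in HARD_STOPS:
        return False, "stop: no route applies to this subject"
    if check.subject is Subject.FICTIONAL_ADULT:
        if check.has_rights and check.platform_allows:
            return True, "proceed: rights and platform permission verified"
        return False, "stop: IP rights or platform permission not verified"
    if check.subject is Subject.SELF:
        if check.is_adult and check.accepts_storage_risk:
            return True, "proceed: adult self-depiction with risks understood"
        return False, "stop: adult status or storage risk not confirmed"
    # Consenting performers and all other real people need the same proof.
    if check.documented_consent and check.has_rights:
        return True, "proceed: documented consent and rights on file"
    return False, "stop: documented consent and rights are missing"
```

Because the defaults are all `False`, a call such as `consent_gate(InputCheck(Subject.OTHER_REAL_PERSON))` returns a stop decision, and a minor or age-ambiguous subject stops even if every other field is set to `True`.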

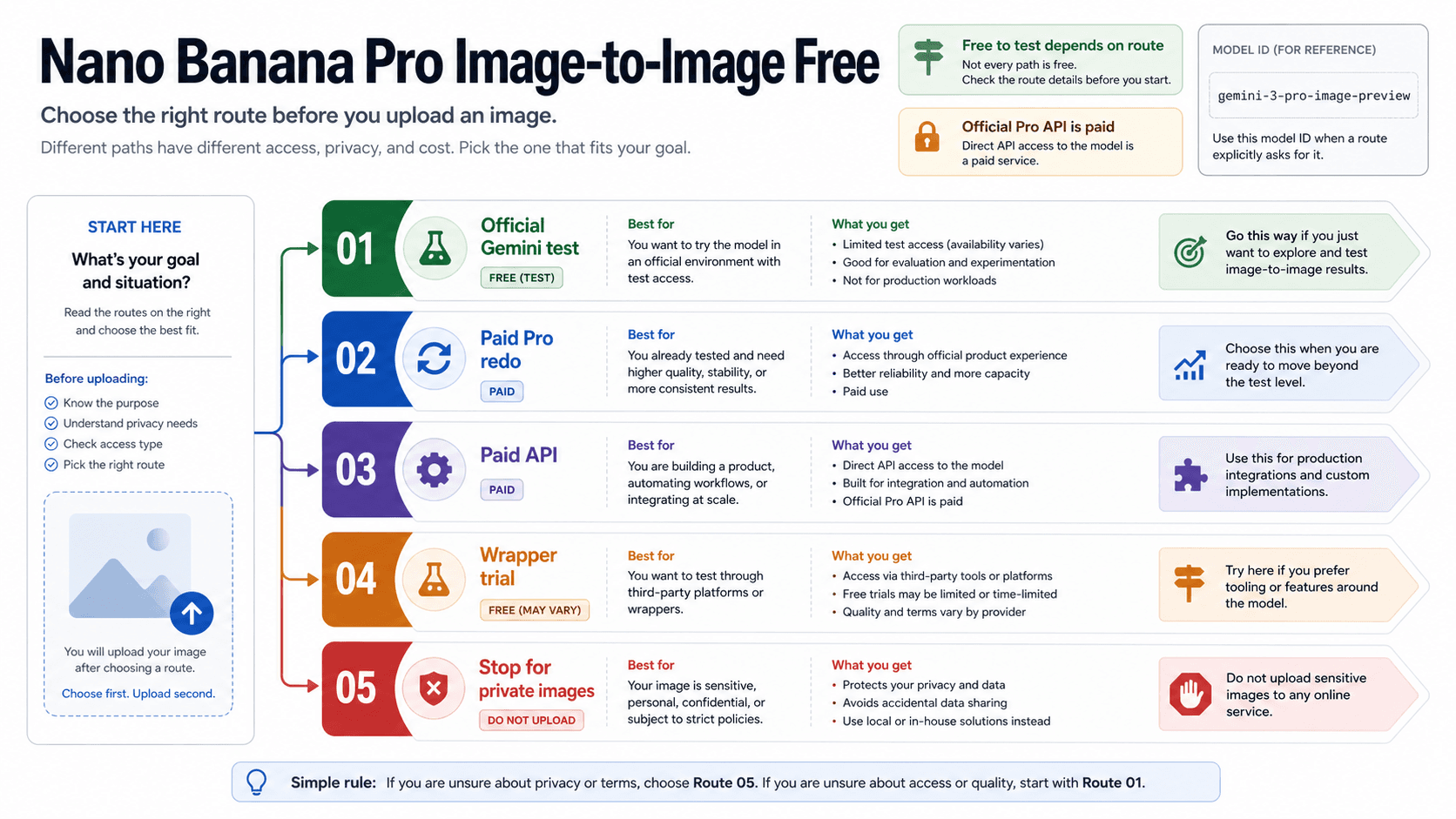

What To Verify Before Paying Or Uploading

Adult-oriented AI video routes attract volatile claims: free credits, unlimited generations, no filters, no bans, private storage, safe deletion, high resolution, commercial rights, and exact model names. Treat those as claims to verify, not as facts to repeat.

| Claim | Trustworthy proof | Red flag |
|---|---|---|
| Price, plan, credits, or limits | Current official checkout, billing page, or account screen | Static screenshots, affiliate tables, copied forum lists |
| Adult-content permission | Current terms, content policy, and visible account controls | Vague "uncensored" marketing without rules |
| Privacy and storage | Plain retention, deletion, access-control, and training-use terms | No deletion path, no retention statement, or forced public gallery |
| Real-person input rules | Explicit consent, likeness, and identity policy | "Use any photo" language |
| Takedown or reporting path | Documented support, abuse, or rights process | Seller disappears after delivery |
| Commercial use | Current license terms and rights chain | "Commercial" claim without IP, performer, or platform context |

For a hosted adult-specialized platform, privacy and deletion matter as much as generation quality. The most sensitive asset may be the uploaded source image, not the generated clip. For a local model, the tool may avoid remote upload, but the user still owns the responsibility for inputs, outputs, storage, and sharing. For a marketplace seller, keep the scope narrow and avoid sending sensitive personal images unless rights, consent, and deletion are documented.
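One way to keep volatile claims from being repeated as facts is to record where each claim came from and when it was last checked. The sketch below is illustrative only: the `Claim` record, the `FIRST_PARTY_SOURCES` labels, and the 30-day freshness window are assumptions made for the example, not thresholds taken from any platform or law.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

# Assumed labels for proof that comes from the service's owner.
FIRST_PARTY_SOURCES = {
    "official checkout",
    "account screen",
    "terms of service",
    "content policy",
    "privacy policy",
}


@dataclass
class Claim:
    """A claim about a tool, with the source and date of the last check."""
    text: str
    source: str                        # e.g. "terms of service", "forum post"
    checked_on: Optional[date] = None  # None means never verified


def is_verified(claim: Claim, today: date, max_age_days: int = 30) -> bool:
    """True only for first-party proof checked within the freshness window."""
    if claim.source not in FIRST_PARTY_SOURCES:
        return False
    if claim.checked_on is None:
        return False
    return today - claim.checked_on <= timedelta(days=max_age_days)


def unverified(claims: list[Claim], today: date) -> list[str]:
    """Return the text of every claim that still needs first-party proof."""
    return [c.text for c in claims if not is_verified(c, today)]
```

A claim sourced from a forum list or an affiliate table stays in the `unverified` output no matter how recently it was seen, which matches the red-flag column above.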

The Risk Continues After Generation

Generation is only the first step. Storage, sharing, platform labels, takedown duties, and reporting paths can create more risk than the generation itself.

If a generated adult clip is lawful and consent-cleared, store it with access controls, avoid unnecessary backups, and do not upload it to tools that reuse or expose private media. Sharing should be limited to intended adult audiences, and links should not be treated as private just because they are unlisted.

Posting platforms apply their own rules. X allows some consensual adult content when it is labeled and kept out of restricted placements, but it prohibits non-consensual intimate media and child-safety violations. YouTube's Nudity and Sexual Content Policy bars explicit content meant for sexual gratification, non-consensual imagery, and sexual content involving minors. Google Search offers a removal path for fake sexual or nude content distributed without consent.

Legal duties vary by location, but some boundaries are not subtle. The FTC's TAKE IT DOWN Act page explains that the U.S. law criminalizes publication of non-consensual intimate visual depictions and requires covered platforms to remove qualifying depictions after notice. NCMEC's generative AI resource treats AI-generated child sexual exploitation as reportable child-safety harm. When harm is already present, the right workflow is removal, reporting, and evidence preservation, not tool comparison.

Safer Route Choices By Use Case

Different reader goals call for different decisions.

| Use case | Safer route | Stop conditions |
|---|---|---|
| Fictional adult creative work | Verify an adult-specialized or policy-compatible route, keep characters fictional, and check storage and license terms | Real-person likeness, unclear IP, missing age controls, no deletion path |
| Personal consensual project | Use only if every depicted adult gives clear, specific consent and storage/sharing is controlled | Pressure, ambiguity, revoked consent, private image reuse, public upload |
| Developer experiment | Use synthetic or licensed test assets and document moderation, logging, deletion, and abuse handling | User-uploaded real-person sexualization or bypass-oriented product design |
| Publisher or platform | Separate generation permission from posting, labeling, age gating, reporting, and takedown obligations | No moderation plan, no rights process, or no response path for NCII |
| Victim or removal case | Preserve evidence, use platform reporting, search removal, and legal/support channels | Reposting, editing, or testing the harmful media in more tools |

The best route is sometimes no route. If the project cannot document consent, cannot protect private media, cannot comply with platform rules, or cannot respond to takedown requests, generation should not start.

FAQ

Is adult AI video legal?

It depends on location, content, consent, identity, age, distribution, and platform rules. Consensual fictional adult content and non-consensual real-person sexualized content are not the same legal or platform problem. Minors, age-ambiguous subjects, child sexual exploitation, NCII, and sexual violence are stop lines, not gray areas to test.

Can mainstream AI video generators make explicit adult videos?

Mainstream generators usually restrict explicit sexual content and unsafe real-person uploads. OpenAI, Google Veo, Runway, HeyGen, and Kling materials all point toward moderation or policy limits around sexual content, minors, consent, or likeness. Do not treat them as dependable adult-content routes.

Can Grok make NSFW videos?

Grok/xAI is conditional and feature-dependent. Consumer Grok controls, xAI API video documentation, and X adult posting rules are different contracts. Verify the current official surface for your account and keep xAI/X policy stop lines in force.

Are free NSFW AI video tools safe?

Free is not a safety signal. A free tool can still store uploads, reuse prompts, lack deletion controls, expose private media, or make unsupported adult-content claims. Verify terms, privacy, age controls, content policy, and takedown paths before uploading anything sensitive.

Can I use a real person's photo for image-to-video?

Only with clear, specific, informed adult-use consent and rights. Public availability of an image is not permission. Do not use images of public figures, creators, coworkers, classmates, partners, ex-partners, private individuals, minors, or age-ambiguous people for sexualized transformation without a clear lawful basis.

Are open or local models safer?

They can reduce remote-upload risk, but they also remove some platform guardrails and shift responsibility to the user. Local control does not make illegal, non-consensual, minor-related, or harassing content acceptable. Storage, distribution, and evidence trails still matter.

Can I post the output on X, YouTube, or another platform?

Generation permission and posting permission are separate. X has labeling and placement rules for consensual adult material and bans NCII and child-safety violations. YouTube restricts explicit sexual content and bans non-consensual imagery and sexual content involving minors. Other platforms add their own rules.

What should I ignore in tool lists?

Be skeptical of claims such as unlimited, no filter, no ban, free forever, private by default, deletion guaranteed, current Grok access for all accounts, or exact credit limits without owner proof. Those claims change quickly and are often not backed by first-party terms.