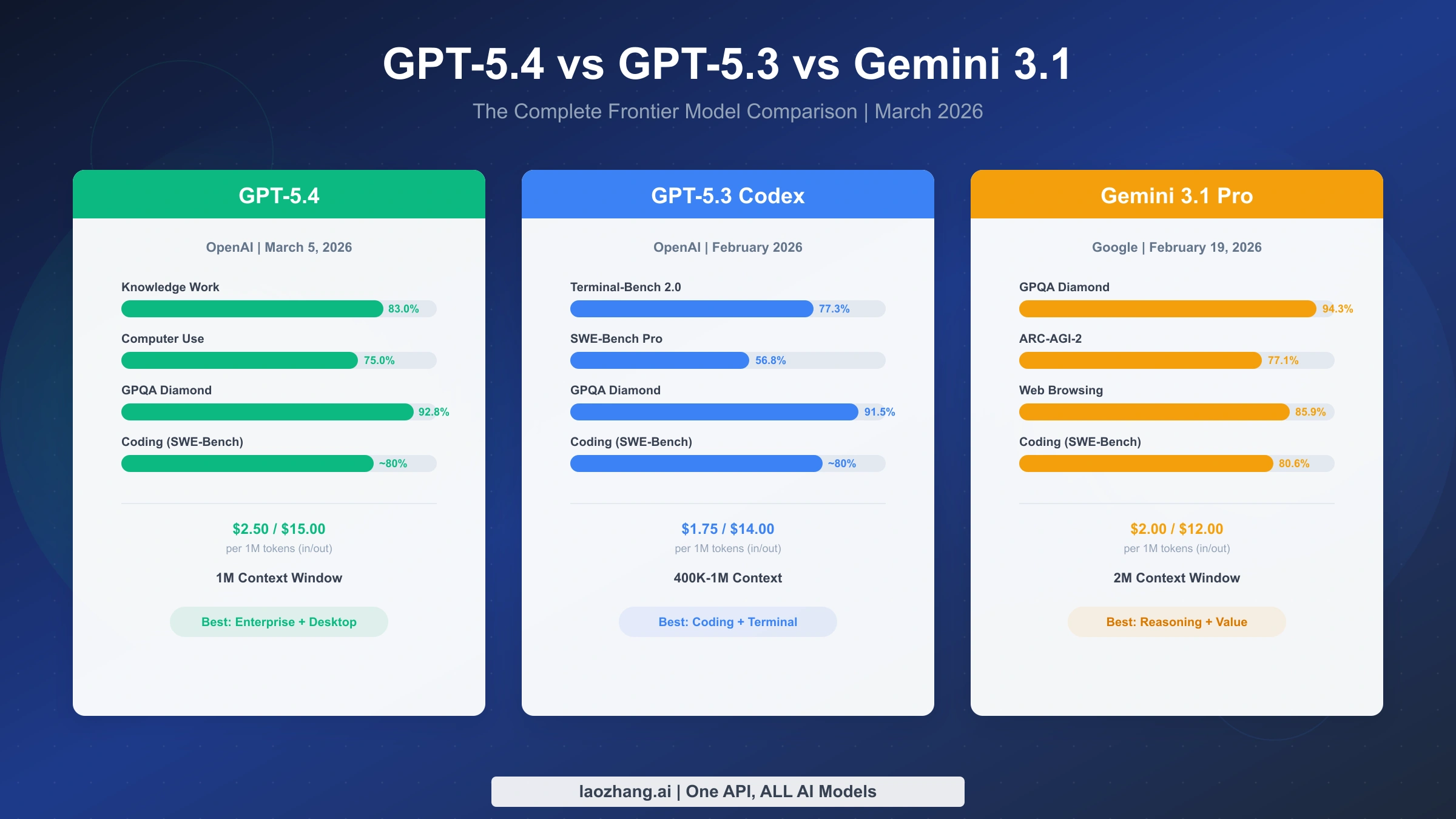

GPT-5.4 launched on March 5, 2026, becoming the first AI model to surpass human experts on knowledge work benchmarks. Combined with GPT-5.3 Codex's terminal-first coding dominance and Gemini 3.1 Pro's 2-million-token context window, developers now face a genuine three-way decision. This guide compares every benchmark score, pricing tier, and API feature to help you choose the right model for your specific workload.

The March 2026 AI Landscape Has Changed Everything

The AI model landscape shifted dramatically in early 2026. Within just four weeks, three frontier models launched that collectively redefined what large language models can do. Understanding this context is essential before diving into benchmark comparisons, because the competitive dynamics between OpenAI and Google have never been tighter.

OpenAI released GPT-5.3 Codex in early February 2026 as a coding-specialized model built for terminal and CLI workflows. It introduced a new paradigm where the model operates directly within developer environments rather than through chat interfaces. GPT-5.3 Codex scored 77.3% on Terminal-Bench 2.0, a benchmark that measures a model's ability to navigate real command-line tasks across operating systems, package managers, and build systems. This wasn't just an incremental improvement over previous models; it represented a fundamental shift toward models that understand developer toolchains at a native level.

Google responded on February 19, 2026 with Gemini 3.1 Pro, pushing the boundaries of reasoning and context processing. With a 2-million-token context window and a 94.3% score on GPQA Diamond (a graduate-level science reasoning benchmark), Gemini 3.1 Pro established itself as the go-to model for research-heavy workloads. Google also released Gemini 3.1 Flash-Lite at just $0.25 per million input tokens, creating a budget tier that dramatically undercuts competitors for high-volume applications.

Then on March 5, 2026, OpenAI unveiled GPT-5.4, which became the first model to exceed human expert performance on the GDPval knowledge work benchmark with an 83.0% score. GPT-5.4 also introduced native computer use capabilities, scoring 75.0% on OSWorld, meaning it can operate desktop applications, navigate web browsers, and coordinate multi-step workflows across software tools. For enterprises looking to automate complex digital workflows, this capability represents a category-defining moment. If you're coming from the previous generation comparison, the performance gap between generations has widened considerably across every benchmark category.

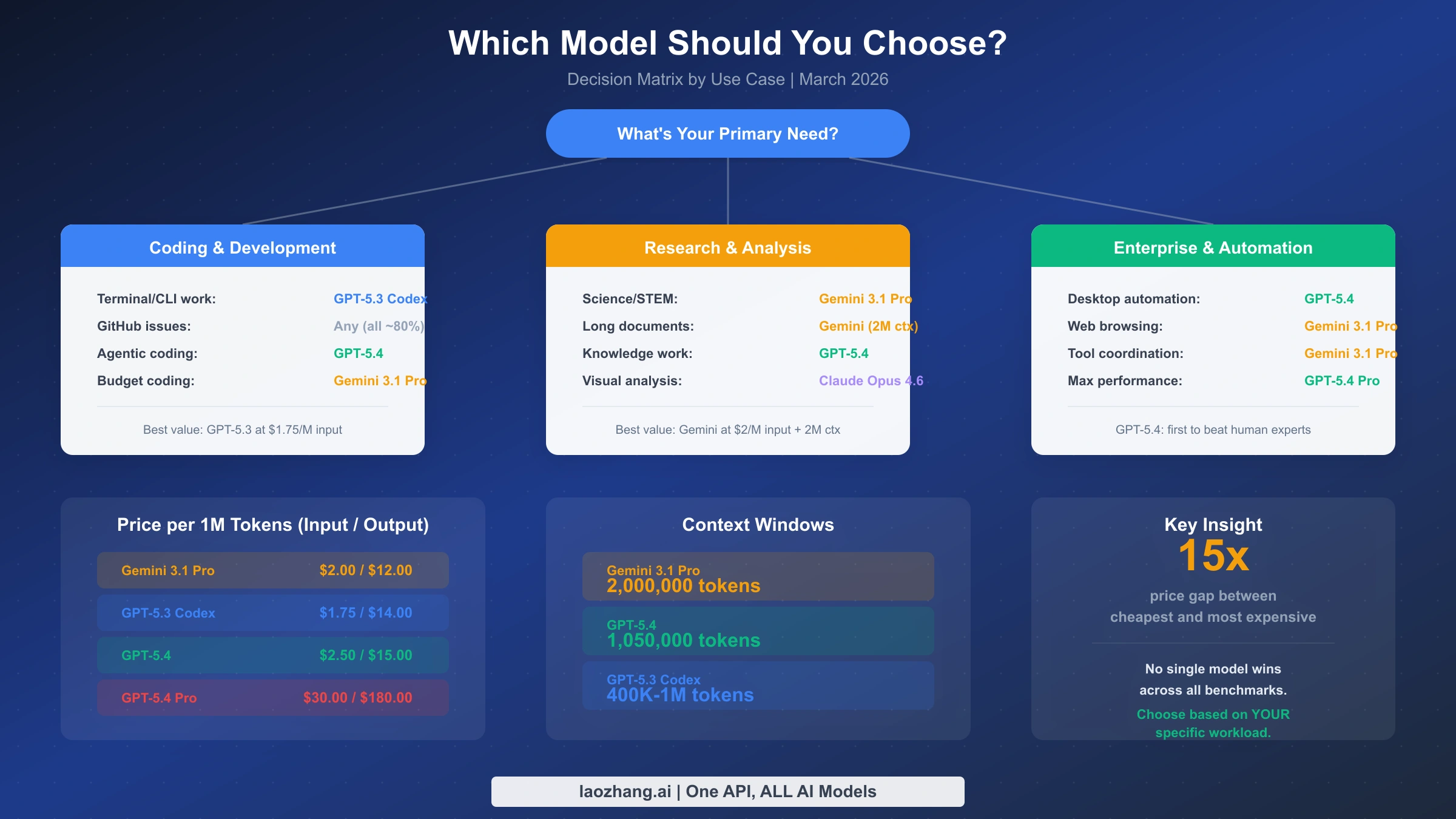

The key insight from this rapid succession of launches is that no single model dominates across all dimensions. GPT-5.4 leads knowledge work and desktop automation. Gemini 3.1 Pro excels at scientific reasoning and offers the largest context window at the lowest cost per token. GPT-5.3 Codex remains the strongest choice for terminal-based development workflows. Choosing the right model in March 2026 requires understanding exactly what you need it to do.

Benchmark Showdown - Every Score That Matters

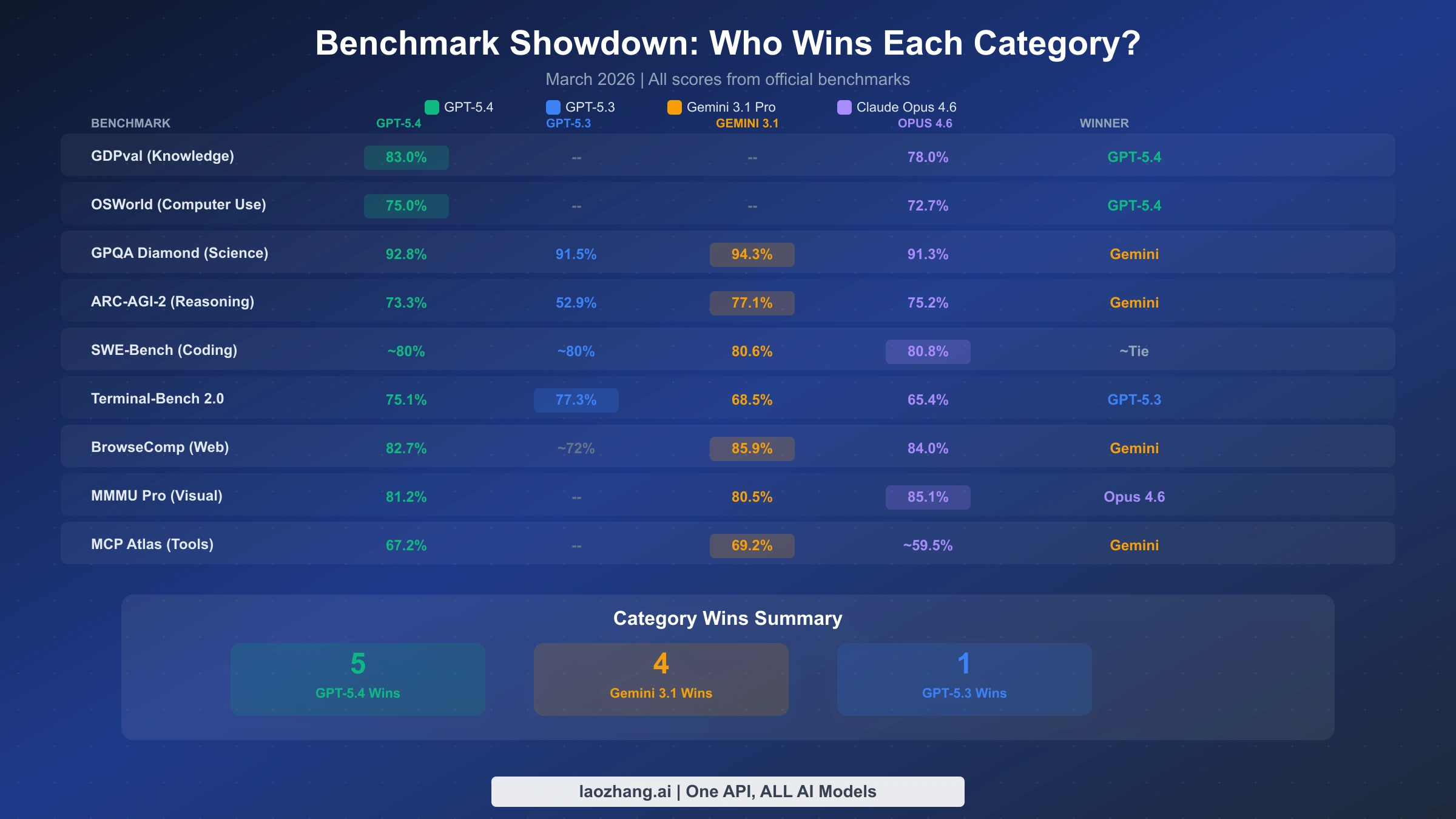

Raw benchmark scores tell part of the story, but understanding what each benchmark actually measures and why certain models excel reveals the practical implications for your workloads. The table below presents the most comprehensive comparison available, covering 11 benchmarks across reasoning, coding, computer use, and agentic capabilities.

| Benchmark | What It Measures | GPT-5.4 | GPT-5.4 Pro | GPT-5.3 Codex | Gemini 3.1 Pro |

|---|---|---|---|---|---|

| GPQA Diamond | Graduate-level science | 92.8% | 94.4% | 91.5% | 94.3% |

| ARC-AGI-2 | Novel reasoning | 73.3% | 83.3% | 52.9% | 77.1% |

| GDPval | Knowledge work | 83.0% | - | - | - |

| OSWorld | Computer use | 75.0% | - | - | - |

| SWE-Bench Verified | Code generation | ~80% | - | ~80% | 80.6% |

| SWE-Bench Pro | Advanced coding | 57.7% | - | 56.8% | 54.2% |

| Terminal-Bench 2.0 | CLI/terminal tasks | 75.1% | - | 77.3% | 68.5% |

| BrowseComp | Web browsing | 82.7% | 89.3% | ~72% | 85.9% |

| MMMU Pro | Multimodal understanding | 81.2% | - | - | 80.5% |

| MCP Atlas | Tool coordination | 67.2% | - | - | 69.2% |

| Toolathlon | Multi-tool agentic | 54.6% | - | 51.9% | - |

Reasoning benchmarks reveal a fascinating split. Gemini 3.1 Pro leads GPQA Diamond at 94.3%, narrowly beating GPT-5.4's 92.8%, which means Google's model handles graduate-level scientific questions with slightly higher accuracy. However, GPT-5.4 Pro closes this gap at 94.4%, suggesting that OpenAI's premium tier matches Google's reasoning capabilities. On ARC-AGI-2, which tests truly novel reasoning patterns that can't be solved through memorization, GPT-5.4 Pro dominates at 83.3% compared to Gemini's 77.1%, indicating stronger generalization ability when facing unfamiliar problem types.

Coding benchmarks show near-parity with important nuances. On SWE-Bench Verified, all three models cluster around 80%, meaning they can resolve roughly the same proportion of real GitHub issues. The differentiation appears in specialized tasks: GPT-5.3 Codex leads Terminal-Bench 2.0 at 77.3% versus GPT-5.4's 75.1%, confirming that Codex retains its edge for command-line workflows even after GPT-5.4's release. On SWE-Bench Pro, which tests harder multi-file changes, GPT-5.4 edges ahead at 57.7% versus GPT-5.3's 56.8% and Gemini's 54.2%.

Computer use and agentic capabilities represent the most significant differentiator. GPT-5.4's 75.0% on OSWorld and 83.0% on GDPval are unmatched because neither GPT-5.3 nor Gemini 3.1 Pro offer native computer use capabilities. If your workflow involves automating desktop applications, navigating complex web interfaces, or coordinating multiple software tools, GPT-5.4 is currently the only viable option among these three models. Meanwhile, Gemini 3.1 Pro leads MCP Atlas (tool coordination) at 69.2% versus GPT-5.4's 67.2%, suggesting Google's model handles structured tool-calling pipelines slightly better.

The bottom line from benchmarks: GPT-5.4 wins on 5 categories (knowledge work, computer use, advanced coding, multimodal, multi-tool), Gemini 3.1 Pro wins on 4 (science reasoning, standard coding, web browsing, tool coordination), and GPT-5.3 Codex wins on 1 (terminal tasks). No single model dominates.

Pricing Deep Dive - The True Cost of Each Model

Understanding pricing for frontier models in 2026 goes far beyond comparing input and output token rates. Long-context surcharges, cached token discounts, and batch processing tiers create a complex cost landscape where the cheapest model on paper might not be the cheapest model for your actual workload.

Base and Long-Context Pricing

The following table shows the complete pricing picture including both base rates and long-context surcharges that many comparison articles miss entirely.

| Model | Input / 1M Tokens | Output / 1M Tokens | Long-Context Input | Long-Context Output |

|---|---|---|---|---|

| Gemini 3.1 Flash-Lite | $0.25 | $1.50 | N/A | N/A |

| GPT-5.3 Instant | ~$0.30 | ~$1.20 | N/A | N/A |

| GPT-5.3 Codex | $1.75 | $14.00 | N/A | N/A |

| Gemini 3.1 Pro | $2.00 | $12.00 | $4.00 (>200K) | $18.00 (>200K) |

| GPT-5.4 | $2.50 | $15.00 | $5.00 (>272K) | $22.50 (>272K) |

| GPT-5.4 Pro | $30.00 | $180.00 | N/A | N/A |

The spread between the cheapest option (Gemini 3.1 Flash-Lite at $0.25/M input) and the most expensive (GPT-5.4 Pro at $30.00/M input) is a staggering 120x. This means running the same prompt through GPT-5.4 Pro costs 120 times more than running it through Flash-Lite. For teams processing millions of tokens daily, this difference translates to thousands of dollars per month. The long-context surcharges deserve particular attention because they catch many teams off guard. GPT-5.4 doubles its input price from $2.50 to $5.00 per million tokens once your prompt exceeds 272,000 tokens, with output jumping from $15.00 to $22.50. Gemini 3.1 Pro similarly increases from $2.00 to $4.00 per million input tokens beyond 200,000 tokens, with output rising from $12.00 to $18.00. If you regularly work with large codebases, lengthy documents, or extensive conversation histories, these surcharges can double your effective API costs and fundamentally change which model offers the best value for your specific usage pattern.

Real-World Cost Scenarios

To make these numbers practical, consider three common usage patterns that represent different scales of operation. A solo developer running 100 coding queries per day, each averaging 2,000 input tokens and 1,000 output tokens, would spend approximately $0.52 per month on GPT-5.3 Codex, $0.42 on Gemini 3.1 Pro, or $0.53 on GPT-5.4. At this scale, the cost difference is negligible and model capability should drive your decision entirely, not price. You could run all three models simultaneously for less than $2 per month, which is exactly why individual developers should focus on finding the model that produces the best output for their specific tasks rather than optimizing for cost.

For a mid-size team processing 10 million input tokens and 2 million output tokens per day, monthly costs diverge meaningfully: GPT-5.3 Codex would run about $1,365/month, Gemini 3.1 Pro about $1,320/month, and GPT-5.4 about $1,650/month. At this volume, Gemini's lower output pricing starts creating real savings, especially for applications that generate long responses. The $330/month gap between Gemini and GPT-5.4 might seem modest, but it adds up to nearly $4,000 annually, enough to fund a significant portion of a team's cloud infrastructure budget.

Enterprise-scale operations processing 100 million tokens daily face the starkest choices. At this volume, the difference between Gemini 3.1 Pro ($40,800/month) and GPT-5.4 ($52,500/month) amounts to nearly $140,000 per year. Platforms like laozhang.ai that aggregate multiple model APIs can help optimize costs by routing different query types to the most cost-effective model, potentially saving 20-30% through intelligent model selection. The cost optimization becomes even more dramatic when you factor in batch and cached pricing: OpenAI offers cached input pricing at roughly 50% discount for repeated prompts, and batch API processing at 50% off for non-time-sensitive workloads, while Google provides similar context caching discounts for Gemini applications that repeatedly reference the same documents.

Hidden Cost Factors Most Comparisons Miss

Beyond the headline pricing numbers, several factors influence your real-world costs that rarely appear in comparison articles. Output token pricing is often the dominant cost component because frontier models tend to generate verbose responses, and output tokens cost 5-8x more than input tokens across all providers. A query that costs $0.005 in input tokens might cost $0.03-0.04 in output tokens, meaning your output-to-input ratio dramatically affects which model is cheapest for your application. Applications that need concise outputs (classification, extraction, yes/no decisions) will find costs relatively similar across models, while applications requiring long-form generation (reports, documentation, code) will see Gemini's $12.00/M output rate provide meaningful savings over GPT-5.4's $15.00/M.

Rate limits also impose hidden costs through forced architectural complexity. If your application hits rate limits frequently, you either need to implement queuing systems, purchase higher-tier access, or distribute traffic across multiple API keys. For applications that can tolerate non-real-time processing, batch APIs offer the single best cost optimization available, effectively halving your per-token costs on both platforms. Teams that haven't evaluated batch processing for their non-interactive workloads are leaving significant savings on the table.

GPT-5.3 vs GPT-5.4 - Is the Upgrade Worth It?

For teams already running GPT-5.3 Codex in production, the GPT-5.4 launch raises an immediate question: should you upgrade? The answer depends entirely on what capabilities you actually use and whether GPT-5.4's new features solve problems you currently face.

What GPT-5.4 Adds Over GPT-5.3

The most significant addition is native computer use capability. GPT-5.4 can operate desktop applications, navigate web browsers, fill forms, and coordinate multi-step workflows across software tools, scoring 75.0% on OSWorld. GPT-5.3 has no equivalent capability, meaning if your use case involves any form of desktop automation or web interaction, GPT-5.4 is a necessary upgrade rather than an optional one.

GPT-5.4 also introduces a substantially larger output limit. With 128K max output tokens compared to GPT-5.3's standard output limit, GPT-5.4 can generate entire codebases, comprehensive reports, or lengthy documents in a single API call. For applications that require long-form generation, such as documentation tools, code generators, or report builders, this expanded output capacity eliminates the need for multi-turn generation chains.

Knowledge work performance improved meaningfully. GPT-5.4 scores 83.0% on GDPval, the first model to surpass human expert performance on this benchmark. For enterprise applications involving research synthesis, complex analysis, or multi-source reasoning, this represents a qualitative improvement in output reliability.

Where GPT-5.3 Still Wins

Terminal and CLI workflows remain GPT-5.3 Codex's strongest domain. Its 77.3% Terminal-Bench score versus GPT-5.4's 75.1% might seem like a small gap, but in practice, GPT-5.3 Codex was purpose-built for terminal environments. It understands shell semantics, package manager conventions, and build system configurations at a deeper level because its training and fine-tuning focused specifically on these workflows. If your primary use case is terminal-first development, there's no compelling reason to switch to GPT-5.4.

Pricing also favors staying with GPT-5.3. At $1.75 per million input tokens versus $2.50, GPT-5.3 Codex costs 30% less per input token. For high-volume coding applications where you're processing thousands of prompts daily, this 30% saving compounds into significant cost reduction over time. Given that SWE-Bench Verified scores are essentially equivalent at ~80% for both models, you're getting comparable code generation quality at a lower price.

The Upgrade Decision Framework

Upgrade to GPT-5.4 if: you need computer use or desktop automation capabilities, your application generates very long outputs (>32K tokens), you need the strongest possible reasoning for knowledge work, or you're building agentic workflows that coordinate multiple tools and applications.

Stay with GPT-5.3 Codex if: your primary workload is terminal-based development, cost efficiency is a critical constraint, you don't need computer use capabilities, or your current results meet quality requirements and you want to avoid migration risk.

Consider running both: many teams maintain GPT-5.3 Codex for coding queries and GPT-5.4 for complex reasoning and agentic tasks. Using an API aggregation service that supports both models can simplify this multi-model strategy.

API Features and Developer Experience Compared

Beyond raw performance, the practical experience of integrating these models into applications differs in important ways. Context windows, rate limits, output formats, and special features all influence which model fits your architecture.

Context Window and Output Limits

| Feature | GPT-5.4 | GPT-5.3 Codex | Gemini 3.1 Pro |

|---|---|---|---|

| Max Context | 1,050,000 tokens | 400K-1M tokens | 2,000,000 tokens |

| Max Output | 128,000 tokens | Standard | 64,000 tokens |

| Long-Context Threshold | 272K tokens | - | 200K tokens |

| Knowledge Cutoff | Aug 31, 2025 | - | - |

Gemini 3.1 Pro's 2-million-token context window is nearly double GPT-5.4's capacity and represents its single strongest technical advantage. For applications that need to process entire codebases, lengthy legal documents, or extensive conversation histories, this extra context capacity isn't just a nice-to-have; it fundamentally changes what's possible in a single API call. A full-stack application's codebase that might require chunking and multiple calls with GPT-5.4 could fit entirely within Gemini's context window, enabling more coherent analysis and generation. If you work with Gemini API rate limits, understanding the throughput constraints alongside these context windows is essential for production planning.

GPT-5.4's 128K output limit is the largest among these models, allowing it to generate entire files, comprehensive documentation, or detailed multi-section reports without requiring continuation prompts. This is particularly valuable for code generation tasks where breaking output across multiple API calls can introduce inconsistencies or lost context.

Special Capabilities

GPT-5.4 Computer Use represents a unique capability that neither competitor currently matches. Through the API, GPT-5.4 can receive screenshots, identify UI elements, generate mouse clicks and keyboard inputs, and navigate complex multi-step workflows across desktop and web applications. This enables a new category of applications including automated testing, form filling, data extraction from legacy systems, and multi-application workflow automation.

Gemini 3.1 Deep Think provides native chain-of-thought reasoning that can be enabled through the API. When activated, the model spends additional compute on complex problems, trading latency for accuracy. This is particularly effective for mathematical proofs, scientific reasoning, and multi-step logical deductions where the model benefits from "thinking through" the problem step by step.

GPT-5.3 Codex Terminal Integration offers optimized APIs for terminal-based workflows, including shell command generation, build system debugging, and package management. The model understands terminal output formats and can chain commands together intelligently, making it ideal for CLI tool development and developer productivity applications.

API Compatibility and Migration

All three models support standard chat completion APIs with system/user/assistant message formats, making basic migration between providers straightforward for standard use cases. If your application uses only text-in, text-out interactions with system prompts and conversation history, switching between GPT-5.4, GPT-5.3 Codex, and Gemini 3.1 Pro requires minimal code changes beyond updating the API endpoint and model identifier. This compatibility means that teams can experiment with all three models using their existing application architecture before committing to a primary provider.

However, specialized features like computer use (GPT-5.4), extended thinking (Gemini 3.1), and terminal optimization (GPT-5.3) use provider-specific API parameters that don't directly translate between platforms. GPT-5.4's computer use API requires sending screenshots as base64-encoded images and parsing structured action responses, which has no equivalent in the Gemini API. Similarly, Gemini's Deep Think mode uses Google-specific configuration parameters to control reasoning depth and token budget. Teams considering multi-model strategies should build abstraction layers that handle provider-specific features while maintaining a common interface for standard chat operations. This architectural investment pays off quickly because it allows you to route different query types to the optimal model without requiring application-level changes each time you want to test a new model or adjust routing logic.

Which Model for Which Task - A Decision Matrix

Rather than declaring a single "best" model, the most useful approach is matching specific workloads to the model that handles them best. The decision matrix below covers the most common use cases, organized by workflow type, and provides a clear recommendation for each scenario based on the benchmark data and pricing analysis covered in previous sections.

Coding and Development represents the most competitive category, with all three models delivering strong results but excelling in different sub-domains. For terminal and CLI development work, GPT-5.3 Codex remains the strongest choice because its 77.3% Terminal-Bench score reflects purpose-built optimization for shell environments, and at $1.75/M input tokens, it offers the best value for high-volume coding workloads. For agentic coding tasks that involve multi-file changes, project-wide refactoring, or code generation with test writing, GPT-5.4 edges ahead with its 57.7% SWE-Bench Pro score and 128K output limit that lets it generate complete implementations in a single response. For budget coding where cost matters more than peak performance, Gemini 3.1 Pro at $2.00/M input delivers comparable SWE-Bench results (80.6%) at a competitive price, while Gemini 3.1 Flash-Lite at $0.25/M input provides adequate quality for simpler code tasks at a fraction of the cost. The practical implication is that most development teams should maintain at least two model integrations: GPT-5.3 Codex for daily terminal work and either GPT-5.4 or Gemini for more complex, multi-file coding tasks.

Research and Analysis is where Gemini 3.1 Pro establishes its clearest advantage through the combination of reasoning capability and context capacity. For scientific and STEM research, Gemini's 94.3% GPQA Diamond score makes it the unambiguous leader, particularly when combined with its 2-million-token context window that can ingest entire research papers, datasets, or comprehensive literature reviews in a single prompt. For long document analysis involving legal contracts, regulatory filings, or extensive technical documentation, Gemini's context advantage is decisive because you can process documents up to 2 million tokens in a single call, whereas GPT-5.4 maxes out at 1.05 million. This means a 500-page legal document that requires chunking and multiple API calls with GPT-5.4 could be analyzed holistically with Gemini, producing more coherent and contextually aware analysis. For general knowledge work and complex multi-source analysis, GPT-5.4's 83.0% GDPval score demonstrates superior reasoning capability, especially on tasks that require synthesizing information across multiple domains where deep contextual understanding outweighs raw context length.

Enterprise and Automation is the category where GPT-5.4 has the most clear-cut advantage thanks to its unique computer use capabilities. For desktop automation, GPT-5.4 is the only option among these three models with native capability, scoring 75.0% on OSWorld. If your enterprise needs automated form filling, cross-application workflows, or legacy system interaction through graphical interfaces, GPT-5.4 is a requirement rather than a preference because no other model in this comparison can operate desktop applications through screenshots and UI interaction. For web browsing and data extraction, Gemini 3.1 Pro scores 85.9% on BrowseComp, outperforming GPT-5.4's 82.7%, making it the better choice for web-scale information gathering tasks. For tool coordination in multi-step agent pipelines using structured function calling, Gemini leads at 69.2% on MCP Atlas versus GPT-5.4's 67.2%, suggesting Google's model handles complex tool orchestration slightly better. For maximum performance regardless of cost, GPT-5.4 Pro at $30/M input delivers the highest scores on reasoning (94.4% GPQA) and novel problem-solving (83.3% ARC-AGI-2), making it the premium choice for mission-critical applications where accuracy justifies the 12x price premium over standard GPT-5.4.

Budget-Conscious Applications deserve special attention because the 120x price range between models means that intelligent routing can dramatically reduce costs without sacrificing quality. Teams running high-volume, cost-sensitive workloads should implement a tiered approach: route simple classification, extraction, and formatting queries to Gemini 3.1 Flash-Lite ($0.25/M), standard conversational and moderate-complexity tasks to GPT-5.3 Instant (~$0.30/M), and reserve complex reasoning for the most appropriate frontier model based on the task type. This strategy can reduce overall API costs by 60-80% compared to running everything through a single frontier model, because the vast majority of production queries don't actually require frontier-level intelligence. API aggregation platforms like laozhang.ai simplify this multi-model routing by providing a unified API endpoint that automatically selects the optimal model based on query complexity and budget constraints, eliminating the need to build and maintain custom routing logic.

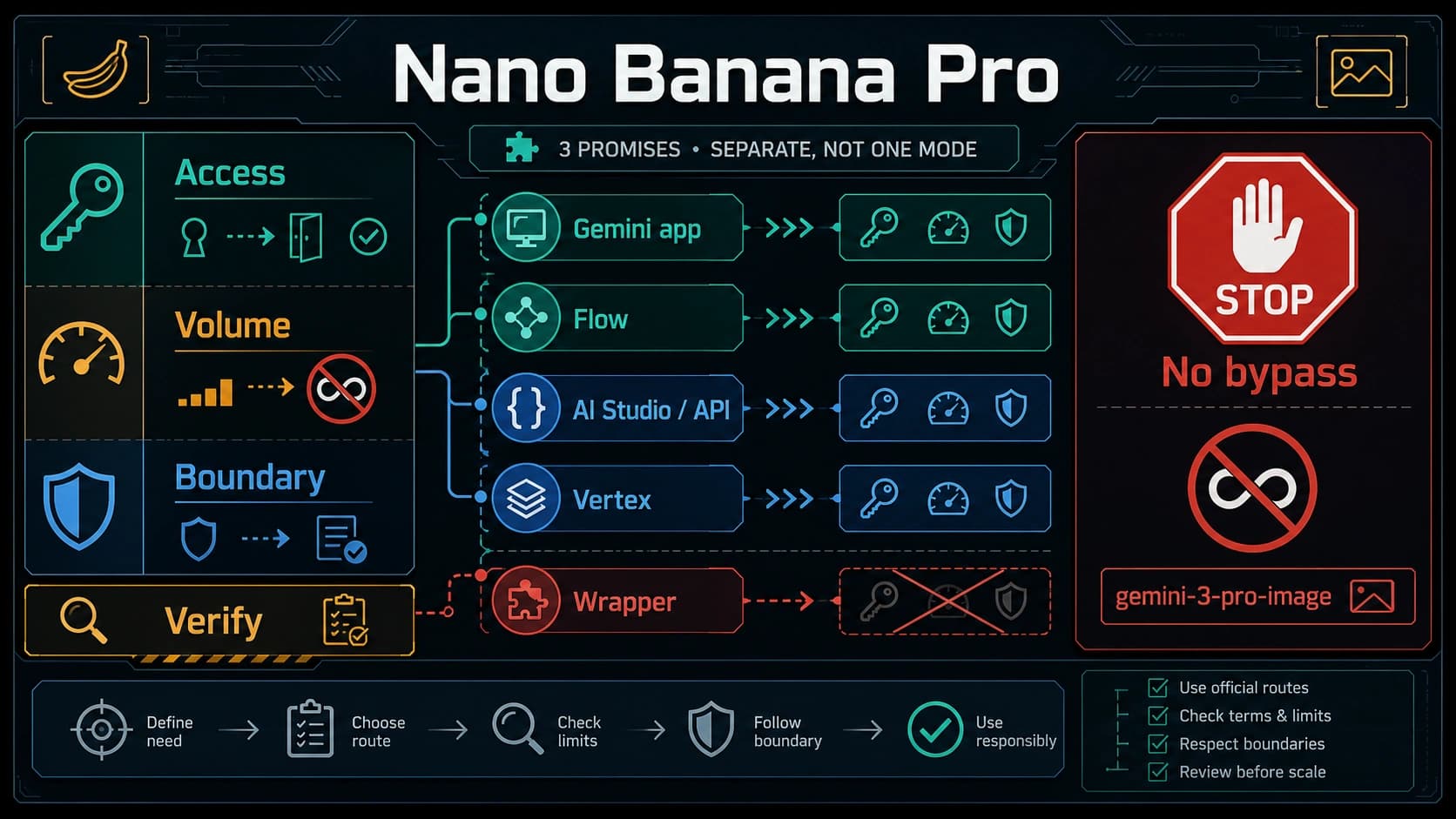

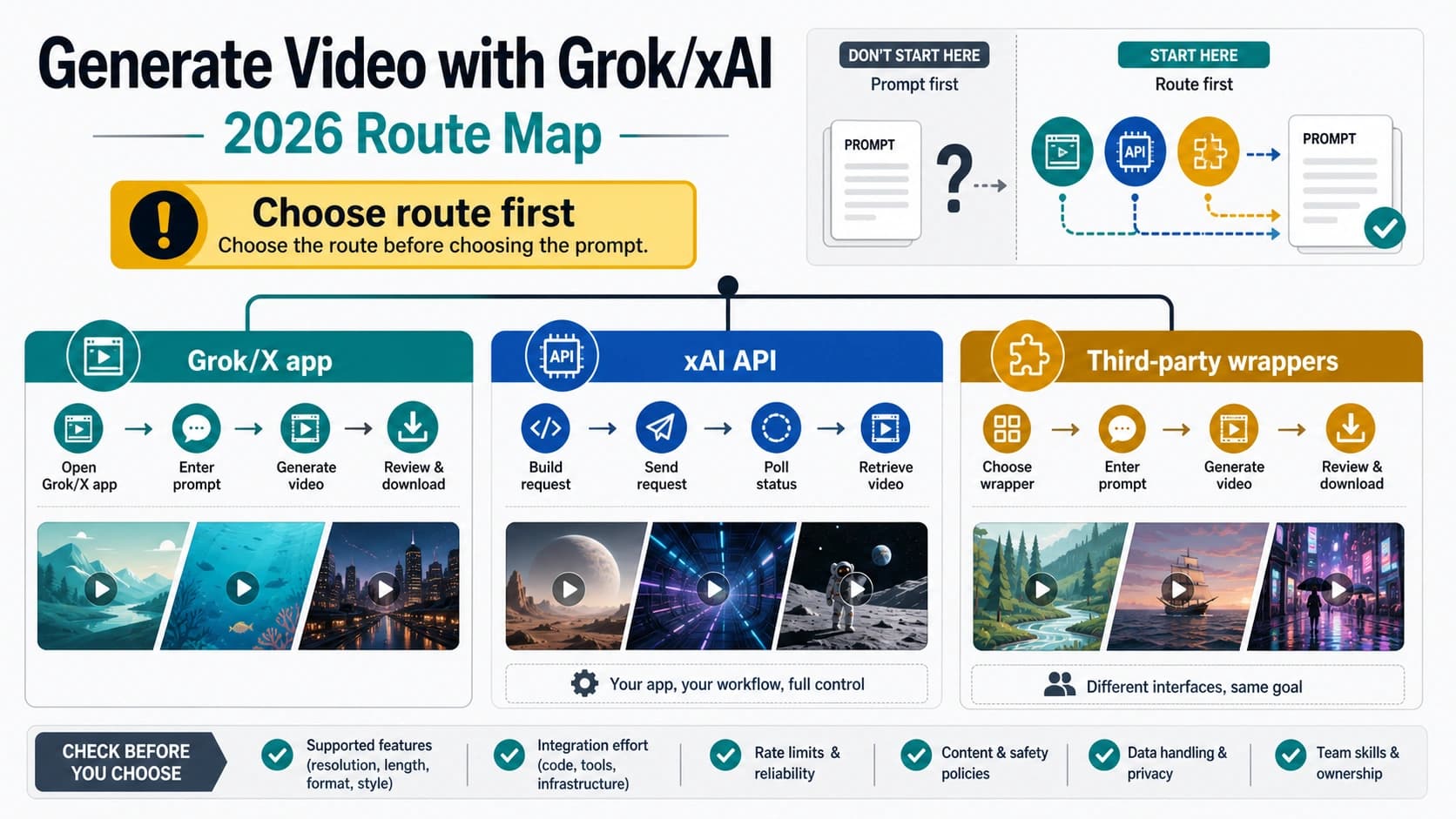

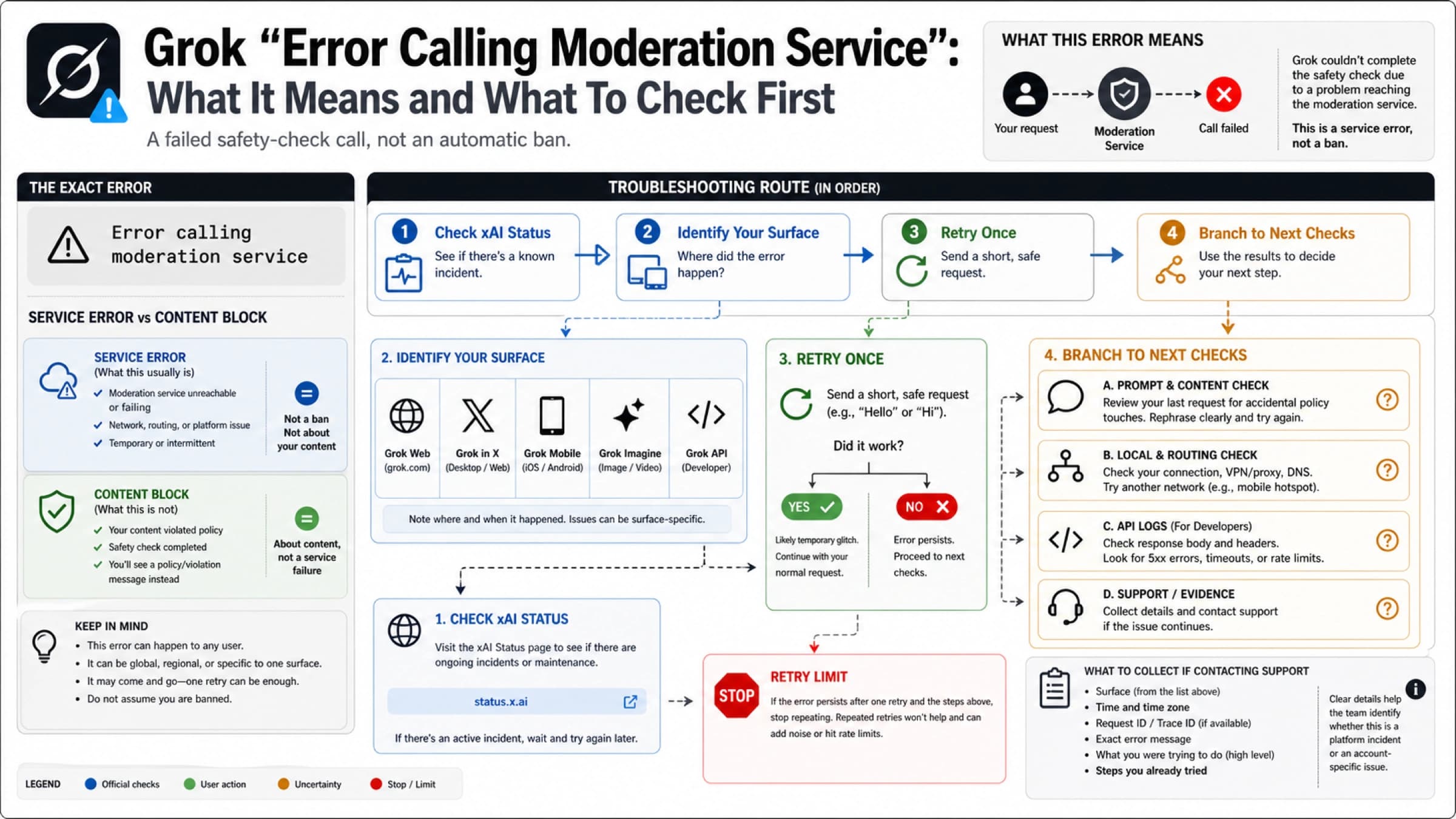

How to Access These Models Cost-Effectively

Accessing frontier AI models through official APIs is straightforward, but optimizing costs across multiple providers requires thoughtful architecture. Each provider offers different pricing tiers, discount programs, and access methods that can significantly impact your total spend. The good news is that both OpenAI and Google have made their latest models available through standard API endpoints with minimal friction, so the technical barrier to entry is lower than ever.

OpenAI's API platform provides direct access to both GPT-5.4 and GPT-5.3 Codex through the standard chat completions endpoint at platform.openai.com. The platform offers batch API processing at approximately 50% discount for workloads that can tolerate 24-hour turnaround times, making it a compelling option for non-time-sensitive tasks like content generation, data processing, or offline analysis. Cached input tokens receive a roughly 50% discount on repeated prompts, which benefits applications with consistent system prompts or few-shot examples. For teams evaluating GPT-5.4's computer use capabilities, OpenAI provides a dedicated computer use API that accepts screenshots as input and returns structured action commands, enabling desktop automation workflows through a clean programmatic interface.

Google's AI Studio and Vertex AI provide access to Gemini 3.1 Pro and Flash-Lite through Google's standard generative AI endpoints. Google's free tier still offers generous rate limits for development and testing, making it the most accessible option for individual developers exploring these models before committing to paid usage. For production workloads, Vertex AI offers committed use discounts and enterprise-grade SLAs that guarantee uptime and provide dedicated support channels. Gemini's context caching feature deserves special mention because it can dramatically reduce costs for applications that repeatedly reference the same base documents, instructions, or system prompts. Rather than paying full input pricing for the same 100K-token system prompt on every API call, context caching lets you pay once for the cached content and then reference it at a fraction of the cost on subsequent calls.

For teams that need access to models from both OpenAI and Google, along with Claude and other providers, API aggregation platforms provide a unified endpoint that simplifies multi-model architectures. Rather than managing separate API keys, billing accounts, and SDKs for each provider, aggregation services like laozhang.ai let you access all models through a single OpenAI-compatible API, often at competitive rates. This approach also enables intelligent model routing, where different types of queries are automatically directed to the most cost-effective model that meets quality requirements. For example, a customer support application might route simple FAQ responses through Flash-Lite, technical troubleshooting through GPT-5.3 Codex, and complex multi-step reasoning through GPT-5.4, all through the same API endpoint. This kind of multi-model routing strategy can reduce overall costs by 20-40% without sacrificing output quality for any individual query type.

The Bottom Line - Making Your Choice in 2026

The March 2026 AI model landscape offers genuine choice rather than a single dominant option. After analyzing 11 benchmarks, six pricing tiers, and dozens of API features across GPT-5.4, GPT-5.3 Codex, and Gemini 3.1 Pro, the clearest conclusion is that the right model depends entirely on your specific workload. Here is the distilled recommendation based on everything covered in this guide.

Choose GPT-5.4 if you need computer use capabilities, the strongest knowledge work performance, or the largest output window (128K tokens). GPT-5.4 is the most versatile model in this comparison: it leads 5 out of 11 benchmark categories, offers native desktop automation that no competitor matches, and delivers the strongest general-purpose reasoning at $2.50/M input. For enterprises building agentic workflows that coordinate multiple tools and applications, GPT-5.4 is the clear frontrunner and the first model that genuinely replaces certain categories of manual digital work.

Choose GPT-5.3 Codex if terminal-based development is your primary use case and cost efficiency matters. At $1.75/M input with 77.3% Terminal-Bench performance, it delivers the best value for developer tooling applications. GPT-5.3 Codex was purpose-built for the command line, and even after GPT-5.4's release, it retains its edge in the specific domain it was designed for. Teams whose entire workflow revolves around terminal environments, build systems, and package management will get more value from Codex's specialized optimization than from GPT-5.4's broader but shallower coverage of coding tasks.

Choose Gemini 3.1 Pro if you need maximum context (2M tokens), the strongest scientific reasoning (94.3% GPQA), or the best price-to-performance ratio for general-purpose tasks at $2.00/M input. Gemini's combination of the largest context window in the industry, the lowest output pricing among frontier models ($12.00/M), and the highest science reasoning scores makes it the optimal choice for research, document analysis, and any workflow that processes large volumes of text. Google's free tier and Flash-Lite at $0.25/M also make the Gemini ecosystem the most accessible entry point for developers exploring frontier AI capabilities.

Consider a multi-model strategy for the best results. The 120x price gap between the cheapest model (Flash-Lite at $0.25/M) and the most expensive (GPT-5.4 Pro at $30.00/M) means that routing queries intelligently across models can save substantial costs while maintaining quality for every individual query. The era of a single "best AI model" is definitively over. The winners in 2026 will be teams that learn to use the right model for each task, matching workload characteristics to model strengths rather than defaulting to a single provider for everything.

Frequently Asked Questions

Is GPT-5.4 better than Gemini 3.1 Pro?

GPT-5.4 leads in knowledge work (83.0% GDPval), computer use (75.0% OSWorld), and advanced coding (57.7% SWE-Bench Pro). Gemini 3.1 Pro leads in scientific reasoning (94.3% GPQA Diamond), web browsing (85.9% BrowseComp), and offers a larger context window (2M vs 1M tokens) at a lower price ($2.00 vs $2.50/M input). Neither is universally better; the right choice depends on whether you prioritize enterprise automation and knowledge work (GPT-5.4) or research and value (Gemini).

What's the difference between GPT-5.3 and GPT-5.4?

GPT-5.4 adds native computer use capabilities (75% OSWorld), 128K max output tokens, and stronger knowledge work performance (83% GDPval). GPT-5.3 Codex retains a slight edge in terminal-based coding (77.3% vs 75.1% Terminal-Bench) and costs 30% less per input token ($1.75 vs $2.50/M). GPT-5.4 is the better choice for enterprise automation and agentic workflows, while GPT-5.3 remains optimal for terminal-first development.

Which AI model is cheapest in 2026?

Gemini 3.1 Flash-Lite offers the lowest cost at $0.25/M input tokens and $1.50/M output tokens. Among frontier models, GPT-5.3 Codex ($1.75/M input) is the cheapest, followed by Gemini 3.1 Pro ($2.00/M input), then GPT-5.4 ($2.50/M input). GPT-5.4 Pro is the most expensive at $30.00/M input. Using free GPT API access tiers can help reduce costs during development and testing phases.

Can GPT-5.4 do computer use?

Yes. GPT-5.4 is the first model in this comparison with native computer use capability, scoring 75.0% on OSWorld. It can take screenshots, identify UI elements, generate mouse clicks and keyboard inputs, and navigate multi-step workflows across desktop and web applications. Neither GPT-5.3 nor Gemini 3.1 Pro currently offer equivalent computer use features through their APIs.

Should I use multiple AI models or stick with one?

For most production applications, a multi-model strategy delivers the best combination of quality and cost. Route terminal coding tasks to GPT-5.3 Codex, scientific reasoning to Gemini 3.1 Pro, enterprise automation to GPT-5.4, and simple queries to budget models like Flash-Lite. This approach can reduce costs by 60-80% compared to using a single frontier model for everything.